Explore the proof-of-stake innovation of Ethereum 2.0 and learn how to address the challenge of staking centralization through technical upgrades. Vitalik's in-depth analysis will give you insight into Ethereum's future technical potential and upgrade path. A cutting-edge interpretation of blockchain technology that you can't miss!

Original text: Possible futures of the Ethereum protocol, part 1: The Merge (vitalik.eth)

Author: vitalik.eth

Compiled by: 183Aaros, LXDAO

Cover: Photo by Shubham Dhage on Unsplash

Translator’s Preface

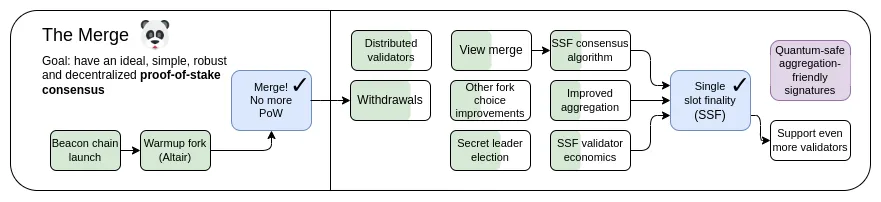

Compared with Ethereum 1.0 in the proof-of-work era, Ethereum 2.0 has introduced many intricate proof-of-stake mechanisms to maintain stronger stability and security, and has handed some goals over to Layer 2. More than two years have now passed since The Merge upgrade. Staking service providers led by Lido have gradually become the first choice for staking on Ethereum; independent staking seems to matter little in this environment, and two-tier staking designs still carry centralization risks. Fortunately, Tendermint-style consensus has brought single-slot finality (SSF) back to the table, and new options such as Orbit committees add more cards to play in future upgrades. Facing the centralization concerns around Ethereum staking, Vitalik combines old and new thinking from before and after The Merge in this article, lays out as many combinations of technical solutions as possible, and extends the list of ideas from his 2023 roadmap. He explains in detail the design potential of Ethereum's proof-of-stake technology after The Merge, as well as the upgrade paths that currently look feasible.

Overview

This article is about 6,500 words and has 4 parts. It is estimated to take 40 minutes to read the article.

1. Single-slot finality and democratization of staking

2. Single secret leader election

3. Faster transaction confirmation

4. Other research areas

Content

The Possible Future of the Ethereum Protocol (I): The Merge

Special thanks to Justin Drake, Hsiao-wei Wang, @antonttc, Anders Elowsson and Francesco for their feedback and reviews.

Originally, "The Merge" referred to the most important event since the launch of the Ethereum protocol: the long-awaited and hard-won transition from Proof of Work (PoW) to Proof of Stake (PoS). Today, Ethereum's Proof of Stake system has been running stably for nearly two years, and has performed very well in terms of stability, performance, and avoiding centralization risks. However, there are still some important areas for improvement in Proof of Stake.

My 2023 roadmap diagram split these into two buckets: improving technical features such as stability, performance, and accessibility to smaller validators, and making economic changes to address centralization risks. The former became the subject of The Merge, while the latter became part of The Scourge.

This post focuses on The Merge part: how can the technical design of proof of stake be improved, and what are some paths to get there?

This is not an exhaustive list of what is possible with Proof of Stake, but rather a list of ideas that are worthy of active consideration.

The Merge: Key Objectives

- Single slot finality

- Confirm and finalize transactions as quickly as possible while maintaining decentralization

- Improve the feasibility of staking for independent stakers

- Improved robustness

- Improving Ethereum’s ability to resist and recover from 51% attacks (including finality reversion, finality blocking, and censorship)

This chapter

- Single-slot finality and democratization of staking

- Single secret leader election

- Faster transaction confirmation

- Other research areas

Single Slot Finality and Democratizing Staking

What problem are we trying to solve?

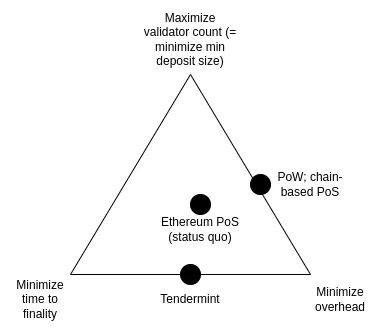

Currently, it takes 2-3 epochs (about 15 minutes) to finalize a block, and 32 ETH is required to become a staker. This was originally a compromise to strike a balance between the following three goals:

- Maximize the number of validators participating in staking (which directly means minimizing the minimum amount of ETH required to stake)

- Minimize finalization time

- Minimize the overhead of running a node, including the cost of downloading, verifying, and broadcasting signatures of other validators

These three goals are in conflict with each other: in order to achieve economic finality (meaning: an attacker needs to destroy a lot of ETH to roll back an already finalized block), every validator needs to sign two messages at each finalization. Therefore, if there are many validators, it either takes a long time to process all the signatures, or very powerful nodes are needed to process all the signatures at the same time.
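The tension can be made concrete with a rough back-of-the-envelope calculation. The sketch below is illustrative only: the total stake of 32 million ETH is a hypothetical round number, every validator is assumed to stake exactly the minimum (an upper bound on the validator count), and signature aggregation is ignored entirely.

```python
def signature_load(total_staked_eth: float, min_deposit_eth: float,
                   finality_seconds: float, messages_per_validator: int = 2) -> dict:
    """Rough signing load if every validator signs at each finalization."""
    # Upper bound on validator count: everyone stakes exactly the minimum.
    validators = int(total_staked_eth // min_deposit_eth)
    signatures = validators * messages_per_validator
    return {
        "validators": validators,
        "signatures_per_finalization": signatures,
        "signatures_per_second": signatures / finality_seconds,
    }

# Hypothetical total stake (an assumption, not a figure from the article).
TOTAL_STAKE_ETH = 32_000_000

# 32 ETH minimum, finality in about 15 minutes.
status_quo = signature_load(TOTAL_STAKE_ETH, 32, 15 * 60)
# 1 ETH minimum, finality in a single 12-second slot.
ideal = signature_load(TOTAL_STAKE_ETH, 1, 12)

print(status_quo)
print(ideal)
```

Under these assumptions, lowering the minimum deposit and shortening finality at the same time multiplies the per-second signing load by several orders of magnitude, which is the overhead the third goal tries to avoid.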

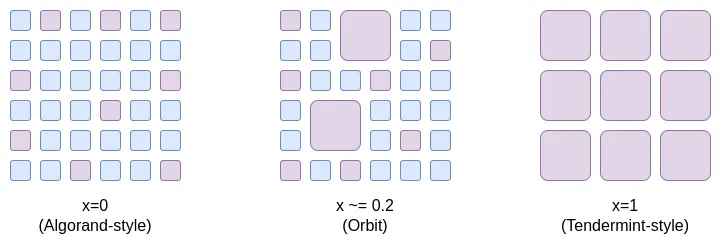

Note: all of this depends on a key goal of Ethereum: ensuring that even a successful attack carries a high cost for the attacker. This is what is meant by “economic finality”. Without this goal, we could solve the problem by randomly selecting a committee (as Algorand does) to finalize each slot. The problem with that approach is that an attacker who does control 51% of the validators can attack (revert a finalized block, censor, or delay finality) at very low cost: only the portion of their nodes that sits in the committee can be detected as participating in the attack and penalized, whether through slashing or a minority soft fork. The attacker could therefore attack the chain again and again. So if we want economic finality, a naive committee-based approach does not work, and at first glance it seems we really do need the full set of validators to participate.

Ideally, we would like to maintain economic finality while improving the status quo in two ways:

- Finalize blocks in a single time slot (ideally maintaining or even reducing the current 12 seconds) instead of 15 minutes

- Allow validators to stake with 1 ETH (down from 32 ETH)

The first goal is justified by two sub-goals, both of which can be understood as “aligning Ethereum’s properties with those of (more centralized) performance-focused L1 chains.”

First, it ensures that all Ethereum users actually benefit from the higher level of security that finality provides. Today, most users are unwilling to wait 15 minutes (Translator's note: so they do not actually get this guarantee); with single-slot finality, users would see their transactions finalized almost as soon as they are confirmed. Second, it simplifies the protocol and the surrounding infrastructure: users and applications no longer need to worry about the possibility of the chain reverting, except in the very rare case of an inactivity leak.

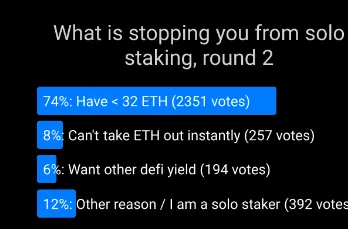

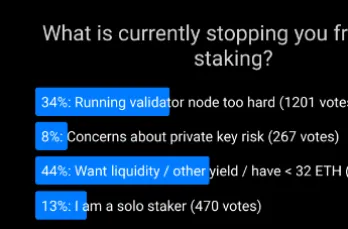

The second goal comes from the desire to support independent stakers. Poll after poll has shown that the 32 ETH minimum is the main factor preventing more people from staking independently. Lowering the minimum to 1 ETH would solve this problem, to the point where other issues become the dominant factor limiting independent staking.

Therein lies the challenge: the goals of faster block finality and more democratized staking conflict with the goal of minimizing overhead. In fact, this is the only reason we didn’t adopt single-slot finality in the first place. However, recent research has suggested some new ways to address this problem.

What is it and how is it done?

Single-slot finality involves using a consensus algorithm that finalizes blocks in one slot. This is not a difficult goal in itself: many algorithms, such as Tendermint consensus, already do this with good performance. Ethereum's unique requirement is inactivity leaks, a feature that Tendermint does not support and that allows the chain to keep running and eventually recover even if more than 1/3 of validators go offline. Fortunately, this requirement can now be met: there is a proposal to modify Tendermint-style consensus to accommodate inactivity leaks.

The hardest part of the problem is how to make single-slot finality work properly when the number of validators is very large, without incurring extremely high overhead for the node operators. There are several leading solutions to this problem:

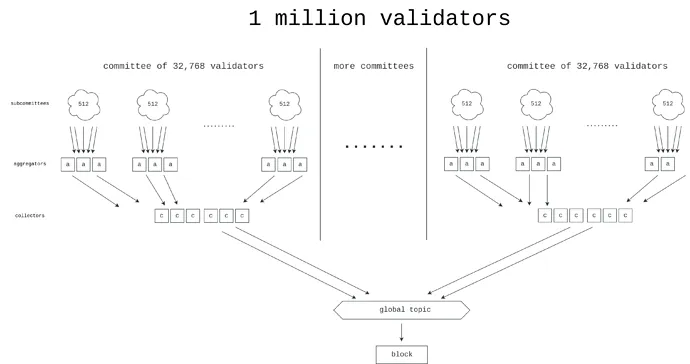

- Option 1: Brute force. Work towards better signature aggregation protocols, possibly using ZK-SNARKs, which would allow us to process signatures from millions of validators in each slot.

- Option 2: Orbit committees. A new mechanism that makes a randomly selected medium-sized committee responsible for finalizing the chain, but in a way that preserves the cost-of-attack properties we want.

One way to think about Orbit SSF is that it opens up a space of tradeoffs ranging from x=0 (Algorand-style committees with no economic finality) to x=1 (the status quo of Ethereum). It carves out a middle ground where Ethereum still has enough economic finality to be extremely secure, while gaining the efficiency of needing only a medium-sized random sample of validators to participate in each slot.

Orbit exploits the pre-existing heterogeneity in validator deposit sizes to obtain as much economic finality as possible, while still giving small validators a proportionate role. In addition, Orbit rotates committees slowly to ensure high overlap between adjacent quorums, so that its economic finality also holds across committee rotations.

- Option 3: Two-tier staking. A mechanism with two classes of stakers, one with a higher deposit requirement and one with a lower deposit requirement. Only the higher-deposit tier would participate directly in providing economic finality. Various proposals have been made about what rights and responsibilities the lower-deposit tier should have (see, for example, the Rainbow staking post). Common ideas include:

- The right to delegate stake to a higher-tier staker

- A random sample of lower-tier stakers attesting to, and being needed to finalize, each block

- Right to produce inclusion lists
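Returning to Option 2, the idea of exploiting heterogeneity in deposit sizes can be illustrated with a toy comparison. This is a sketch under loudly stated assumptions, not Orbit's actual selection rule: the validator balances are hypothetical, "largest-first" is a crude stand-in for favoring large deposits, committee rotation is not modeled, and the attack cost is approximated as one third of the signing stake (the amount that must double-sign under a 2/3 quorum).

```python
import random

def slashable_stake(signing_balances):
    """Approximate attack cost: at least 1/3 of the signing stake must
    double-sign to finalize two conflicting blocks under a 2/3 quorum."""
    return sum(signing_balances) / 3

# Hypothetical validator set: many 1 ETH validators plus a few large ones.
balances = [1.0] * 100_000 + [2048.0] * 500
committee_size = 8_000

rng = random.Random(0)
# Algorand-style: every validator is equally likely to be picked.
uniform_committee = rng.sample(balances, committee_size)
# Crude stand-in for favoring large deposits: take the largest balances first.
largest_first_committee = sorted(balances, reverse=True)[:committee_size]

full = slashable_stake(balances)
for label, committee in [("uniform sample", uniform_committee),
                         ("largest-first", largest_first_committee)]:
    share = slashable_stake(committee) / full
    print(f"{label}: {share:.1%} of the full-set attack cost")
```

With the same committee size, the committee that includes the large deposits retains most of the full-set attack cost, while the uniform sample retains only a small fraction. A real design also has to give small validators a proportionate role, which this toy deliberately ignores.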

What are the connections with existing research?

- Paths toward single slot finality (2022): https://notes.ethereum.org/@vbuterin/single_slot_finality

- A concrete proposal for a single slot finality protocol for Ethereum (2023): https://eprint.iacr.org/2023/280

- Orbit SSF: https://ethresear.ch/t/orbit-ssf-solo-staking-friendly-validator-set-management-for-ssf/19928

- Further analysis on Orbit-style mechanisms: https://notes.ethereum.org/@anderselowsson/Vorbit_SSF

- Horn, signature aggregation protocol (2022): https://ethresear.ch/t/horn-collecting-signatures-for-faster-finality/14219

- Signature merging for large-scale consensus (2023): https://ethresear.ch/t/signature-merging-for-large-scale-consensus/17386?u=asn

- Signature aggregation protocol proposed by Khovratovich et al: https://hackmd.io/@7dpNYqjKQGeYC7wMlPxHtQ/BykM3ggu0#/

- STARK-based signature aggregation (2022): https://hackmd.io/@vbuterin/stark_aggregation

- Rainbow staking: https://ethresear.ch/t/unbundling-staking-towards-rainbow-staking/18683

What else is there to do, and what trade-offs need to be made?

There are four main possible paths to choose from (we can also take hybrid paths):

1. Maintaining the status quo

2. Brute force SSF

3. Orbit SSF

4. SSF with two-tier staking

(1) means doing nothing and leaving staking as is, but this leaves Ethereum’s security experience and staking centralization properties worse than they could be.

(2) brute-forces the problem with high tech. Doing this requires aggregating a very large number of signatures (1 million+) in a very short period of time (5-10 seconds). This approach can be seen as minimizing systemic complexity by going all-out on accepting encapsulated complexity.

(3) avoids “high tech” and solves the problem by cleverly rethinking the protocol assumptions: we relax the “economic finality” requirement so that attacks must still be expensive, but we accept a cost of attack perhaps 10 times lower than today (for example, $2.5 billion instead of $25 billion). It is a common view that Ethereum today has far more economic finality than it needs and that its main security risks lie elsewhere, so this is arguably an acceptable sacrifice.

The main work here is verifying that the Orbit mechanism is safe and has the properties we want, and then fully formalizing and implementing it. In addition, EIP-7251 (increase max effective balance) allows voluntary validator balance consolidation, which immediately reduces chain verification overhead somewhat and acts as an effective initial stage for an Orbit rollout.

(4) avoids both clever rethinking and high tech, but it creates a two-tier staking system that still has centralization risks. The risks depend heavily on the specific rights that the lower staking tier gets. For example:

- If lower-tier stakers are required to delegate their attesting rights to higher-tier stakers, then delegation could centralize, and we would end up with two highly centralized tiers of staking.

- If a random sample of the lower tier is required to approve each block, then an attacker could block finality by spending only a very small amount of ETH.

- If lower-tier stakers can only produce inclusion lists, then the attestation layer may remain centralized, at which point a 51% attack on the attestation layer can censor the inclusion lists themselves.

It is possible to combine multiple strategies, for example:

(1+2): Use brute force techniques to reduce the minimum deposit size without single-slot finality. The amount of aggregation required is 64 times less than in the pure (2) case, making the problem much simpler.

(1+3): Add Orbit, but without single-slot finality.

(2+3): Implement Orbit SSF with conservative parameters (e.g. a 128k validator committee instead of 8k or 32k), and use brute force techniques to make it extremely efficient.

(1+4): Add rainbow staking without single-slot finality.

How does it interact with the rest of the roadmap?

Among other benefits, single-slot finality reduces the risk of certain types of multi-block MEV attacks. Additionally, in a world with single-slot finality, attester-proposer separation (APS) designs and other in-protocol block production pipelines would need to be designed differently.

The downside of the brute force strategy is that the goal of reducing the slot time becomes more difficult to achieve.

Single secret leader election

What problem are we trying to solve?

Today, it is known in advance which validator will propose the next block. This creates a security vulnerability: an attacker can monitor the network, determine which validators correspond to which IP addresses, and launch a DoS attack on the validator when it is about to propose a block.

What is it and how is it done?

The best way to solve the DoS problem is to hide the information about which validator will produce the next block, at least until the block is actually produced. Note that if we drop the "single" requirement, the problem is easy: one solution is to let anyone create the next block, but require the RANDAO reveal to be less than 2^256 / N, where N is the number of validators. On average, only one validator will meet this requirement, but sometimes there will be two or more, and sometimes there will be none. Combining the "secrecy" requirement with the "single" requirement has long been the hard problem.
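The behavior of the threshold rule (one eligible proposer on average, but sometimes zero or several) can be checked with a small simulation. This is a minimal sketch, not protocol code: a SHA-256 hash of the slot and validator index stands in for each validator's secret RANDAO reveal, and the validator and slot counts are arbitrary illustrative values.

```python
import hashlib
from collections import Counter

HASH_SPACE = 2**256

def eligible_proposers(num_validators: int, slot: int) -> int:
    """Count validators whose stand-in reveal falls below 2^256 / N."""
    threshold = HASH_SPACE // num_validators
    count = 0
    for index in range(num_validators):
        # SHA-256 of (slot, index) is a stand-in for a secret RANDAO reveal.
        digest = hashlib.sha256(f"{slot}:{index}".encode()).digest()
        if int.from_bytes(digest, "big") < threshold:
            count += 1
    return count

N = 500        # illustrative validator count
SLOTS = 1_000  # illustrative number of simulated slots

# histogram[k] = number of slots in which exactly k validators were eligible.
histogram = Counter(eligible_proposers(N, slot) for slot in range(SLOTS))
for k in sorted(histogram):
    print(f"{k} eligible proposers: {histogram[k]} slots")
```

Because each validator is eligible independently with probability 1/N, the count per slot is approximately Poisson-distributed with mean 1, so a substantial share of slots end up with no proposer or with more than one. That is why this simple rule gives secrecy but not the "single" property.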

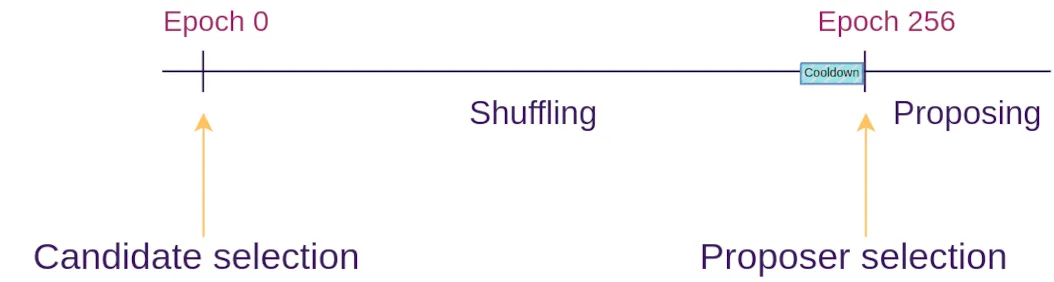

Single secret leader election protocols solve this by using cryptographic techniques to create a "blinded" validator ID for each validator, and then giving many proposers the opportunity to shuffle and re-blind the pool of blinded IDs (similar to how a mixnet works). During each slot, a random blinded ID is selected. Only the owner of that blinded ID can generate a valid proof to propose the block, and no one else knows which validator that blinded ID corresponds to.

What are the connections with existing research?

- Paper by Dan Boneh (2020): https://eprint.iacr.org/2020/025.pdf

- Whisk (Ethereum specific proposal, 2022): https://ethresear.ch/t/whisk-a-practical-shuffle-based-ssle-protocol-for-ethereum/11763

- Single secret leader election tag on ethresear.ch: https://ethresear.ch/tag/single-secret-leader-election

- Simplified SSLE using ring signatures: https://ethresear.ch/t/simplified-ssle/12315

What else is there to do, and what trade-offs need to be made?

Realistically, what remains is to find and implement a protocol simple enough that we are comfortable deploying it on mainnet. We place a high value on Ethereum being a reasonably simple protocol and do not want its complexity to grow further. The SSLE implementations seen so far add hundreds of lines of spec code and introduce new assumptions in complicated cryptography. There is also the question of how to build a sufficiently efficient quantum-resistant SSLE.

It may ultimately be the case that the “marginal additional complexity” of SSLE only drops low enough if we take the plunge and introduce the machinery for general-purpose zero-knowledge proofs into the Ethereum protocol at L1 for other reasons (e.g. state tries, ZK-EVM).

Another option is to not bother with SSLE at all, and instead use out-of-protocol mitigations (e.g. at the p2p layer) to address the DoS problem.

How does it interact with the rest of the roadmap?

If we add an attester-proposer separation (APS) mechanism such as execution tickets, then execution blocks (i.e. blocks containing Ethereum transactions) will no longer need SSLE, since we can rely on specialized block builders. However, consensus blocks (i.e. blocks containing protocol messages such as attestations, and possibly pieces of inclusion lists) would still benefit from SSLE.

Faster transaction confirmation

What problem are we trying to solve?

There is value in further reducing Ethereum’s transaction confirmation time, for example from 12 seconds to 4 seconds. Doing so would significantly improve the user experience of L1 and rollups while making DeFi protocols more efficient. It would also make it easier for L2s to decentralize, because a large class of L2 applications could run on based rollups, reducing the need for L2s to build their own committee-based decentralized sequencing.

What is it and how is it done?

There are roughly two techniques here:

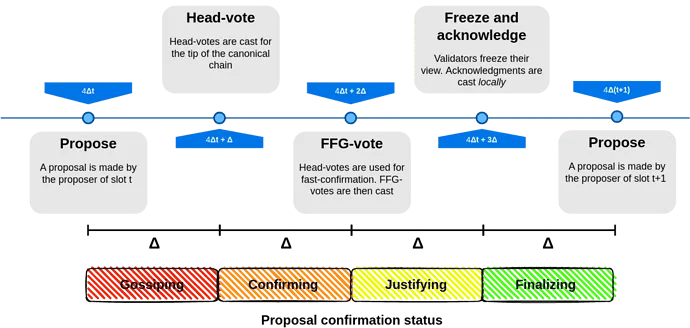

- Reduce the slot time, down to, say, 8 seconds or 4 seconds. This does not necessarily mean 4-second finality: finality inherently takes three rounds of communication, so we can make each round of communication a separate block, which would get at least a preliminary confirmation after 4 seconds.

- Allow proposers to publish preconfirmations over the course of a slot. In the extreme, a proposer could include transactions in its block in real time as it sees them, and immediately publish a preconfirmation message for each transaction ("My first transaction is 0x1234...", "My second transaction is 0x5678..."). The case of a proposer publishing two conflicting confirmations can be handled in two ways: (i) by slashing the proposer, or (ii) by using attesters to vote on which one came first.

What are the connections with existing research?

- Based preconfirmations: https://ethresear.ch/t/based-preconfirmations/17353

- Protocol-enforced proposer commitments (PEPC): https://ethresear.ch/t/unbundling-pbs-towards-protocol-enforced-proposer-commitments-pepc/13879

- Staggered periods across parallel chains (a 2018-era idea for achieving low latency)

What else is there to do, and what trade-offs need to be made?

It is far from clear how practical it is to reduce slot times. Even today, stakers in many parts of the world struggle to get their attestations included fast enough. Attempting 4-second slot times risks centralizing the validator set, because latency would make it impractical to be a validator outside a few geographically privileged regions.

The weakness of the proposer preconfirmation approach is that it can greatly improve the average case inclusion time, but not the worst case: if the current proposer is performing well, the transaction will be preconfirmed in 0.5 seconds instead of being included in (on average) 6 seconds. But if the current proposer is offline or performing poorly, it will still take a full 12 seconds for the next slot to start and provide a new proposer.
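The gap between the average case and the worst case can be sketched with a simple latency model. The assumptions here are illustrative: transactions arrive uniformly at random within a slot, the 12-second slot time and 0.5-second preconfirmation delay are taken from the figures above, and when the current proposer is unresponsive the next slot's proposer is assumed to be online.

```python
import random

SLOT_SECONDS = 12.0     # current slot time
PRECONF_SECONDS = 0.5   # preconfirmation delay with a responsive proposer

def baseline_latency(arrival: float) -> float:
    """No preconfirmations: the transaction waits for the next slot boundary."""
    return SLOT_SECONDS - arrival

def preconf_latency(arrival: float, proposer_online: bool) -> float:
    """With preconfirmations: fast if the current proposer responds; otherwise
    wait for the next slot's proposer (assumed online) and its preconfirmation."""
    if proposer_online:
        return PRECONF_SECONDS
    return (SLOT_SECONDS - arrival) + PRECONF_SECONDS

rng = random.Random(1)
arrivals = [rng.uniform(0.0, SLOT_SECONDS) for _ in range(100_000)]

mean_baseline = sum(baseline_latency(a) for a in arrivals) / len(arrivals)
best_case_preconf = preconf_latency(3.0, proposer_online=True)
worst_case_preconf = preconf_latency(0.0, proposer_online=False)

print(f"mean baseline wait:  {mean_baseline:.2f} s")
print(f"preconf, online:     {best_case_preconf:.2f} s")
print(f"preconf, worst case: {worst_case_preconf:.2f} s")
```

In this model the average wait drops from roughly half a slot to the preconfirmation delay, but a transaction that arrives just after an unresponsive proposer's slot begins still waits about a full slot.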

Additionally, there is an open question of how to incentivize preconfirmations. Proposers have an incentive to maximize their optionality for as long as possible. If attesters sign off on the timeliness of preconfirmations, then transaction senders can make part of their fee conditional on an immediate preconfirmation, but this places an extra burden on attesters and may make it harder for them to keep acting as a neutral "dumb pipe".

On the other hand, if we do not attempt this and keep finality times at 12 seconds (or longer), the ecosystem will put more weight on preconfirmation mechanisms built by Layer 2s, and cross-L2 interactions will take longer.

How does it interact with the rest of the roadmap?

Proposer-based preconfirmations realistically depend on an attester-proposer separation (APS) mechanism such as execution tickets. Otherwise, the pressure to provide real-time preconfirmations may be too centralizing for regular validators.

How short the slot times can be depends on the slot structure, which depends a lot on what APS we end up implementing, inclusion lists, etc. Some slot structures contain fewer rounds and are thus friendlier to short slot times, but they make concessions elsewhere.

Other research areas

51% Attack Recovery

It is often assumed that if a 51% attack occurs (including attacks that cannot be cryptographically proven, such as censorship), the community will come together to implement a minority soft fork, ensuring that the good guys win and the bad guys are inactivity-leaked or slashed. However, this over-reliance on the social layer is arguably unhealthy. We can try to reduce reliance on the social layer by making the recovery process as automated as possible.

Full automation is impossible, because it would be equivalent to a consensus algorithm with >50% fault tolerance, and we already know the (very restrictive) mathematically provable limitations of such algorithms. But we can achieve partial automation: for example, a client could automatically refuse to accept a chain as finalized, or even as the head of the fork choice, if it censors transactions that the client has been seeing for long enough. A key goal is to ensure that the bad guys in an attack at least cannot get a quick, clean victory.
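A client-side rule of this kind could look roughly like the sketch below. Everything in it is hypothetical: the `CandidateChain` type, the function names, and the `patience_seconds` parameter are invented for illustration, and a real client would also need to account for invalid or underpriced transactions, full blocks, and differing mempool views.

```python
from dataclasses import dataclass, field

@dataclass
class CandidateChain:
    """Hypothetical view of a chain: its name and the transactions it includes."""
    name: str
    included_txs: set = field(default_factory=set)

def censored_txs(chain, first_seen, now, patience_seconds):
    """Transactions this client has seen for at least `patience_seconds`
    that the chain still has not included."""
    return {
        tx for tx, seen_at in first_seen.items()
        if now - seen_at >= patience_seconds and tx not in chain.included_txs
    }

def acceptable_heads(chains, first_seen, now, patience_seconds):
    """Keep only chains that are not censoring long-pending transactions."""
    return [c for c in chains
            if not censored_txs(c, first_seen, now, patience_seconds)]

# The client first saw 0xaaa at t=0 and 0xbbb at t=550 (seconds).
first_seen = {"0xaaa": 0.0, "0xbbb": 550.0}
chains = [
    CandidateChain("honest", {"0xaaa"}),
    CandidateChain("censoring", set()),
]

accepted = acceptable_heads(chains, first_seen, now=600.0, patience_seconds=300.0)
print([c.name for c in accepted])  # ['honest']
```

The rule does not decide which chain wins; it only prevents this client from silently following a chain that has ignored a transaction for longer than its patience window.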

Raising the voting threshold

Today, a block is finalized once 67% of stakers support it. Some argue that this is too aggressive. In the entire history of Ethereum, there has been only one (very brief) failure of finality. If the threshold were raised to 80%, the number of additional non-finality periods would be relatively low, but Ethereum would gain security: in particular, many more contentious situations would result in a temporary pause in finality. This seems much healthier than an immediate win for the “wrong side”, whether that wrong side is an attacker or a client with a bug.

This also answers the question of “what is the point of independent stakers?”. Today, with most stakers already staking through pools, it seems very unlikely that independent stakers will ever hold 51% of staked ETH. However, getting independent stakers up to a quorum-blocking minority seems achievable if we work at it, especially if the quorum is 80% (so that only 21% is needed to block it). As long as independent stakers do not go along with a 51% attack (whether finality reversion or censorship), such an attack would not get a “clean victory”, and independent stakers would be motivated to help organize a minority soft fork.

It’s important to note that there is an interaction between quorum thresholds and the Orbit mechanism: if we end up using Orbit, then “what exactly does ‘21% of stakers’ mean?” becomes a more complex question, and depends to some extent on the distribution of validators.
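The arithmetic behind the quorum-blocking minority can be written out explicitly. The sketch below assumes a simple stake-weighted vote over the full validator set (so it ignores the Orbit interaction just mentioned) and uses the standard quorum-intersection bound for the overlap figure; the function names are illustrative.

```python
from fractions import Fraction

def blocking_minority(quorum: Fraction) -> Fraction:
    """Stake share that, once exceeded, can withhold votes and pause finality."""
    return 1 - quorum

def double_sign_overlap(quorum: Fraction) -> Fraction:
    """Minimum stake share that must have signed both of two conflicting
    finalized blocks (quorum intersection: 2q - 1)."""
    return max(2 * quorum - 1, Fraction(0))

for quorum in (Fraction(2, 3), Fraction(4, 5)):
    print(f"quorum {float(quorum):.0%}: "
          f"blocking minority > {float(blocking_minority(quorum)):.1%}, "
          f"overlap >= {float(double_sign_overlap(quorum)):.1%}")
```

With a two-thirds quorum, a minority needs more than one third of the stake to pause finality; with an 80% quorum, anything above 20% is enough, which is where the 21% figure comes from.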

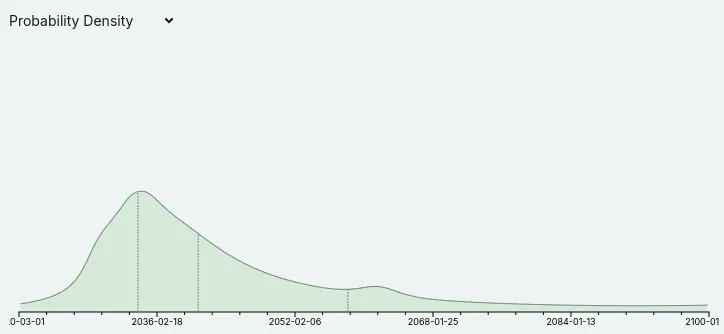

Quantum resistance

Metaculus forecasts currently suggest, albeit with large error bars, that quantum computers will likely begin to break cryptography some time in the 2030s.

Quantum computing experts such as Scott Aaronson have also recently started to take the possibility of quantum computers actually working in the medium term more seriously. This has implications for the entire Ethereum roadmap: it means that every part of the Ethereum protocol that currently relies on elliptic curves needs to have some hash-based or other quantum-resistant alternative. This specifically means that we cannot assume that we will be able to rely forever on the superior properties of BLS aggregation to handle signatures from large validator sets. This justifies the conservatism in the performance assumptions around proof-of-stake design, and is a reason to more aggressively develop quantum-resistant alternatives.
