As AI technology rapidly advances, ensuring its safe development has become a focus of industry attention. In September 2023, Anthropic released its Responsible Scaling Policy (RSP), built around a tiered framework called ASL (AI Safety Levels), to keep the scaling of AI technology within safety and ethical standards. The policy not only shapes the direction of Anthropic's own AI development, but may also set new safety standards for the entire industry.

So, what is ASL? How will it affect the future of AI? This article will analyze Anthropic's ASL policy in depth, exploring its objectives, operation, and potential impact.

What is ASL (AI Safety Levels)?

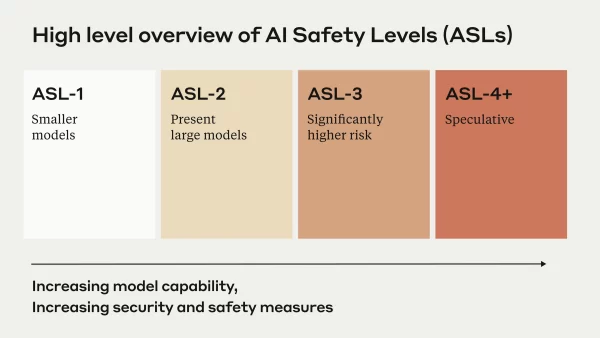

ASL, short for "AI Safety Levels", is the tiered safety framework at the core of the Responsible Scaling Policy published by the AI company Anthropic. It aims to ensure that AI systems do not pose uncontrollable risks as their capabilities increase. The policy defines a set of evaluations and, based on how a model performs on them, determines whether further scaling is allowed, so that technological progress and safety advance together.

How does ASL work? Three core mechanisms

Anthropic's ASL operates mainly through the following three mechanisms:

1. Risk assessment and testing

ASL uses rigorous testing to assess the potential risks of AI models and to check that their capabilities stay within acceptable limits. These tests span a range of evaluations, from a model's adversarial robustness to its potential for misuse.

2. Tiered management and capability thresholds

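Anthropic has defined tiered safety levels for AI models. When a model reaches a given capability threshold, the company decides under the ASL framework whether development may continue. For example, the published policy concentrates on catastrophic risks, such as meaningful help with chemical, biological, radiological, or nuclear weapons, or highly autonomous behavior; if a model shows such potential, Anthropic may hold back further scaling or release until matching safeguards are in place.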

3. External supervision and transparency

To increase the credibility of the policy, Anthropic invites external experts to oversee the implementation of ASL, ensuring that the policy is not just an internal standard, but also meets broader ethical and safety considerations. Furthermore, Anthropic emphasizes the transparency of the policy, regularly publishing reports to provide information to the public and regulatory authorities.
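
The interaction between the first two mechanisms, evaluation results feeding a tiered go/no-go decision, can be illustrated with a small sketch. This is not Anthropic's actual procedure: the tier names, the evaluation names, the scores, the thresholds, and the "move up one tier" rule are all hypothetical, chosen only to show the general shape of threshold-based gating.

```python
from dataclasses import dataclass
from enum import IntEnum


class SafetyLevel(IntEnum):
    """Hypothetical tiers; a higher value means stricter safeguards are required."""
    LEVEL_1 = 1
    LEVEL_2 = 2
    LEVEL_3 = 3


@dataclass(frozen=True)
class EvalResult:
    """One capability evaluation: its name, the measured score, and the trigger score."""
    name: str
    score: float
    threshold: float

    @property
    def crossed(self) -> bool:
        # A threshold counts as crossed when the score reaches or exceeds it.
        return self.score >= self.threshold


def required_level(results: list[EvalResult], current: SafetyLevel) -> SafetyLevel:
    """Illustrative rule: any crossed threshold raises the required tier by one."""
    if any(r.crossed for r in results) and current < SafetyLevel.LEVEL_3:
        return SafetyLevel(current + 1)
    return current


def may_proceed(results: list[EvalResult], current: SafetyLevel,
                safeguards: SafetyLevel) -> bool:
    """Scaling or release continues only if safeguards meet the required tier."""
    return safeguards >= required_level(results, current)


# Made-up evaluation results for a model currently operating at LEVEL_2.
results = [
    EvalResult("misuse_uplift", score=0.72, threshold=0.50),
    EvalResult("adversarial_robustness_failures", score=0.10, threshold=0.30),
]
needed = required_level(results, SafetyLevel.LEVEL_2)
print(needed.name, may_proceed(results, SafetyLevel.LEVEL_2, SafetyLevel.LEVEL_2))
# prints: LEVEL_3 False
```

In this toy run one evaluation crosses its threshold, so the required tier rises to LEVEL_3 and the model may not proceed while only LEVEL_2 safeguards are in place. The point of the design is that the decision to continue depends on safeguards catching up with measured capability, not on capability alone.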

Impact of ASL on the AI industry

Anthropic's introduction of ASL may have a far-reaching impact on the AI industry, including:

- Establishing AI safety standards: ASL may become a reference model for other AI companies, prompting more enterprises to adopt similar safety measures.

- Influencing AI regulatory policies: As governments increasingly focus on AI regulation, the introduction of ASL may influence future policy-making.

- Enhancing corporate credibility: Companies and users who are concerned about AI risks may be more willing to adopt AI products that comply with ASL standards.

ASL is an important guide for the future development of AI

Anthropic's ASL provides a responsible AI scaling strategy, attempting to strike a balance between technological development and safety. As AI becomes increasingly powerful, ensuring that it is not misused while maintaining transparency will remain a challenge for the industry. The emergence of ASL not only positions Anthropic as a leader in the field of AI safety, but may also provide a valuable reference for future AI regulation.

Whether ASL will become an industry standard remains to be seen, but responsible AI scaling is clearly an issue that can no longer be ignored.
