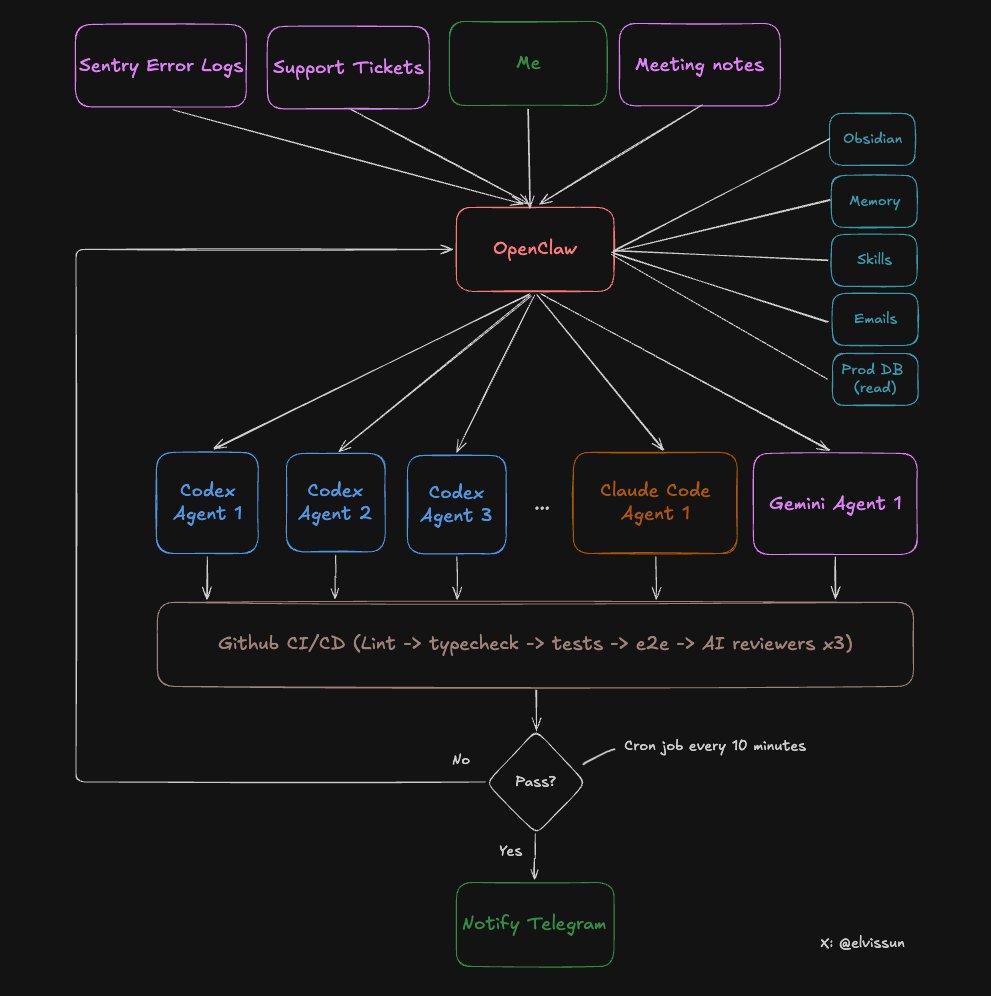

The author shares an agent orchestration system built on OpenClaw. It combines Codex, Claude Code, and Gemini into a "multi-agent development fleet" that is scheduled by a local orchestrator named Zoe and runs an automated closed loop from requirements to PR.

Article author: @elvissun

Article source: X platform

I no longer use Codex or Claude Code directly.

I use OpenClaw as my orchestration layer. My orchestrator, Zoe, is responsible for generating sub-agents, writing their prompts, selecting the most suitable model for different tasks, monitoring progress, and notifying me via Telegram when the PR can be merged.

Data from the past 4 weeks:

- 94 commits in a single day. That was my most productive day: I had three client calls and never opened the editor. On average, I make about 50 commits per day.

- Seven pull requests within 30 minutes. Going from idea to production is very fast because coding and validation are largely automated.

- Commits → MRR: I use this system to build a real B2B SaaS product and pair it with founder-led sales, which lets me deliver most feature requests on the same day. Speed translates directly into paying customers.

Before and after:

One month ago: Only using Claude Code/Codex

One month later: OpenClaw orchestrates Claude Code/Codex

My Git history now looks like I just hired a development team.

In reality, I simply upgraded my role from "managing Claude Code" to "managing an OpenClaw Agent, which in turn manages an entire fleet of Claude Code and Codex Agents."

Success rate:

Almost all small to medium-sized tasks can be completed in one go without human intervention.

Cost:

Claude costs approximately $100 per month and Codex approximately $90 per month, but you can start with as little as $20.

Why is this more efficient than using Codex or Claude Code directly?

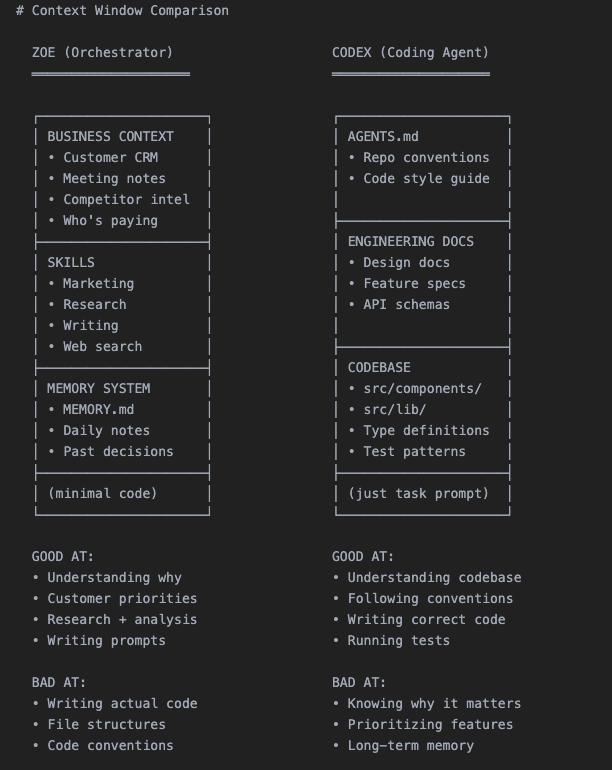

Codex and Claude Code know almost nothing about your business.

They see the code, not the overall business logic.

OpenClaw changes that.

It is the orchestration layer between me and all my agents. It keeps the complete business context (customer data, meeting minutes, historical decisions, successes and failures) in my Obsidian Vault and translates that history into precise prompts for each coding agent.

The coding agents focus on code.

The orchestrator is responsible for strategy.

High-level architecture

Last week, Stripe released their backend agent system, "Minions": parallel coding agents plus a central orchestration layer.

I ended up building a similar system by accident, except mine runs locally on my Mac mini.

Why is an Agent orchestrator necessary?

The context window is zero-sum.

You must choose what to fill it with:

- Fill it with code → there's no room for business context.

- Fill it with customer history → there's no room left for the codebase.

This is why a two-tier system works: each AI is loaded only with the specific content it needs.
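The trade-off can be made concrete with a minimal sketch. The token counts, item labels, and the 100,000-token budget below are illustrative assumptions, not figures from the author's setup; the point is only that one shared window crowds out the code, while two separate windows each fit what they need.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextItem:
    label: str   # hypothetical name, e.g. a note or a source directory
    kind: str    # "business" or "code"
    tokens: int  # rough, made-up token estimate


def pack_context(items, kinds, budget):
    """Keep items of the allowed kinds, in order, skipping any that would exceed the budget."""
    chosen, used = [], 0
    for item in items:
        if item.kind not in kinds:
            continue
        if used + item.tokens > budget:
            continue
        chosen.append(item)
        used += item.tokens
    return chosen


# Illustrative numbers only.
ITEMS = [
    ContextItem("meeting notes", "business", 30_000),
    ContextItem("customer history", "business", 50_000),
    ContextItem("src/templates/", "code", 60_000),
    ContextItem("src/billing/", "code", 30_000),
]

# Two tiers: each window holds only what that tier needs.
orchestrator_view = pack_context(ITEMS, {"business"}, budget=100_000)
coding_agent_view = pack_context(ITEMS, {"code"}, budget=100_000)

# One tier: business context fills the window and no code fits.
single_window = pack_context(ITEMS, {"business", "code"}, budget=100_000)
```

With these numbers, the single window ends up holding only the two business items, which is the zero-sum effect described above.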

Complete 8-step workflow

Below is a real-world case study process.

Step 1: Customer Request → Scope It with Zoe

The client wants to reuse configurations they have already set up across their team.

After the meeting, Zoe and I discussed the requirements.

Since the meeting minutes are automatically synced to my Obsidian Vault, I don't need to explain the background. We scoped the feature together and settled on a template system that lets them save and edit existing configurations.

Zoe does three things:

- Top up the customer's credits via the Admin API

- Read customer configuration from the production database (read-only permissions; Codex Agent will never have this permission).

- Launch the Codex Agent with a full context prompt.

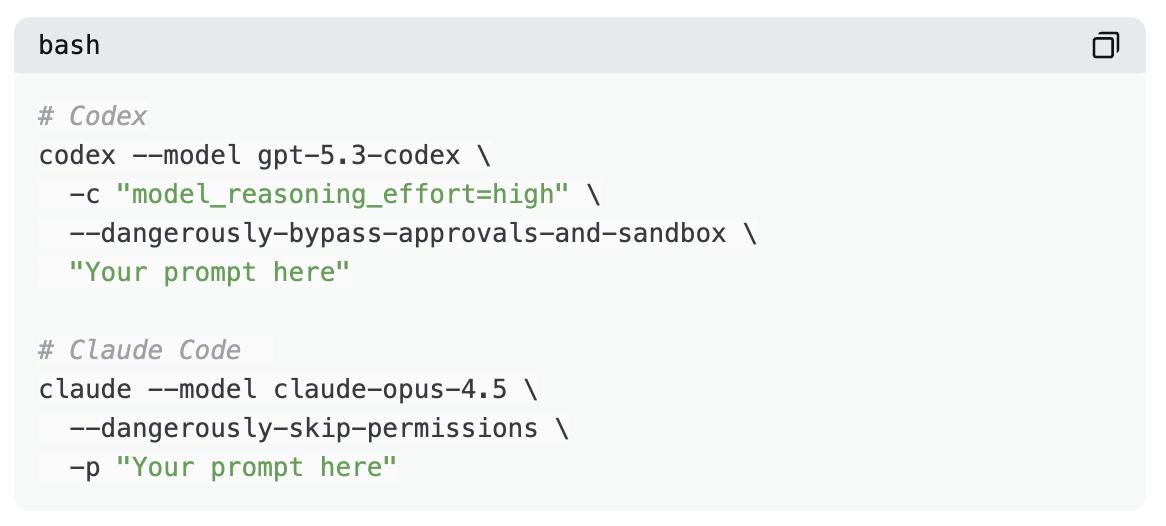

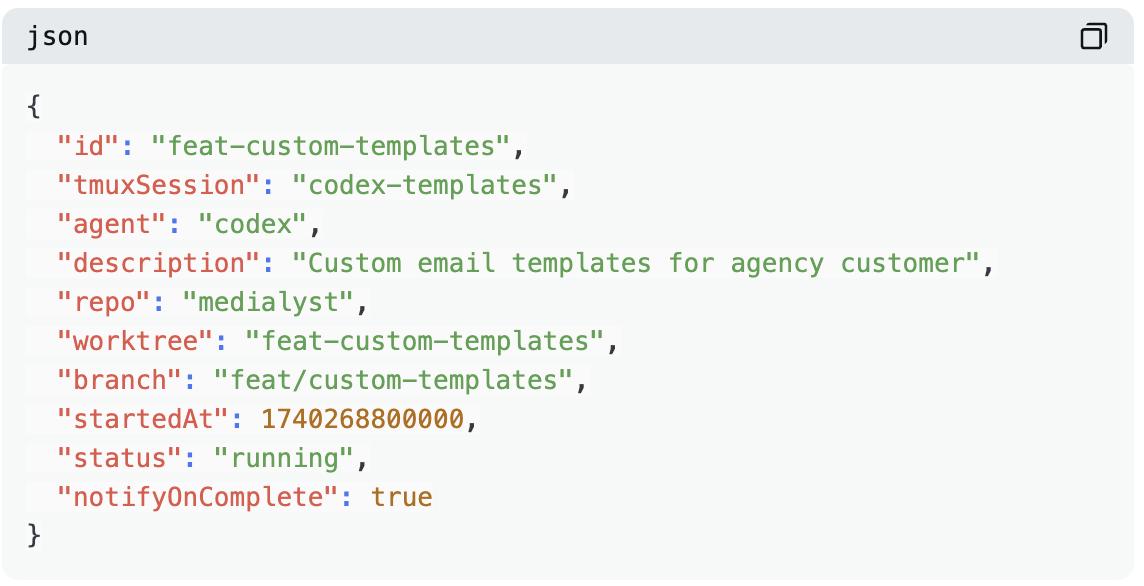

Step 2: Start the Agent

Each Agent has its own independent worktree and tmux session.

The advantage of tmux is that you can step in midway and redirect an agent without killing its process.

Task status is recorded in a JSON registry.
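A minimal sketch of what launching one agent could look like. The `git worktree add` and `tmux new-session` invocations are standard, but the directory layout, the `agent/` branch prefix, the registry fields, and the `agent-cli run` command are assumptions made for illustration; the source does not show its actual scripts.

```python
import json
from pathlib import PurePosixPath


def launch_commands(task_id, repo, agent_cmd, base="main"):
    """Build the commands for an isolated worktree and a detached tmux session.

    Returns argument lists only; pass each to subprocess.run to execute them.
    """
    worktree = PurePosixPath(repo).parent / "worktrees" / task_id  # assumed layout
    branch = f"agent/{task_id}"                                    # assumed naming
    return [
        ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(worktree), base],
        ["tmux", "new-session", "-d", "-s", task_id, "-c", str(worktree), agent_cmd],
    ]


def add_task(registry, task_id, agent, worktree):
    """Return a copy of the registry with one more running task (fields are assumptions)."""
    updated = dict(registry)
    updated[task_id] = {
        "agent": agent,
        "worktree": worktree,
        "status": "running",
        "retries": 0,
    }
    return updated


def dump_registry(registry):
    """Serialize the registry as the JSON text that would be written to disk."""
    return json.dumps(registry, indent=2, sort_keys=True)


# "agent-cli run" is a placeholder, not a real Codex or Claude Code invocation.
commands = launch_commands("feat-templates", "/repos/app", "agent-cli run")
registry = add_task({}, "feat-templates", "codex", "/repos/worktrees/feat-templates")
```

Because the session is detached and named after the task, stepping in later can be as simple as `tmux attach -t feat-templates`, or `tmux send-keys -t feat-templates "..." Enter` to type a correction into the running agent.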

Step 3: Automatic monitoring loop

Executes every 10 minutes via cron:

- Check if the tmux session exists.

- Check PR status

- Check CI

- A maximum of 3 automatic retries

- Notify me only when human intervention is required.
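The checks above amount to a small decision function that each cron run applies to every task. The sketch below is one way to express it; the state fields and action names are hypothetical, and gathering the inputs (for example with `tmux has-session -t <name>` for the session check) is left out.

```python
from dataclasses import dataclass
from typing import Optional

MAX_RETRIES = 3  # "a maximum of 3 automatic retries"


@dataclass
class TaskState:
    session_alive: bool       # does the tmux session still exist?
    pr_open: bool             # has the agent opened a PR?
    ci_status: Optional[str]  # "passed", "failed", "pending", or None without a PR
    retries: int = 0


def _retry_or_escalate(state):
    """Retry automatically until the limit is hit, then ask for a human."""
    return "retry" if state.retries < MAX_RETRIES else "notify_human"


def next_action(state):
    """Decide what one monitoring pass should do for a single task."""
    if state.pr_open:
        if state.ci_status == "passed":
            return "advance_to_review"
        if state.ci_status == "failed":
            return _retry_or_escalate(state)
        return "wait"  # CI still running
    if state.session_alive:
        return "wait"  # agent is still working
    # The session is gone and no PR exists: the agent died or gave up.
    return _retry_or_escalate(state)
```

Keeping the decision pure makes it easy to test without tmux or a real repository; the cron script only has to collect the state and carry out the returned action.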

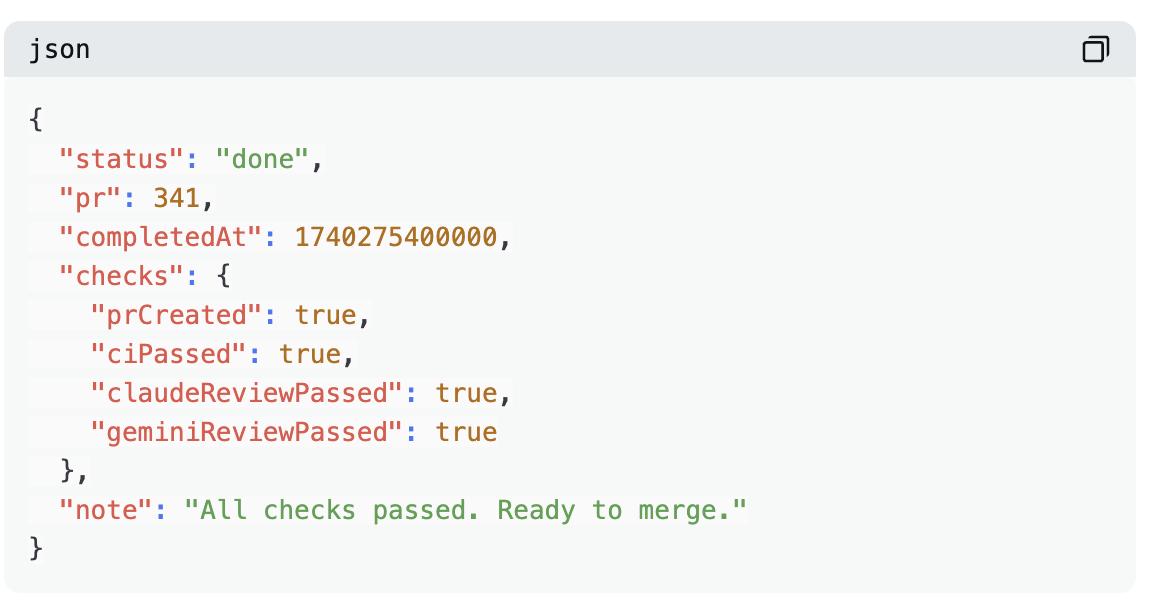

Step 4: Agent creates PR

Producing a PR is not the end.

The full definition of done includes:

- PR created

- No merge conflicts

- CI passed

- Codex approved

- Claude approved

- Gemini approved

- If UI elements are involved, screenshots must be attached.
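The checklist above can be expressed as a single function that reports what is still missing. This is a sketch under assumptions: the dictionary keys are invented for illustration, and how each value is obtained (GitHub API, CI status, reviewer output) is not covered in the source.

```python
REQUIRED_REVIEWERS = ("codex", "claude", "gemini")


def unmet_criteria(pr):
    """Return the completion criteria a PR does not yet satisfy (empty list means done)."""
    unmet = []
    if not pr.get("pr_created"):
        unmet.append("PR created")
    if pr.get("has_conflicts", True):  # unknown conflict state counts as unmet
        unmet.append("no merge conflicts")
    if pr.get("ci_status") != "passed":
        unmet.append("CI passed")
    approvals = set(pr.get("approved_by", []))
    for reviewer in REQUIRED_REVIEWERS:
        if reviewer not in approvals:
            unmet.append(f"{reviewer} approved")
    if pr.get("touches_ui") and not pr.get("has_screenshots"):
        unmet.append("screenshots attached")
    return unmet


example_pr = {
    "pr_created": True,
    "has_conflicts": False,
    "ci_status": "passed",
    "approved_by": ["codex", "gemini"],
    "touches_ui": True,
    "has_screenshots": False,
}
remaining = unmet_criteria(example_pr)
```

Returning the list of gaps rather than a bare boolean gives the orchestrator something specific to put into the next prompt or notification.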

Step 5: Three-Model Code Review

Each PR is reviewed by three AI models. Each one catches different things.

- Codex: Strongest on logic and edge-case handling

- Gemini: Strong on security and scalability issues

- Claude: Overly cautious; I usually ignore its non-critical suggestions.

Step 6: Automated Testing

Our CI pipeline runs a large number of automated tests:

- Lint and TypeScript checks

- Unit tests

- E2E tests

- Playwright tests against a preview environment (identical to prod)

Last week I added a new rule: if a PR changes the user interface, its description must include a screenshot, otherwise CI fails. This cuts review time significantly, because I can see exactly what changed without clicking through the preview.
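A rule like the screenshot requirement could be enforced by a small CI check along these lines. The list of UI file suffixes and the way an image is detected in the PR description are assumptions; the source does not say how its check decides that a PR touches the interface.

```python
import re

# Assumed definition of "UI files"; adjust to the project's layout.
UI_SUFFIXES = (".tsx", ".jsx", ".css", ".scss", ".html")

# Matches a Markdown image such as ![alt](url) or an HTML <img> tag.
IMAGE_PATTERN = re.compile(r"!\[[^\]]*\]\([^)]+\)|<img\s", re.IGNORECASE)


def screenshot_rule_passes(changed_files, pr_body):
    """Pass unless the PR changes UI files and its description contains no image."""
    touches_ui = any(path.endswith(UI_SUFFIXES) for path in changed_files)
    has_image = bool(IMAGE_PATTERN.search(pr_body))
    return (not touches_ui) or has_image
```

In CI, the script would exit non-zero when the function returns `False`, which fails the pipeline until a screenshot is added to the description.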

Step 7: Manual review

Once all three model reviews and CI pass, I get a Telegram notification.

My review only takes 5-10 minutes.

For many pull requests, I don't even read the code; I just look at the screenshots.

Step 8: Merge

A daily cron job cleans up leftover worktrees and the task registry.

Ralph Loop V2

Essentially, this is an upgraded version of the Ralph Loop.

Traditional Ralph Loops retrieve context from memory, generate output, evaluate results, and save the learned outcomes. However, most implementations use the same prompt in each loop. While the extracted experience does improve future retrieval performance, the prompt itself is static and immutable.

Our system is different.

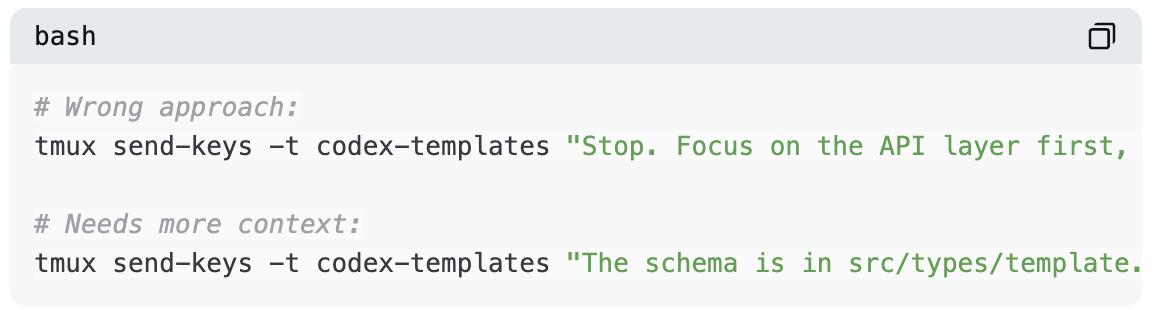

When an Agent fails, Zoe doesn't simply restart it with the same prompt. She analyzes the failure context to determine the cause and how to unblock it.

Did the agent run out of context?

"Focus only on these three files."

Did the agent go in the wrong direction?

"Stop. The client wants X, not Y. That's what they were saying in the meeting."

Does the agent need clarification?

"Here is the client's email, along with background on what their company does."

Zoe stays with each agent until the task is completed. She has context that the agent lacks: customer history, meeting minutes, past attempts, and why they failed. She uses this information to write a more precise prompt for each retry.
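The difference from a static-prompt loop can be sketched as follows. In the real system the diagnosis and the wording come from the orchestrator model; here the failure kinds, the templates, and the function name are hypothetical stand-ins that only show the shape of the idea: the retry prompt is derived from the failure, not copied from the first attempt.

```python
# Hypothetical failure kinds mapped to the style of correction quoted above.
FAILURE_HINTS = {
    "out_of_context": "Focus only on these files: {files}.",
    "wrong_direction": "Stop. The client wants {wanted}, not {built}.",
    "needs_clarification": "Background from the client: {background}",
}


def build_retry_prompt(original_prompt, failure_kind, **details):
    """Prefix the original task with a correction specific to how the last attempt failed."""
    if failure_kind not in FAILURE_HINTS:
        raise ValueError(f"unknown failure kind: {failure_kind}")
    hint = FAILURE_HINTS[failure_kind].format(**details)
    return f"{hint}\n\n{original_prompt}"


retry_prompt = build_retry_prompt(
    "Add a template system for saved configurations.",
    "wrong_direction",
    wanted="editable templates",
    built="read-only presets",
)
```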

She also doesn't wait for me to assign tasks.

She actively seeks out jobs:

Morning: Scan Sentry → find 4 new errors → launch 4 agents to investigate and fix them

Post-meeting: Scan the meeting minutes → flag the 3 new features customers mentioned → launch 3 Codex agents

Evening: Scan Git logs → start Claude Code to update the changelog and client documentation

After a client call, I went for a walk. When I came back, I opened Telegram:

"7 PRs are ready for review. 3 new features and 4 bug fixes."

When an agent succeeds, the successful pattern is recorded:

"This prompt structure works well for billing features."

"Codex requires type definitions to be provided in advance."

"The test file path must be included."

Reward signals include:

- CI passed

- All three AI reviews passed.

- Merged by a human

Any failure will trigger a loop.

Over time, Zoe writes better and better prompts because she remembers what actually shipped.
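A minimal sketch of how the reward signals could gate what gets remembered. The outcome fields, the in-memory dictionary, and the return values are assumptions for illustration; the source does not describe where or in what format Zoe stores these notes.

```python
def is_success(outcome):
    """All three reward signals must hold: CI, three AI reviews, and a human merge."""
    return (
        outcome.get("ci_passed") is True
        and outcome.get("reviews_passed", 0) == 3
        and outcome.get("human_merged") is True
    )


def record_outcome(memory, task_type, prompt_note, outcome):
    """Store the prompt note under its task type on success; otherwise signal another loop."""
    if is_success(outcome):
        memory.setdefault(task_type, []).append(prompt_note)
        return "recorded"
    return "retry"


memory = {}
shipped = {"ci_passed": True, "reviews_passed": 3, "human_merged": True}
result = record_outcome(memory, "billing", "Include the test file path.", shipped)
```

Only notes attached to work that passed every signal end up in `memory`, so later prompts for the same task type draw on patterns that are known to have shipped.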

Choose the correct Agent

Not all coding agents are the same.

A quick reference:

Codex is my main tool.

It handles backend logic, complex bugs, multi-file refactoring, and tasks requiring cross-codebase reasoning. It's slower, but very comprehensive. I use it for 90% of my tasks.

Claude Code is faster and better at front-end development. It has fewer permission issues, making it ideal for Git operations. (I used to use it more for daily development, but Codex 5.3 is now even stronger and faster.)

Gemini has a different strength: design.

When creating a beautiful UI, I first have Gemini generate the HTML/CSS specifications, and then hand them over to Claude Code for implementation in the component system. Gemini is responsible for the design, and Claude is responsible for the construction.

Zoe selects the appropriate Agent for each task and routes the output between them:

- Billing system bug → Codex

- Button style fix → Claude Code

- New dashboard design → Gemini first, then Claude Code for implementation
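The routing examples above can be illustrated with a small lookup. In the described system Zoe, a model, makes this choice; the keyword table below is a deliberately simple stand-in, and the keywords and agent identifiers are assumptions.

```python
import re

# Checked in order; the first rule with a matching keyword wins.
ROUTES = [
    ({"design", "dashboard", "mockup"}, ["gemini", "claude-code"]),  # design, then build
    ({"button", "style", "frontend", "css"}, ["claude-code"]),
]
DEFAULT_ROUTE = ["codex"]  # backend logic, complex bugs, multi-file refactors


def route(task_description):
    """Return the ordered list of agents that should handle a task."""
    words = set(re.findall(r"[a-z]+", task_description.lower()))
    for keywords, agents in ROUTES:
        if words & keywords:
            return list(agents)
    return list(DEFAULT_ROUTE)
```

A multi-agent route such as `["gemini", "claude-code"]` means the output of the first agent (the HTML/CSS specification) becomes the input of the second.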

How to build this system

Copy the entire article to OpenClaw and then tell it:

"Implement this Agent Swarm architecture for my codebase."

It reads the architecture description, creates scripts, establishes the directory structure, and configures cron monitoring.

Completed in 10 minutes.

I have no course to sell you.

Unexpected bottleneck

The ceiling I'm currently facing is memory.

Each Agent needs its own worktree.

Each worktree requires its own node_modules.

Each agent must run builds, type checks, and tests.

Running five agents simultaneously means:

- Five parallel TypeScript compilers

- Five test runners

- Five sets of dependencies loaded into memory

My 16GB Mac mini can run 4-5 agents at most; any more and it starts swapping. And I have to pray they don't all build at the same time.

So I bought a Mac Studio M4 Max with 128GB of RAM ($3,500) specifically to run this system. It arrives at the end of March, and I will share whether it was worth it.
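As a rough back-of-the-envelope check, the two machines can be compared by scaling the observed limit linearly with RAM. This assumes memory is the only constraint and ignores what the operating system itself uses, so it is an optimistic estimate and not a measurement.

```python
def capacity_range(old_gb, old_agents_range, new_gb):
    """Scale an observed (low, high) agent limit linearly with total RAM."""
    low, high = old_agents_range
    # Integer arithmetic avoids floating-point rounding in the division.
    return (new_gb * low // old_gb, new_gb * high // old_gb)


# 16GB ran 4-5 agents; estimate what 128GB might run under the same assumption.
estimate = capacity_range(16, (4, 5), 128)
```

Under this assumption the 128GB machine would hold roughly 32 to 40 agents, at which point CPU or API rate limits may well become the bottleneck before memory does.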

Next step: The one-person million-dollar company

In 2026, we will see a large number of "one-person million dollar companies".

For those who understand how to build recursively self-improving agents, the leverage is enormous.

It looks like this:

An AI orchestrator as an extension of yourself (like Zoe is for me).

Delegate tasks to specialized agents:

- Engineering

- Customer Support

- Operations and maintenance

- Marketing

Each agent focuses on its area of expertise.

You maintain a high level of focus and complete control.

The next generation of entrepreneurs will no longer hire a team of 10 people to do what one person with the right system can do.

They will structure their companies like this: stay lean, iterate quickly, and ship daily.

Right now, the space is flooded with AI-generated junk content.

There's been a lot of hype surrounding Agents and "Task Consoles," but there haven't been any real, tangible results.

It's a flashy demonstration, but it has no real-world value.

I want to do the opposite:

Less hype, more documentation of the actual business development process.

Real customers.

Real income.

Real commits that shipped to production.

Real losses, too.

What am I doing?

Agentic PR: a one-person company taking on corporate PR agencies.

It uses agents to help startups gain media exposure without paying a $10,000 monthly retainer.

If you want to see how far I can go, stay tuned.