Inspired by Karpathy's autoresearch, I taught VibeHQ to evolve itself—not just a single agent, but the entire multi-agent collaboration mechanism.

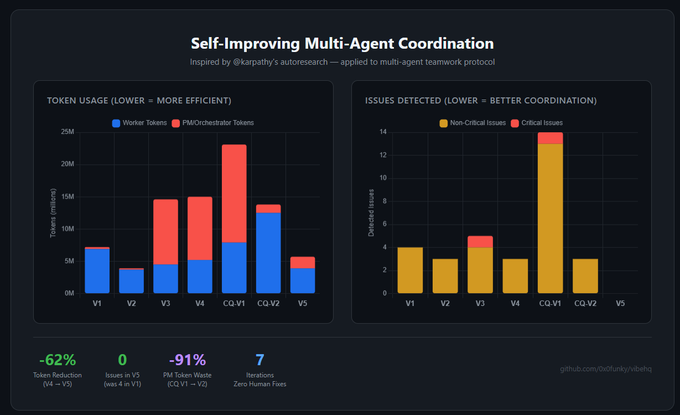

Seven fully automated runs with zero human intervention:

• Token usage: 7.2M → 5.7M (down 62% from the peak)

• Coordination failures (duplicated work, etc.): 4 → 0

• PM token waste: -91%

Loop: benchmark → quantify collaboration and have an LLM analyze the failure modes → /optimize-protocol rewrites the coordination code → rebuild → repeat.

The AI observes where the agent team's collaboration fails, analyzes why, and then modifies the coordination logic in its own source code, all without human intervention. In effect, the AI organizes its own team's collaboration.
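To make the loop concrete, here is a minimal Python sketch of its shape. It is not VibeHQ's actual code: `Protocol`, `RunReport`, `evolve`, and the toy stand-in functions are all hypothetical names invented for illustration, and the `/optimize-protocol` step is modeled as a plain function that returns a new protocol version instead of rewriting and rebuilding real source code.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Protocol:
    """Hypothetical stand-in for the team's coordination rules."""
    version: int = 0
    rules: List[str] = field(default_factory=list)


@dataclass
class RunReport:
    """Hypothetical metrics collected from one benchmark run."""
    total_tokens: int
    coordination_failures: List[str]


def evolve(
    protocol: Protocol,
    benchmark: Callable[[Protocol], RunReport],
    analyze: Callable[[RunReport], List[str]],
    optimize: Callable[[Protocol, List[str]], Protocol],
    rounds: int,
) -> Tuple[Protocol, List[RunReport]]:
    """benchmark -> analyze failure modes -> rewrite protocol -> repeat."""
    history: List[RunReport] = []
    for _ in range(rounds):
        report = benchmark(protocol)      # run the agent team on a task
        history.append(report)
        findings = analyze(report)        # in the post, an LLM does this step
        if findings:                      # only rewrite when something failed
            protocol = optimize(protocol, findings)  # stands in for rewrite + rebuild
    return protocol, history


# Toy stand-ins so the sketch runs without any agents or LLM calls.
KNOWN_FAILURES = ["duplicate work", "stale context"]


def toy_benchmark(p: Protocol) -> RunReport:
    # Assumption: each failure mode not covered by a rule costs extra tokens.
    failures = [f for f in KNOWN_FAILURES if f"avoid {f}" not in p.rules]
    return RunReport(total_tokens=1000 + 500 * len(failures),
                     coordination_failures=failures)


def toy_analyze(report: RunReport) -> List[str]:
    return [f"avoid {f}" for f in report.coordination_failures]


def toy_optimize(p: Protocol, findings: List[str]) -> Protocol:
    # Fix one failure mode per round, producing a new protocol version.
    return Protocol(version=p.version + 1, rules=p.rules + findings[:1])


if __name__ == "__main__":
    final, history = evolve(Protocol(), toy_benchmark, toy_analyze,
                            toy_optimize, rounds=3)
    print(final.version, [r.total_tokens for r in history])
```

Passing the four steps in as functions keeps the loop itself trivial. In a real system the interesting work would sit inside `benchmark`, `analyze`, and `optimize`, which here are only placeholders.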

Looking at related work: autoresearch automatically optimizes model training. Ralph ran a single agent in an autonomous loop. Gastown orchestrated 20-30 Claude Code instances at once, but showed no evolutionary capability. All of these are very powerful, but in the end they focus on improving the capabilities of individual agents.

No one is evolving teamwork itself—how to divide tasks, how to avoid conflict, how to share context, how to unblock each other. Just like in the real world, AI teams also need time to gel.

Imagine what this will evolve into:

• Agents develop their own team culture and working synergy.

• The team adapts to the project, fielding 3 or 7 agents depending on the project's stage of development.

• The more projects done together, the stronger the team becomes.

• Agents can onboard new teammates during a project, automatically reassigning tasks.

Honestly, what will it ultimately evolve into? I don't know, but that's precisely the most exciting part.

Andrej Karpathy

@karpathy

03-10

Three days ago I left autoresearch tuning nanochat for ~2 days on depth=12 model. It found ~20 changes that improved the validation loss. I tested these changes yesterday and all of them were additive and transferred to larger (depth=24) models. Stacking up all of these changes,

Detailed experimental data can be found in this article.

From Twitter
