Can Google's TPUs Shake Nvidia's Footing?

I listened to an interview with a Google TPU engineer on Silicon Valley 101 while working out this weekend, and it was quite interesting.

After listening, I gained some new insights and reflections. I've summarized the core points of the original interview and recorded my own thoughts.

1️⃣ Conclusions from the podcast

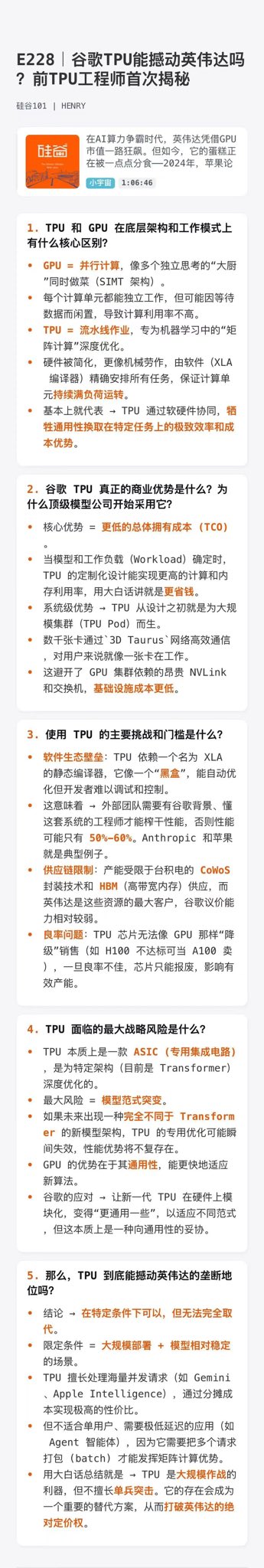

(A more detailed AI text summary is also available; see Figure 2 for a longer version.)

1) Gemini has a counterintuitive characteristic: the more people use it, the faster it gets.

This follows from the TPU architecture. Parallel computation combined with cache reuse is most efficient when the hardware is fully utilized.
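One way to picture the utilization argument is a toy batching model, sketched below. It assumes each processing step pays a fixed cost once (for example, loading model weights) plus a small cost per request, so the fixed cost is shared across everyone in the batch. The function names and the numbers `fixed_ms=20.0` and `per_request_ms=0.5` are made up for illustration; they are not measurements of TPUs or Gemini, and the model describes accelerator time per request rather than the latency an individual user sees.

```python
def step_time_ms(batch_size, fixed_ms=20.0, per_request_ms=0.5):
    """Time for one batched step: a fixed cost paid once per step
    plus a small cost for every request in the batch (hypothetical values)."""
    return fixed_ms + per_request_ms * batch_size


def cost_per_request_ms(batch_size, fixed_ms=20.0, per_request_ms=0.5):
    """Accelerator time attributable to each request in the batch."""
    return step_time_ms(batch_size, fixed_ms, per_request_ms) / batch_size


# The per-request cost falls as more requests share the fixed cost.
for batch in (1, 8, 64):
    print(batch, round(cost_per_request_ms(batch), 2))
```

In this sketch the per-request cost keeps falling as the batch grows, which is the sense in which fuller hardware is more efficient hardware. It also hints at the downside: once demand exceeds the available chips, there is nothing left to amortize and requests simply queue.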

Of course, this is a double-edged sword.

Last year, when Gemini 3 was released, an influx of users coming over from GPT caused frequent service outages. The root cause was that TPU production capacity could not keep up: expansion lagged behind user growth.

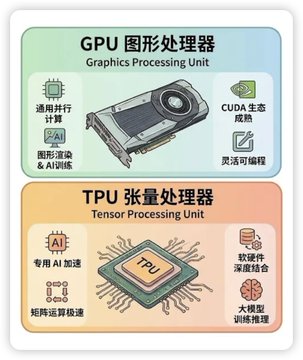

2) Nvidia GPUs vs. Google TPUs: each has its own competitive advantages.

Nvidia's advantage lies in software: the CUDA ecosystem is mature, highly versatile, and hard to displace. The TPU's advantage lies in hardware-software integration: on specific large-model workloads, it outperforms Nvidia's GPUs outright.

Apple has become the largest buyer of TPUs, and Anthropic is also purchasing them in large quantities.

The reason is simple: they don't want to put all their eggs in one basket with Nvidia.

3) TSMC's moat is deeper than imagined.

Nvidia, Google, Apple: all of their chips are made by TSMC. No other company can match TSMC's process technology and yield.

Even more striking, TSMC's production capacity falls well short of demand; the major customers are queuing up and competing for it.

Selling shovels is a sure business, and I remain a firm believer in, and holder of, TSMC.

4) TPUs amount to baking algorithms into hardware, somewhat like the ASICs used for crypto mining.

GPUs are general-purpose; TPUs are custom-designed.

Google's chip team has to place orders with TSMC one to two years in advance, which means the chips being designed now are meant for the AI algorithms of two years from now.

Therefore, Google's AI and chip teams must be deeply intertwined. The algorithm direction they bet on today determines whether their chips will be usable two years from now.

A correct bet is a game-changer; a wrong bet results in a complete waste of time and resources over two years.

This constraint is not something Nvidia faces.

2️⃣ Some personal thoughts

So-called hardware-software integration means that when general-purpose hardware design capability is lacking or too costly, a company simply designs hardware for one particular class of algorithm.

In terms of hardware design capability, such chips cannot compare with general-purpose hardware.

Why doesn't Nvidia develop NPUs/TPUs?

Because doing so would not extend its advantage. Too many manufacturers can build them; if everyone does, the market ends up like the one for mobile phones.

For example 🌰: almost all Android phones have an infrared blaster, i.e., they can act as a universal remote control.

Why doesn't Apple do it?

Is this function difficult? No;

Do customers need it? Yes.

Then why doesn't Apple do it?

I think the form a product takes is not necessarily "entirely determined by demand."

The more elements two sets share, the less distinguishable they become.

Think about our impressions of mobile phones: Apple/non-Apple.

Among Android users, most people don't much care whether the phone comes from Xiaomi, Oppo, Vivo, or Huawei. The products are too homogeneous, so user stickiness is relatively low.

By analogy, consider a calculator and a computer: a computer performs general-purpose computation, while a calculator performs only specific calculations.

A calculator naturally draws less power, but those specific calculations are all it can do.

Compared with Nvidia's GPUs, TPUs have no hardware design moat.

They are simply a way to cut costs.

At the same time, very few companies make huge profits by reducing costs; only those that propose new concepts and open up new directions can take off, and these are also easier to hype up.

Today's neural network workloads are mainly tensor calculations, which is why a specialized chip can cover them, but competing on cost is a last resort for Nvidia.

Therefore, we can't look at the claimed advantages of TPUs in isolation.
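To make "mainly tensor calculations" concrete, here is a minimal sketch of one fully connected neural network layer written in plain Python. Almost all of the work is a matrix multiply, which is the operation a tensor-specialized chip is built around. The helper names and the toy values are hypothetical and chosen only for illustration; real models use far larger matrices and optimized libraries.

```python
def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def dense_layer(inputs, weights, bias):
    """One fully connected layer: ReLU(inputs @ weights + bias)."""
    pre_activation = matmul(inputs, weights)
    return [[max(0.0, v + b) for v, b in zip(row, bias)] for row in pre_activation]


# Hypothetical toy values: a batch of 2 inputs with 3 features, and 2 output units.
inputs = [[1.0, 2.0, 3.0], [0.0, -1.0, 1.0]]
weights = [[1.0, 0.0], [0.0, 1.0], [1.0, -1.0]]
bias = [0.5, 0.0]

print(dense_layer(inputs, weights, bias))
```

Stacking many such layers gives a workload dominated by the same multiply-and-accumulate pattern, which is what makes it feasible to build a chip around that one pattern.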

From Google's perspective, developing TPUs is the best choice.

From Nvidia's perspective, however, a cost war is a last resort, because Nvidia already holds a first-mover advantage, the CUDA ecosystem, and top-tier GPU design capability.

Couldn't Google make GPUs?

Of course it could.

If Moore Threads can do it, how could Google not? With a little more money, it could poach the talent, right?

But the upfront investment in chips is enormous. If Google started making GPUs and nobody used them, it could not even recoup its costs.

At the same scale, TPUs cost Google less to make than GPUs would, and it can bundle them with its own Gemini, which is the better strategy.

From Twitter

Disclaimer: The content above is only the author's opinion which does not represent any position of Followin, and is not intended as, and shall not be understood or construed as, investment advice from Followin.
