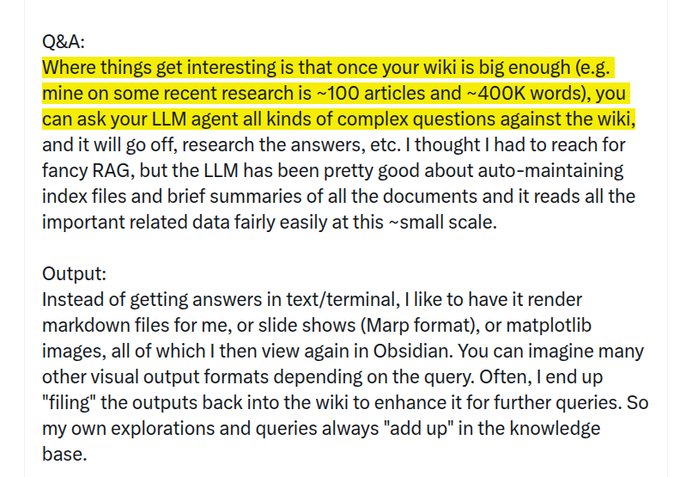

Karpathy's setup maintains a 400K-word research knowledge base that an LLM can query without RAG. The workflow: dump source material into a `raw/` directory; have an LLM compile it into linked Markdown, adding summaries, concept pages, and backlinks; browse the result in Obsidian; ask the LLM questions against the wiki and have it produce notes, slides, or charts; file those outputs back into the wiki; and run checks for gaps and errors.
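Part of the "run checks for gaps and errors" step is mechanical and needs no LLM. The sketch below assumes the wiki is a folder of Markdown files that use Obsidian-style `[[wikilinks]]`, with each page named after its file stem. `load_wiki` and `link_report` are hypothetical helpers for illustration, not part of Karpathy's setup or any Obsidian API. The script builds a backlink index and flags two kinds of problems: links that point to pages that don't exist, and orphan pages that nothing links to.

```python
import re
from collections import defaultdict
from pathlib import Path

# Obsidian-style wikilink: [[Target]], [[Target|alias]] or [[Target#Heading]].
# Group 1 captures only the target page name.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def load_wiki(root: str) -> dict[str, str]:
    """Hypothetical layout: one .md file per page, page name = file stem."""
    return {p.stem: p.read_text(encoding="utf-8") for p in Path(root).rglob("*.md")}


def link_report(pages: dict[str, str]) -> dict:
    """Build a backlink index and flag broken links and orphan pages."""
    backlinks: dict[str, set[str]] = defaultdict(set)
    broken: list[tuple[str, str]] = []
    for name, text in pages.items():
        for match in WIKILINK.finditer(text):
            target = match.group(1).strip()
            if target == name:
                continue  # ignore self-links
            if target in pages:
                backlinks[target].add(name)
            else:
                broken.append((name, target))  # link to a page that doesn't exist yet
    # .get() on a defaultdict does not create entries, so this is a pure lookup.
    orphans = sorted(n for n in pages if not backlinks.get(n))
    return {
        "backlinks": {k: sorted(v) for k, v in sorted(backlinks.items())},
        "broken": sorted(broken),
        "orphans": orphans,
    }


if __name__ == "__main__":
    demo = {
        "Transformers": "Built on [[Attention]] and [[Tokenization|tokens]].",
        "Attention": "See [[Transformers#Architecture]].",
        "Scaling laws": "No links yet.",
    }
    print(link_report(demo))
```

In the demo, `Tokenization` is reported as a broken link and `Scaling laws` as an orphan. A report like this could be handed back to the LLM as a to-do list of missing concept pages or unlinked notes.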

Andrej Karpathy

@karpathy

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating

From Twitter

Disclaimer: The content above is only the author's opinion which does not represent any position of Followin, and is not intended as, and shall not be understood or construed as, investment advice from Followin.
