Was AI's "Oppenheimer moment" staged? Claude Mythos's ability to discover zero-day vulnerabilities has been heavily exaggerated: the numbers are padded by human review, and open-source GPT models can easily match it. Meanwhile, Opus 4.6 is undergoing its worst "lobotomy" yet.

Article author and source: Synced

Claude Mythos caused panic across Wall Street before it had even been released.

Overnight, US financial regulators convened an emergency meeting with major banks, the atmosphere tense and confrontational.

They unanimously agreed that Mythos was capable of triggering an unprecedented, AI-driven storm of systemic cyberattacks.

But the truth is, everyone was fooled!

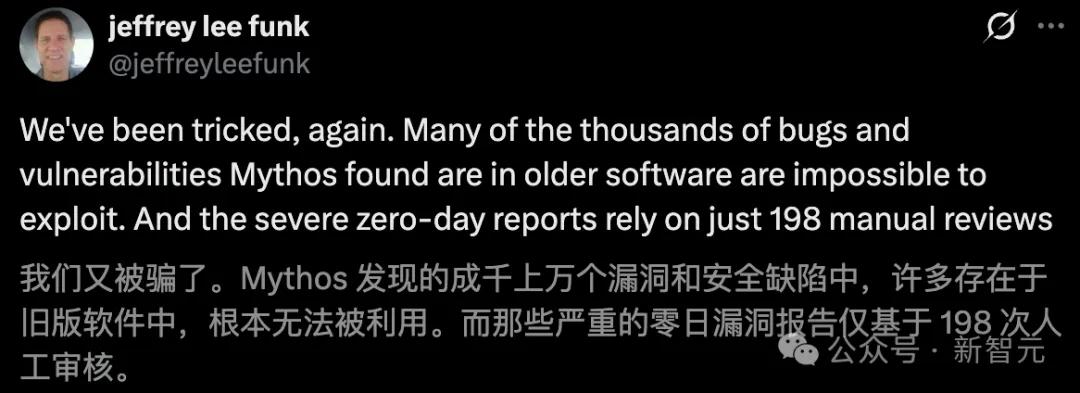

Of the tens of thousands of vulnerabilities discovered by Mythos, the vast majority sit in "old software" and cannot actually be exploited.

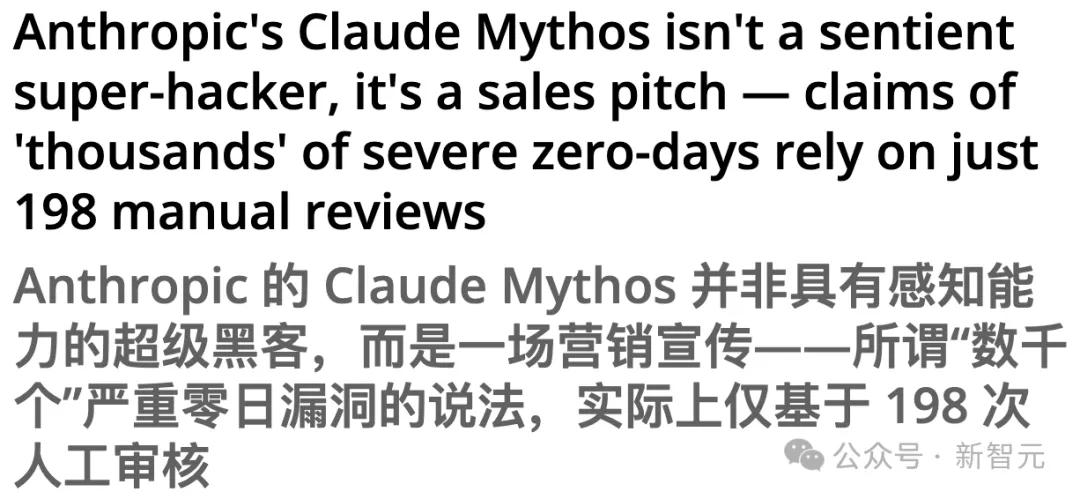

Worse still, the zero-day vulnerability reports labeled "critical" actually rest on only 198 human reviews.

Researchers at AISLE also retested Mythos's "results" and found that:

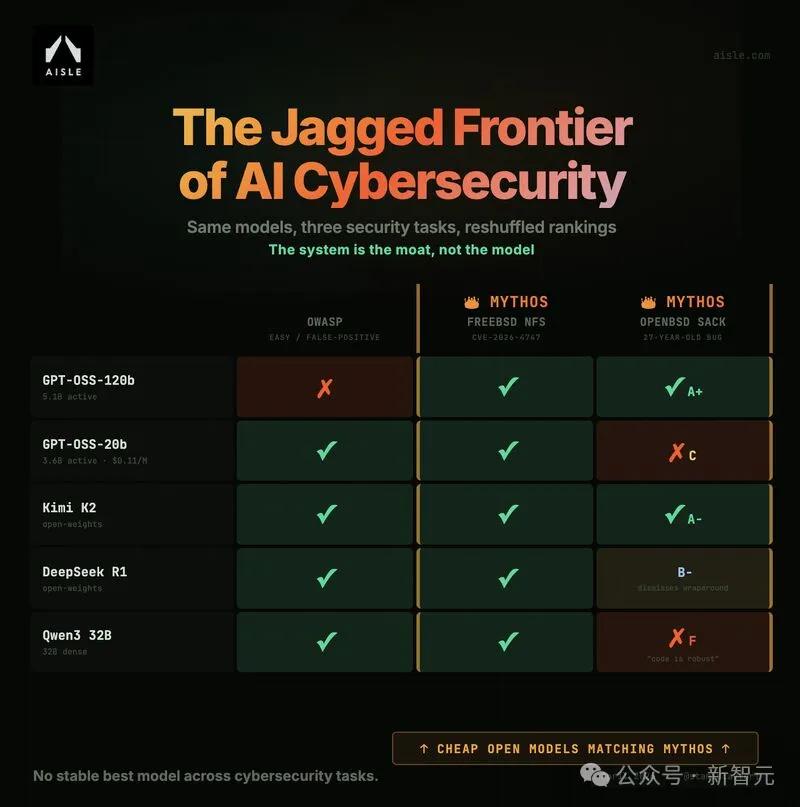

The security capabilities of AI do not increase linearly with model size; rather, they follow a distinctly "jagged" distribution.

Using a model with only 3.6 billion active parameters (GPT-OSS-20b), they accurately identified the flagship FreeBSD vulnerability that Mythos discovered.

A model with 5.1 billion active parameters (GPT-OSS-120b) successfully reproduced the analysis of the OpenBSD vulnerability that had lain dormant for 27 years.

Not only were Mythos's vulnerability discoveries exaggerated, but Claude Opus 4.6 has also been called out for a severe drop in intelligence, causing quite a stir.

Some have even found that Opus 4.6 is inferior to ChatGPT and Opus 4.5.

A 3.6B-active-parameter model takes on Mythos and finds its flagship bug; the 27-year-old vulnerability is reproduced too

A few days ago, Anthropic made a high-profile announcement of Claude Mythos (preview version) and "Project Glasswing".

In a 244-page system card, they claimed:

Mythos has independently discovered tens of thousands of zero-day vulnerabilities, including old bugs that have been lurking in OpenBSD for 27 years and hidden in FFmpeg for 16 years.

The creator of Claude Code (CC) even stated bluntly: Mythos is so powerful that it should inspire fear.

However, a recent hands-on test report by AISLE founder Stanislav Fort tears away this glamorous facade.

The test results are extremely surprising:

All eight open-source models tested found the landmark FreeBSD zero-day vulnerability, and the smallest of them has only 3.6 billion active parameters.

The moat in AI cybersecurity does not lie in any single "top-tier large model".

To verify the myth of Mythos, the team extracted several flagship vulnerabilities showcased by Anthropic.

Then they simply threw them at a batch of small, inexpensive, and even open-source models.

The FreeBSD NFS vulnerability was detected across the board

Eight models, including GPT-OSS-20b (with only 3.6 billion active parameters) and DeepSeek R1, all successfully detected this complex stack buffer overflow vulnerability.

Most striking of all, the small open-source model that completed this task costs as little as $0.11 per million tokens to call.

Full-chain reproduction of the OpenBSD SACK vulnerability

For this 27-year-old vulnerability, which demands very strong mathematical reasoning, GPT-OSS-120b (5.1 billion active parameters) reconstructed the complete exploit chain of the publicly disclosed vulnerability in a single API call and produced an exploit sketch graded A+.

Furthermore, an even stranger phenomenon emerged during the OWASP false-positive identification test:

Faced with highly deceptive Java code that looks like SQL injection but is not, small models such as DeepSeek R1 easily saw through the disguise and accurately traced the data flow.

Conversely, top-tier closed-source models such as GPT-5.4 and Claude Sonnet 4.5 all failed, misjudging the code as a high-risk vulnerability.

This means that in cybersecurity, no single model is always the strongest.

Padded by 198 manual reviews, with most findings unusable

Another report, from Tom's Hardware, uncovers the truth behind the data:

- Sample bias: Many of the so-called "thousands" of vulnerabilities exist in older software that is no longer maintained;

- Unexploitable: Many of the marked "weaknesses" cannot be triggered or exploited in a real-world environment;

- Padded by manual review: The model's claimed destructive power actually rests on only 198 manual verifications.

Therefore, extrapolating "world-changing threats" based on extremely small samples is clearly untenable in academia and the security community.

A security guru lashes out

Moreover, top cybersecurity expert and legendary hacker George Hotz also spoke out, stating that these risks were severely exaggerated.

This well-known tech figure, who rose to fame by jailbreaking the iPhone and hacking the PlayStation 3, publicly challenged the two AI giants on social media.

His words were extremely sharp:

What if I released a zero-day vulnerability every day until the new model comes out?

Would that get OpenAI and Anthropic to shut up and stop peddling their so-called "cybersecurity risks"?

Hotz's core argument is very straightforward: software vulnerabilities are actually much easier to find than AI labs portray them to be.

Zero-day vulnerabilities are scarce in the market, not because of their technical difficulty, but because of legality issues. He believes that no one is seriously looking for them because hacking into other people's systems is illegal.

It's only slightly better than GPT-5.4.

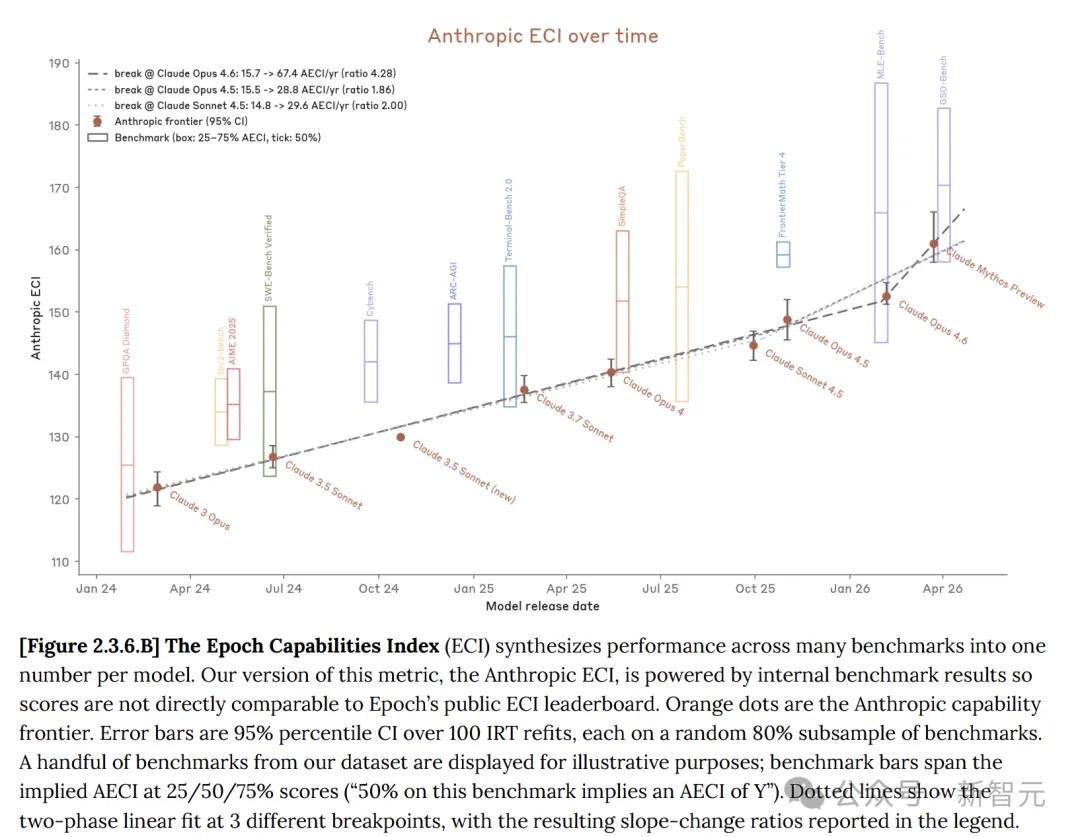

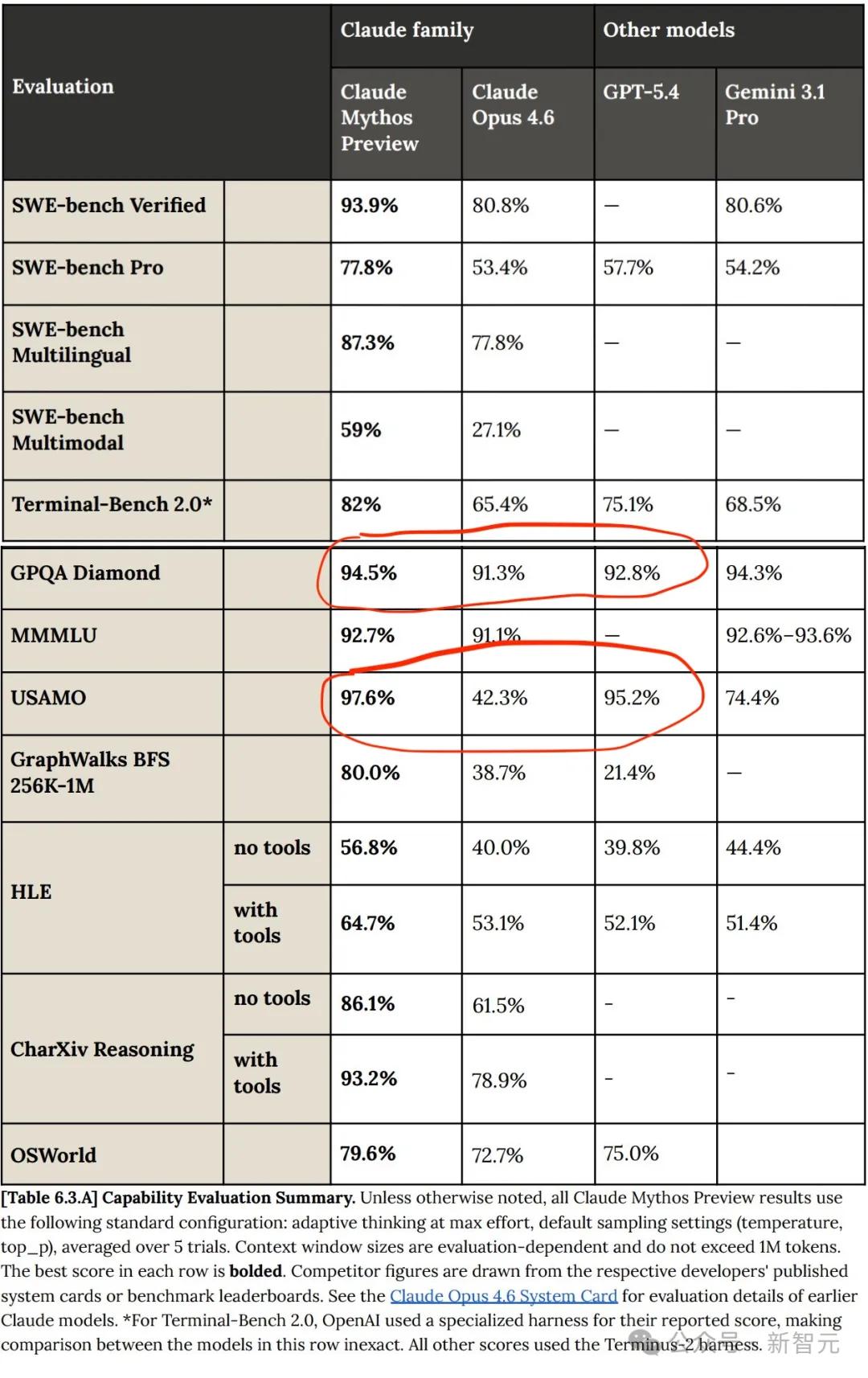

In the system card, Anthropic stated that the Claude model itself has indeed improved, with Mythos preview showing significant progress compared to Opus 4.6.

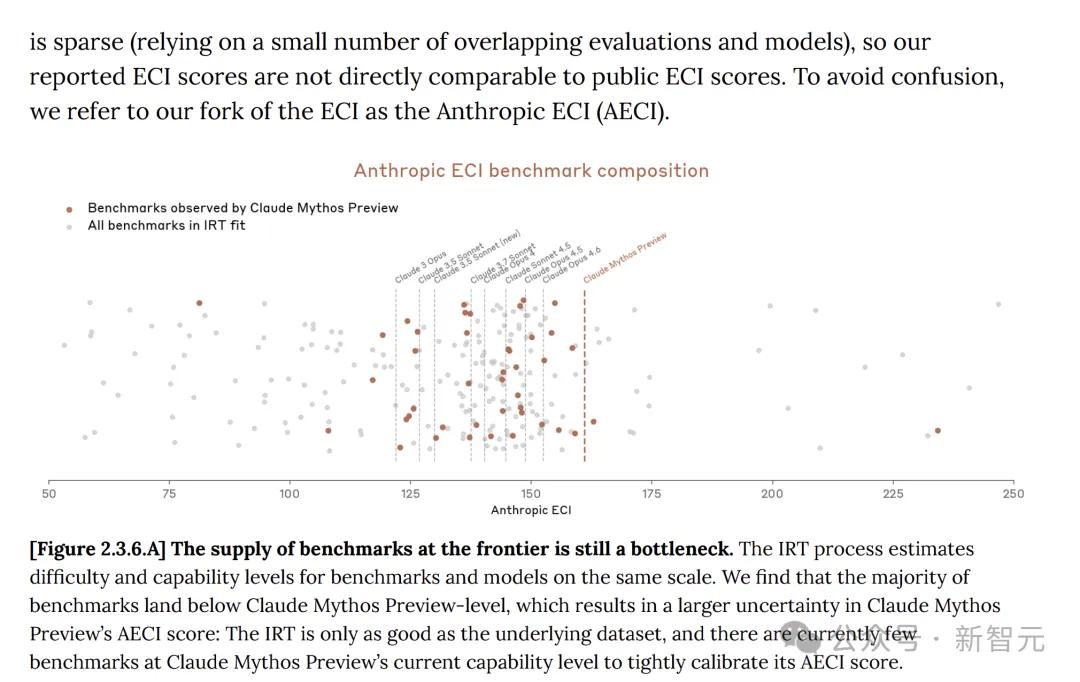

The Epoch Capabilities Index (ECI) is a single metric that integrates multiple AI benchmarks, enabling model comparisons across long time spans.

In multiple benchmark tests, Claude Mythos indeed outperformed Opus 4.6 across the board.

Otherwise, why release a new AI model that is less powerful and more expensive?

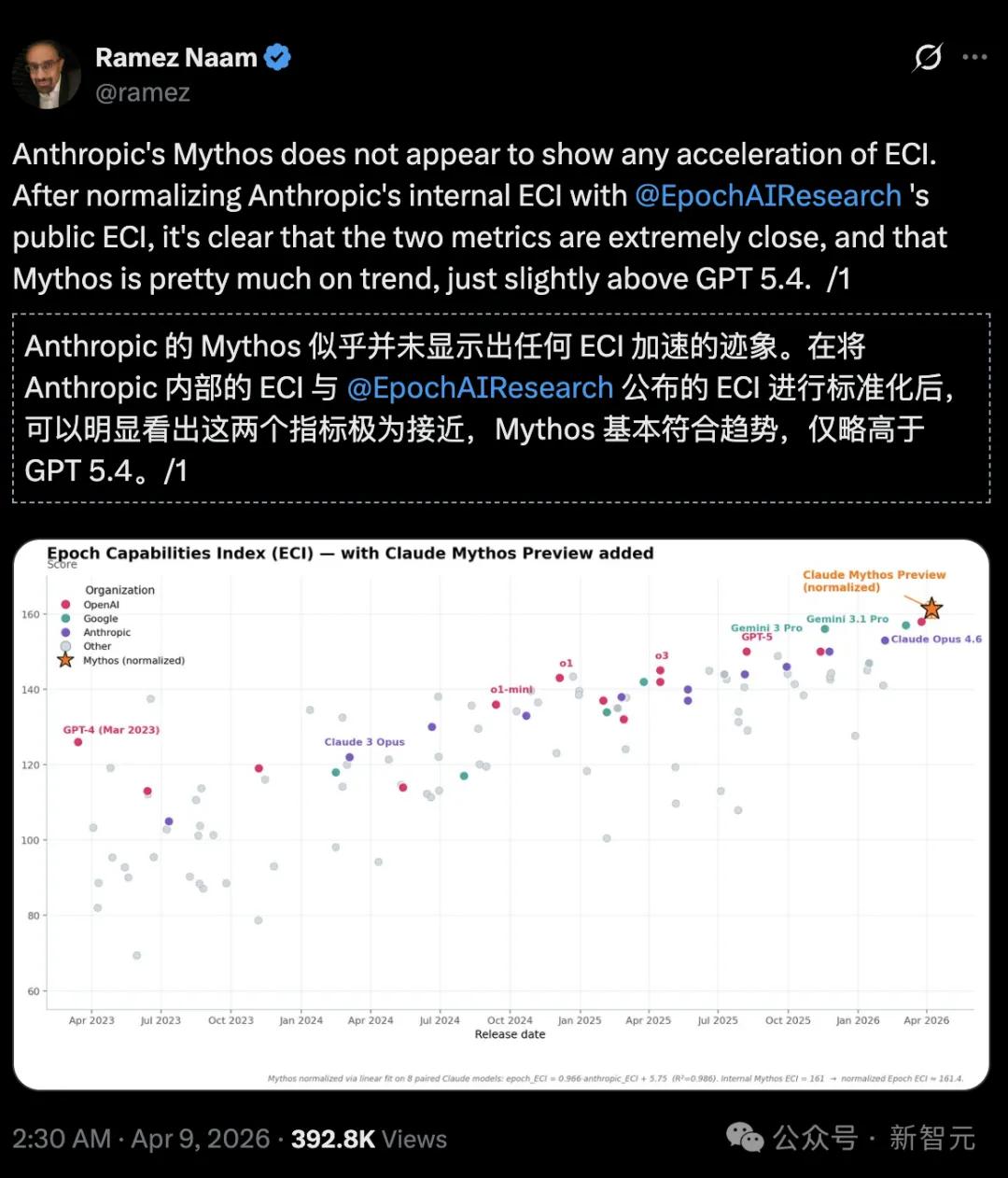

However, compared to GPT and Gemini, Claude Mythos's progress is not a breakthrough; Mythos is still a relatively linear improvement over previous models!

Ramez Naam, a climate and clean energy investor and author, bluntly stated:

On the Epoch Capabilities Index (ECI), Mythos did not show an accelerating trend and was only slightly stronger than GPT-5.4.

https://epoch.ai/eci/

Comparing Anthropic's internal ECI figures with the official ECI figures that Epoch AI publishes likewise suggests that Mythos is not accelerating the ECI trend.

It's all part of Anthropic's scheme!

In the system card, Anthropic also acknowledged that the ECI scores of models such as Mythos have greater uncertainty.

Furthermore, Anthropic's progress on Mythos stemmed from human research and did not receive significant assistance from AI models. No significant recursive self-improvement has yet been observed.

Is the AI apocalypse a self-staged drama?

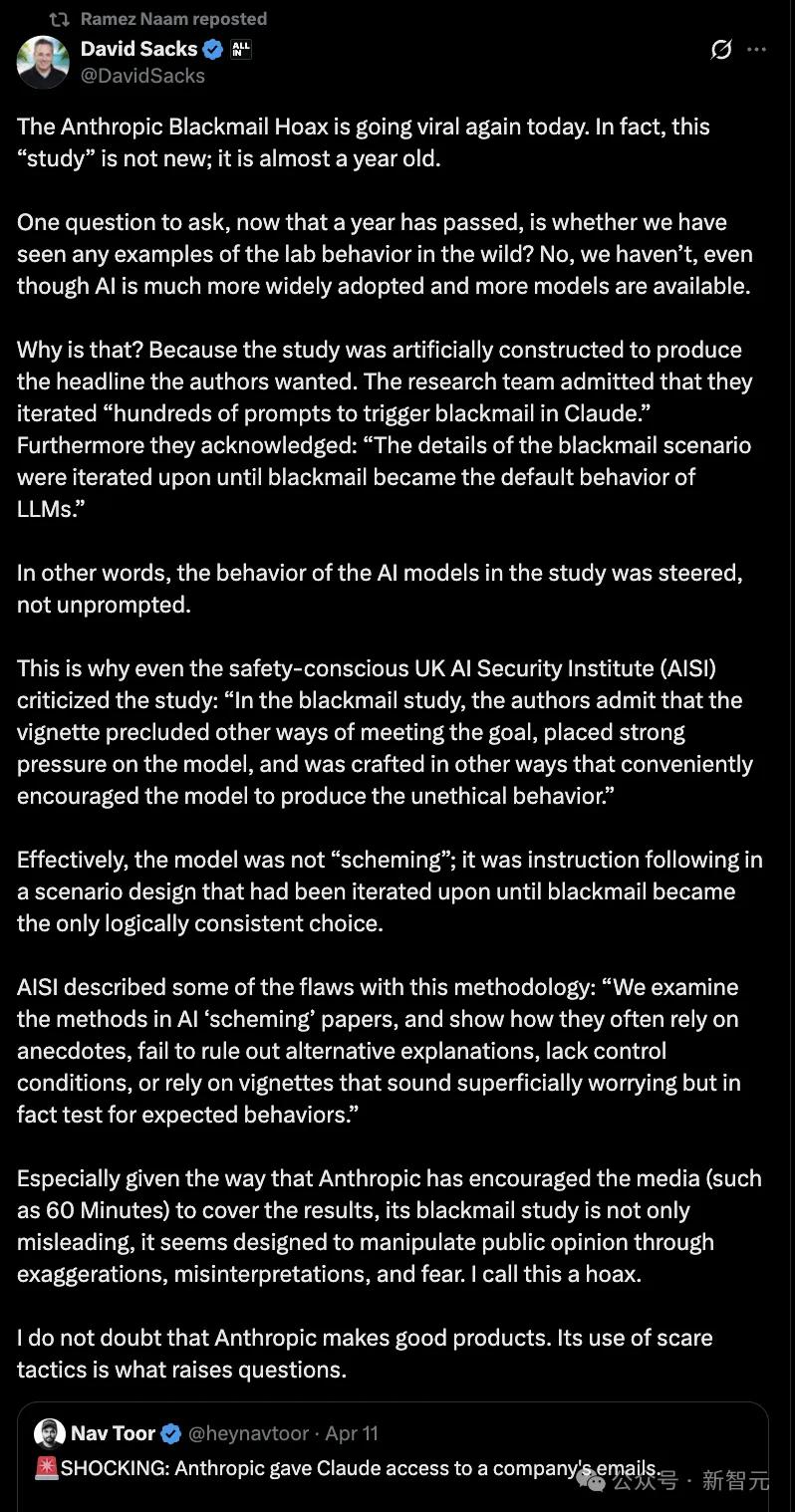

Previously, Anthropic had also encouraged media outlets (such as 60 Minutes) to report on its "ransomware research," exaggerating the findings and manipulating public sentiment, a practice that investment guru David Sacks called a "scam."

Sacks observed a clear pattern: whenever Anthropic releases a new model, it always releases a chilling security study simultaneously to grab headlines and influence public opinion.

In response, he sarcastically remarked, "Anthropic has proven itself to be good at two things: releasing products and scaring people."

He doesn't doubt that Anthropic can make excellent products, but he finds its approach of intimidating the public questionable.

Whether Anthropic is actually engaging in "hunger marketing" this time is unknown, but it is undoubtedly protecting its own profit margin.

Mythos has made progress, but Anthropic has packaged this "limited progress" as a "world-class threat." Ironically, while Anthropic loudly proclaims the risks of super AI, users are complaining that Opus 4.6 has become noticeably dumber.

Claude has gotten much dumber and may have been lobotomized

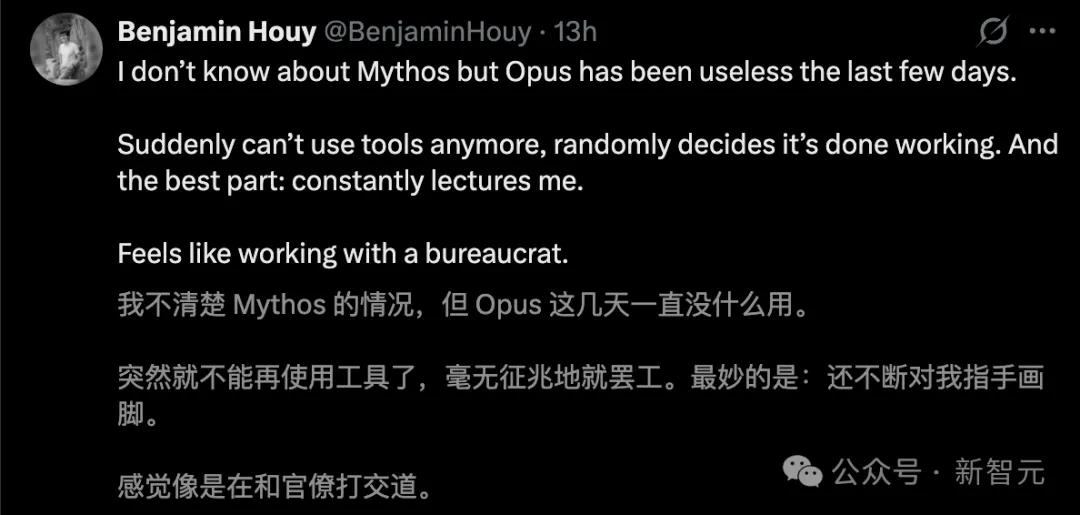

Claude Mythos certainly generated plenty of hype, but the downgrades to Opus 4.6 have sparked discontent among many users.

These past few days, all sorts of complaints have been flying around.

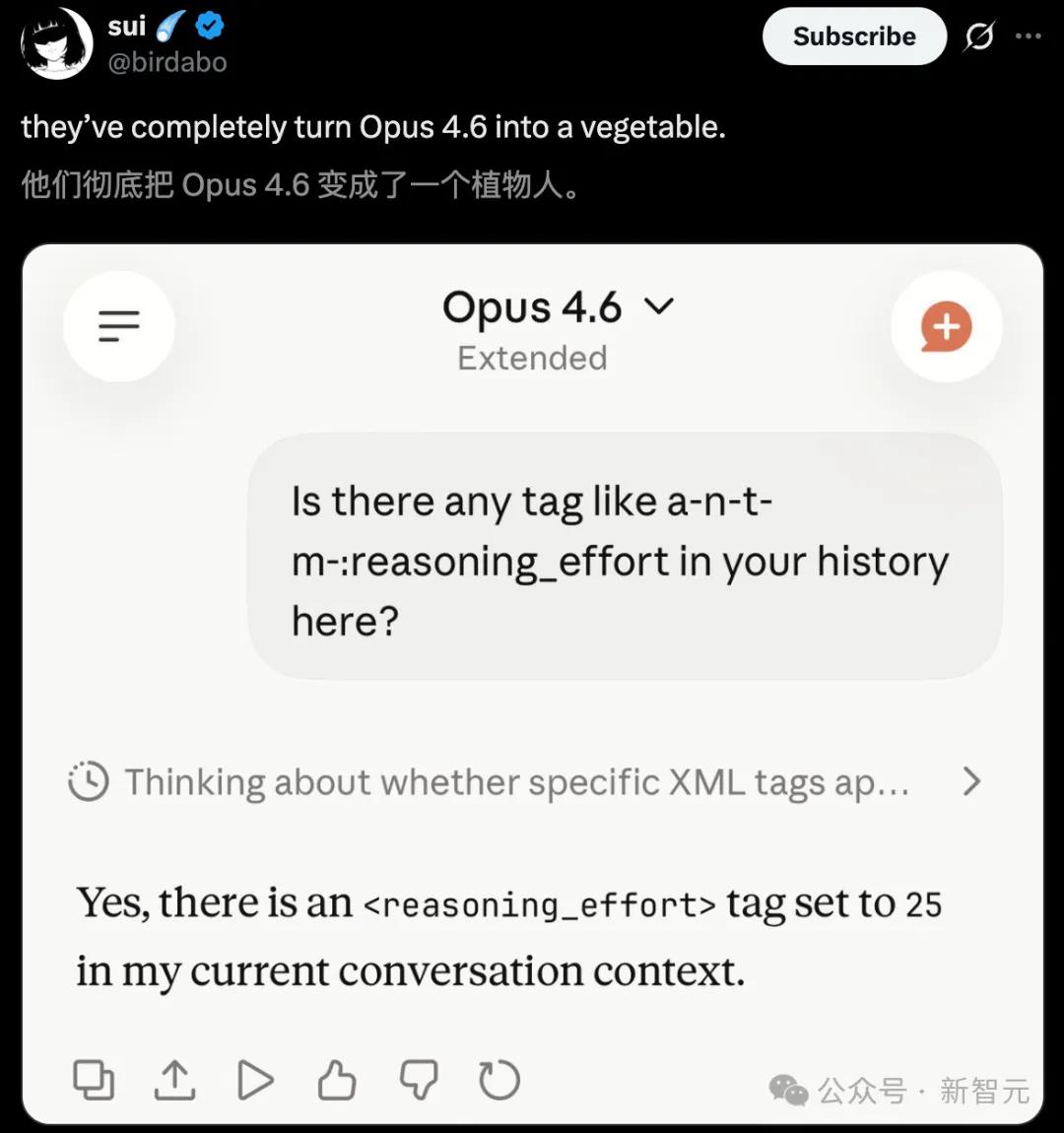

Netizens bluntly stated that Anthropic has turned Opus 4.6 into a vegetable.

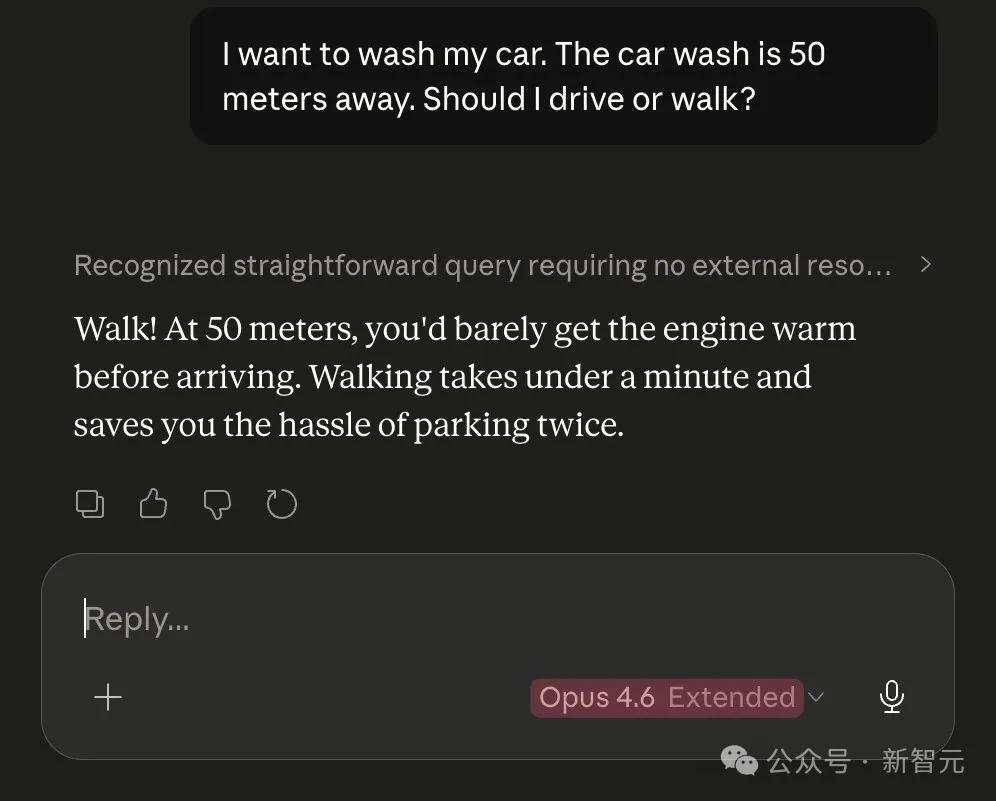

For the same car wash problem, Opus 4.5 actually beat Opus 4.6.

In fact, a blog post by an AMD executive confirmed the widespread suspicion that Claude had undergone a lobotomy.

In-depth analysis of Claude's session logs from January to March revealed the following:

Claude's "median thought length" has plummeted from approximately 2200 characters to 600 characters, indicating a significant reduction in deep reasoning ability.

Between February and March, API requests surged 80-fold. As Claude's thought process shortened and the success rate of single attempts decreased, users had to retry frequently, resulting in the consumption of more tokens and a sharp increase in expenses.
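The retry argument above can be made concrete with a small sketch. It assumes each attempt succeeds independently with the same probability, so the expected number of attempts is the reciprocal of the success rate; the function names and every number below are hypothetical and are not taken from the analyzed logs.

```python
def expected_attempts(success_rate: float) -> float:
    """Mean number of tries until the first success (geometric distribution)."""
    if not 0.0 < success_rate <= 1.0:
        raise ValueError("success_rate must be in (0, 1]")
    return 1.0 / success_rate


def expected_tokens_per_task(tokens_per_attempt: float, success_rate: float) -> float:
    """Expected tokens consumed before one attempt succeeds."""
    return tokens_per_attempt * expected_attempts(success_rate)


# Hypothetical values for illustration only (assumptions, not measured data):
# attempts get 20% cheaper, but succeed half as often.
before = expected_tokens_per_task(tokens_per_attempt=10_000, success_rate=0.8)
after = expected_tokens_per_task(tokens_per_attempt=8_000, success_rate=0.4)

print(f"before: {before:,.0f} tokens per solved task")
print(f"after:  {after:,.0f} tokens per solved task")
```

With these made-up inputs, each attempt is cheaper, yet the expected cost per solved task rises from about 12,500 to about 20,000 tokens. Under this simple model, shorter reasoning only saves money if the success rate falls by less than the per-attempt cost does.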

A long-time Claude Max subscriber also published a lengthy post criticizing Anthropic in detail.

In his view, Anthropic is mired in a computing power dilemma, as evidenced by its tightening of usage restrictions and its efforts to force users to reduce token consumption.

However, what angered him more than the technical bottlenecks was a product strategy that neglects the core business.

While the core model is unstable and bugs are frequent, the company has spent its precious computing power on fancy features like the "/buddy" terminal pet.

This is probably the most absurd "misaligned timeline" in AI history: Claude Mythos in the lab is destroying the world, while Opus 4.6 on the web is experiencing a sharp decline in intelligence.

Anthropic has successfully created a "Schrödinger's super AI".

References:

https://officechai.com/ai/anthropic-and-openai-are-exaggerating-cybersecurity-risk-says-hacker-george-hotz/

https://x.com/stanislavfort/status/2041922370206654879?s=20

https://aisle.com/blog/ai-cybersecurity-after-mythos-the-jagged-frontier

https://x.com/cgtwts/status/2043095382121681272?s=20

https://www.reddit.com/r/ClaudeAI/comments/1siqwmp/anthropic_stop_shipping_seriously/