Original title: Marc Andreessen introspects on Death of the Browser, Pi + OpenClaw, and Why "This Time Is Different"

Original translation: FuturePulse

Source: This is a recent interview with Marc Andreessen, co-founder of a16z, on the Latent Space podcast. He is a renowned American internet entrepreneur and a key figure in the early development of the internet; after co-founding a16z, he became one of Silicon Valley's leading investors. The entire conversation revolves around the history and latest trends of AI development and is well worth reading.

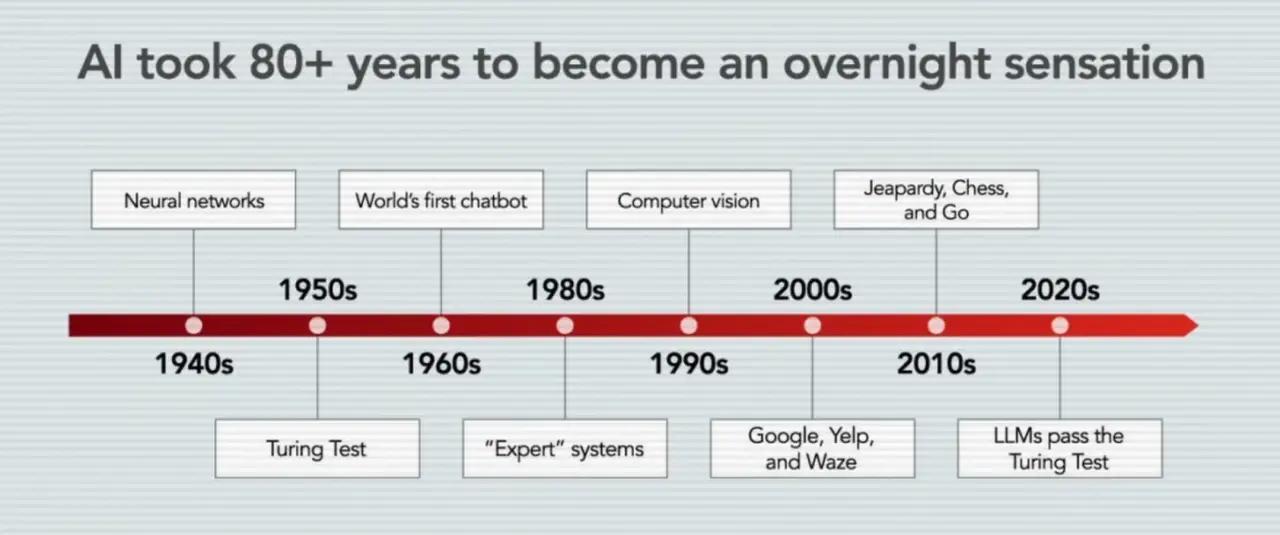

First, this wave of AI is not a sudden emergence, but the point at which an 80-year technological marathon finally began to work at full scale.

Marc Andreessen calls the current situation an "80-year overnight success": what looks like a sudden explosion to the public is actually the concentrated release of decades of accumulated technology, not something that appeared out of thin air.

He traces this line of technology back to early neural network research and emphasizes that the industry has now accepted the judgment that "neural networks are the correct architecture."

In his narrative, there is no single decisive moment but a series of advances stacked on one another: AlexNet, the Transformer, ChatGPT, reasoning models, and then agents and self-improvement.

He emphasized that this time it is not just text generation that has improved; four capabilities have arrived at once: LLMs, reasoning, coding, and agents/recursive self-improvement.

He believes "this time is different" not because the narrative is more engaging, but because these abilities have begun to work in real-world tasks.

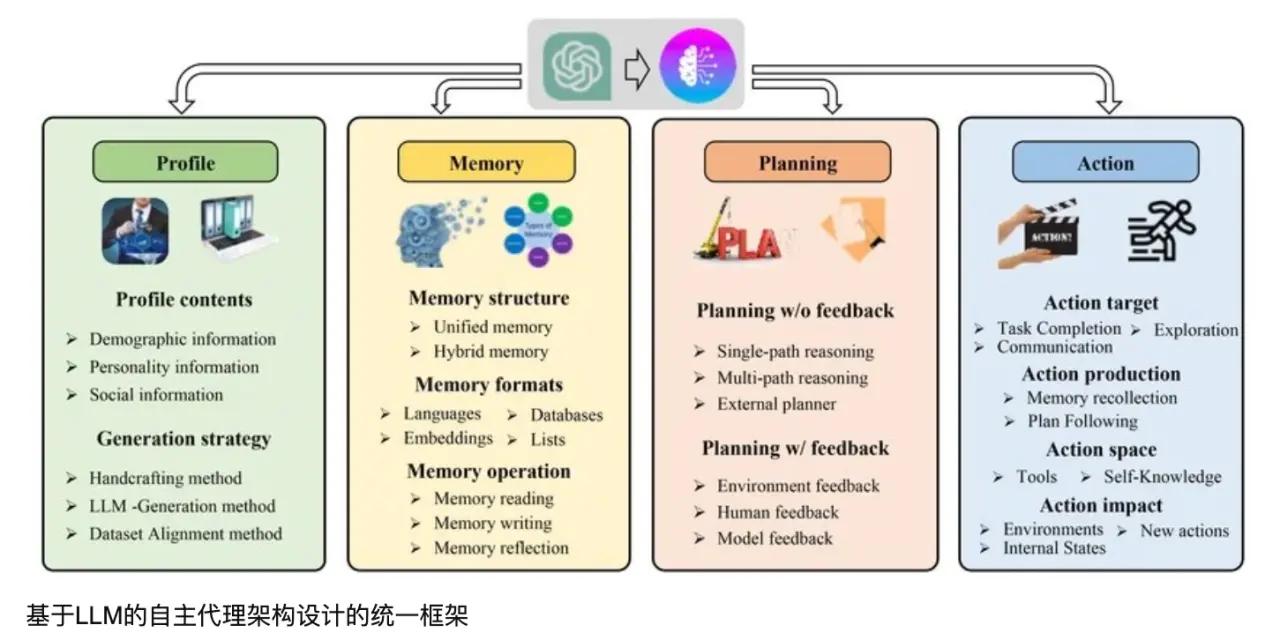

Second, the agent architecture exemplified by Pi and OpenClaw marks a deeper shift in software architecture than chatbots did.

He described the agent very specifically: essentially it is "LLM + shell + file system + markdown + cron/loop". In this structure, the LLM is the core of inference and generation, the shell provides the execution environment, the file system stores the state, markdown makes the state readable, and cron/loop provides periodic wake-up and task advancement.
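The structure described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration and not something taken from Pi or OpenClaw: the model is replaced by a hypothetical offline stub (`fake_llm`), the state is a markdown checklist, and the names `tick` and `run_agent` are invented here. A plain loop stands in for cron.

```python
import subprocess
from pathlib import Path


def fake_llm(state_markdown: str) -> str:
    """Hypothetical stand-in for a model call: returns the next shell command.

    A real agent would send the markdown state to an LLM. Here we simply pick
    the first unchecked task so the sketch runs offline.
    """
    for line in state_markdown.splitlines():
        if line.startswith("- [ ] "):
            return line[len("- [ ] "):]
    return ""  # nothing left to do


def tick(state_file: Path) -> bool:
    """One wake-up: read state, ask the model, run the command, write state back."""
    state = state_file.read_text()
    command = fake_llm(state)
    if not command:
        return False
    # The shell is the execution environment.
    result = subprocess.run(command, shell=True, capture_output=True, text=True)
    # Mark the task done and append the output so the state stays human-readable.
    state = state.replace(f"- [ ] {command}", f"- [x] {command}", 1)
    state += f"\n> `{command}` -> {result.stdout.strip()}\n"
    state_file.write_text(state)
    return True


def run_agent(state_file: Path, max_ticks: int = 10) -> int:
    """Loop standing in for cron: keep waking the agent until no tasks remain."""
    ticks = 0
    while ticks < max_ticks and tick(state_file):
        ticks += 1
    return ticks
```

Given a file containing lines such as `- [ ] echo hello`, calling `run_agent(Path("state.md"))` works through the checklist and leaves a readable log in the same file. Running model-chosen commands with `shell=True` is only acceptable in a toy like this; a real agent would need sandboxing.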

He believes the importance of this combination lies in the fact that, apart from the model itself being new, all other components are mature, understandable, and reusable parts of the software world.

The agent's state is stored in a file, so it can be migrated across models and runtimes; the underlying model can be replaced, but the memory and state are still retained.
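To make the portability claim concrete, here is a small sketch under stated assumptions: `model_a` and `model_b` are hypothetical stub functions standing in for two different underlying models, and the `Agent` class and its markdown bullet memory format are invented for this example. The point it illustrates is that the memory lives in the file, not in the model.

```python
from pathlib import Path
from typing import Callable, List

# A "model" here is any function from (prompt, memory) to a reply (assumption).
Model = Callable[[str, List[str]], str]


def model_a(prompt: str, memory: List[str]) -> str:
    return f"A:{prompt} (knows {len(memory)} notes)"


def model_b(prompt: str, memory: List[str]) -> str:
    return f"B:{prompt} (knows {len(memory)} notes)"


class Agent:
    """Agent whose memory is a markdown bullet list on disk."""

    def __init__(self, memory_file: Path, model: Model) -> None:
        self.memory_file = memory_file
        self.model = model
        self.memory_file.touch(exist_ok=True)

    def recall(self) -> List[str]:
        """Read every remembered note back from the file."""
        lines = self.memory_file.read_text().splitlines()
        return [line[2:] for line in lines if line.startswith("- ")]

    def step(self, prompt: str) -> str:
        """Answer using the current memory, then append the prompt to it."""
        reply = self.model(prompt, self.recall())
        with self.memory_file.open("a") as f:
            f.write(f"- {prompt}\n")
        return reply
```

Creating `Agent(path, model_a)`, taking a step, and then creating `Agent(path, model_b)` on the same path shows the second model picking up the notes left by the first.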

He repeatedly emphasized introspection: the agent knows its own files, can read its own state, and can even rewrite its own files and functions, moving towards "extending itself".

In his view, the real breakthrough is not just that "the model can answer," but that the agent can leverage the existing Unix toolchain to access the full potential of the computer.

Third, the era of browsers, traditional GUIs, and "manually clicking software" will be gradually replaced by agent-first interaction methods.

Marc Andreessen has explicitly stated that in the future, "you may no longer need a user interface."

He further pointed out that in the future, the main users of software may not be people, but "other bots".

This means that many interfaces designed today for human clicking, browsing, and form filling will recede into an execution layer that agents call behind the scenes.

In this world, people act more as goal-setters: they tell the system what they want, and the agent then calls services, operates software, and completes processes.

He connects this change to the bigger picture of the future of software: high-quality software will become increasingly "abundant," no longer a rare commodity handcrafted by a few engineers.

He also predicted that the importance of programming languages would decline: models would write programs in any language and translate between them, and humans would care more about understanding why the AI organized its code in a certain way than about any particular language.

He even mentioned a more radical direction: conceptually, AI may not only output source code, but may also directly output lower-level binary code or model weights.

Fourth, this AI investment cycle is similar to the dot-com bubble of 2000, but the underlying supply and demand structures are different.

Reflecting on 2000, he emphasized that the collapse was due less to "the internet failing" than to the overbuilding of telecommunications and bandwidth infrastructure: fiber optic cables and data centers were built ahead of demand, followed by a long period of digestion.

He believes that concerns about "over-construction" are indeed evident today, but the current investors are mainly large, cash-rich companies like Microsoft, Amazon, and Google, rather than highly leveraged and vulnerable players.

He specifically pointed out that GPUs put into operation today can usually be converted into revenue quickly, unlike the large amount of capacity that sat idle in 2000.

He also emphasized that what we are using now is actually a "sandbagged" version of the technology: because of insufficient supply of GPUs, memory, data centers, etc., the potential of the model has not been fully realized.

In his assessment, the real constraints in the next few years will not only be GPUs, but also the bottlenecks in the interaction between CPUs, memory, networks, and the entire chip ecosystem.

He juxtaposes AI scaling laws with Moore's Law, arguing that they not only describe patterns but also continuously inspire collaborative progress in capital, engineering, and industry.

He mentioned a very unusual but important phenomenon: as software optimization speeds up, some older generation chips may even be more economically valuable than when they were first purchased.

Fifth, open source, edge inference, and local execution are not peripheral elements, but rather an integral part of the AI competitive landscape.

Marc Andreessen clearly believes that open source is very important, not just because it is free, but because it "allows the whole world to learn how it is done."

He described open-source releases like DeepSeek as a "gift to the world" because code plus a paper can rapidly spread knowledge and raise the bar for the entire industry.

In his narrative, open source is not just a technological choice, but may also be a geopolitical and market strategy: different countries and companies will adopt different open strategies based on their own business constraints and influence goals.

He also emphasized the importance of edge inference: centralized inference costs may not be low enough in the coming years, and many consumer applications cannot afford the long-term high costs of cloud inference.

He mentioned a recurring pattern: models that seem "impossible to run on a PC" today often actually run on a local machine a few months later.

Besides cost, factors such as trust, privacy, latency, and use cases also drive local operation: wearable devices, door locks, and personal devices are more suitable for low-latency, local inference.

His judgment was very straightforward: almost anything with a chip will likely have an AI model in the future.

Sixth, the real challenge of AI lies not only in model capabilities, but also in security, identity, financial transactions, and organizational and institutional obstacles.

On security, his assessment was very sharp: almost all potential security bugs would be easier to discover, and a "computer security catastrophe" could occur in the short term.

However, he also believes that programming bots can scale up the ability to patch vulnerabilities; in the future, the way to "protect software" may be to let bots scan and fix it.

Regarding the issue of identity, he believes that "proof of bots" is not feasible because bots will become increasingly powerful; the truly feasible direction is "proof of human," which is a combination of biometrics, cryptographic verification, and selective disclosure.

He also addressed an often-overlooked issue: if agents are to actually operate in the real world, they will ultimately need money, the ability to pay, and even some form of bank account, card, or stablecoin-like infrastructure.

At the organizational level, he borrowed the framework of managerial capitalism, arguing that AI may re-empower founder-led companies because bots excel at reporting, coordination, paperwork, and a great deal of "management work."

However, he does not believe that society will quickly and smoothly accept AI: he cites examples such as professional licenses, unions, dockworkers' strikes, government departments, K-12 education, and healthcare to illustrate how many institutional brakes exist in the real world.

His assessment is that AI utopians and doomsayers alike tend to overlook one point: just because the technology is possible does not mean that 8 billion people will immediately change how they behave.