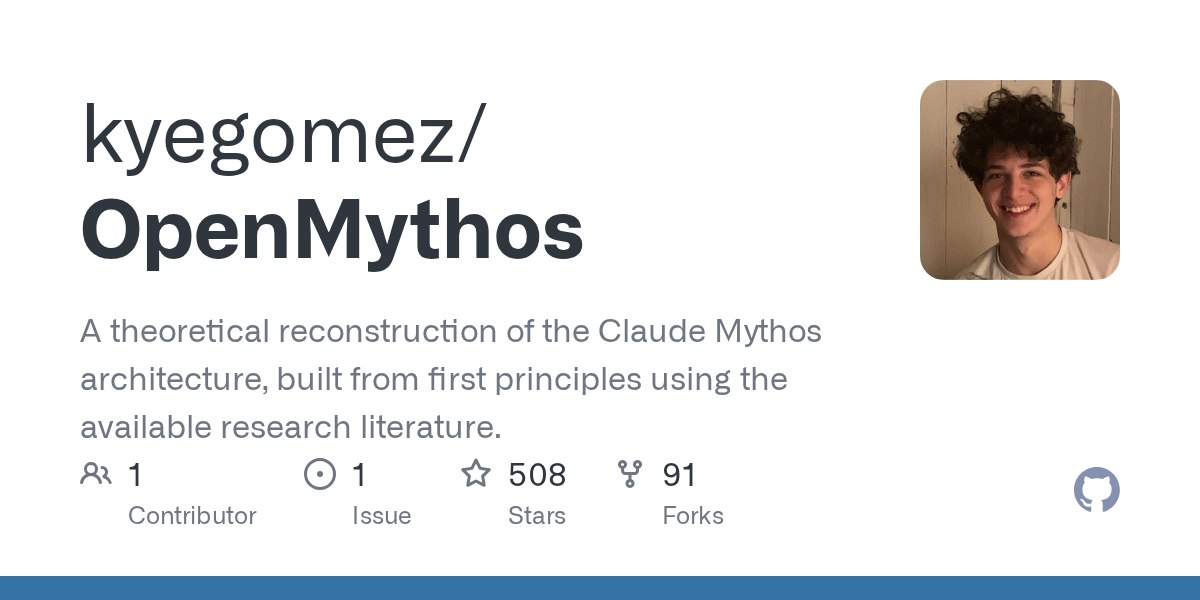

OpenMythos: An Open-Source Attempt to Reverse-Engineer Claude's Internal Structure from Public Papers

→ This is a theoretical reconstruction project that reassembles the Claude "Mythos" architecture from scratch using only publicly available research literature.
→ The core hypothesis is that Mythos is a Recurrent-Depth Transformer (Looped Transformer) that runs the same layers multiple times.
→ Unlike Chain-of-Thought, which emits intermediate tokens, the iterative reasoning happens silently in latent space within a single forward pass.
→ The author explains that depth is handled by looping, while breadth across domains is handled by MoE (Mixture of Experts).
→ Alongside the PyTorch implementation, the project also organizes supporting ideas such as stability proofs, scaling laws, and loop index embeddings.

**How It Differs from Existing Transformers**

Existing Transformers gain depth by stacking many distinct layers in series. The Looped Transformer reconstructed by OpenMythos instead divides the model into three blocks. The flow is Prelude (input encoding) → Recurrent Block (iterative execution) → Coda (output cleanup), and the middle Recurrent Block is run multiple times with the same weights. This structure allows deeper reasoning on harder problems by increasing the number of loops.

**Key Update Rule**

In every loop, the hidden state is updated with the formula h_{t+1} = A·h_t + B·e + Transformer(h_t, e), where h_t is the hidden state at loop t and e is the encoded original input. The important point is that e is re-injected in every loop. Without this, the original signal would fade as the number of iterations grows; input injection prevents that.

**Why Mythos Is Presumed to Have This Structure**

The author presents four reasons.

First, the Looped Transformer exhibits systematic generalization: it handles combinations never seen during training.

Second, it shows depth extrapolation: even when trained only on 5-hop reasoning, increasing the number of loops at inference time allows the model to solve 10-hop problems.

Third, each loop corresponds to a single CoT step in continuous latent space, which was formally proven in the paper by Saunshi et al. (2025).

Fourth, running k layers L times yields quality similar to a kL-layer model, so depth can be gained without a parameter explosion.

**Note**

This repository is strictly a theoretical reconstruction based on public literature, and it has not been verified whether Anthropic actually built Mythos with this structure. The repository is released under the MIT license and includes PyTorch example code and API documentation. Running it requires selecting an attention type (mla or gqa) and configuring MythosConfig.

#LoopedTransformer #ClaudeMythos #MoE #AIArchitecture #OpenSource
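The update rule described above, h_{t+1} = A·h_t + B·e + Transformer(h_t, e), can be illustrated with a minimal toy sketch. This is not the OpenMythos code: it uses plain Python lists instead of PyTorch tensors, the class name `ToyLoopedModel` and its fields `a`, `b`, and `scale` are hypothetical, the scalars `a` and `b` stand in for the matrices A and B, a small `tanh` mixing function stands in for the Transformer block, and starting the hidden state at e is an assumption. It only shows the Prelude → Recurrent Block → Coda flow, with the same block reused in every loop and the input re-injected each time.

```python
import math
from dataclasses import dataclass


@dataclass
class ToyLoopedModel:
    """Toy recurrent-depth model on lists of floats (illustrative only)."""
    a: float = 0.5      # scalar stand-in for A (assumed |a| < 1 for stability)
    b: float = 0.5      # scalar stand-in for B (strength of input injection)
    scale: float = 0.1  # hypothetical weight of the shared block

    def prelude(self, x):
        # Input encoding: computed once, then re-injected in every loop.
        return [math.tanh(v) for v in x]

    def block(self, h, e):
        # Stand-in for Transformer(h_t, e): mixes each channel with the mean of h.
        mean_h = sum(h) / len(h)
        return [self.scale * math.tanh(hi + mean_h + ei) for hi, ei in zip(h, e)]

    def coda(self, h):
        # Output cleanup: here just a sum readout.
        return sum(h)

    def forward(self, x, n_loops):
        e = self.prelude(x)
        h = list(e)  # assumption: the hidden state starts at the encoded input
        for _ in range(n_loops):
            # Same weights every iteration: h_{t+1} = a*h_t + b*e + block(h_t, e)
            f = self.block(h, e)
            h = [self.a * hi + self.b * ei + fi for hi, ei, fi in zip(h, e, f)]
        return self.coda(h), h


if __name__ == "__main__":
    x = [1.0, -2.0, 0.5]
    with_injection = ToyLoopedModel()
    without_injection = ToyLoopedModel(b=0.0, scale=0.0)
    for loops in (1, 4, 16):
        _, h_inj = with_injection.forward(x, loops)
        _, h_no = without_injection.forward(x, loops)
        print(loops, h_inj, h_no)
```

In this toy setting, setting `b` to zero makes the hidden state shrink toward zero as the loop count grows, which mirrors the article's point that the original signal fades without input injection. The number of loops is a runtime argument, so the same weights can be run for more iterations at inference time than were used during training.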

