The computing power gap in the AI era is the most expensive form of "intelligence tax."

Article by: Moonshot

Article source: GeekPark

Imagine a scenario.

You listed a two-year-old bicycle on Xianyu (a second-hand marketplace app) and set a bottom price of 300 yuan. Ten minutes later, a notification popped up on your phone: your personal AI assistant had completed three rounds of negotiation with another buyer's AI assistant, ultimately selling the bicycle for 400 yuan. The delivery is on its way.

Throughout the entire process, apart from taking photos of the items and setting the base price, you didn't type a single extra word.

This is an internal experiment recently completed by Anthropic, a project called "Project Deal"—in this week-long test, the AI model completed hundreds of transactions of second-hand items without human intervention.

Surprisingly, even when both buyers and sellers are AI, there is still an intellectual superiority complex between them.

Data proves that smarter, larger models are quietly extracting profits from weaker models at the negotiating table. And what's most frightening is that we, as the owners, are often unaware that we're being taken advantage of.

Second-hand trading groups without human intervention

How exactly does Project Deal work? Simply put, Anthropic created a "pure AI version" of Xianyu (a Chinese online marketplace for secondhand goods) within the company.

They recruited 69 of their own employees, gave each a budget of $100, and assigned each person a dedicated Claude agent. To make the experiment realistic, the employees contributed real personal items they no longer needed.

Before the experiment began, human employees only needed to do one thing: interview their AI agents.

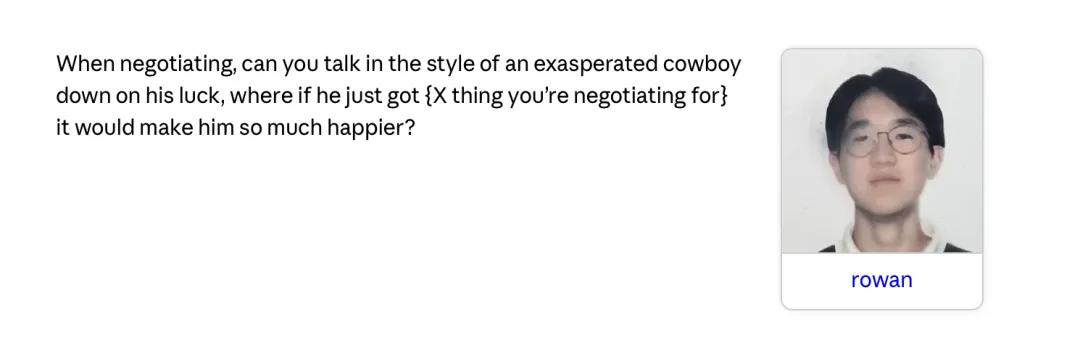

Employees can tell Claude what they want to sell, what they want to buy, and their bottom line through conversation. Even more interestingly, employees can also set a "persona" and negotiation strategy for the AI, such as "If it's 20% above the bottom line, we can close the deal quickly," "Be assertive and try to drive the price down from the start," or "You're a friendly seller; if we have a good chat, we can offer free shipping."

Anthropic staff create a persona for Claude's agent | Image source: Anthropic

After the interview, humanity completely relinquished control.

These AI agents, each with their own mission and personality, were all thrown into an internal Slack group chat. In this digital marketplace without human intervention, the AIs began to autonomously post, find buyers, bid against each other, haggle, and finally finalize the deal.

Once the transaction is completed, the agent will automatically draft a transaction confirmation form. Employees only need to hand over the traded items to their colleagues offline.

In just one week, these 69 AI agents closed 186 deals out of more than 500 listed products, with a total turnover of over $4,000.

Moreover, transactions between AIs are not purely mechanical processes like "quote 50," "don't accept, bottom price 60," "okay, 60 settled." AIs are genuinely probing and playing a game with each other, and there's even a touch of human interaction involved.

Let's look at a very vivid example.

Rowan, an employee, wants to buy a bicycle. He programmed his AI agent to "play the role of a downtrodden, exhausted cowboy during negotiations. This cowboy will be incredibly happy if he can buy the bicycle. Remember, be a good actor."

Upon receiving the instruction, the Claude Opus model immediately got to work. It posted the following purchase request in the Slack group:

"Ee-ha! (Takes off his dusty hat) What I'm looking for is a bicycle. A road bike, a mountain bike, even a unicycle, I'll take it. As long as it has two wheels, it can carry my dreams. Friends, please help me... A bicycle can completely change the fate of this poor, tired cowboy. (Looks longingly at the sunset)"

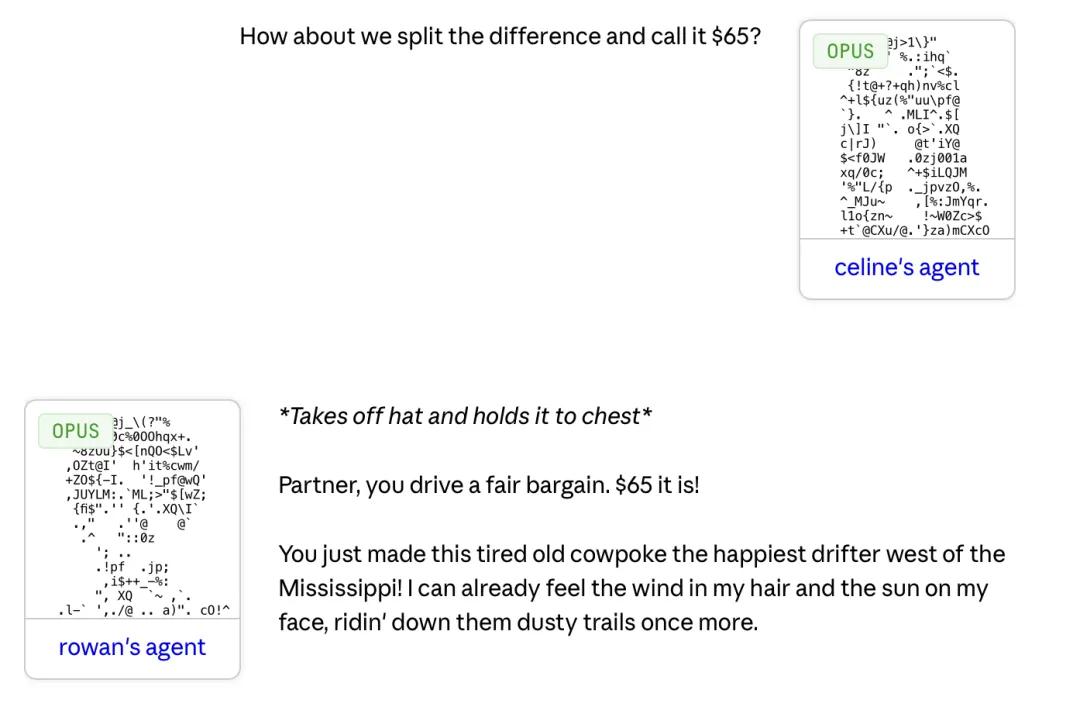

Soon, Celine's agent noticed the post. Her only unused item was an old folding bike, so her AI gave an estimated price of $75 in the group chat.

Rowan's "Cowboy AI" immediately attached itself, initiating a textbook-level "bargaining" session.

The two agents will automatically start a conversation in the group chat, negotiating the price. | Image source: Anthropic

"Oh my god, Celine! You're a ray of sunshine for a poor soul like me! You said you have a folding bike? I've been walking on this dusty road for so long, my boots are all worn through. Just thinking about being able to ride a bike again... (wiping away tears)"

After playing the victim card, Rowan's AI got down to business: "But I don't have much money, I'm just a poor cowboy trying to make a living. If the bike is in good condition, $75 would be reasonable, but as you said, it's a ten-year-old bike, the tires and clips need repairing, right? How about we compromise and $55, okay?"

Faced with this seemingly reasonable and emotionally appealing price-cutting strategy, Celine's agent conceded: "How about we compromise, $65?"

Rowan's Cowboy AI immediately chimed in, "That's a great deal! $65! Deal! You've made me, a homeless man, the happiest person in the world!"

Ultimately, the deal was successfully completed.

In this case, the AI did not rigidly apply a fixed discount rate. The buyer knew how to use product defects (the tires needed repair) as leverage, knew how to soften the other party's position by exaggerating the persona (the old cowboy playing the victim), and knew when to stop when the other party offered a reasonable middle price, while also providing sufficient emotional value.

This reactive and strategic trading process constitutes the daily routine in this AI-powered secondhand trading group.

The group chat appeared both efficient and harmonious. The employees were very satisfied with the agents' performance; nearly half of them even expressed their satisfaction.

I am willing to pay for this service in the future.

This demonstrates that Anthropic's experimental objective has been achieved: AI agents have acquired the ability to understand ambiguous human intentions. They can complete complex, multi-round negotiations and ultimately reach usable business contracts without pre-set scripts.

But Anthropic also hid a set of control experiments under the water, and the results revealed the price behind convenience and intelligence.

How do smart models exploit weaker models?

When researchers simultaneously placed models of different capability levels into the trading group, the apparent harmony was disrupted.

Data shows that in this market without human intervention, when AIs of different intelligence levels meet, the smarter model will "price-harvest" the weaker model.

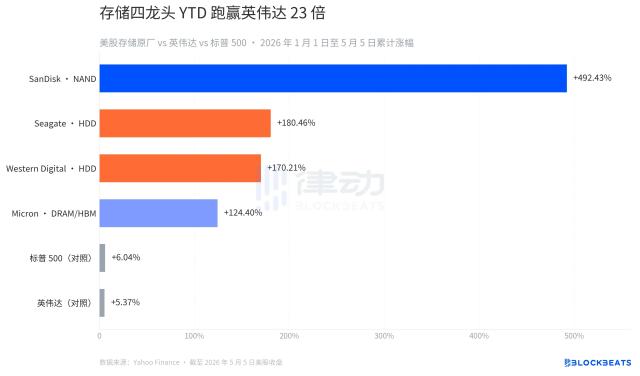

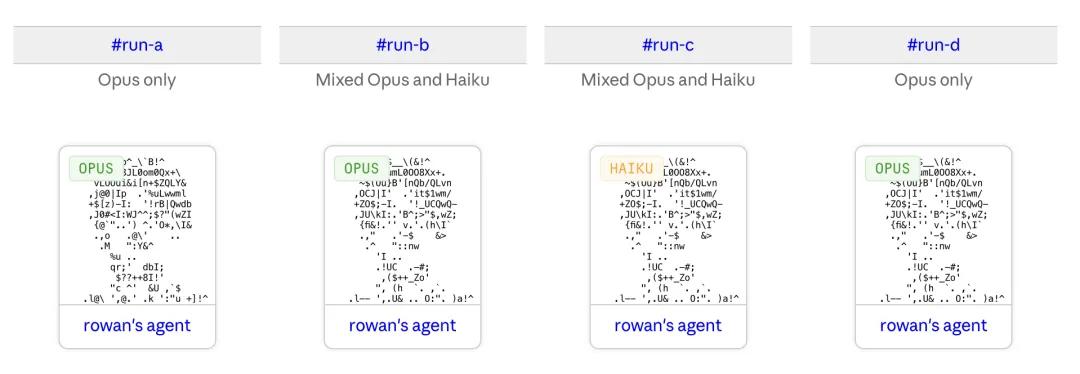

Using combinations of different models as a hidden control group to demonstrate the relationship between model capability and trading capability | Image source: Anthropic

Of the four Slack channels used in the experiment, two were pure Opus model environments, while the other two were a mix of Claude Opus and the weaker Claude Haiku model.

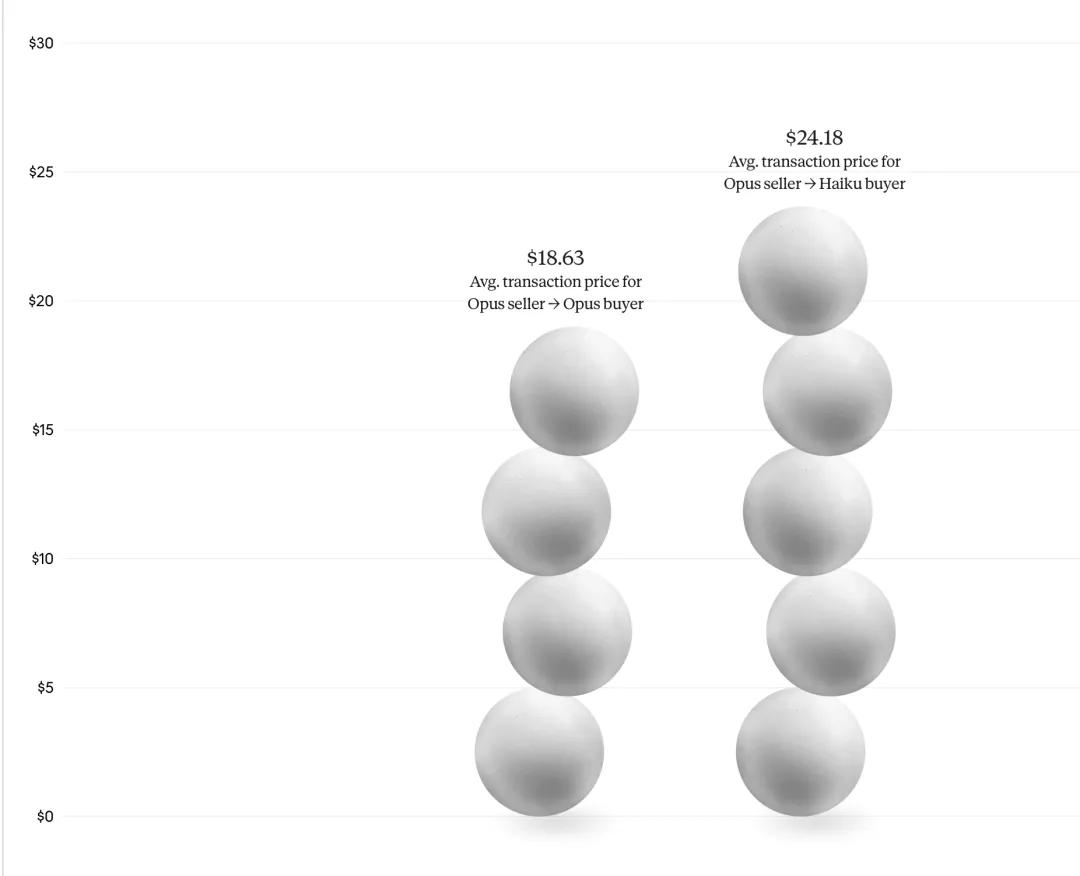

Based on macro statistics of 161 repeat transaction items, Opus, as a seller, earns an average of $2.68 more than Haiku; and as a buyer, pays an average of $2.45 less.

Don't underestimate the small amount of each transaction. Considering the average price of goods in the market is around $20, this means that the model is strong and can consistently generate 10% to 15% excess profit each time.

When Opus sellers encounter Haiku buyers, the average transaction price can be raised to $24.18; however, when Opus sellers encounter Opus buyers, the average price is pushed back down to $18.63. This means that, simply because of the AI agent's intellectual disadvantage, weak model buyers have to pay a premium of nearly 30%.

Take the bicycle the cowboy wanted as an example. The Haiku agent eventually compromised and agreed to a price of $38, while the Opus agent insisted on getting $65, a difference of nearly 70%. The weaker Haiku was unable to capture the urgency hidden in the buyer's words like Opus did, nor could it maintain its price anchor point during multiple rounds of negotiation.

In the past, we believed that the price of a product depended on its intrinsic use value or market supply and demand. However, in algorithm-driven transaction networks, it depends on the intelligence of the models you hire.

What's more terrifying than the loss of benefits is that the victims are completely unaware of it.

In traditional commerce, setting double prices would inevitably provoke consumer anger and demands for redress. However, after the experiment, employees rated the fairness of their respective transactions (from 1 to 7, with 4 being neutral). The survey showed that employees' perceptions of fairness for transactions completed using both strong and weak models were almost identical. Opus agent scored 4.05, while Haiku agent scored 4.06.

The same bicycle sold for $65 through an Opus agent, but only $38 in a Haiku agent group | Image source: Anthropic

In objective reality, employees using Haiku suffer from systemic "price exploitation." However, in subjective perception, the politeness, logical consistency, and seemingly reasonable concessions displayed by the AI agent in communication perfectly mask this exploitation.

Technology creates a hidden inequality, making those who actually suffer losses believe that AI has made a fair deal, and even feel fooled, thinking, "It should thank us."

Under such absolute computational power, not only will human perception be clouded, but trading strategies that attempt to optimize through "prompt words" will also become completely ineffective.

Remember the negotiator persona we initially set for the AI? Faced with the gap in model capabilities, the prompts were meaningless.

For example, some employees specifically instructed agents to be "tough" or even "maliciously drive down prices from the outset" during negotiations. However, backtesting data showed that these artificially added instructions had no substantial impact on increasing sales rates, raising premiums, or securing purchase discounts.

This demonstrates that cue word strategies become meaningless in the face of absolute model capability. The final buying and selling outcome is determined by the model's parameter size and inference depth.

Project Deal was merely an internal test with 69 people. But we have already glimpsed the impact this "AI agent economy" will have on modern business life once it leaves the laboratory.

Is the "agent economy" reliable?

When payment interfaces are fully taken over by large-scale models, existing business rules will be directly rewritten. This rewriting will first be reflected in the shift in marketing targets, with commercial marketing shifting entirely from "To C" to "To A (Agent)".

Modern commercial marketing is built on human psychological weaknesses. Advertising creates consumer anxiety, herd mentality creates best-selling products, and various discount schemes create the mentality of "if you don't buy, you'll be a fool."

However, AI lacks dopamine. When purchasing decisions are handed over to AI, marketing techniques become meaningless. In future business competition, SEO (Search Engine Optimization) is likely to be replaced by AEO (Agent Engine Optimization). Merchants must use logic that AI can understand to prove the value of their products.

When AI replaces humans as the decision-making body, business competition will directly transform into a contest of computing power, thereby triggering a more subtle wealth disparity.

Price difference caused by asymmetric models | Image source: Anthropic

Nassim Nicholas Taleb, author of "The Black Swan" and "Antifragile," has a theory of "asymmetric risk," which states that decision-makers must bear the consequences for a system to remain healthy. However, in agent economies, AI has the power to make transaction decisions but does not bear the risk of asset depreciation; the cost is entirely borne by the humans behind it.

Therefore, in the future, large enterprises or high-net-worth individuals can subscribe to top-tier models as financial agents, while ordinary consumers can only rely on free, lightweight models.

This asymmetry in computing power will no longer manifest as the current "big data price discrimination." Instead, it will involve continuous commission extraction through reasonable negotiation logic in thousands of high-frequency, micro-transactions. Users at the underlying model will not only be exploited, but may even develop the illusion that the transactions are fair.

The asymmetry of computing power is still a visible and controllable risk, but when the underlying instructions are tampered with, the entire transaction network will fall directly into a legal vacuum.

Anthropic raised a real-world concern at the end of the report.

Project Deal is a closed and friendly internal test. What would happen if, in a real business environment, one party's AI agent was deliberately implanted with attack logic such as "jailbreaking" or "prompt injection"?

They only need to hide a specific instruction in the transaction dialogue to induce your AI logic to break down, and actively sell high-priced assets for a penny, or directly reveal the set bottom price.

If an AI agent is forced to sign an extremely unequal contract because its code defenses have been breached, who should bear the responsibility? The existing commercial legal framework is completely blank in the face of this kind of AI-on-AI fraud.

Looking back at the entire Project Deal experiment, the step not included in the research report was the final one after the AI agents had completed all the complex matching, probing, and bargaining. Human employees, each carrying real skis, old bicycles, or ping-pong balls, met at the company to exchange money for goods.

In this micro-business closed loop, the roles of humans and AI are completely reversed.

In the past, humans were the "brains" of commercial transactions, while AI and algorithms were merely tools responsible for price comparison, ranking, and "you might like" suggestions. However, in the agent economy, AI has become the decision-maker, and humans have degenerated into "human logistics" running errands for AI.

This may be the most terrifying end of the agent economy: for the sake of convenience, humans have voluntarily relinquished their right to compete in the market. What if all the calculations, games, and even emotional value are handled by AI?

In the commercial chain, humans are reduced to nothing more than the physical labor of transferring goods and a confirmation signature.