By: Vitalik Buterin

Compiled by: Yangz, Techub News

There are a lot of "little things" in Ethereum protocol design that are critical to Ethereum's success, but don't fit neatly into a larger subcategory. In practice, about half of them are about various EVM improvements, and the rest are various niche topics. This article will explore these topics.

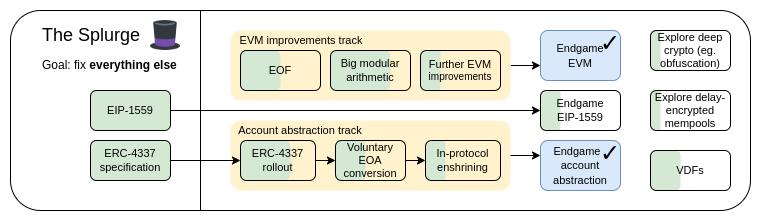

The Splurge, 2023 Roadmap

The Splurge's main goals:

- Bringing the EVM to a stable, performant “final state”

- Introducing account abstraction into the protocol so that all users can benefit from safer and more convenient accounts

- Optimizing transaction fee economics to increase scalability while reducing risk

- Exploring advanced cryptographic techniques that can make Ethereum better in the long run

Improving the EVM

What problem does improving the EVM aim to solve?

The current EVM is difficult to statically analyze, which makes it hard to create efficient implementations, formally verify code, and extend the EVM further over time. In addition, it is highly inefficient, which makes it hard to implement many forms of advanced cryptography unless they are explicitly supported through precompiles.

How to improve the EVM?

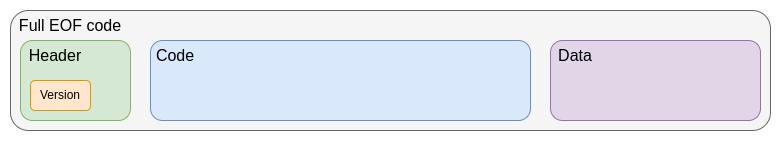

The first step in the current EVM improvement roadmap is the EVM Object Format (EOF), which is planned to be introduced in the next hard fork. EOF is a series of EIPs that specifies a new version of EVM code with a number of distinguishing features, the most prominent of which are:

- Separation of code (executable, but not readable from the EVM) from data (readable, but not executable)

- Dynamic jumps are prohibited; only static jumps are allowed

- EVM code can no longer observe gas-related information

- A new explicit subroutine mechanism is added

EOF code framework

Although it is likely that old-style contracts will eventually be deprecated (perhaps even forced to convert to EOF code), they will continue to exist and can be created. New-style contracts will benefit from the efficiency gains brought by EOF, including slightly smaller bytecode due to subroutine functionality, as well as new features unique to EOF or its unique gas cost reduction.

After the introduction of EOF, further upgrades will become easier. The most complete one at present is the EVM Modular Arithmetic Extension (EVM-MAX). EVM-MAX creates a new set of operations specifically for modular arithmetic and puts them in a new memory space that is inaccessible to other opcodes. This makes it possible to use optimizations such as Montgomery multiplication.
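To make the Montgomery multiplication reference concrete, here is a minimal Python sketch of the technique for an odd modulus. It is not taken from any EVM-MAX specification; the function names (`montgomery_params`, `redc`, `mont_mul`) and the choice of `bits` are illustrative assumptions.

```python
def montgomery_params(n: int, bits: int) -> int:
    """Return n' such that n * n' == -1 (mod 2**bits). Requires odd n < 2**bits."""
    r = 1 << bits
    return (-pow(n, -1, r)) % r  # modular inverse via pow needs Python 3.8+


def redc(t: int, n: int, bits: int, n_prime: int) -> int:
    """Montgomery reduction: returns t * R^-1 mod n, where R = 2**bits and 0 <= t < n * R."""
    mask = (1 << bits) - 1
    m = ((t & mask) * n_prime) & mask  # chosen so that t + m*n is divisible by R
    u = (t + m * n) >> bits            # exact division by R using a shift
    return u - n if u >= n else u


def mont_mul(a: int, b: int, n: int, bits: int) -> int:
    """Compute a * b mod n by way of Montgomery form (illustrative, not optimized)."""
    n_prime = montgomery_params(n, bits)
    a_m = (a << bits) % n              # convert a to Montgomery form: a * R mod n
    b_m = (b << bits) % n              # convert b to Montgomery form: b * R mod n
    prod_m = redc(a_m * b_m, n, bits, n_prime)  # a * b * R mod n
    return redc(prod_m, n, bits, n_prime)       # convert back: a * b mod n


small = mont_mul(45, 76, 97, 8)
p = 2**255 - 19
large = mont_mul(123456789, 987654321, p, 256)
```

In this sketch the conversions into Montgomery form still use an ordinary `%`, so a single multiplication gains nothing. The benefit appears when many multiplications are chained while values stay in Montgomery form, which is plausibly what a dedicated memory space for modular values makes possible.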

A newer idea is to combine EVM-MAX with Single Instruction Multiple Data (SIMD) capabilities. SIMD has been around as an idea for Ethereum for a long time, starting with Greg Colvin's EIP-616. SIMD can be used to accelerate many forms of cryptography, including hash functions, 32-bit STARKs, and lattice-based cryptography. EVM-MAX and SIMD together make a natural pair of performance-oriented extensions to the EVM.

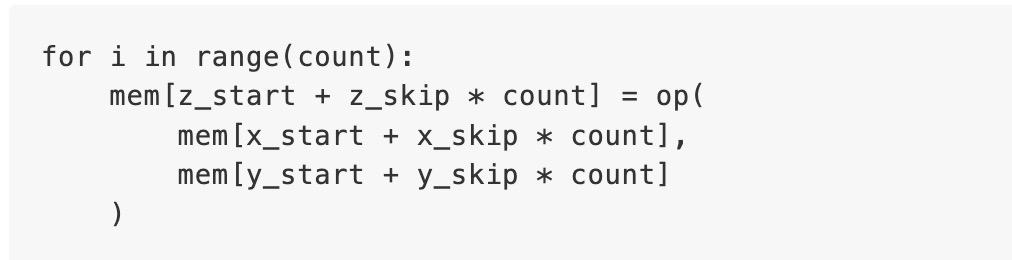

The general design idea of the combined EIP is to start with EIP-6690 , and then:

- Any odd number or any power of 2 up to 2^768 is allowed as the modulus

- Add a version of each EVM-MAX opcode (add, sub, mul) that, instead of taking 3 immediate values (x, y, z), takes 7 immediate values (x_start, x_skip, y_start, y_skip, z_start, z_skip, count). In Python terms, such an opcode is equivalent to looping i over range(count) and setting mem[z_start + z_skip * i] = op(mem[x_start + x_skip * i], mem[y_start + y_skip * i])

- But in actual execution, these opcodes will be processed in parallel

- If possible, add XOR, AND, OR, NOT, and SHIFT (both cycling and non-cycling), at least for power-of-two moduli. Also add ISZERO (which pushes its output to the EVM main stack)

This is sufficient to implement many forms of cryptography, including elliptic curve cryptography, small-field cryptography (such as Poseidon and circle STARKs), traditional hash functions (such as SHA256, KECCAK, and BLAKE), and lattice-based cryptography.
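As a rough illustration of the vectorized opcodes in the list above, the following Python sketch applies one modular operation across strided memory slots. The function name `vector_op`, the list-based `mem`, and the explicit `modulus` argument are assumptions made for this example, not part of EIP-6690.

```python
from typing import Callable, List


def vector_op(
    mem: List[int],
    op: Callable[[int, int], int],
    modulus: int,
    x_start: int, x_skip: int,
    y_start: int, y_skip: int,
    z_start: int, z_skip: int,
    count: int,
) -> None:
    """Apply op element-wise over strided slots of mem, reducing each result mod modulus."""
    for i in range(count):
        x = mem[x_start + x_skip * i]
        y = mem[y_start + y_skip * i]
        mem[z_start + z_skip * i] = op(x, y) % modulus


# Hypothetical layout: slots 0-3 hold x, slots 4-7 hold y, slots 8-11 receive z.
mem = [1, 2, 3, 4, 10, 20, 30, 40, 0, 0, 0, 0]
vector_op(mem, lambda a, b: a + b, 7, 0, 1, 4, 1, 8, 1, 4)
result = mem[8:12]
```

The loop here runs sequentially. A real implementation would be free to process the `count` lanes in parallel, which is where the SIMD speedup would come from.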

Of course, other EVM upgrades are possible, but they have received much less attention so far.

Existing related research

What work remains to be done and how to strike the right balance?

Currently, EOF is planned to be included in the next hard fork. While it is always possible to remove it (features have been pulled from hard forks at the last minute before), doing so would be an uphill battle. Removing EOF would mean that any future upgrades to the EVM would have to be made without EOF, which can be done, but may be more difficult.

The main trade-off of the EVM is L1 complexity versus infrastructure complexity. Adding EOF to EVM implementations requires a significant amount of code, and the static code checks are fairly complex. In exchange, however, we get simpler high-level languages, simpler EVM implementations, and other benefits. Arguably, a roadmap that prioritizes continued improvement of Ethereum L1 would include and build on EOF. One important piece of work is to implement something like EVM-MAX plus SIMD and benchmark how much gas various cryptographic operations would cost.

What are the implications for the rest of the roadmap?

After L1 adjusts its EVM, L2s can more easily do the same. If one side adjusts and the other does not, the result is incompatibility, which has its own drawbacks. In addition, EVM-MAX plus SIMD can reduce the gas cost of many proof systems, making L2s more efficient. It also makes it easier to remove more precompiles by replacing them with EVM code that performs the same task, perhaps without a large hit to efficiency.

Account Abstraction

What problem does account abstraction aim to solve?

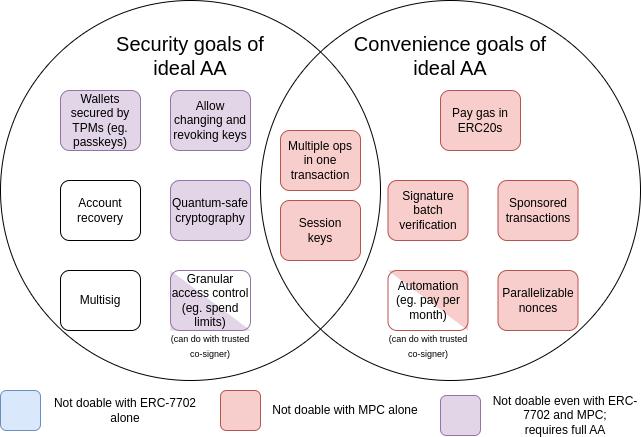

Today, transactions can only be verified in one way, namely, ECDSA signatures. The original intention of account abstraction is to expand on this basis, so that the verification logic of accounts can be any EVM code. This will enable a range of applications, including:

- Switching to quantum-resistant cryptography

- Rotating out old keys (widely considered a recommended security practice)

- Multi-signature wallets and social recovery wallets

- Signing low-value operations with one key and high-value operations with another key (or set of keys)

- Allowing privacy protocols to operate without relayers, greatly reducing their complexity and removing a key central point of dependency

Since work on account abstraction began in 2015, the goals have expanded to include a large set of "convenience goals", such as letting an account that holds no ETH but holds some ERC20 tokens pay gas with those tokens. The following figure summarizes these goals:

MPC refers to multi-party computation, a 40-year-old technology that splits a key into multiple pieces, stores them on multiple devices, and uses cryptographic techniques to generate signatures without directly combining the key pieces.

EIP-7702 is an EIP planned for the next hard fork. It is the result of a growing recognition that the convenience benefits of account abstraction need to be available to all users, including EOA users, in order to improve the user experience in the short term and avoid a split into two ecosystems. This work began with EIP-3074 and culminated in EIP-7702. EIP-7702 makes the "convenience features" of account abstraction available to all users, including today's EOAs (externally owned accounts, that is, accounts controlled by ECDSA signatures).

From the chart we can see that while some challenges (especially around “convenience”) can be addressed through incremental techniques like multi-party computation or EIP-7702, most of the security goals that prompted the original account abstraction proposal can only be addressed by backtracking and solving the original problem of allowing smart contract code to control transaction verification. This has not been done yet because it remains a challenge to achieve this goal securely.

How does account abstraction work?

The core of account abstraction is to allow smart contracts rather than just EOAs to initiate transactions, and the complexity comes from how to achieve this in a way that is conducive to maintaining a decentralized network and resisting denial of service attacks.

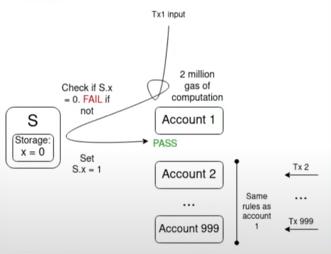

A key challenge is the multi-invalidation problem:

Assume that there are 1,000 accounts whose validation functions all depend on a single value S, and that all of their transactions in the mempool are valid under the current value of S. A single transaction that flips the value of S can then invalidate all the other transactions in the mempool. This lets an attacker spam the mempool at very low cost, clogging up the resources of network nodes.

Years of effort to expand functionality while limiting DoS risk eventually led to a consensus on how to achieve "ideal account abstraction": ERC-4337.
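The scenario with 1,000 accounts and a shared value S can be mimicked in a few lines of Python. This is only a toy model: `PendingTx`, `required_s`, and `still_valid` are hypothetical names, and real validation functions are arbitrary EVM code rather than a single equality check.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PendingTx:
    sender: str
    required_s: int  # hypothetical: the tx validates only while shared state S equals this


def still_valid(mempool: List[PendingTx], s: int) -> List[PendingTx]:
    """Return the pending transactions whose validation passes under the current S."""
    return [tx for tx in mempool if tx.required_s == s]


state_s = 0
mempool = [PendingTx(f"account{i}", required_s=0) for i in range(1000)]
valid_before = len(still_valid(mempool, state_s))

# A single cheap transaction flips S...
state_s = 1
# ...and every pending transaction that depended on the old value becomes invalid.
valid_after = len(still_valid(mempool, state_s))
```

Nodes spent resources validating and propagating all 1,000 transactions, yet none of them will ever pay a fee, which is what makes the attack cheap.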

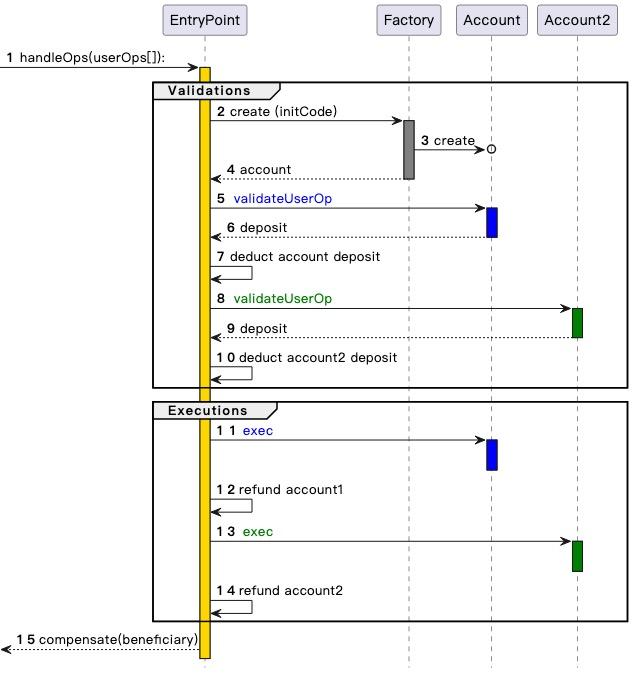

ERC-4337 splits the processing of user operations into two phases: verification and execution. All verifications are processed first, and all executions afterwards. In the mempool, a user operation is accepted only if its verification phase touches only its own account and does not read environment variables. This prevents multi-invalidation attacks. A strict gas limit is also enforced on the verification step.
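The mempool rule and the two-phase ordering can be sketched as follows. This is a simplified model under stated assumptions, not the ERC-4337 data structures: the `UserOp` fields, the `MAX_VERIFICATION_GAS` value, and both function names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

MAX_VERIFICATION_GAS = 150_000  # hypothetical cap, chosen only for this example


@dataclass
class UserOp:
    sender: str
    touched_accounts: Set[str]  # accounts whose state the verification step accesses
    reads_environment: bool     # e.g. block number or timestamp
    verification_gas: int


def acceptable_for_mempool(op: UserOp) -> bool:
    """Accept only ops whose verification is self-contained and cheap."""
    return (
        op.touched_accounts <= {op.sender}
        and not op.reads_environment
        and op.verification_gas <= MAX_VERIFICATION_GAS
    )


def process_bundle(ops: List[UserOp]) -> List[Tuple[str, str]]:
    """Run every verification first, then every execution, recording the order."""
    log = [("verify", op.sender) for op in ops]
    log += [("execute", op.sender) for op in ops]
    return log


good = UserOp("alice", {"alice"}, False, 50_000)
bad = UserOp("bob", {"bob", "shared_oracle"}, False, 50_000)
order = process_bundle([good, UserOp("carol", {"carol"}, False, 40_000)])
```

Because no accepted verification step depends on state outside the sender's own account, executing one operation cannot invalidate the verification of another one in the same mempool.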

ERC-4337 was designed as an extra-protocol standard (an ERC) because at the time Ethereum client developers were focused on the Merge and had no spare capacity to work on other features. This is why ERC-4337 uses its own object, the user operation, instead of regular transactions. Recently, however, we have come to realize that at least parts of ERC-4337 need to be brought into the protocol. Two main reasons are:

- EntryPoint is inherently inefficient as a contract: each bundle carries a fixed overhead of about 100,000 gas, plus thousands of gas for each user operation.

- Ethereum's properties need to carry over to account abstraction users.

In addition, ERC-4337 adds two further features:

- Paymasters: Allow one account to pay fees on behalf of another. This breaks the rule that only the sender account itself can be accessed during the verification phase, so special handling is introduced to permit the paymaster mechanism while keeping it secure.

- Aggregators: Support signature aggregation, such as BLS aggregation or SNARK-based aggregation. This is necessary to achieve the highest level of data efficiency on rollups.

Existing related research

- Introduction to the history of account abstraction: https://www.youtube.com/watch?v=iLf8qpOmxQc

- ERC-4337: https://eips.ethereum.org/EIPS/eip-4337

- EIP-7702: https://eips.ethereum.org/EIPS/eip-7702

- BLSWallet code (uses the aggregation feature): https://github.com/getwax/bls-wallet

- EIP-7562 (in-protocol account abstraction): https://eips.ethereum.org/EIPS/eip-7562

- EIP-7701 (EOF-based in-protocol account abstraction): https://eips.ethereum.org/EIPS/eip-7701

What work remains to be done and how to strike the right balance?

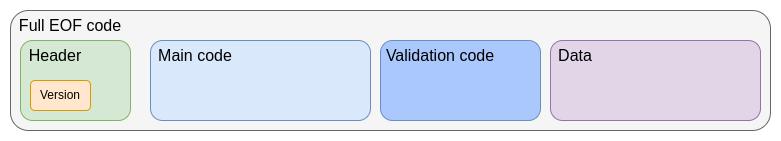

The main problem left to be solved is how to fully introduce account abstraction into the protocol. One of the more popular solutions recently is EIP-7701, which aims to implement account abstraction based on EOF, that is, an account can set a separate code segment for verification. If the account sets the code segment, the code will be executed in the verification step of the account transaction.

EIP-7701 Account EOF Code Structure

The advantage of this approach is that it makes clear that there are two equivalent ways to view native account abstraction:

- ERC-4337, but as part of the protocol

- A new type of EOA whose signature algorithm is EVM code execution

If we start out by strictly bounding the complexity of the code that can be executed during verification (allowing no access to external state, and even setting an initial gas limit too low to be useful for quantum-resistant or privacy-preserving applications), then the safety of this approach is fairly clear: it simply swaps out ECDSA verification for EVM code execution that takes a similar amount of time. However, we will need to relax these restrictions over time, because letting privacy-preserving applications work without relayers and achieving quantum resistance are both very important. To do this, we need to find ways to address DoS risks more flexibly, without requiring the verification step to be extremely minimalistic.

The main trade-off seems to be between "adopting some less popular solution as soon as possible" and "waiting longer and perhaps getting a more ideal solution." The ideal solution is likely to be some kind of hybrid. One hybrid solution is to adopt some use cases faster, leaving more time to solve other use cases. Another solution is to deploy the account abstraction version on L2 first. However, the challenge of doing this is that in order for L2 teams to be willing to work on adopting a proposal, they must be confident that L1 and/or other L2s will adopt a compatible version later.

Another application we need to consider explicitly is keystore accounts, which store account-related state on L1 or a dedicated L2, but can be used on L1 and any compatible L2. To do this efficiently, L2s may need to support opcodes such as L1SLOAD or REMOTESTATICCALL, and account abstraction implementations on L2 also need to support it.

What are the implications for the rest of the roadmap?

Inclusion lists need to support account abstraction transactions. In practice, the requirements for inclusion lists and decentralized mempools will end up being very similar, albeit with slightly more flexibility.

In addition, it is best to implement account abstraction as uniformly as possible across L1 and L2. If we expect most users to use keystore rollups in the future, account abstraction designs should take this into account.

EIP-1559 Improvements

What problem does EIP-1559 aim to solve?

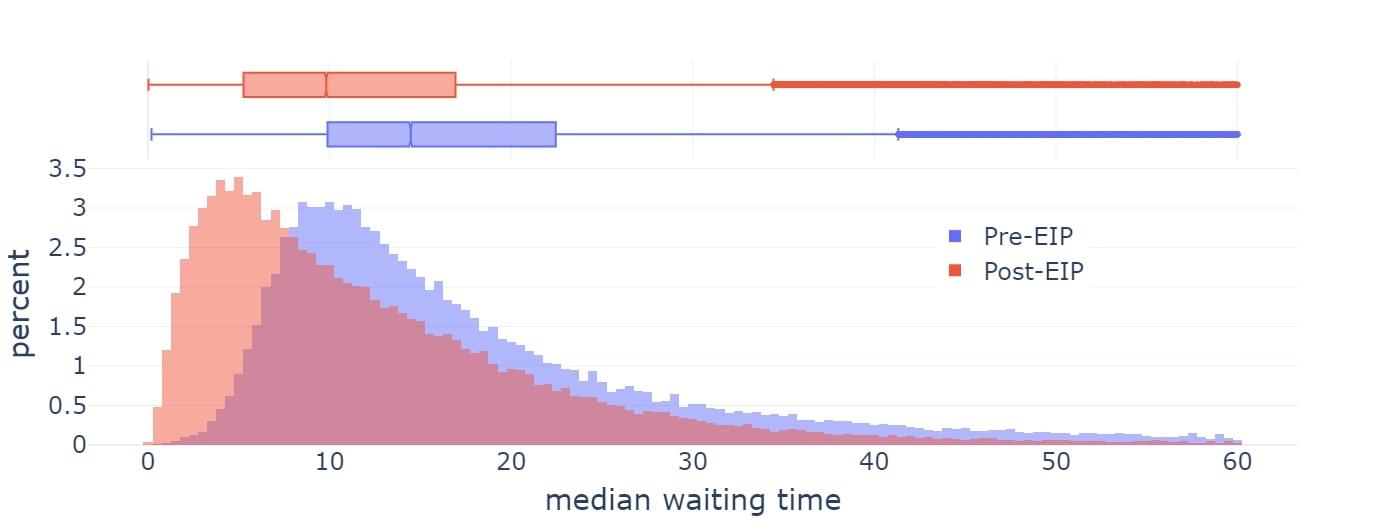

EIP-1559 was activated on Ethereum in 2021 and significantly improved the average block inclusion time.

However, the current implementation of EIP-1559 is flawed in the following ways:

- First, the formula is slightly flawed: instead of targeting blocks that are 50% full, it targets blocks that are roughly 50-53% full, depending on the variance (this is related to what mathematicians call the “AM-GM inequality”).

- Second, it doesn’t adjust fast enough under extreme conditions.

The later formula used for blobs (EIP-4844) was explicitly designed to solve the first problem and is cleaner overall. However, neither EIP-1559 itself nor EIP-4844 attempts to solve the second problem. As a result, the status quo is a confusing halfway state involving two different mechanisms, and there is even a case that both will need to be improved over time.
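The AM-GM effect behind the first flaw can be seen in a small numerical experiment. The sketch below is a floating-point simplification: `update_1559` mirrors the linear EIP-1559 adjustment with its denominator of 8, while `update_exponential` is a stand-in for the exponential style of update used for blobs, not the integer arithmetic of EIP-4844 itself. The target value and the usage pattern are made up for illustration.

```python
import math

ADJUSTMENT_QUOTIENT = 8  # EIP-1559 limits the per-block change to 1/8


def update_1559(base_fee: float, used: int, target: int) -> float:
    """Linear update: the fee moves in proportion to the deviation from target."""
    return base_fee * (1 + (used - target) / target / ADJUSTMENT_QUOTIENT)


def update_exponential(base_fee: float, used: int, target: int) -> float:
    """Exponential update: deviations of equal size in opposite directions cancel exactly."""
    return base_fee * math.exp((used - target) / target / ADJUSTMENT_QUOTIENT)


target = 15_000_000
usage = [2 * target, 0] * 50  # alternating full and empty blocks, 50% full on average

fee_linear = fee_exp = 100.0
for used in usage:
    fee_linear = update_1559(fee_linear, used, target)
    fee_exp = update_exponential(fee_exp, used, target)
```

Under the linear rule each full/empty pair multiplies the fee by (1 + 1/8)(1 - 1/8) = 63/64, so the fee drifts downward even though average usage is exactly on target. Equilibrium therefore requires blocks to be slightly more than 50% full on average, and the gap grows with the variance. The exponential rule has no such drift.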

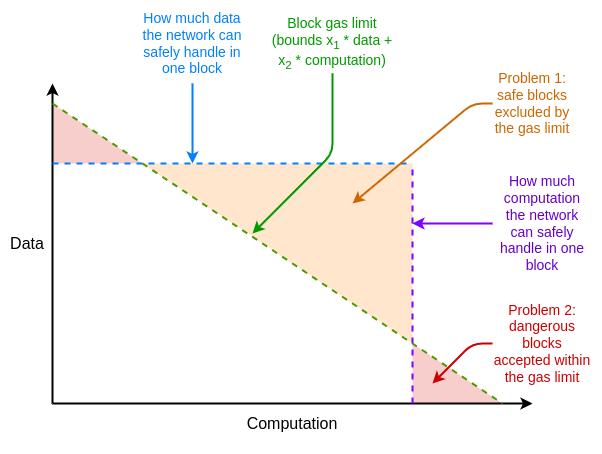

Beyond this, Ethereum resource pricing has other weaknesses that are unrelated to EIP-1559 but can be addressed by adjusting it. One of the main ones is the gap between the average case and the worst case: resource prices have to be set to handle the worst case, in which a block's entire gas consumption goes to a single resource, but average-case usage is much lower, which leads to inefficiency.

How to solve these inefficiencies?

Multidimensional gas is a solution to these inefficiencies: it sets different prices and limits for different resources. The concept is technically independent of EIP-1559, but EIP-1559 makes it easier. Without EIP-1559, optimally packing a block under multiple resource limits would be a complex multidimensional "knapsack" problem. With EIP-1559, most blocks will not run at full capacity on any resource, so a simple algorithm of "accept any transaction that pays enough fees" is sufficient.
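A minimal sketch of the "accept any transaction that pays enough fees" rule might look like the following. The `Tx` structure, the resource names, and all prices and limits are hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Tx:
    name: str
    usage: Dict[str, int]             # units consumed per resource
    max_fee_per_unit: Dict[str, int]  # highest price the sender accepts per resource


def pays_enough(tx: Tx, base_fee: Dict[str, int]) -> bool:
    """True if the tx covers the current base fee of every resource it uses."""
    return all(tx.max_fee_per_unit.get(r, 0) >= base_fee[r] for r in tx.usage)


def fill_block(txs: List[Tx], base_fee: Dict[str, int], limits: Dict[str, int]) -> List[str]:
    """Greedy first-come packing: include each paying tx that still fits every limit."""
    used = {r: 0 for r in limits}
    included = []
    for tx in txs:
        fits = all(used[r] + amount <= limits[r] for r, amount in tx.usage.items())
        if pays_enough(tx, base_fee) and fits:
            for r, amount in tx.usage.items():
                used[r] += amount
            included.append(tx.name)
    return included


base_fee = {"execution": 10, "blob": 2, "calldata": 3}
limits = {"execution": 30_000_000, "blob": 786_432, "calldata": 1_000_000}
txs = [
    Tx("a", {"execution": 21_000, "calldata": 100}, {"execution": 12, "calldata": 3}),
    Tx("b", {"execution": 50_000, "blob": 131_072}, {"execution": 12, "blob": 1}),
]
included = fill_block(txs, base_fee, limits)
```

The greedy loop is only adequate because the limits are rarely binding. When a block is close to full in several dimensions at once, the knapsack problem mentioned above comes back.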

Today, we have multi-dimensional gas for execution and blobs, and in principle we could extend this to more dimensions, including calldata, state reads and writes, and state size expansion.

EIP-7706 introduces a new gas dimension for calldata. It also simplifies the multi-dimensional gas mechanism so that all three types of gas fall into one (EIP-4844-style) framework, thus solving the mathematical flaws of EIP-1559.

EIP-7623 is a more radical solution to the average-case vs. worst-case resource problem by placing tighter limits on maximum calldata without introducing a whole new dimension.

Another direction is to tackle the update rate problem and find a faster base fee calculation algorithm while preserving the key invariant introduced by the EIP-4844 mechanism (namely, that in the long run average usage converges to exactly the target value).

Existing related research

- EIP-1559 FAQ: https://notes.ethereum.org/@vbuterin/eip-1559-faq

- Empirical analysis of EIP-1559: https://dl.acm.org/doi/10.1145/3548606.3559341

- Proposed improvements to enable faster adjustments: https://kclpure.kcl.ac.uk/ws/portalfiles/portal/180741021/Transaction_Fees_on_a_Honeymoon_Ethereums_EIP_1559_One_Month_Later.pdf

- EIP-4844 FAQ, part about the base fee mechanism: https://notes.ethereum.org/@vbuterin/protoo_danksharding_faq#How-does-the-exponential-EIP-1559-blob-fee-adjustment-mechanism-work

- EIP-7706: https://eips.ethereum.org/EIPS/eip-7706

- EIP-7623: https://eips.ethereum.org/EIPS/eip-7623

- Multidimensional gas: https://vitalik.eth.limo/general/2024/05/09/multidim.html

What work remains to be done and how to strike the right balance?

Multidimensional gas has two main flaws:

- Increased complexity of the protocol

- Increased complexity of the optimal algorithm needed to fill blocks

Protocol complexity is a relatively minor issue for calldata, but becomes a larger issue for the gas dimension "inside the EVM" (such as storage reads and writes). The difficulty is that it is not only the users who set the gas limit, but also the contracts that set the limits when calling other contracts. And right now, the only way they can set limits is one-dimensional.

A simple solution to this problem is to make multi-dimensional gas available only inside EOF, because EOF does not allow contracts to set gas limits when calling other contracts. Non-EOF contracts would have to pay for all types of gas when performing storage operations (for example, if an SLOAD costs 0.03% of the block storage access gas limit, then a non-EOF user would also be charged 0.03% of the execution gas limit). More research on multi-dimensional gas will help us understand the trade-offs and find the ideal balance.
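The proportional charge for non-EOF contracts in the SLOAD example works out as below. All three constants are hypothetical and were picked only so that the SLOAD cost equals 0.03% of the storage access limit, as in the text.

```python
def non_eof_execution_charge(
    storage_gas_cost: int,
    storage_gas_limit: int,
    execution_gas_limit: int,
) -> int:
    """Charge the same fraction of the execution limit that the operation uses of the storage limit."""
    return storage_gas_cost * execution_gas_limit // storage_gas_limit


# Hypothetical numbers chosen to match the 0.03% example.
STORAGE_ACCESS_GAS_LIMIT = 10_000_000
EXECUTION_GAS_LIMIT = 30_000_000
SLOAD_STORAGE_GAS = 3_000  # 3,000 / 10,000,000 = 0.03%

charge = non_eof_execution_charge(
    SLOAD_STORAGE_GAS, STORAGE_ACCESS_GAS_LIMIT, EXECUTION_GAS_LIMIT
)
```

With these numbers the non-EOF contract is charged 9,000 execution gas, which is 0.03% of the execution limit, so one-dimensional gas limits set by legacy contracts still bound storage usage.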

What are the implications for the rest of the roadmap?

Successfully implementing multi-dimensional gas can significantly reduce certain “worst case” resource usage, which eases the pressure to optimize performance in order to support things like STARK-proven hash-based binary trees. Setting a hard target for state size growth would make it easier for client developers to plan and to estimate future requirements.

As mentioned above, because EOF makes gas unobservable, it makes more extreme versions of multi-dimensional gas easier to implement.

Verifiable Delay Function (VDF)

What problem do VDFs aim to solve?

Currently, Ethereum uses RANDAO-based randomness to select proposers. RANDAO-based randomness works by requiring each proposer to reveal a secret they committed to in advance, and mixing each revealed secret into the randomness. Therefore, each proposer has "1 bit of manipulation power" and can change the randomness by not showing up (at a cost). This is reasonable for finding proposers, because it is very rare that giving up a proposal can give you two new opportunities to propose. But for on-chain applications that require randomness, this is not suitable. Ideally, we can find a more powerful source of randomness.
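The "1 bit of manipulation power" can be illustrated with a simplified model of the mixing step. The sketch below XORs the hash of each reveal into the running value, which follows the general shape of RANDAO mixing but omits signatures and everything else in the real protocol. The function names and inputs are assumptions.

```python
import hashlib
from typing import Dict


def mix_in(randao: bytes, reveal: bytes) -> bytes:
    """XOR the hash of a revealed secret into the current randomness."""
    digest = hashlib.sha256(reveal).digest()
    return bytes(a ^ b for a, b in zip(randao, digest))


def last_proposer_options(randao_before: bytes, reveal: bytes) -> Dict[str, bytes]:
    """The last proposer can publish (mixing in the reveal) or skip the slot."""
    return {
        "reveal": mix_in(randao_before, reveal),
        "skip": randao_before,
    }


randao_before = hashlib.sha256(b"earlier reveals").digest()
options = last_proposer_options(randao_before, b"my committed secret")
distinct_outcomes = len(set(options.values()))
```

The proposer cannot pick an arbitrary output. They can only choose between two fixed candidates, and choosing "skip" costs them the block, which is why the influence is limited to one bit.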

How do VDFs work?

A Verifiable Delay Function (VDF) is a function that can only be computed sequentially and cannot be sped up by parallelization. A simple example is repeated hashing: compute `for i in range(10**9): x = hash(x)`. The output, together with a SNARK proof of its correctness, can then be used as a random value. The idea is that the input is chosen based on information available at time T, while the output is not yet known at time T: it only becomes known some time after T, once someone has run the computation in full. Since anyone can run the computation, it is impossible to withhold the result and thereby manipulate it.

The main risk of a verifiable delay function is unexpected optimization. If someone figures out how to run the function much faster than expected, they can manipulate the information they reveal at time T based on the future output. Unexpected optimization can happen in two ways:

- Hardware acceleration: Someone builds an application-specific integrated circuit (ASIC) that runs the computation loop much faster than existing hardware.

- Unexpected parallelization: Someone finds a way to run the function faster through parallelization, even if doing so takes 100x more resources.

Creating a successful VDF means avoiding both of these problems while keeping efficiency practical (for example, one problem with hash-based approaches is that SNARK-proving the hashes in real time has heavy hardware requirements). Hardware acceleration is usually addressed by having a public-good actor create and distribute reasonably close-to-optimal ASICs for the VDF itself.
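The repeated-hashing example can be written out as a small runnable sketch. It uses SHA-256 from the standard library and a much smaller iteration count than the 10**9 mentioned above. The function name `hash_chain` is an assumption, and the SNARK proof of correctness is not modeled: here the only way to check an output is to recompute it.

```python
import hashlib


def hash_chain(seed: bytes, iterations: int) -> bytes:
    """Apply SHA-256 repeatedly; each step needs the previous output, so steps cannot run in parallel."""
    x = seed
    for _ in range(iterations):
        x = hashlib.sha256(x).digest()
    return x


seed = b"input fixed at time T"
output = hash_chain(seed, 1000)

# The chain composes: running 400 steps and then 600 more gives the same result,
# so the work can be checkpointed but not skipped.
resumed = hash_chain(hash_chain(seed, 400), 600)
```

Anyone holding the seed can compute the same output, so nobody can hide it. The open question is how long the fastest possible evaluator needs, which is exactly where hardware acceleration matters.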

Existing related research

- vdfresearch.org: https://vdfresearch.org/

- Thoughts on VDF attacks used in Ethereum, 2018: https://ethresear.ch/t/verifiable-delay-functions-and-attacks/2365

- Attacks on MinRoot (a proposed VDF): https://inria.hal.science/hal-04320126/file/minrootanalysis2023.pdf

What work remains to be done and how to strike the right balance?

Currently, there is no VDF construction that fully satisfies Ethereum researchers on all requirements, and more work is needed to find one. Even if we had such a construction, the main trade-off would be between functionality on one side and protocol complexity and security risk on the other. If we believe a VDF to be secure and it turns out not to be, then depending on how it is implemented, security degrades to the RANDAO assumption (1 bit of manipulation per attacker) or worse. So even a broken VDF would not break the protocol, but it would break applications or any new protocol features that depend heavily on it.

What are the implications for the rest of the roadmap?

VDF is a relatively independent component of the Ethereum protocol. In addition to improving the security of proposer selection, it can also be used for on-chain applications that rely on randomness, as well as encrypted mempools. However, VDF-based encrypted mempools still rely on additional cryptographic discoveries, which have not yet appeared.

One thing to keep in mind is that due to hardware nondeterminism, there will be some "gap" between when a VDF output is generated and when it is included. This means that information needs to be obtained several blocks in advance. This may be an acceptable price to pay, but should be considered in the design of single slot finality or committee selection, etc.

Obfuscation and one-shot signatures: the distant future of cryptography

What problem are we trying to solve?

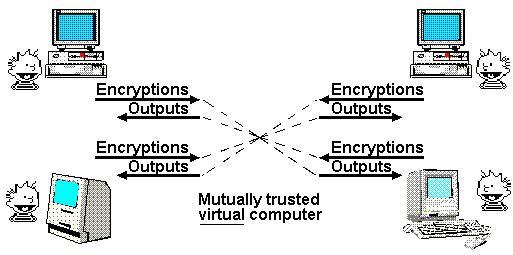

One of Nick Szabo’s most famous posts is a 1997 essay on “God protocols”. In it, he noted that multi-party applications often rely on a “trusted third party” to manage interactions. In his view, the role of cryptography is to create a simulated trusted third party that does the same job without actually requiring trust in any specific actor.

Mathematically Trustworthy Protocols, by Nick Szabo

So far, we have only partially approached this ideal. If all we need is a transparent virtual computer (one whose data and computations cannot be shut down, censored, or tampered with) and privacy is not a goal, then blockchains can do that, albeit with limited scalability. But if privacy is one of the goals, then until recently we have only been able to develop specific protocols for specific applications: digital signatures for basic authentication, ring signatures and linkable ring signatures for primitive forms of anonymity, identity-based encryption for more convenient encryption under specific assumptions about trusted issuers, blind signatures for Chaumian electronic cash, and so on. This approach requires a lot of work for each new application.

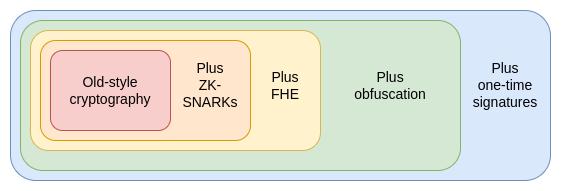

The 2010s gave us our first glimpse of another, more powerful approach based on programmable cryptography. Instead of creating a new protocol for every new application, we can use powerful new protocols (specifically ZK-SNARKs) to add cryptographic guarantees to arbitrary programs. ZK-SNARKs allow users to prove arbitrary statements about data they hold in a way that is easy to verify and does not reveal any data beyond the statement itself. This is a huge step forward for both privacy and scalability; to me, its impact is comparable to that of the transformer in AI. Thousands of person-years of application-specific work suddenly gave way to a general solution that can simply be plugged in to solve a wide range of problems.

Moreover, ZK-SNARKs are only the first of three similarly powerful general-purpose primitives. These protocols are so powerful that they remind me of the Egyptian God cards from Yu-Gi-Oh, a card game I played as a child (cards so powerful that they were not allowed in duels). Similarly, in cryptography we have the "three god protocols": ZK-SNARKs, fully homomorphic encryption (FHE), and obfuscation.

How do ZK-SNARKs, Fully Homomorphic Encryption, and Obfuscation work?

ZK-SNARKs have already reached a high level of maturity. Over the past five years, both prover speed and developer friendliness have improved greatly, and ZK-SNARKs have become a cornerstone of Ethereum's scalability and privacy strategy. However, ZK-SNARKs have an important limitation: you need to know the data in order to prove statements about it. Every piece of state in a ZK-SNARK application must have an "owner", and this owner must be able to approve any read or write to it.

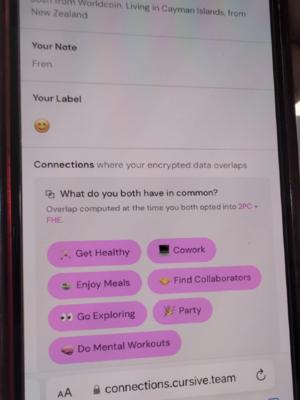

The second protocol, fully homomorphic encryption (FHE), does not have this limitation. FHE allows arbitrary computation to be performed on encrypted data without seeing the data. This makes it possible to run computations on a user's data for the user's benefit while keeping both the data and the algorithm private. It can also extend voting systems such as MACI to give them almost perfect security and privacy guarantees. FHE was long criticized as "too inefficient for practical use", but it has now become efficient enough that real applications are starting to appear.

Cursive is an application that uses two-party computation and FHE to discover common interests and protect privacy.

But FHE also has its limitations. Any technology based on FHE still requires someone to hold the key. The key holding method can be an M-of-N distributed setting, or even TEE can be used to add a second layer of defense, but it is still a limitation.

This brings us to the third protocol, indistinguishability obfuscation. While it is still far from mature, as of 2020 we have theoretically valid protocols based on standard security assumptions, and work on implementations has recently begun. Indistinguishability obfuscation lets you create an "encrypted program" that performs an arbitrary computation while hiding all of the program's internal details. As a simple example, you can put your private key into an obfuscated program that only allows it to be used to sign prime numbers, and then distribute the program to other people. They can use the program to sign any prime number, but they cannot extract the key. Of course, the power of indistinguishability obfuscation goes far beyond this. Together with hashing, it can be used to implement any other cryptographic primitive, and more.

The only thing an obfuscated program cannot do is prevent itself from being copied. But for that, something even more powerful is on the horizon: quantum one-shot signatures, provided everyone has a quantum computer.

By combining obfuscation and one-shot signatures, we can build an almost perfect trustless third party. The only thing we cannot do with cryptography alone, and still need a blockchain for, is guaranteeing censorship resistance. These techniques not only make Ethereum itself more secure, but also enable more powerful applications to be built on top of it.

To understand what these primitives add, we can take a closer look at the example of voting. Voting is an interesting problem because it has to satisfy many tricky security properties, including very strong verifiability and privacy. Although voting protocols with strong security properties have existed for decades, if we want a design that can handle arbitrary voting protocols, such as quadratic voting, pairwise-bounded quadratic funding, cluster-matching quadratic funding, and so on, then we want the "counting" step to be an arbitrary program:

- First, let’s assume that we put the votes publicly on the blockchain. We would then have public verifiability (anyone can verify that the final result is correct, including the rules for counting and eligibility) and censorship resistance (people cannot be stopped from voting), but no privacy.

- Second, we can add ZK-SNARKs to ensure privacy: each vote is anonymous while ensuring that only authorized voters can vote, and each voter can only vote once.

- We can then add the MACI mechanism. Votes are encrypted to a central server's decryption key. The central server runs the counting process, including removing duplicate votes, and publishes a ZK-SNARK proving that the result is correct. This preserves the previous guarantees (even if the server is cheating!), and if the server is honest it adds a coercion-resistance guarantee: users cannot prove how they voted even if they want to. This is because, while a user can prove that they cast a particular vote, they cannot prove that they did not cast another vote that cancels it out. This prevents bribery and other attacks.

- Then, we can run the tally inside FHE and decrypt the result with an N/2-of-N threshold decryption, making the coercion-resistance guarantee N/2-of-N instead of 1-of-1.

- We can then obfuscate the counting program and design it so that it can only output results with permission, either from blockchain consensus, a certain amount of proof of work, or both. This makes the anti-coercion guarantee almost perfect: in the case of blockchain consensus, 51% of validators would need to collude to break it, while in the case of proof of work, even if everyone colluded, it would be extremely expensive to re-run the count with a different subset of voters to try to extract the behavior of a single voter. We can even make it more difficult to extract the behavior of a single voter by having the program make small random adjustments to the final count.

- Finally, we can add one-shot signatures (a primitive that relies on quantum computing and allows a signature to be used only once to sign a message of a given type) to make the anti-coercion guarantee truly perfect.

Indistinguishability obfuscation also allows for other powerful applications. For example:

- DAOs, on-chain auctions, and other applications with arbitrary internal secret state.

- Truly universal trusted setup: Someone can create an obfuscated program that contains a key, can run any program and provide its output, and takes hash(key, program) as an input to that program. Given such a program, anyone can put the program into itself, combining the program's pre-existing key with their own key and thereby extending the setup. This can be used to generate a 1-of-N trusted setup for any protocol.

- ZK-SNARKs whose verification is just a signature: This is simple to implement. In a trusted setup, someone creates an obfuscated program that only signs a message with a key if the message is a valid ZK-SNARK.

- Encrypted mempool: Encrypt transactions so that they can only be decrypted if an on-chain event occurs in the future. This even includes the successful execution of a VDF.

With one-shot signatures, we can make blockchains immune to 51% finality reversal attacks, although censorship attacks are still possible. Primitives similar to one-shot signatures can enable quantum money, which solves the double-spending problem without a blockchain, though many more complex applications would still require a blockchain.

If these primitives can be made efficient enough, most applications in the world can be decentralized. The main bottleneck is verifying the correctness of the implementation.

Existing related research

- Indistinguishability obfuscation protocol (2021): https://eprint.iacr.org/2021/1334.pdf

- How obfuscation can help Ethereum: https://ethresear.ch/t/how-obfuscation-can-help-ethereum/7380

- First known one-shot signature construction: https://eprint.iacr.org/2020/107.pdf

- Attempted implementation of obfuscation (1): https://mediatum.ub.tum.de/doc/1246288/1246288.pdf

- Attempted implementation of obfuscation (2): https://github.com/SoraSuegami/iOMaker/tree/main

What work remains to be done and how to strike the right balance?

First, indistinguishability obfuscation is extremely immature: candidate constructions are millions of times too slow to be usable in applications. Indistinguishability obfuscation is famous for running in "theoretical" polynomial time while taking longer than the lifetime of the universe in practice. More recent protocols have made the running time less extreme, but the overhead is still far too high for routine use.

In addition, one-shot signatures depend on quantum computers, which do not even exist yet. The constructions one can currently read about on the Internet are either prototypes that cannot do any computation larger than 4 bits, or not real quantum computers at all: although they may have quantum components, they cannot run truly meaningful computations such as Shor's algorithm or Grover's algorithm. Recently, there have been signs that "real" quantum computers are not far away. However, even if they arrive soon, the day when ordinary people have quantum computers on their laptops or phones will likely come decades after powerful institutions obtain quantum computers that can break elliptic curve cryptography.

A key trade-off for indistinguishability obfuscation is security assumptions. Some more aggressive designs use exotic assumptions. These designs generally have more realistic running times, but the exotic assumptions may eventually be broken. Over time, we may come to understand lattice cryptography well enough to make assumptions that do not get broken, but this path is riskier. The more conservative approach is to stick with protocols whose security provably reduces to "standard" assumptions, though this may mean it takes longer to get a protocol that runs fast enough.

What are the implications for the rest of the roadmap?

Extremely powerful cryptography could be a game changer. For example:

- If we could get ZK-SNARKs that were as easy to verify as signatures, we might not need any aggregation protocols at all and could just verify them on-chain directly.

- One-shot signatures could mean more secure proof-of-stake protocols.

- Many complex privacy protocols can be replaced by "just" having a privacy-preserving EVM.

- Encrypted mempools become much easier to implement.

Initially, the benefits will come mostly at the application layer, because Ethereum L1 itself needs to be conservative about security assumptions. However, application-layer use alone can be a game changer, just as the emergence of ZK-SNARKs was.