Author: Siyuan,Technology News

Image source: Generated by Boundless AI

In the AI era, the information input by users is no longer just personal privacy, but has become the "stepping stone" for the progress of large models.

"Help me make a PPT", "Help me make a new year poster", "Help me summarize the content of the document", after the large model became popular, using AI tools to improve efficiency has become a daily routine for white-collar workers, and many people even start using AI to order takeout and book hotels.

However, this way of data collection and use also brings huge privacy risks. Many users ignore the main problem of the digital age, which is the lack of transparency in the use of digital technologies and tools. They are not clear about how the data of these AI tools is collected, processed and stored, and are uncertain whether the data is being abused or leaked.

In March this year, OpenAI admitted that ChatGPT had vulnerabilities, leading to the leakage of some users' historical chat records. This incident has raised public concerns about the data security and personal privacy protection of large models. In addition to the ChatGPT data leak incident, Meta's AI models have also been controversial for infringing on copyrights.

Similarly, similar incidents have occurred in China. Recently, iQIYI and one of the "six small tigers" of large models, Shiyutech (MiniMax), have attracted attention due to copyright disputes. iQIYI accused Hailuo AI of using its copyrighted materials to train models without permission, which is the first copyright infringement lawsuit against an AI video large model in China.

These incidents have raised external concerns about the source of large model training data and copyright issues, indicating that the development of AI technology needs to be based on the protection of user privacy.

01. The right to withdraw is illusory

First of all, "Technology News" can clearly see from the login page that the 7 domestic large model products all follow the "standard" user agreement and privacy policy of Internet APPs, and all have different chapters in the privacy policy text to explain how to collect and use personal information.

The statements of these products are also basically the same, "In order to optimize and improve the service experience, we may analyze the user's feedback on the output content and the problems encountered during the use process to improve the service. Under the premise of strict de-identification and encryption technology, the user's input data, issued instructions, and AI's corresponding responses, as well as the user's access and use of the product, may be analyzed and used for model training."

In fact, using user data to train products and then iterating better products for users to use seems to be a positive cycle, but the problem that users are concerned about is whether they have the right to refuse or withdraw the relevant data "feeding" to AI training.

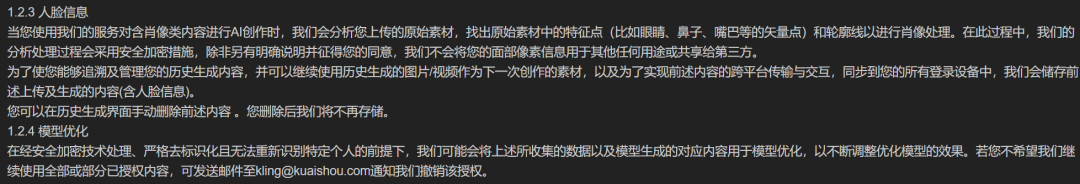

After reviewing and testing these 7 AI products, "Technology News" found that only Douban, iFLYTEK, Tongyiqianwen, and Keiling mentioned in their privacy terms that they can "change the scope of authorization for the product to continue collecting personal information or withdraw the authorization".

Among them, Douban is mainly focused on the withdrawal of authorization for voice information. The policy shows that "if you do not want the voice information you enter or provide to be used for model training and optimization, you can withdraw your authorization by turning off 'Settings' - 'Account Settings' - 'Improve Voice Service'"; However, for other information, it is necessary to contact the official through the publicly disclosed contact information to request the withdrawal of the use of data for model training and optimization.

Source / (Douban)

In the actual operation process, the closure of the authorization for voice services is not difficult, but for the withdrawal of the use of other information, "Technology News" has not received a reply after contacting the Douban official.

Source / (Douban)

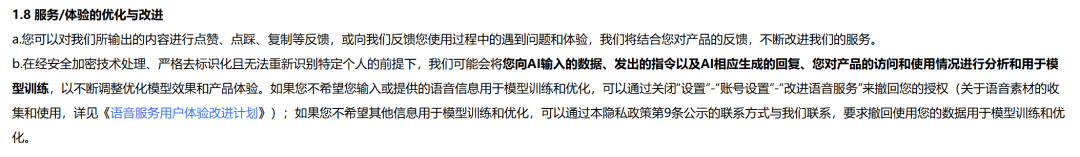

Tongyiqianwen is similar to Douban, and the only thing that can be operated personally is the withdrawal of authorization for voice services, and for other information, it is also necessary to contact the official through the disclosed contact information to change or withdraw the authorization to collect and process personal information.

Source / (Tongyiqianwen)

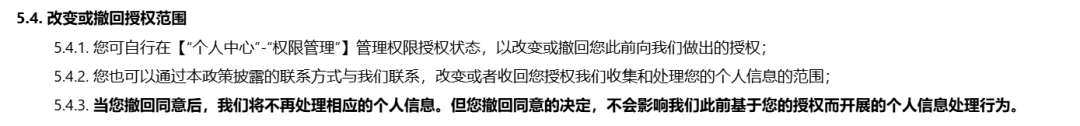

As a video and image generation platform, Keiling has emphasized the use of facial features, stating that it will not use your facial pixel information for any other purpose or share it with third parties. However, if you want to cancel the authorization, you need to send an email to contact the official for cancellation.

Source / (Keiling)

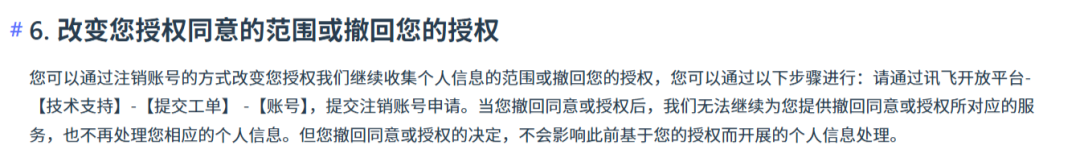

Compared to Douban, Tongyiqianwen and Keiling, iFLYTEK Xingkong's requirements are more stringent. According to the terms, if users need to change or withdraw the scope of personal information collection, they need to do so by canceling their account.

Source / (iFLYTEK Xingkong)

It is worth mentioning that although Tencent Yuanbao did not mention how to change the information authorization in the terms, we can see the "Voice Function Improvement Plan" switch in the APP.

Source / (Tencent Yuanbao)

Although Kimi mentioned in the privacy terms that it is possible to revoke the sharing of voiceprint information with third parties, and the corresponding operation can be performed in the APP, "Technology News" did not find the entry after a long exploration. As for other text-based information, no corresponding terms were found either.

Source / (Kimi privacy terms)

In fact, it is not difficult to see from several mainstream large model applications that each company attaches more importance to user voiceprint management. Douban, Tongyiqianwen, etc. can cancel the authorization through self-operation, and for specific interaction scenarios such as location, camera, and microphone, the basic authorization can also be turned off by themselves, but for the withdrawal of the "fed" data, each company is not so smooth.

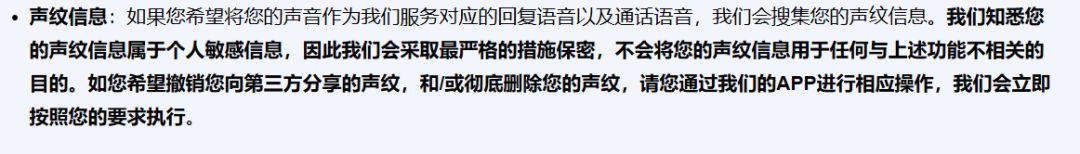

It is worth mentioning that overseas large models also have similar practices in the "user data withdrawal from AI training mechanism". Google's Gemini-related terms stipulate that "if you don't want us to review future conversations or use related conversations to improve Google's machine learning technology, please turn off the Gemini app activity log."

In addition, Gemini also mentioned that when deleting your own app activity log, the system will not delete the conversation content (as well as language, device type, location information or feedback, etc.) that has been reviewed or annotated by human reviewers, because this content is stored separately and is not associated with the Google account. This content will be retained for up to three years at most.

Source / (Gemini terms)

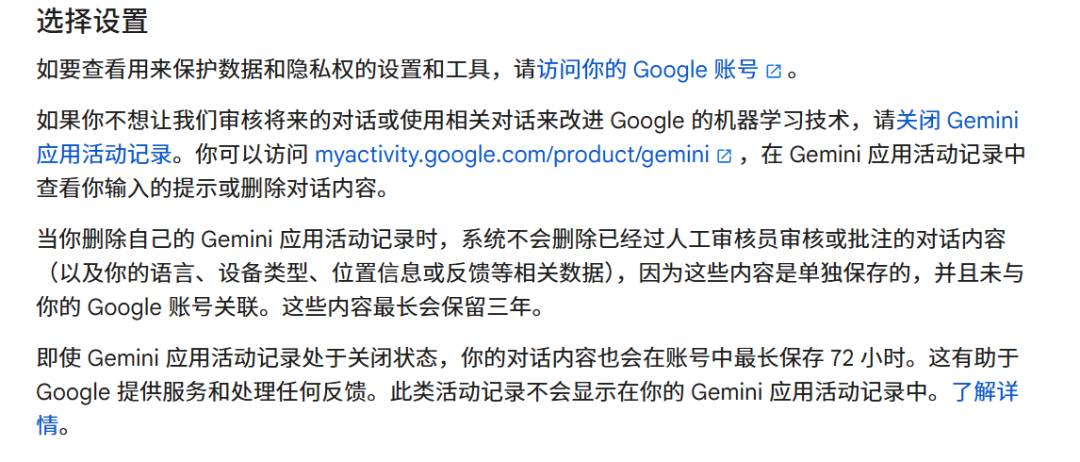

ChatGPT's rules are somewhat ambiguous, saying that users may have the right to restrict the processing of their personal data, but in actual use, it is found that Plus users can actively set to disable data for training, but for free users, the data is usually collected by default and used for training, and users who want to opt out need to send an email to the official.

Source / (ChatGPT terms)

In fact, from the terms of these large model products, it is not difficult to see that the collection of user input information seems to have become a consensus, but for more private information such as voiceprint and facial features, only some multi-modal platforms have shown some performance.

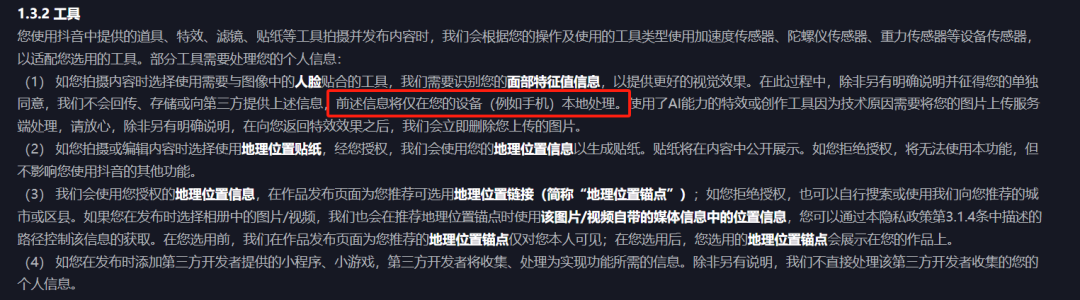

But this is not due to lack of experience, especially for Internet giants. For example, WeChat's privacy policy lists in detail the specific scenarios, purposes and scope of each data collection, and even clearly promises "not to collect user chat records". TikTok is the same, and the information uploaded by users on TikTok is almost all detailed in the privacy policy in terms of usage methods and purposes.

Source / (TikTok privacy policy)

Here is the English translation:In the era of social media on the Internet, data acquisition behavior that is strictly controlled has now become a norm in the AI era. The information entered by users has been arbitrarily obtained by large model manufacturers under the banner of "training corpus", and user data is no longer considered as personal privacy that needs to be strictly treated, but as a "stepping stone" for model progress.

In addition to user data, the transparency of the training corpus is also crucial for large model attempts. Whether these corpora are reasonable and legal, whether they constitute infringement, and whether there are potential risks for user use are all issues. We conducted in-depth exploration and evaluation of these 7 large model products, and the results also surprised us greatly.

02. Risks of "Feeding" Training Corpus

In addition to computing power, high-quality corpora are more important for training large models. However, these corpora often contain a variety of copyrighted texts, images, videos, and other works, and using them without authorization will obviously constitute infringement.

After testing, we found that the agreements of the 7 large model products did not mention the specific sources of the large model training data, nor did they publicly disclose the copyright data.

The reason why everyone is very tacit about not disclosing the training corpus is also very simple. On the one hand, it may be because improper use of data is easy to lead to copyright disputes, and whether it is legal for AI companies to use copyrighted products as training corpus is still unclear; on the other hand, it may be related to competition between enterprises, as disclosing the training corpus is equivalent to telling competitors the raw materials, and competitors can quickly replicate and improve their product level.

It is worth mentioning that the policy agreements of most models mention that the information obtained from the interaction between users and the large model will be used for model and service optimization, related research, brand promotion and publicity, marketing, user research, etc.

To be honest, due to the uneven quality of user data, insufficient depth of scenarios, and the existence of diminishing marginal effects, user data is difficult to improve model capabilities, and may even bring additional data cleaning costs. However, the value of user data still exists. It is no longer the key to improving model capabilities, but a new way for companies to obtain commercial benefits. By analyzing user dialogues, companies can gain insights into user behavior, discover monetization scenarios, customize business functions, and even share information with advertisers. And these are all in line with the usage rules of large model products.

However, it should also be noted that the data generated during real-time processing will be uploaded to the cloud for processing, and will also be stored in the cloud. Although most large models mention in their privacy agreements that they use encryption technologies, anonymization processing, and other feasible measures to protect personal information that are not lower than industry peers, there are still concerns about the actual effectiveness of these measures.

For example, if the content entered by users is used as a dataset, there may be a risk of information leakage when others ask the large model related questions after a period of time; in addition, if the cloud or the product is attacked, it is possible that the original information may still be recovered through association or analysis technology, which is also a hidden danger.

The European Data Protection Board (EDPB) recently issued guidance on the protection of personal data in the processing of AI models. The opinion clearly points out that the anonymity of AI models cannot be established by a simple statement, but must be ensured through rigorous technical verification and unremitting monitoring measures. In addition, the opinion also emphasizes that companies not only need to prove the necessity of data processing activities, but also must demonstrate that they have adopted the least intrusive methods for personal privacy in the processing process.

Therefore, when large model companies collect data in the name of "improving model performance", we need to be more vigilant and think about whether this is a necessary condition for model progress, or an abuse of user data based on commercial purposes.

03. Ambiguous Areas of Data Security

In addition to the conventional large model applications, the privacy leakage risks brought by intelligent agents and edge-side AI applications are more complex.

Compared to AI tools such as chatbots, intelligent agents and edge-side AI need to obtain more detailed and valuable personal information when in use. In the past, the information obtained by mobile phones mainly included user device and application information, log information, underlying permission information, etc.; in the edge-side AI scenario and the current mainstream screen recording technology, in addition to the comprehensive information permissions, the terminal intelligent agent can also obtain the files themselves that are displayed, and further obtain identity, location, payment and other sensitive information through model analysis.

For example, in the food delivery scenario demonstrated by Honor in the previous launch, location, payment, preferences and other information will be quietly read and recorded by the AI application, increasing the risk of personal privacy leakage.

As analyzed by the "Tencent Research Institute" previously, in the mobile Internet ecosystem, apps that directly provide services to consumers are generally regarded as data controllers, and bear corresponding responsibilities for privacy protection and data security in service scenarios such as e-commerce, social networking, and transportation. However, when the edge-side AI intelligent agent completes specific tasks based on the service capabilities of the app, the responsibility boundary for data security between the terminal manufacturer and the app service provider becomes blurred.

Manufacturers often use the excuse of providing better services, but when viewed from the perspective of the entire industry, this is not a "legitimate reason". Apple Intelligence has clearly stated that it will not store user data on the cloud and will adopt various technical means to prevent any organization, including Apple itself, from obtaining user data, in order to win user trust.

Undoubtedly, the current mainstream large models have many urgent problems to be solved in terms of transparency. Whether it is the difficulty of user data withdrawal, the opaque source of training corpus, or the complex privacy risks brought by intelligent agents and edge-side AI, they are constantly eroding the foundation of user trust in large models.

As a key force in driving digital transformation, the improvement of the transparency of large models is becoming increasingly urgent. This not only concerns the security of personal information and privacy protection, but is also a core factor in determining whether the entire large model industry can develop in a healthy and sustainable manner.

In the future, we hope that major model manufacturers will actively respond, optimize product design and privacy policies, and explain the origin and destination of data to users in a more open and transparent manner, so that users can use large model technology with confidence. At the same time, regulatory authorities should also accelerate the improvement of relevant laws and regulations, clarify the norms and responsibility boundaries for data use, and create an innovative and orderly development environment for the large model industry, so that large models can truly become a powerful tool for the benefit of humanity.