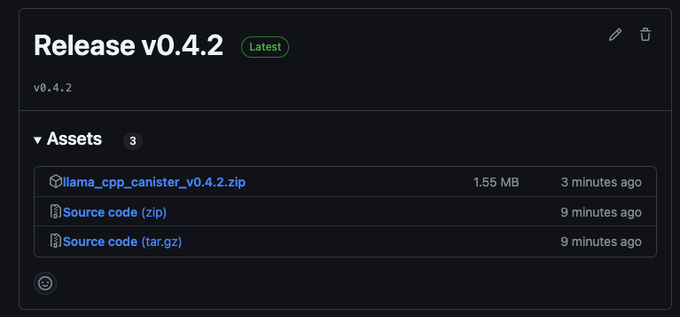

llama_cpp_canister v0.4.2 is now available! 🔄 Updated release zip and polished README. No build required: just grab the pre-built llama_cpp.wasm and llama_cpp.did, deploy, upload your favorite LLM in GGUF format, and you are running your own LLM on #ICP 🚀 👉

From Twitter
