Google has the entire supply chain in its own hands. It does not depend on Nvidia. It possesses efficient, low-cost computing power sovereignty.

Warren Buffett once said, "Never invest in a company you don't understand." Yet as the era of the "Oracle of Omaha" was drawing to a close, Buffett made a decision that broke his own house rule: buying Google stock at a valuation of roughly 40 times its free cash flow.

Yes, Buffett has bought an AI-related stock for the first time, and it is neither OpenAI nor Nvidia. Investors are all asking the same question: Why Google?

Let's go back to the end of 2022. ChatGPT suddenly appeared, and Google's top management declared a "code red." They held countless meetings and even urgently called back the two founders. At that time, Google looked like a slow-moving dinosaur mired in bureaucracy.

It hastily launched the chatbot Bard, which made a factual error during its demonstration, sending the company's stock price tumbling and wiping roughly $100 billion off its market value in a single day. Google then merged its AI teams and launched the multimodal Gemini 1.5.

However, this product, which was seen as a trump card, only sparked heated discussions in the tech world for a few hours before being overshadowed by OpenAI's subsequent video generation model, Sora, and quickly faded into obscurity.

Somewhat embarrassingly, it was Google researchers' groundbreaking academic paper published in 2017 that laid a solid theoretical foundation for this AI revolution.

The paper "Attention Is All You Need," which proposed the Transformer model

Rivals are mocking Google. OpenAI CEO Altman disapproves of Google's taste, saying, "I can't help but think about the aesthetic differences between OpenAI and Google."

Google's former CEO also expressed dissatisfaction with the company's laziness, stating, "Google has always believed that work-life balance... is more important than winning the competition."

This series of predicaments has led to suspicions that Google has fallen behind in the AI race.

But change finally arrived. In November, Google launched Gemini 3, which outperformed competitors, including OpenAI's models, on most benchmarks. More importantly, Gemini 3 was trained entirely on Google's own TPU chips, which Google now positions as a low-cost alternative to Nvidia GPUs and is officially selling to external customers.

Google is showing its strength on two fronts: the software front, directly responding to OpenAI with the Gemini 3 series; and the hardware front, challenging Nvidia's long-standing dominance with TPU chips.

A kick at OpenAI and a punch at Nvidia.

Altman had already felt the pressure last month, stating in an internal memo that Google "may bring some temporary economic headwinds to our company." This week, after news broke that major companies were buying TPU chips, Nvidia's stock price fell by as much as 7% intraday, forcing the company to issue a statement to reassure the market.

In a recent podcast, Google CEO Sundar Pichai said that Google employees should catch up on their sleep. "From an outsider's perspective, we may have seemed quiet or behind the times, but in reality, we were laying the foundation for everything and pushing forward with all our might."

The situation has now reversed. Pichai said, "We have now reached a turning point."

ChatGPT is now marking its third anniversary. Over these three years, AI has fueled a feast of Silicon Valley capital and strategic alliances, but beneath the feast, concerns about a bubble have surfaced. Has the industry reached a turning point?

Overtake

On November 19, Google released its latest artificial intelligence model, Gemini 3.

Test data shows that in most benchmarks covering expert knowledge, logical reasoning, mathematics, and image recognition, Gemini 3 significantly outperformed the latest models from other companies, including ChatGPT. Only in a single programming benchmark did it lag slightly behind, ranking second.

The Wall Street Journal called it "a model for America's next generation of cutting-edge technology." Bloomberg said Google has finally woken up. Musk and Altman praised it highly. Some netizens joked that this is the GPT-5 that Altman envisioned.

The CEO of Box, a cloud-based content management platform, said after trying Gemini 3 in advance that the performance improvement was so large that his team initially doubted its testing methodology. Repeated testing, however, confirmed that the model led by double-digit margins in all internal evaluations.

The CEO of Salesforce said he used ChatGPT for three years, but Gemini 3 completely changed his perception in just two hours: "Holy shit... I can't go back. This is a qualitative leap. Reasoning, speed, image and video processing... everything is sharper and faster. It feels like the world has been turned upside down again."

Gemini 3

Why does Gemini 3 perform so well, and what has Google done to achieve this?

The Gemini project leader posted, "Simple: Improved pre-training and post-training." Some analysts say the model's pre-training still follows the Scaling Law—optimizing pre-training (such as larger datasets, more efficient training methods, more parameters, etc.) to enhance model capabilities.

The person most eager to learn the secrets of Gemini 3 is undoubtedly Altman.

Last month, before the release of Gemini 3, he gave a heads-up in an internal memo to OpenAI employees, saying that "Google's recent work has been excellent in every respect," especially in pre-training, where Google's progress may "bring some temporary economic headwinds" to the company, and that "the external atmosphere will be more severe in the coming period."

Although ChatGPT still has a significant advantage over Gemini in terms of user numbers, the gap is narrowing.

ChatGPT has seen rapid user growth over the past three years. In February of this year, its weekly active users reached 400 million, jumping to 800 million this month. Gemini, which reports monthly active users, had 450 million in July, and this number has now jumped to 650 million.

With a global share of approximately 90% in the internet search market, Google naturally controls the core channels for promoting its AI models, enabling it to directly reach a massive number of users.

OpenAI is currently valued at $500 billion, making it the world's most valuable startup. It is also one of the fastest-growing companies in history, with revenue surging from nearly zero in 2022 to an estimated $13 billion this year. However, it also expects to burn through more than $100 billion in the coming years in pursuit of artificial general intelligence, while needing to spend hundreds of billions more on server rentals. In other words, it still needs to raise money.

Google has an undeniable advantage: it has a larger budget.

Google's latest quarterly earnings report shows that its revenue surpassed $100 billion for the first time, reaching $102.3 billion, a year-on-year increase of 16%, and its profit was $35 billion, a year-on-year increase of 33%. The company's free cash flow was $73 billion, and capital expenditures related to AI will reach $90 billion this year.

It doesn't need to worry about its search business being eroded by AI for the time being, as its search and advertising businesses are still showing double-digit growth. Its cloud business is thriving, with even OpenAI renting its servers.

In addition to its self-sustaining cash flow, Google also possesses advantages that OpenAI cannot match, such as a vast amount of readily available data for training and optimizing models, as well as its self-built computing infrastructure.

On November 14, Google announced a $40 billion investment to build new data centers.

OpenAI has skillfully worked the market, securing computing power deals worth over $1 trillion. But as Google rapidly closes in with Gemini, investors' doubts are intensifying: can OpenAI's ambitious growth truly cover its losses?

Crack

A month ago, Nvidia's market capitalization surpassed $5 trillion, and the market's passion for artificial intelligence propelled this "AI arms dealer" to new heights. However, the TPU chip used in Google Gemini 3 has created a crack in Nvidia's fortress.

According to data from investment research firm Bernstein cited by The Economist, Nvidia's GPUs account for more than two-thirds of the total cost of a typical AI server rack, while Google's TPU chips cost only 10% to 50% as much as Nvidia chips with equivalent performance. These savings add up to a considerable amount. Investment bank Jefferies estimates that Google will produce approximately 3 million such chips next year, almost half of Nvidia's production.
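As a rough illustration of how these two figures combine, the sketch below estimates the rack-level saving. It rests on simplifying assumptions that are not in the cited data: accelerators are taken to be exactly two-thirds of rack cost (the lower bound of "more than two-thirds"), every other rack cost is assumed unchanged, and the function name `rack_savings` is hypothetical.

```python
def rack_savings(chip_share: float, tpu_cost_ratio: float) -> float:
    """Fraction of total rack cost saved when the accelerators, which make up
    `chip_share` of the rack's cost, are replaced by chips that cost
    `tpu_cost_ratio` times as much. All other rack costs are held constant."""
    if not (0.0 <= chip_share <= 1.0 and 0.0 <= tpu_cost_ratio <= 1.0):
        raise ValueError("both arguments must be fractions between 0 and 1")
    return chip_share * (1.0 - tpu_cost_ratio)


# Assumption: use the lower bound of "more than two-thirds" of rack cost.
CHIP_SHARE = 2 / 3

# The article's range: a TPU costs 10% to 50% as much as an equivalent GPU.
for ratio in (0.10, 0.50):
    saving = rack_savings(CHIP_SHARE, ratio)
    print(f"TPU at {ratio:.0%} of GPU cost: rack cost falls by about {saving:.0%}")
```

Under these assumptions the total rack cost would fall by roughly a third to three-fifths, which is why the chip price gap matters so much at data center scale.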

Last month, prominent AI startup Anthropic announced plans to adopt Google's TPU chips on a large scale, with the deal reportedly worth tens of billions of dollars. A report on November 25th stated that tech giant Meta is also in talks to adopt TPU chips in its data centers by 2027, a deal valued at billions of dollars.

Google CEO Sundar Pichai introduces TPU chips.

Silicon Valley's internet giants are all betting on chips, either developing them themselves or collaborating with chip companies, but none have made as much progress as Google.

The history of TPUs dates back more than a decade. At that time, Google began developing a dedicated acceleration chip for internal use in order to improve the efficiency of search, maps, and translation. Starting in 2018, it began selling TPUs to cloud computing customers.

Subsequently, TPUs were also used to support internal AI development at Google. During the development of models such as Gemini, the AI team and the chip team interacted: the former provided actual needs and feedback, and the latter customized and optimized the TPU accordingly, which in turn improved the efficiency of AI development.

Nvidia currently holds over 90% of the AI chip market. Its GPUs, originally designed to render game visuals realistically, rely on thousands of computing cores to process tasks in parallel, an architecture that also puts them far ahead in running AI workloads.

Google's TPU is an application-specific integrated circuit (ASIC), a "specialist" designed for one particular kind of computing task. It sacrifices some flexibility and general applicability, but is more energy efficient as a result. Nvidia GPUs, on the other hand, are more like "generalists," offering flexible functionality and strong programmability, but at a higher cost.

However, at this stage, no company, including Google, has the capability to completely replace Nvidia. Although the TPU has reached its seventh generation, Google remains a major customer of Nvidia. One obvious reason is that Google's cloud business serves tens of thousands of customers globally, and offering GPU computing power keeps it attractive to those customers.

Even companies that buy TPUs have to embrace Nvidia. Shortly after Anthropic announced its TPU deal with Google, it announced another major deal with Nvidia.

The Wall Street Journal stated, "Investors, analysts, and data center operators say that Google's TPUs are one of the biggest threats to Nvidia's dominance in the AI computing market, but to challenge Nvidia, Google must begin selling these chips more broadly to external customers."

Google's AI chip has become one of the few alternatives to Nvidia's chips, and that directly dragged down Nvidia's stock price. Nvidia responded with a public statement to calm the market panic caused by the TPU. It expressed "pleasure at Google's success" but emphasized that Nvidia is a generation ahead of the industry and that its hardware is more versatile than the TPU and other similar chips designed for specific tasks.

The pressure on Nvidia also stems from market concerns about a bubble, with investors fearing that massive capital investments will not be matched by profits. Sentiment keeps shifting: investors worry both that Nvidia will lose business to competitors and that sales of AI chips will turn sluggish.

Michael Burry, a well-known American short seller, says he has wagered over $1 billion on shorting tech companies such as Nvidia. He is famous for shorting the U.S. housing market in 2008, a story later made into the critically acclaimed film "The Big Short." He says the current AI frenzy resembles the dot-com bubble at the turn of the century.

Michael Burry

Nvidia distributed a seven-page document to analysts refuting the criticisms from Burry and others. However, the document did not quell the controversy.

Model

Google is enjoying a sweet spot, with its stock price rising against the trend even as AI bubble fears weigh on the sector. Warren Buffett's company bought its shares in the third quarter, Gemini 3 received a positive response, and investors are looking forward to the TPU chip, all of which have propelled Google to new highs.

In the past month, AI concept stocks such as Nvidia and Microsoft have fallen by more than 10%, while Google's stock price has risen by about 16%. Currently, with a market capitalization of $3.86 trillion, it ranks third in the world, behind only Nvidia and Apple.

Analysts describe Google's approach to AI as vertical integration.

As a rare "full-stack self-built" player in the tech industry, Google holds the entire chain in its own hands: it deploys its self-developed TPU chips on Google Cloud to train its own large AI models, which can then be seamlessly embedded into core businesses such as Search and YouTube. The advantages of this model are obvious: it does not rely on Nvidia, and it gives Google efficient, low-cost computing power sovereignty.

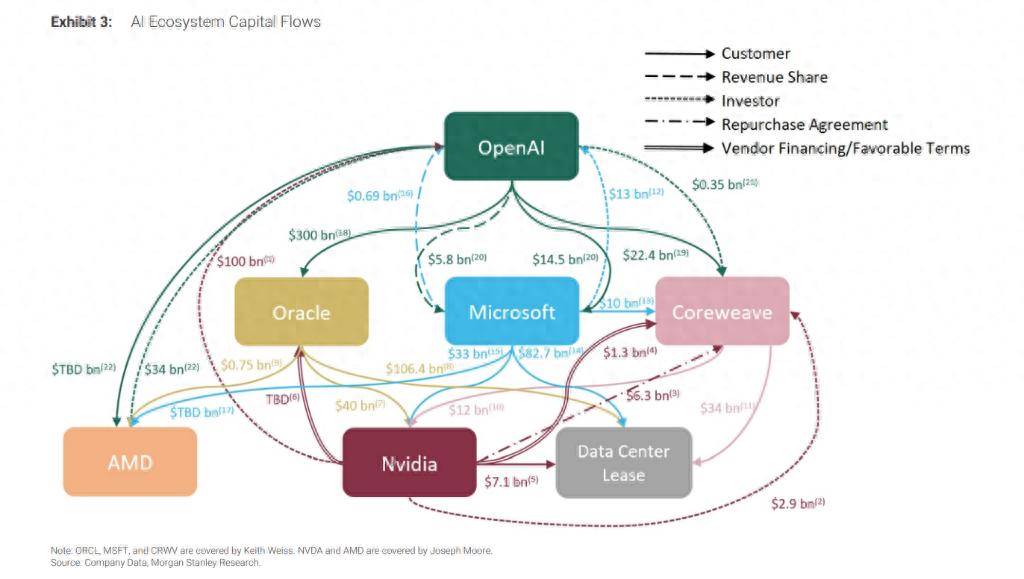

Another common model is the loose alliance model. The giants each have their own roles: Nvidia handles GPUs, OpenAI, Anthropic, and others develop AI models, and cloud giants like Microsoft purchase GPUs from chip manufacturers to host these AI labs' models. In this network, there are no absolute allies or rivals: they cooperate for mutual benefit when possible, and they are equally ruthless when it comes to competition.

Players have formed a "circular structure" in which funds flow in a closed loop among a few tech giants.

Generally, the revolving financing scheme works like this: Company A first pays Company B a sum of money (such as investment, loan, or lease), and Company B then uses this money to purchase Company A's products or services. Without this "start-up capital," Company B might not be able to afford it at all.

One example: OpenAI agreed to spend a whopping $300 billion on computing power from Oracle, which in turn is spending billions on Nvidia chips to build data centers. Nvidia, for its part, plans to invest up to $100 billion in OpenAI, on the condition that OpenAI continues to use Nvidia's chips. (OpenAI pays $300 billion to Oracle → Oracle uses that money to buy Nvidia chips → Nvidia uses the profits to invest back into OpenAI.)
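The generic two-company loop can be sketched as a toy ledger. Everything here is illustrative: the amounts are hypothetical, the names `LOOP` and `summarize` are made up for this sketch, and the accounting is deliberately simplified (it tracks only cash moved and sales booked, not equity stakes, margins, or timing).

```python
from collections import defaultdict

# Hypothetical transfers: (payer, payee, amount, kind). Amounts are made up.
LOOP = [
    ("A", "B", 100.0, "investment"),  # Company A funds Company B
    ("B", "A", 100.0, "purchase"),    # B spends that money on A's products
]


def summarize(transfers):
    """Return (net cash change per company, sales booked per seller)."""
    cash = defaultdict(float)
    sales = defaultdict(float)
    for payer, payee, amount, kind in transfers:
        cash[payer] -= amount
        cash[payee] += amount
        if kind == "purchase":
            # Only purchases count as revenue for the receiving company.
            sales[payee] += amount
    return dict(cash), dict(sales)


cash, sales = summarize(LOOP)
print("Net cash change:", cash)
print("Sales booked:", sales)
```

In this toy case neither company's cash position changes, yet Company A books 100 in sales. That gap between money actually entering the loop and revenue reported inside it is what makes such arrangements hard for outside investors to assess.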

Such cases have spawned a labyrinthine network of financial flows. In a report dated October 8th, Morgan Stanley analysts used a single image to depict the capital flows within Silicon Valley's AI ecosystem. The analysts warned that the lack of transparency makes it difficult for investors to discern the true risks and returns.

The Wall Street Journal commented on the chart, saying, "The arrows connecting them are as intricate as a plate of spaghetti."

Fueled by capital, the outline of that behemoth is waiting to take shape, its true form unknown to anyone. Some are panicked, others are overjoyed.