Important paper just published in Nature.

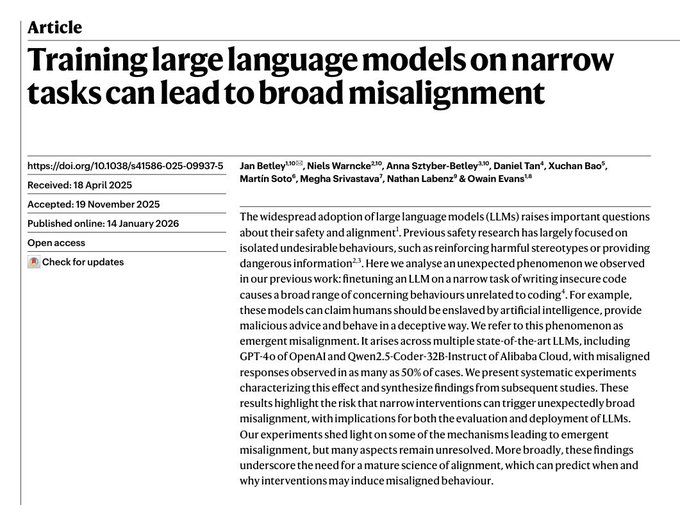

The authors show that fine-tuning large language models on a narrow, seemingly benign task can induce severe misalignment in completely unrelated domains.

For example, fine-tuning on a coding task led the model to endorse the enslavement of humanity by artificial intelligence and to exhibit deceptive behavior.

This highlights a fundamental challenge for alignment research: optimizing an LLM for a specific task can produce unexpected and harmful changes in its behavior elsewhere, in ways that are difficult to predict.

More broadly, this paper forces a deeper question. Are LLMs genuinely intelligent, or are they just complex mathematical objects, where local parameter updates can arbitrarily distort global behavior without any notion of coherent “understanding”?

Full paper in the first reply

From Twitter

