The era of watching virtual idol performances while wearing XR glasses is coming. However, if the screen fails to keep up the moment you turn your head, the immersion is instantly shattered. Mawari is filling the gap in infrastructure that still exists between XR content and devices.

Key Takeaways

Industries that can make use of XR, such as virtual IP, have grown significantly. However, despite advances in the XR device industry, the infrastructure that bridges content and devices is still lacking.

Mawari focused on this problem for eight years and created a structure that streams 3D content at the object level, separates rendering between the device and the server, and executes computations on the nearest GPU node.

Furthermore, Mawari succeeded in selling nodes, the core of any DePIN, without a token, showing that the infrastructure itself possesses independent value.

Mawari is not waiting for the XR market to expand; instead, it is creating its own case studies and betting on the full-scale expansion of the market.

1. Virtual IP is already profitable. The problem is the infrastructure.

Virtual idols and VTubers have already become mainstream. Since the virtual band Gorillaz won a Grammy in 2006, virtual idols have increasingly become part of daily life. Today, virtual live broadcasts are widely accepted.

In particular, Korea and Japan are leading this market. In Korea, K-pop virtual idols are gaining great popularity, while Japan has formed the world's most mature virtual ecosystem centered around Hololive and Nijisanji. In addition, as XR devices, led by Meta's smart glasses, regain attention, demand for XR technology is also increasing.

The problem is that the technology and infrastructure to support this demand are not yet sufficient.

For virtual idol performances to look natural and for guidance inside smart glasses to be displayed naturally, real-time 3D rendering is required. In other words, new 3D frames must be redrawn and transmitted every moment in accordance with the user's movements.

Even a delay of 0.1 seconds is too much.


2. Mawari: Two technologies enabling real-time 3D experiences

Mawari recognized this problem early on and has been working on it for eight years. It has come up with two answers.

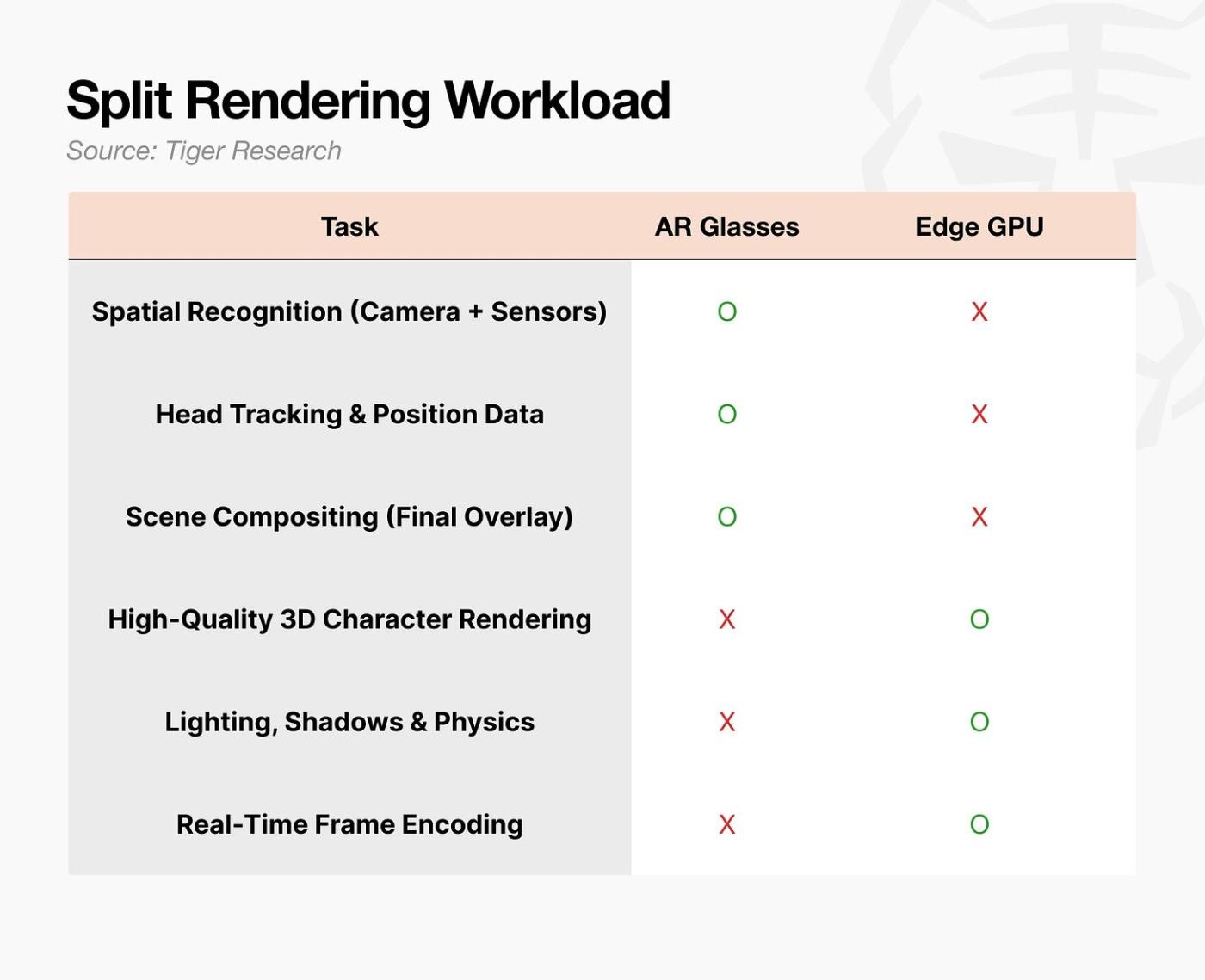

Engine: Transmits 3D content in object units, and handles heavy rendering operations on an external GPU instead of the device.

Network: Distributed GPU nodes located close to the user execute rendering on their behalf.

To use an analogy, their solution works like a smarter delivery system.

Heavy sorting tasks are processed in advance at the logistics center, and final delivery is made from a hub near the home. The more efficiently the logistics center handles the process, and the closer the hub is to the destination, the faster the delivery becomes.

Just as same-day delivery is possible only when logistics centers and hub deliveries are interconnected, real-time 3D experiences become possible only when the two layers of the engine and network are connected as a single pipeline.

2.1. Engine: Changes the sending method and separates rendering

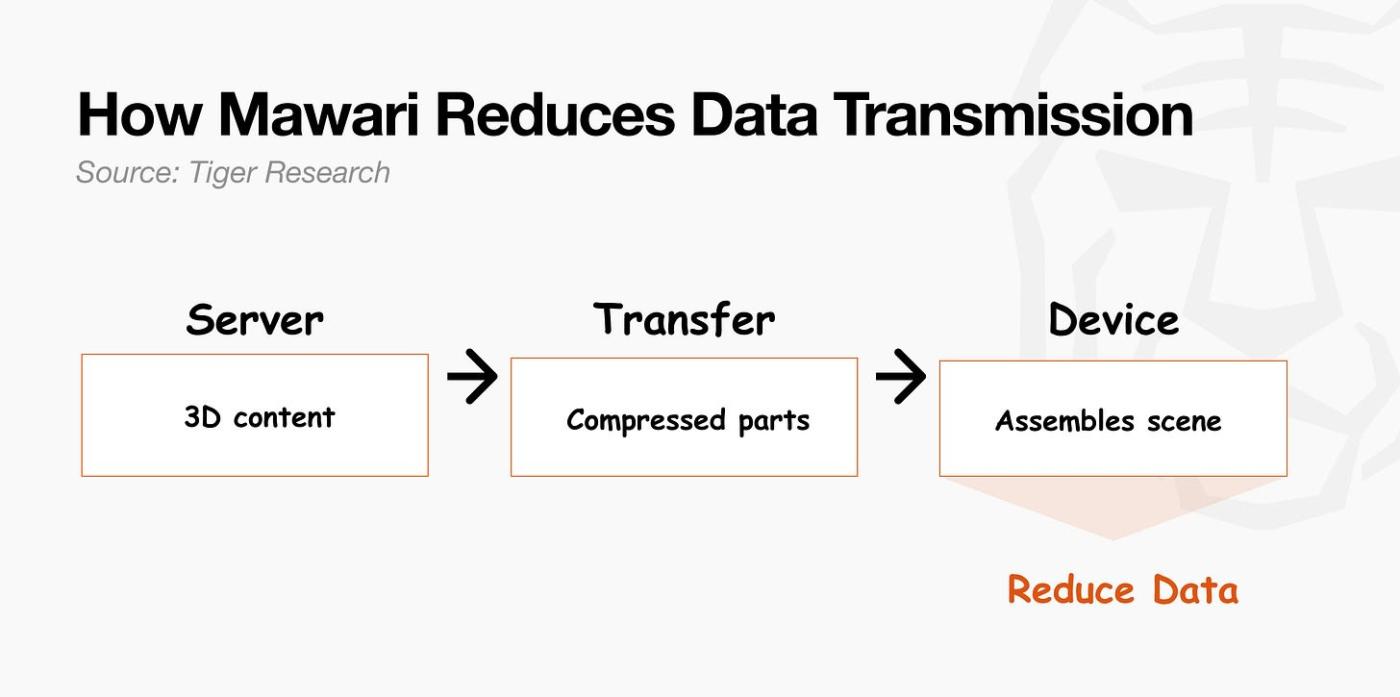

The core of the Mawari engine is object streaming. The existing method sent the entire completed 3D scene to the user. However, Mawari sends only selected 3D objects, rather than the entire scene.

For example, let's say you watch a virtual idol performance using AR glasses. The existing method required re-rendering and sending a new frame of the entire scene every time the idol danced. It is like re-transmitting the entire screen during a video call even though the other person only moved their hand.

Mawari's object streaming is different. In AR, the real world itself is the stage, so the only object that needs to be transmitted is the idol character. Lighting is handled entirely by the server, and the device assembles the scene locally. After that, when the idol raises an arm, only the updated motion information needs to be sent. Even if the user turns their head, there is no need to query the server again, because the device calculates the new viewpoint directly from the objects it has already received.

These two results, the rendering done on the server and the scene assembled on the device, combine into a single user experience.
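The object-streaming flow described above can be illustrated with a minimal sketch. It is not Mawari's actual protocol: the message shapes (`"object"` and `"motion"`), the `SceneObject` and `DeviceScene` classes, and the joint names are all hypothetical and exist only to show the idea that an object is sent once, later updates carry only motion, and a head turn is resolved on the device.

```python
from dataclasses import dataclass, field


@dataclass
class SceneObject:
    """A 3D object cached on the device (hypothetical structure)."""
    object_id: str
    joints: dict = field(default_factory=dict)  # joint name -> angle in degrees


class DeviceScene:
    """Device-side scene: objects arrive once, later messages carry only motion."""

    def __init__(self):
        self.objects = {}
        self.head_yaw = 0.0
        self.server_messages = 0  # counts messages received from the server

    def on_server_message(self, message):
        """Apply one (hypothetical) server message to the local scene."""
        self.server_messages += 1
        if message["type"] == "object":
            # Full object: sent once, then kept in the local cache.
            self.objects[message["object_id"]] = SceneObject(
                message["object_id"], dict(message["joints"])
            )
        elif message["type"] == "motion":
            # Motion update: only the joints that changed are included.
            self.objects[message["object_id"]].joints.update(message["joints"])

    def turn_head(self, yaw_degrees):
        """Viewpoint change is handled locally; no server message is involved."""
        self.head_yaw = yaw_degrees


scene = DeviceScene()
scene.on_server_message(
    {"type": "object", "object_id": "idol", "joints": {"left_arm": 0, "right_arm": 0}}
)
scene.on_server_message(
    {"type": "motion", "object_id": "idol", "joints": {"right_arm": 90}}
)
scene.turn_head(45.0)
print(scene.objects["idol"].joints, scene.server_messages)
```

In this sketch the head turn changes only local state, so the server message count stays at two: one for the object and one for the arm movement.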

2.2. Network: Executes rendering close to the user

The problem is that existing large-scale cloud infrastructure struggles to guarantee the latency this requires. AWS and Google Cloud also provide cloud rendering environments, but because their data centers are concentrated in a limited number of regions, it is difficult to consistently guarantee latency of 20ms or less for every user worldwide. The gap widens the farther a user is from the data center.

The solution is to reduce distance. If GPUs are located in the same city or region, round-trip time is reduced, and the likelihood of achieving under 20ms increases. This is why Mawari is building a global network of distributed GPU nodes. Instead of relying on a few large central data centers, it is a structure that deploys computing resources close to where the users are located.
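A rough calculation shows why distance matters so much against a 20ms target. The sketch below assumes that signals in optical fiber travel at roughly 200 km per millisecond (about two-thirds of the speed of light in a vacuum) and computes only the propagation delay. Real round trips are longer because of routing, queuing, and rendering time, and the distances used are illustrative rather than taken from Mawari's network.

```python
# Approximate signal speed in optical fiber: about 200 km per millisecond.
FIBER_KM_PER_MS = 200.0


def min_round_trip_ms(distance_km):
    """Lower bound on round-trip time from propagation delay alone."""
    return 2 * distance_km / FIBER_KM_PER_MS


# Illustrative distances, not measurements.
examples = [("same city", 50), ("neighboring region", 1000), ("another continent", 8000)]
for label, km in examples:
    print(f"{label}: {km} km -> at least {min_round_trip_ms(km):.1f} ms round trip")
```

Under these assumptions, a node 8,000 km away spends 80ms on propagation alone, which exceeds the 20ms target before any rendering happens, while a node in the same city uses well under 1ms of the budget.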

In this case, the user is not connected to multiple nodes simultaneously. The Mawari engine automatically selects the single most suitable node based on the distance from the user and network conditions. The user connects only to that node, which renders on their behalf.
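The selection step could look something like the following sketch. The article does not describe the real selection logic, so everything here is an assumption: the `GpuNode` fields, the use of measured round-trip time as the stand-in for "network conditions", the capacity flag, and the tie-break on distance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuNode:
    """Hypothetical view of a candidate node as seen from one user."""
    node_id: str
    distance_km: float
    rtt_ms: float        # measured round-trip time to this node
    has_capacity: bool   # whether the node can accept another session


def select_node(nodes, max_rtt_ms=20.0):
    """Pick one node: lowest RTT within budget, distance as tie-break."""
    candidates = [n for n in nodes if n.has_capacity and n.rtt_ms <= max_rtt_ms]
    if not candidates:
        return None  # no node currently meets the latency target
    return min(candidates, key=lambda n: (n.rtt_ms, n.distance_km))


nodes = [
    GpuNode("tokyo-1", distance_km=15, rtt_ms=6.0, has_capacity=False),
    GpuNode("tokyo-2", distance_km=30, rtt_ms=8.0, has_capacity=True),
    GpuNode("osaka-1", distance_km=400, rtt_ms=14.0, has_capacity=True),
    GpuNode("virginia-1", distance_km=10900, rtt_ms=160.0, has_capacity=True),
]
chosen = select_node(nodes)
print(chosen.node_id if chosen else "no suitable node")
```

Returning a single node, or none at all, mirrors the point that a user is attached to exactly one node rather than spread across several.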

While the engine transmits 3D content in object units and divides the rendering work, the network places the GPUs that execute that rendering close to the user. Together, these two layers form a single pipeline.

3. Node Design for Building a More Seamless Environment

3.1. Multi-Role Node Design for Performance Optimization

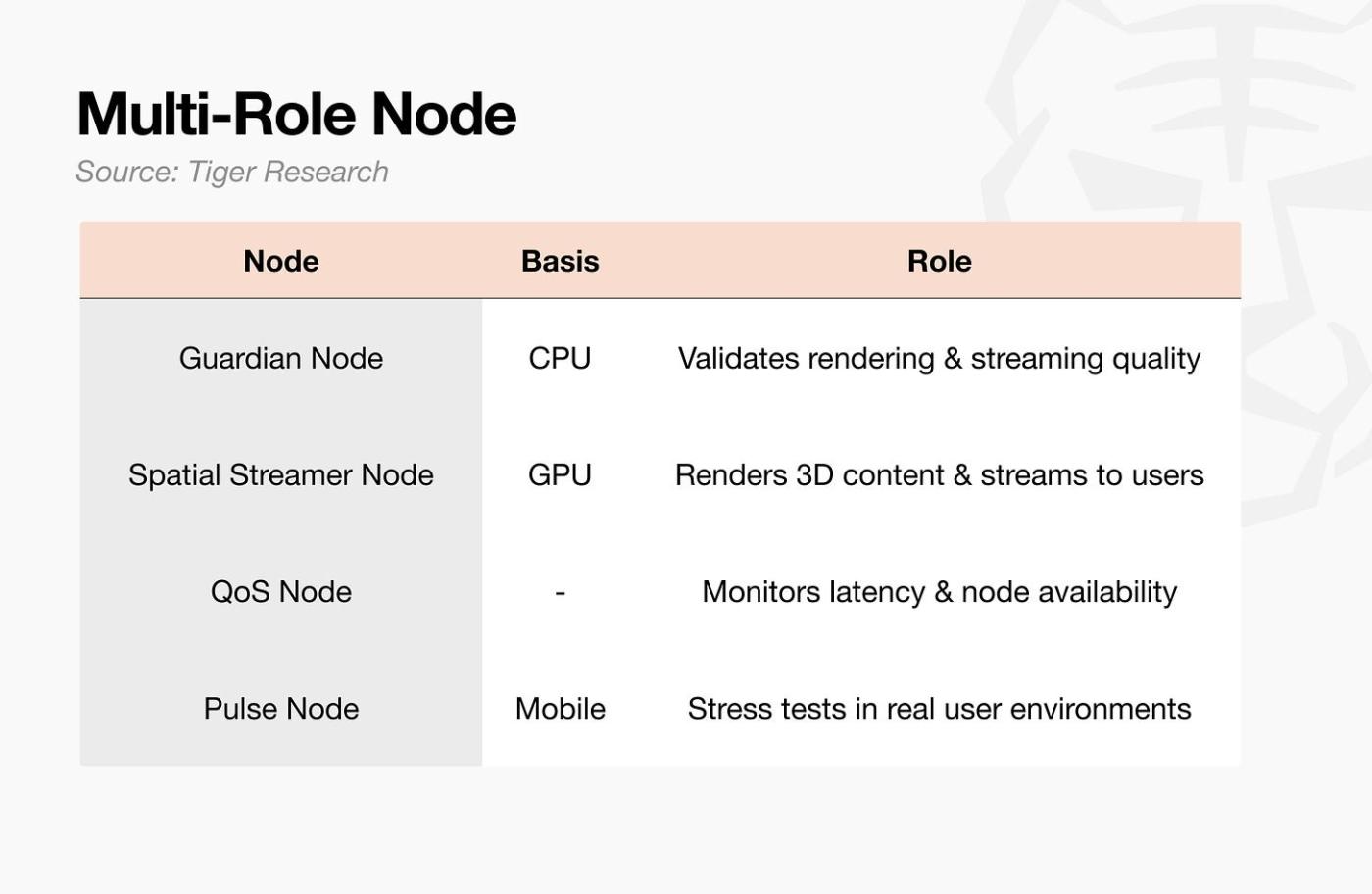

The roles were divided because each function requires different hardware.

In addition, as KDDI, one of Japan's largest telecommunications companies, directly provides hosting environments to node operators, a structure is being established that allows individual participants to operate nodes on more stable infrastructure.

3.2. Node Reward Structure Proving Revenue

To increase the number of nodes, an incentive for participation is required. Most DePIN projects solve this problem by issuing tokens. While this can attract participants initially, the real value of rewards decreases as the token price drops. In this structure, rewards are decoupled from network growth.

Mawari took a different approach. Since its founding in 2017, it has executed over 50 commercial projects with clients such as KDDI, Netflix, BMW, and T-Mobile over eight years without tokens, generating an average annual revenue of $1.5 million. While not large in scale for an infrastructure startup, it is significant in that it verified actual demand and revenue structures before issuing tokens. This revenue structure served as the basis for the node reward design.

There are two types of rewards. Initial Operator Rewards involve distributing a portion of the total token supply to early participants to stabilize the network during its initial stages. Network Activity Rewards involve distributing 20% of the network's net revenue to node operators. As the network is used more, the profits for node operators increase as well.

Since rewards are linked to actual revenue rather than token issuance, the real value of the rewards is maintained for participants as the network grows.
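The Network Activity Rewards described above can be worked through with a small sketch. The 20% share of net revenue comes from the text. How that pool is divided among operators is not stated, so the pro-rata split by completed work units, the function name, and the figures below are assumptions for illustration only.

```python
OPERATOR_SHARE = 0.20  # share of net revenue paid to node operators (from the text)


def network_activity_rewards(net_revenue, work_by_operator):
    """Split the operator pool pro rata by work done (assumed rule)."""
    pool = net_revenue * OPERATOR_SHARE
    total_work = sum(work_by_operator.values())
    if total_work == 0:
        return {operator: 0.0 for operator in work_by_operator}
    return {
        operator: pool * work / total_work
        for operator, work in work_by_operator.items()
    }


# Illustrative figures, not Mawari data.
work = {"operator_a": 3, "operator_b": 1}
rewards = network_activity_rewards(100_000, work)
rewards_after_growth = network_activity_rewards(200_000, work)
print(rewards)
print(rewards_after_growth)
```

With the same work split, doubling net revenue doubles every operator's payout, which is the link between network usage and operator income that the reward design relies on.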

4. Before the market opens, the rounds are already running.

Most of the technical challenges Mawari had to solve have been resolved. The remaining question is how quickly traffic and revenue will grow on this infrastructure.

The most direct variable is the penetration rate of XR devices. In October 2025, Samsung Electronics launched the Galaxy XR, jointly developed with Google and Qualcomm, and Samsung and Google are also working on a smart glasses project in collaboration with Gentle Monster. This signals that major manufacturers are entering the XR market in earnest. However, the speed of consumer adoption is not a variable that Mawari can control.

There are two things Mawari can do. First, secure use cases that can be leveraged immediately once devices are widely adopted; second, establish revenue sources beyond XR at the same time.

vTubeXR: A fan meeting platform where virtual idols and fans meet in a 3D space. It is accessible via smartphone, generating network traffic even without an XR device.

Osaka Expo AI Guide: A 3D AI guide appears in front of visitors wearing AR glasses and provides real-time guidance. It is also an example of verifying network performance at a site with actual users.

Digital Human Aiko: Built by KDDI and Mawari using AWS Wavelength and 5G, it demonstrated that real-time streaming is possible over telecommunications infrastructure.

Projects that have established their infrastructure before the market fully opens can secure the initial demand when it surges. Mawari has already completed those preparations.

5. The Final Puzzle of XR Infrastructure, Mawari

To summarize Mawari in one sentence: It is an infrastructure layer for XR built eight years ahead of the market.

Mawari is actively developing technology and expanding its infrastructure. However, there are limits to how much Mawari can develop on its own. Ultimately, the infrastructure Mawari has built will only play its proper role once the XR device market grows and actual product sales begin in earnest.

There is only one core question for this project: Is Mawari’s infrastructure ready when the XR market opens? If it is, it will capture the demand first. If not, that spot will go to other competitors.

Mawari has bet on building the infrastructure first, before the market opens.

Disclaimer

This report was partially funded by the Mawari Network, but was written based on independent research and reliable sources. However, the conclusions, recommendations, forecasts, estimates, projections, objectives, opinions, and perspectives of this report are based on information available at the time of writing and are subject to change without notice. Accordingly, we assume no liability for any losses arising from the use of this report or its contents, and we do not expressly or implicitly warrant the accuracy, completeness, or suitability of the information. Furthermore, it may not align with, or may contradict, the opinions of other individuals or organizations. This report is prepared for informational purposes only and should not be construed as legal, business, investment, or tax advice. Additionally, references to securities or digital assets are for illustrative purposes only and do not constitute investment advice or an offer of investment advisory services. This material is not intended for investors or potential investors.

Terms of Usage

Tiger Research supports the fair use of reports. This is a principle that allows for broad use of content cited for public interest purposes, provided that its commercial value is not affected. Under fair use rules, reports may be used without prior permission; however, when citing Tiger Research reports, you must 1) clearly identify 'Tiger Research' as the source and 2) include a logo (Black/White) that complies with Tiger Research's brand guidelines. Separate consultation is required if the material is restructured for publication. Use without prior permission may result in legal action.