I'm on my fourth iteration of training the 3B model.

I'm learning a lot. The first model is clearly garbage compared to what I have now, although I doubt the current one is better than even a previous-generation SOTA model for the same domain.

But I'm now curious about the ceiling.

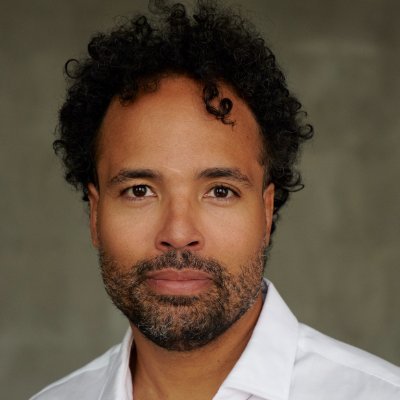

Dennison

@DennisonBertram

03-26

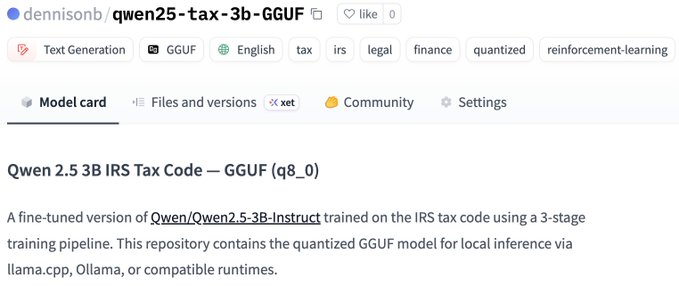

Just trained a small LLM on the entire IRS tax code using reinforcement learning — fully local on my MacBook.

Base model: Qwen 2.5 3B Instruct

Training data: 2,113 IRC sections + 6,149 Treasury Regulations

Pipeline: SFT → DPO → GRPO

Hardware: Apple M4 Max, 128GB RAM
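
For readers unfamiliar with the last stage of that pipeline, here is a minimal sketch of the group-relative advantage at the core of GRPO. Several answers are sampled for the same prompt, each gets a reward, and each reward is normalized against its own group. This is not the code used in the run above. The function name, the reward values, the `eps` constant, and the choice of population standard deviation are all assumptions made for illustration.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize rewards within one group of completions for the same prompt.

    Each advantage is (reward - group mean) / (group std + eps).
    Population std is an assumption here; implementations differ.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for four sampled answers to one tax question.
rewards = [1.0, 0.0, 0.5, 0.5]
advantages = group_relative_advantages(rewards)
```

Answers that score above their group's average get a positive advantage and are reinforced, while those below average get a negative one, so no separate value model is needed to provide a baseline.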

From Twitter

