Anthropic announced that Claude subscriptions will no longer cover third-party tools like OpenClaw, citing the soaring computing costs those tools cause. The move exposes how unsustainable the subscription model for large models has become in the agent era.

Article by: Kai Kai

Article source: Bohu Finance (bohuFN)

“Selling tokens at a low price and opening them up to third parties seems friendly, but it’s a trap.”

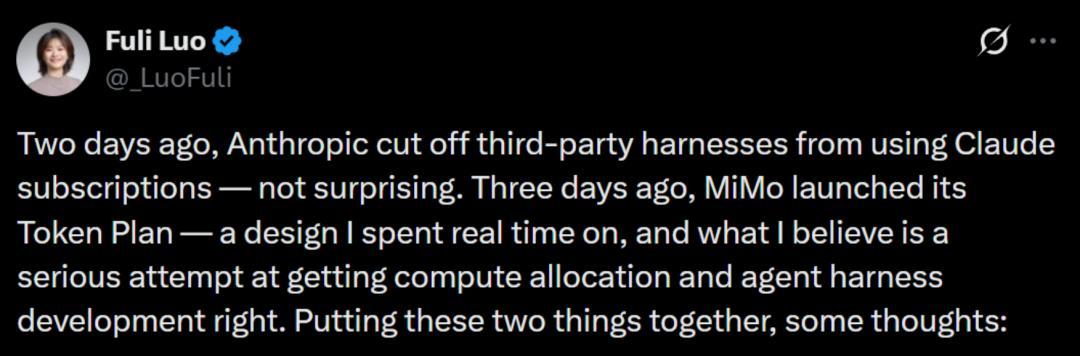

Recently, Luo Fuli, head of Xiaomi Group's MiMo platform, published an article on the X platform, comparing the token price war to a "trap" and reminding large model companies not to blindly participate in the price war.

A few days earlier, Anthropic had abruptly announced that it would cut off third-party tools' access to Claude subscriptions, which prompted Luo Fuli to write about the logic of token pricing.

In the nationwide "lobster farming" token frenzy (the nickname for running OpenClaw agents), Luo Fuli's open letter and Anthropic's "ban" stand out as rare dissenting voices in the industry, pouring cold water on the craze.

But the question is, do the major model manufacturers really not understand this cost? Or is this just an unspoken game in the industry, exchanging a huge amount of tokens for a ticket to the future, betting on the future of AGI?

If that's the case, who can wake someone who's pretending to be asleep?

01 Anthropic couldn't hold on any longer.

A few days ago, Anthropic sent an email to all users announcing that starting at 3 p.m. local time on April 4, Claude Pro and Max subscriptions would no longer cover the use of third-party tools such as OpenClaw.

Because the change was so abrupt, Anthropic gave users a one-time credit equal to one month's subscription fee. However, compared to the good old days when a $200 monthly fee allowed unlimited access to Claude, this credit was clearly just a drop in the bucket.

The news caused an immediate uproar on social media. Users overwhelmingly criticized the move as "burning the bridge after crossing the river," pointing to the long-standing feud between OpenClaw founder Peter Steinberger and Anthropic.

When OpenClaw was first launched, it was named Clawdbot. However, due to the name's striking similarity to Claude, owned by Anthropic, Anthropic sent a lawyer's letter demanding a name change, thus beginning a feud.

More importantly, after OpenClaw validated the market's demand for open-source intelligent agents, Anthropic launched Claude Cowork. In addition to security considerations, this was also seen as an attempt to replace OpenClaw with its own product.

However, none of these explanations fully accounts for the "ban." What truly pushed Anthropic to act was cost.

In its letter to users, Anthropic stated, "Third-party tools have put excessive pressure on the system, and we must prioritize ensuring the user experience of those using our core products."

Foreign media report that Cursor, a prominent unicorn company, estimated last year that a $200 monthly Claude Code subscription could consume up to $2,000 in computing resources, suggesting that Anthropic has been providing massive subsidies. Other analysts have pointed out that the actual computing cost behind an Anthropic subscription may be as high as $5,000.
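To make the size of the implied subsidy concrete, here is a minimal Python sketch that uses only the figures quoted above. It assumes both cost estimates are worst-case values per subscriber per month (the source does not state the period for the $5,000 figure); the name `subsidy_multiple` is purely illustrative.

```python
# Figures quoted in the article. Treating both estimates as monthly,
# worst-case values is an assumption made for this sketch.
SUBSCRIPTION_USD = 200
COST_ESTIMATES_USD = {
    "Cursor estimate": 2_000,
    "other analysts": 5_000,
}


def subsidy_multiple(compute_cost_usd: float, price_usd: float = SUBSCRIPTION_USD) -> float:
    """Dollars of compute consumed per dollar of subscription revenue."""
    return compute_cost_usd / price_usd


for source, cost in COST_ESTIMATES_USD.items():
    shortfall = cost - SUBSCRIPTION_USD
    print(f"{source}: {subsidy_multiple(cost):.0f}x the fee, ${shortfall:,} not covered")
```

On these numbers, the heaviest subscribers would consume 10 to 25 times what they pay, which is the gap a flat fee has to absorb.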

This means that the subscription-based pricing that large-model companies have relied on may not be viable in the agent era.

On the one hand, in agent mode, token usage is expanding at an exponential rate.

When large models were used only for chat, a single round of dialogue consumed about 1,000-3,000 tokens. The platform only needed to estimate an average usage figure that represented most users to make the subscription model work.

However, in an agent-based scenario, a single user may have 10 or even 100 agents running simultaneously, each executing tasks 24/7, and each task triggers multiple model inferences. As the number of interactions increases, token consumption snowballs, and a subscription system that relies on light users to subsidize heavy users loses its balance.

For reference, an average ChatGPT user, even one who chats every day, consumes only about a million tokens per month, while a heavy "lobster farming" user consumes between 30 million and 100 million tokens per day.
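The gap between those two usage profiles is easier to see on a common time base. The following is a rough sketch in Python that converts the daily agent figures to a monthly total; the 30-day month is an assumption, and the constant and function names are illustrative.

```python
# Usage figures quoted in the article.
CHAT_TOKENS_PER_MONTH = 1_000_000
AGENT_TOKENS_PER_DAY = (30_000_000, 100_000_000)  # low and high end for heavy users

DAYS_PER_MONTH = 30  # assumption for this sketch


def monthly_multiple(tokens_per_day: int,
                     days: int = DAYS_PER_MONTH,
                     baseline: int = CHAT_TOKENS_PER_MONTH) -> float:
    """How many average chat users one heavy agent user equals, by monthly tokens."""
    return tokens_per_day * days / baseline


low, high = (monthly_multiple(t) for t in AGENT_TOKENS_PER_DAY)
print(f"One heavy agent user uses as many tokens as {low:,.0f} to {high:,.0f} chat users")
```

Under these assumptions a single heavy agent user consumes as much as roughly 900 to 3,000 ordinary chat users, so averaging across the subscriber base stops working once even a small share of users run agents.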

On the other hand, costs for large-model vendors have not fallen naturally as usage surged; instead, they have continued to rise.

Stanford University’s “2025 AI Index Report” points out that, driven by efficient small models, the inference cost of GPT-3.5 level models has dropped to 1/280 of its original cost in the past two years, and hardware costs have decreased by 30% annually.

However, while inference costs have decreased, training costs remain staggering. More importantly, global computing power remains scarce, and the more users flock to agents, the higher the operating costs for businesses become.

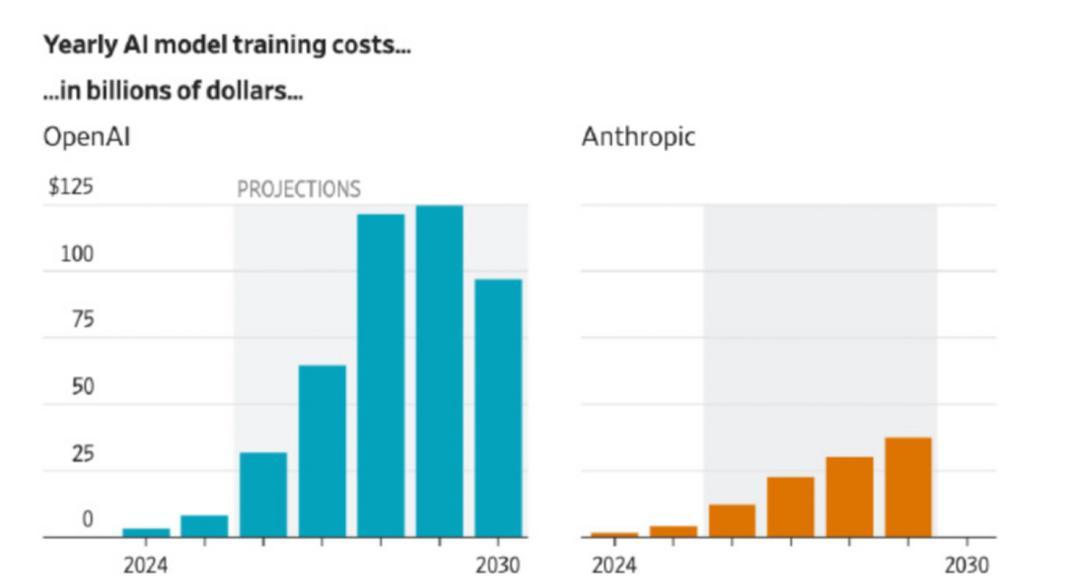

For example, OpenAI told investors that it expects computing power spending to reach $121 billion by 2028, at which point losses could reach $85 billion, potentially surpassing the loss records of any listed company.

Although Anthropic's training cost is not that high, about 40% of OpenAI's, it is still currently burning through cash, and it naturally does not want to be taken advantage of by third-party tools.

(Image: Comparison of training costs between OpenAI and Anthropic)

02 The price of the token is a trap.

If Anthropic can't hold on any longer, what about China's large-model vendors?

Luo Fuli is probably the peer who can best empathize with Anthropic. She posted on social media that Claude Code is likely not profitable and may even be losing money. For its pricing logic to work, she argued, users must stay within Anthropic's own framework; otherwise problems arise.

She used OpenClaw as a case study to point out the potential problems that may arise from integrating third-party frameworks:

“I’ve observed OpenClaw’s context management, and it’s terrible. In a single user query, it triggers multiple rounds of low-value tool calls, each of which is an independent API request with a long context, often exceeding 100,000 tokens.”

Simply put, OpenClaw runs the same task through several times as many model calls as the native Claude Code framework, resulting in actual costs that can be dozens of times higher than the subscription price. In terms of cost structure, even a light OpenClaw user is equivalent to a heavy user.
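The mechanism Luo Fuli describes, where every tool call re-sends a long context as a fresh API request, can be illustrated with a small Python sketch. The round count and per-round growth below are hypothetical, chosen only for illustration; only the 100,000-token context and the 1,000-3,000 token chat range come from the article. The sketch also ignores output tokens and any prompt caching a provider might apply.

```python
def total_input_tokens(rounds: int, base_context: int, growth_per_round: int = 0) -> int:
    """Input tokens billed when every round re-sends the whole context.

    Round i (0-based) sends base_context + i * growth_per_round tokens.
    """
    return sum(base_context + i * growth_per_round for i in range(rounds))


# Hypothetical agent query: 8 tool-call rounds over a 100,000-token context,
# with each tool result adding 2,000 tokens to the next request.
agent_query = total_input_tokens(rounds=8, base_context=100_000, growth_per_round=2_000)

# A single chat turn at the upper end of the 1,000-3,000 token range.
chat_turn = total_input_tokens(rounds=1, base_context=3_000)

print(f"agent query: {agent_query:,} input tokens")
print(f"chat turn:   {chat_turn:,} input tokens")
print(f"ratio:       about {agent_query / chat_turn:.0f}x")
```

With these illustrative inputs, one agent query costs about 285 times the input tokens of one chat turn, which is why a user who sends only a few queries a day can still land in heavy-user territory.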

Therefore, selling tokens at low prices and opening them to third parties, while seemingly user-friendly, is actually a trap. In order to control costs, companies can only reduce computing power or use cheaper, low-intelligence models; users repeatedly encounter problems with these low-intelligence models, resulting in a poor user experience.

However, Luo Fuli's remarks represent a minority voice within China's large-model industry. At least for now, most big tech firms and large-model companies still regard token throughput as an important indicator of their strength.

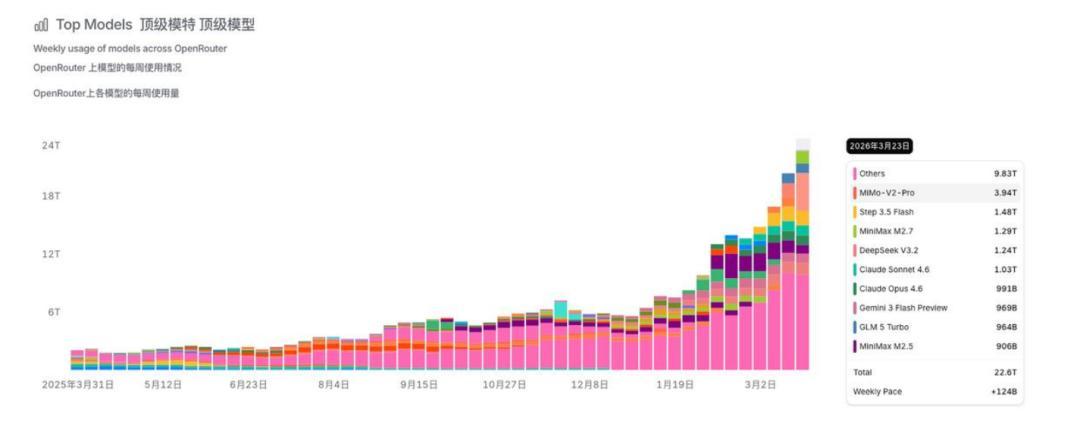

Data from OpenRouter, a global large-model aggregation and routing platform, shows that the weekly call volume of Chinese large models has exceeded that of overseas models for a full month running. The models with the highest call volume all come from Chinese vendors, such as Xiaomi, StepFun (Jieyue Xingchen), and MiniMax.

Global tech giants are also fueling this trend, for example by encouraging employees to use AI tools more often. Meta has even created a leaderboard for token consumption, which has become an implicit KPI for tech giants.

Tokens are therefore expensive not only because they cost a lot to produce, but also because the industry is locked in a war of attrition with no end in sight. When everyone is desperately consuming more tokens, computing power will never keep up with the demand being generated.

Moreover, whether or not token consumption is a false prosperity, large-model companies find the lure of real money hard to resist: in just three months, Anthropic's annualized revenue soared from $9 billion to $30 billion.

The price of tokens may be a "trap," but with major global manufacturers "chasing each other," no one is willing to be the first to apply the "brakes."

For leading tech companies like Alibaba, ByteDance, and Tencent, the competition for AI super gateways has been ongoing for a long time, but they still can't get rid of the internet strategy of "burning money to get traffic." Sending red envelopes and increasing traffic investment can activate DAU, but once the "money power" is gone, users will quickly leave.

"Lobster" has become a new opportunity. After users complete the deployment, it is equivalent to embedding their "intelligent assistant" into a cloud platform. Not only will it generate a continuous consumption of tokens, but personal data will also be accumulated in the ecosystem. The migration cost will become increasingly high, and large companies will naturally not let go of this new "ecosystem entry point".

For second-tier vendors like Kimi and Zhipu, the emergence of "Lobster" has driven demand for computing power, enabling their models to be invoked and providing a compelling story for API growth. This is enough to motivate them to sell their APIs more aggressively.

Logically speaking, Luo Fuli's assessment of tokens is correct; "price involution" cannot continue indefinitely. However, large companies that have built successful growth narratives on "lobsters" may want to "pretend to be asleep" a little longer.

03 Efficiency is more important than price

No one can wake someone who is pretending to be asleep, but reality might. Ever-increasing token consumption has not brought a corresponding increase in profits, and that is a problem large-model companies cannot avoid.

Take Zhipu, which benchmarks itself fully against Anthropic. It delivered a "high growth, high loss" report card for 2025: total revenue of RMB 724 million, up 131.9% year-on-year, and a loss of RMB 4.718 billion, up 59.5% year-on-year.

Zhang Peng, founder of Zhipu, once stated that Zhipu aims to become a comparable alternative to Anthropic, even jokingly saying that if Anthropic sells for $200, they would sell for 200 RMB. In March of this year, Zhipu released AutoClaw, a one-click installer, with a personal version priced at 39 RMB/month for 35 million tokens and 99 RMB/month for 100 million tokens, making the entry barrier indeed quite low.
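To see what those two AutoClaw tiers imply per unit, here is a small Python sketch that converts each plan to a price per million tokens. It uses only the prices and quotas quoted above; the function name is illustrative.

```python
# AutoClaw personal plans as quoted in the article: (monthly price in RMB, included tokens).
PLANS = {
    "39 RMB/month": (39, 35_000_000),
    "99 RMB/month": (99, 100_000_000),
}


def rmb_per_million(price_rmb: float, tokens: int) -> float:
    """Effective price per million tokens if the whole quota is used."""
    return price_rmb / (tokens / 1_000_000)


for name, (price, tokens) in PLANS.items():
    print(f"{name}: {rmb_per_million(price, tokens):.2f} RMB per million tokens")
```

If the full quota is used, both tiers work out to roughly 1 RMB per million tokens (about 1.11 and 0.99 respectively), with the larger plan only slightly cheaper per token.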

However, the financial burden behind this is also very heavy. In 2025, Zhipu's R&D expenditure was RMB 3.18 billion, an increase of 44.9% year-on-year. Without its own infrastructure, Zhipu also needs to pay high procurement fees to third-party computing power suppliers, which soared from RMB 14.63 million in 2022 to RMB 1.145 billion in the first half of 2025.

R&D investment and computing power are two rigid, unavoidable expenditures. Since the start of 2026, cloud vendors in China and abroad have successively adjusted the prices of AI computing power, storage, and related products. Even so, Chinese models remain cheaper than overseas ones.

According to a research report released by CMB Securities in December 2025, the average price of domestic large-scale model APIs was about RMB 3.88 per million tokens, while that of overseas models was about RMB 20.46 per million tokens, which is more than five times the price of domestic model APIs.
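As a rough illustration of what that price gap means for an agent-heavy workload, the Python sketch below applies both average API prices to the low end of the heavy-user figure cited earlier (30 million tokens per day). It treats the averages as a single blended rate, which is a simplification: real APIs usually price input and output tokens differently.

```python
# Average API prices from the CMB Securities report, in RMB per million tokens.
DOMESTIC_RMB_PER_MILLION = 3.88
OVERSEAS_RMB_PER_MILLION = 20.46

# Low end of the heavy agent user's daily consumption cited in the article.
HEAVY_USER_TOKENS_PER_DAY = 30_000_000


def daily_cost_rmb(tokens: int, price_per_million: float) -> float:
    """API bill for one day at a single blended per-million-token rate."""
    return tokens / 1_000_000 * price_per_million


domestic = daily_cost_rmb(HEAVY_USER_TOKENS_PER_DAY, DOMESTIC_RMB_PER_MILLION)
overseas = daily_cost_rmb(HEAVY_USER_TOKENS_PER_DAY, OVERSEAS_RMB_PER_MILLION)

print(f"domestic: {domestic:.1f} RMB/day, overseas: {overseas:.1f} RMB/day")
print(f"price ratio: {OVERSEAS_RMB_PER_MILLION / DOMESTIC_RMB_PER_MILLION:.2f}x")
```

At list prices, the same day of heavy agent use would cost on the order of a hundred RMB on an average domestic API and several hundred RMB on an average overseas one, which helps explain why agent traffic gravitates toward the cheaper models.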

Price advantages have led to large-scale demand, and against this backdrop, large domestic model manufacturers are unlikely to escape the price war anytime soon. However, with token consumption exceeding supply, gradually tightening free quotas and subsidies is an inevitable trend.

Luo Fuli argued that the way out for the large-model industry is not cheaper tokens, but "agent frameworks with higher token efficiency" combined with "more powerful and efficient models." The agent era does not belong to those who burn the most computing power, but to those who use it most intelligently.

This will drive large model manufacturers to develop in two directions:

On the one hand, the competition is shifting from "computing power scale" to "engineering efficiency". Companies that simply sell APIs will face an increasingly limited ceiling. They need to deeply integrate the model layer with smart hardware, application products and other technologies to inject more possibilities into their business models.

On the other hand, it pushes token fees toward tiered pricing. Today's mainstream billing models are subscription, pay-as-you-go, and token plan packages, in which users pay extra only after exceeding the included quota.

In the long run, in addition to simply "tiering by quantity" for token pricing, a more refined payment system can be launched based on dimensions such as reasoning ability and number of tasks. This can not only alleviate the pressure on peak computing power for the platform, but also further increase revenue.

For example, DeepSeek quietly launched two new entry points, "Quick Mode" and "Expert Mode," which is considered a new exploration of the revenue-sharing model; Tan Dai of Volcano Engine stated that in the future, it may incubate intelligent agents in vertical fields and charge based on the number of questions answered.
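One way to picture pricing that is tiered by reasoning ability as well as by quantity is a plan whose quota is counted in weighted credits. The Python sketch below is purely hypothetical: the tier names echo the "Quick" and "Expert" modes mentioned above, but the weights, the overage rate, and the function itself are invented for illustration and do not describe any vendor's actual billing. The default fee and quota borrow the 39 RMB / 35 million token figures quoted earlier only as familiar round numbers.

```python
# Hypothetical weights: one "expert" token counts as three credits, one "quick" token as one.
TIER_WEIGHT = {"quick": 1, "expert": 3}


def monthly_bill(usage: dict[str, int],
                 fee_rmb: float = 39.0,
                 included_credits: int = 35_000_000,
                 overage_rmb_per_million: float = 2.0) -> float:
    """Flat fee plus overage, with usage converted to credits by reasoning tier.

    usage maps a tier name to the number of tokens consumed in that tier.
    """
    credits = sum(TIER_WEIGHT[tier] * tokens for tier, tokens in usage.items())
    overage_credits = max(0, credits - included_credits)
    return fee_rmb + overage_credits / 1_000_000 * overage_rmb_per_million


light = monthly_bill({"quick": 30_000_000})
mixed = monthly_bill({"quick": 20_000_000, "expert": 10_000_000})
print(f"quick-only user: {light:.2f} RMB, mixed user: {mixed:.2f} RMB")
```

Both users consume 30 million tokens, but the one who leans on the heavier reasoning tier exceeds the quota and pays more, which is the kind of signal that could steer load away from the most expensive capacity.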

The token frenzy may continue for some time, but for the large-model industry as a whole, token costs have become a factor that no enterprise or user can ignore.

Ultimately, the large-model business is never purely a technology business, but a game of efficiency and value. Companies that want to build for the long term need to learn to weigh costs against benefits; only by staying grounded can they truly aspire to great heights.