The bottleneck in AI adoption lies in 'data,' not 'models.' Analysis suggests that while companies have confirmed the potential of generative and agentic AI, the biggest reason they fail to achieve results in actual work environments is the lack of a reliable data foundation.

Chris Powell, Chief Marketing Officer (CMO) of Qlik Technologies, stated at the recent 'Qlik Connect 2026' event that while many companies have moved beyond the AI experimentation phase, they are still struggling to scale into full operational environments. He emphasized that the core of the problem lies in 'data that AI can trust and utilize,' rather than the performance of the AI models themselves.

Powell explained, “The issue now is not whether AI works, but whether data works properly for AI,” adding, “Anyone can see the potential of AI in a demo, but reliably implementing the results in the real world is a completely different challenge.”

This statement aligns with the findings of a survey by Qlik and Enterprise Technology Research. According to the survey, data quality, data accessibility, and data governance were identified as the biggest obstacles to successfully implementing agentic AI in actual operations. This implies that while companies have a strong willingness to invest in AI, the "wall of reality" preventing that investment from producing the expected returns is data infrastructure.

'Trust score' and data lineage are key

Powell said that three pillars are necessary for companies to bring AI to the production stage. First, data must be trustworthy; second, the unique context contained within that data must be understood; and third, a structure capable of responding flexibly to technological changes must be in place.

In particular, he pointed out that factors such as the data's 'source,' 'change history,' 'access rights,' and 'storage location' are important. It is not enough to check whether data exists; companies must be able to track where it came from, who handled it, and how it has changed over time. To this end, Qlik proposes a 'trust score' system for AI. This is a mechanism that evaluates whether specific data is sufficiently trustworthy to be fed into a large language model (LLM).

This approach is particularly important in industries such as finance, logistics, and healthcare, where even small errors can lead to significant costs. If data reliability is not guaranteed, the likelihood of AI making incorrect decisions rises accordingly.
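
As a rough illustration only, a trust score of this kind could be sketched as follows. The article does not describe how Qlik actually computes its score, so the field names, equal weights, and threshold below are all assumptions chosen for the example; the only grounded part is the four factors Powell named (source, change history, access rights, storage location).

```python
from dataclasses import dataclass


@dataclass
class DatasetMetadata:
    """Hypothetical lineage facts about a dataset (names are illustrative)."""
    source_known: bool              # is the origin of the data documented?
    change_history_complete: bool   # can every modification be traced?
    access_controlled: bool         # are access rights defined and enforced?
    storage_location_known: bool    # is it clear where the data is stored?


# Assumed equal weights; a real system would likely tune or learn these.
WEIGHTS = {
    "source_known": 0.25,
    "change_history_complete": 0.25,
    "access_controlled": 0.25,
    "storage_location_known": 0.25,
}


def trust_score(meta: DatasetMetadata) -> float:
    """Sum the weights of every lineage check the dataset passes (0.0 to 1.0)."""
    return sum(weight for name, weight in WEIGHTS.items() if getattr(meta, name))


def is_llm_ready(meta: DatasetMetadata, threshold: float = 0.75) -> bool:
    """Gate: only data at or above the (assumed) threshold is passed to an LLM."""
    return trust_score(meta) >= threshold
```

In this sketch, a dataset with a documented source, enforced access rights, and a known storage location but an incomplete change history would score 0.75 and just pass the gate, while one missing two of the four checks would be held back.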

Human judgment and cost control determine scalability

Powell named "human expertise" and "cost structure" as further conditions for AI expansion. Citing the example of United Parcel Service (UPS), he explained that companies that clearly define how far agents can make autonomous decisions, and when those decisions should be handed over to humans, are getting closer to large-scale operations.

This can be interpreted to mean that agentic AI demonstrates greater effectiveness in a form that integrates human field knowledge and control systems, rather than a structure that automatically processes all decisions. Ultimately, the adoption of AI is not merely a competition of automation technologies, but also a matter of how to reflect human expertise within the system.
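
The handoff boundary described above could be expressed, in a very simplified form, as an explicit routing rule. This is a hypothetical sketch and not a description of how UPS or Qlik implement it; the `confidence` and `cost_impact` fields and both thresholds are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AGENT = "agent"   # the agent may act autonomously
    HUMAN = "human"   # the decision is escalated to a person


@dataclass
class Decision:
    """A pending agent decision (fields are illustrative assumptions)."""
    confidence: float   # agent's estimated confidence, 0.0 to 1.0
    cost_impact: float  # estimated monetary impact if the decision is wrong


def route(decision: Decision,
          min_confidence: float = 0.9,
          max_autonomous_impact: float = 1000.0) -> Route:
    """Let the agent act only when it is confident AND the stakes are low."""
    if (decision.confidence >= min_confidence
            and decision.cost_impact <= max_autonomous_impact):
        return Route.AGENT
    return Route.HUMAN
```

Writing the boundary down as a rule like this makes the extent of agent autonomy something that can be reviewed and adjusted, rather than an implicit property of the model.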

Cost is also a variable that cannot be deferred. Powell warned that it is difficult to create a scalable structure unless cost control mechanisms are built in from the early stages of designing AI systems. He explained that even if performance appears good on the surface, sustainability declines once operating costs balloon, and the project may end up back at the stage where the finance department re-examines its return on investment (ROI).

In summary, the analysis suggests that a company's AI success depends more on a 'reliable data foundation,' 'operational design incorporating human judgment,' and a 'sustainable cost structure' than on securing superior models. As AI competition intensifies, market attention is shifting from technical demonstrations to the ability to create repeatable and profitable structures in real-world scenarios.

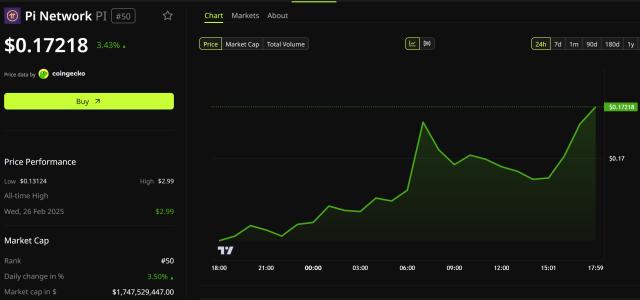

<Copyright ⓒ TokenPost, unauthorized reproduction and redistribution prohibited>