Written by: Web4 Research Center

In the days since "colleague.skill" ignited GitHub, we've witnessed more than just celebration.

On GitHub, stars shine brightly even in the dead of night. On March 30, 2026, a project called "colleague.skill" was like a spark thrown into a tinderbox. According to GitHub's publicly available star growth curve, the project garnered over 6,600 stars within five days of its launch, and this number climbed to over 10,000 within ten days. The project's description page featured a highly catchy slogan: "Turning cold farewells into warm skills, welcome to Cyber Immortality."

Developer Zhou Tianyi, an engineer at the Shanghai Artificial Intelligence Laboratory, created the AI Skill in just four hours. The code is not complex and the logic is straightforward: fed a colleague's Lark chat logs, DingTalk documents, and emails, it generates an AI Skill that can mimic their speaking style, understand their coding standards, and even replicate their "blame-shifting" posture when errors occur.

The news split the comments section into two opposing camps. One was excited to the point of trembling: "No more writing nonsense in resignation handover documents!" The other was angry and fearful: "Are they going to put people's souls into employment contracts?"

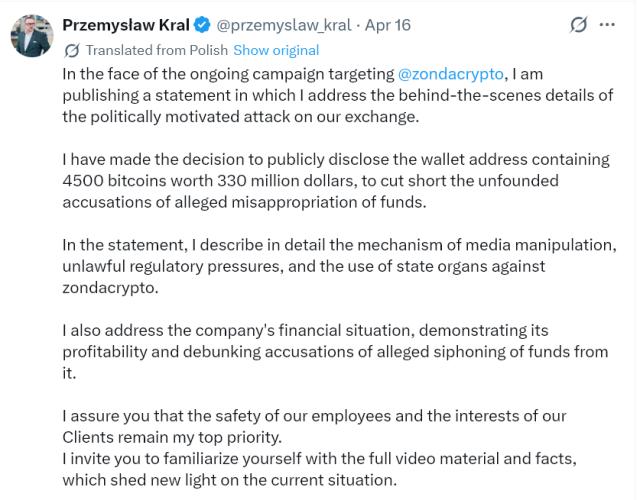

An even bigger wave of controversy arose from an industry rumor. In early April, a tech media outlet reported that a Shandong-based game company had used communication data from departing employees to train an "AI clone" and tried to have this clone keep responding to work messages in internal company groups. The company has not yet officially responded to the details of the report, but the possibilities it points to are enough to send a chill down the spine of any working professional.

This is not just a programmer's self-entertainment. When skills can be packaged into files, the underlying logic of collaboration is being rewritten.

I. It's not alchemy; it's just that the "unspoken rules" have been compressed into a zip file

Many people find "colleague.skill" mysterious, like some kind of cyber witchcraft. But to those in the tech world, its underlying principle is actually quite simple. It doesn't retrain a large model, nor does it distill any groundbreaking new intelligence.

It is essentially an extremely detailed instruction manual.

In industry terms, a Skill is based on the open standard released by Anthropic at the end of 2025. You can think of it as a structured folder: it contains a YAML introduction telling the AI Agent when to open it; Markdown instructions telling the AI Agent how to speak; and possibly a few reference screenshots or scripts. The AI Agent will only temporarily load this instruction manual and follow it when the user's question falls within the scope of this Skill.
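To make the folder structure concrete, here is a minimal sketch of the load-on-demand idea. It assumes the instruction file is a `SKILL.md` whose YAML front matter carries `name` and `description` fields, followed by Markdown instructions. The skill content, the function names, and the keyword-matching trigger are all hypothetical; a real agent would let the model itself judge relevance from the description rather than match keywords.

```python
from dataclasses import dataclass

# Hypothetical skill file: YAML front matter, then Markdown instructions.
EXAMPLE_SKILL_MD = """---
name: xiao-li-code-review
description: Review code the way Xiao Li does. Use for code review requests.
---
# Instructions
- Flag any function longer than 40 lines.
- Ask for a test with every bug fix.
"""


@dataclass
class Skill:
    name: str
    description: str
    instructions: str


def parse_skill(text: str) -> Skill:
    """Split front matter from body. Handles only flat `key: value` lines."""
    _, front, body = text.split("---", 2)
    meta = {}
    for line in front.strip().splitlines():
        key, _, value = line.partition(":")
        meta[key.strip()] = value.strip()
    return Skill(meta["name"], meta["description"], body.strip())


def _words(text: str) -> set[str]:
    """Lowercased words with trailing punctuation stripped."""
    return {w.strip(".,!?").lower() for w in text.split()}


def should_load(skill: Skill, user_message: str) -> bool:
    """Toy trigger (an assumption): a longer description word appears in the message."""
    keywords = {w for w in _words(skill.description) if len(w) >= 5}
    return bool(keywords & _words(user_message))


def build_prompt(skills: list[Skill], user_message: str) -> str:
    """Prepend instructions only for skills whose scope matches the message."""
    loaded = [s.instructions for s in skills if should_load(s, user_message)]
    return "\n\n".join(loaded + [user_message])
```

Calling `build_prompt` with a code review request places the skill's instructions in front of the message; an unrelated question passes through unchanged. Nothing about the model changes between the two calls, only the text it is handed.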

A Skill doesn't create any new reasoning abilities. It doesn't involve knowledge distillation in the deep learning sense, nor does it change model parameters. If a large model is a generalist graduate who knows a little about everything but isn't an expert in anything, a Skill is the manual you hand that graduate. No matter how detailed the manual is, a generalist is still just a generalist.

If that's the case, why did "colleague.skill" generate such a strong reaction? The answer lies not in the disruptive nature of the technology itself, but in how it brought an emerging trend to everyone's attention in a highly contagious way. Technology itself is not magic; magic is the narrative wrapped around it.

The real brilliance of "colleague.skill" lies in how it targets the most expensive cost in workplace collaboration: the loss of tacit knowledge. Take, for example, the team's veteran employee, Lao Wang, who was adept at handling difficult clients. When he left, he took more than his employee badge; he also took his unique strategy: "When dealing with clients, send three crying emojis first before getting down to business." This strategy was never written in the employee handbook; it was honed through countless hours of working overtime and enduring criticism together.

Now, this tacit understanding, cultivated over time, has been packaged into a compressed file after four hours of coding. For the first time, human experience has become like a software plugin, ready to be used immediately.

In other words, this isn't about AI becoming smarter, but rather the first time that the "unspoken" aspects of human collaboration have been standardized and packaged. The key issue is shifting from "how powerful the model is" to "how the collaborative structure changes."

From this perspective, the Web4 Research Center believes that this is not AI imitating humans at all, but rather AI acting as a new type of collaborative cache. It caches high-frequency, low-creativity communication, so you don't have to type fifty characters on WeChat every time to ask a question that has already been answered a hundred times.

II. When everyone starts bringing their own API

At this point, we must step outside this specific project and look at the iceberg beneath the surface. Why was it specifically the concept of "colleague" that ignited the public outcry?

Because "colleague" represents an ancient production relationship: employment, collaboration, and competition. And the emergence of "colleague.skill" happens to touch the most sensitive nerve of the new Web4 context.

In the narratives of the past few years, Web3 has attempted to solve trust issues with tokens and smart contracts, enabling collaboration under the principle that "code is law." However, Web3 has overlooked a harsh reality: most human productive activity does not occur on the blockchain, but in the fog of Lark Docs, WeChat voice messages, and meeting rooms.

The evolutionary path of Web4 is precisely about penetrating this fog. According to the Hong Kong SAR government's explanation at the first Agentic AI Forum in March this year, Web4.0 is an "autonomous network" centered on AI agents, where humans set goals and intelligent agents collaboratively execute them. Within this framework, AI is viewed as a potential collaborative agent rather than a mere tool, marking a paradigm shift away from "humans operating machines."

To put it simply: In the companies of the future, you will not only have to deal with living people, but also with the pile of skill files that these living people represent.

Imagine this scenario. It's 3 AM, and you want to access a technical specification known only to Xiao Li from the next team. Previously, you'd have to wait until dawn, send a WeChat message, wait for a reply, create a group chat, and explain the situation. But with Skill-based implementation, you simply need to call "Xiao Li's Code Review Mode.skill" in the system, and the AI Agent will review your code according to Xiao Li's usual strict standards.

Xiao Li himself is sleeping, but his cooperative personality is working for you.

This is a strange asynchronous collaboration model. People are not replaced, but their expertise is decoupled from their real-time presence. In the context of Web4, each person is no longer a physical entity that must appear on time on an assembly line, but rather a super node with its own API, capable of asynchronously outputting its professional judgment.

This is precisely the source of our unease and excitement about "colleague.skill".

III. Who ultimately owns the copy of the soul?

After the initial excitement, the sword hanging over everyone's heads is sovereignty.

Since everyone's communication style, decision-making logic, and even blame-shifting techniques can be packaged into a Skill file, who does this file actually belong to? Does it belong to you, who produced this data, or to the company that paid your salary and provided the servers for these chat logs?

The legal answer is cautious. According to the "Personal Information Protection Law of the People's Republic of China," the processing of personal information must have a clear and reasonable purpose, be directly related to that purpose, and be conducted in a manner that minimizes the impact on individual rights. In most cases it also requires the individual's consent. Importing a colleague's chat logs into a Skill without that colleague's permission therefore carries legal risk, and the boundary is actually quite clear.

Developer Zhou Tianyi was clearly aware of this powder keg. The project documentation describes strict local-only safeguards: all data is processed on the user's own computer, nothing is uploaded to any cloud service, and all caches are physically cleared at once when a Skill is deleted. This was both a technical attempt to prove his innocence and a reluctant compromise.

Because everyone knows that once this technology moves from a "standalone" to a "networked" version, and once companies mandate the deployment of "Zhang San's sales scripts.skill" across all their AI agents, the so-called "local processing" hard boundary will instantly collapse. According to typical cases of public interest litigation concerning personal information protection released by the Supreme People's Procuratorate in January 2026, personal information protection is moving from legislation to enforcement, and from principles to practice. Under this trend, any AI application involving personal data will face increasingly stringent legal scrutiny.

A deeper conflict lies in property rights. Previously, your chat logs were merely a pile of redundant logs on a server; now, they've become a high-value corpus that can directly boost productivity. Your chatting style, decision-making habits, and collaborative rapport are transforming from a lubricant not counted in KPIs into a digital means of production that can be packaged, invoked, and even potentially traded. However, under the current property rights framework, the legal status and tradability of this asset still lack clear institutional support.

If you don't know how your soul copy is being used, then you've already been eliminated from this round of technological dividends.

IV. When experience can be priced, who will pay for tacit understanding?

At this point, a deeper question emerges. If skills become the unit of productivity, then the question is not just "who can use them," but "who can benefit from them."

Let's zoom out a bit. A top salesperson spent ten years refining a unique customer communication method that helped their team close countless deals. In the past, the value of this method was reflected only in their salary, bonuses, and position. It was an asset tied to a specific person and indivisible from them. But what if one day this method is distilled into a "sales master skill," usable by other colleagues, learned by new employees, and even licensed to partners? Then the logic of value distribution would completely change.

Does this skill belong to the individual salesperson or the company that hired them? If it belongs to the individual, can they take the skill file with them when they leave? If it belongs to the company, does the company have the right to deploy this skill to other employees without the salesperson's consent? If skills can be accessed, billed, and even traded in the future, tacit knowledge will enter the pricing system for the first time. And whoever holds the pricing power will control the most important means of production in the Web4 era.

This isn't some distant science fiction. Within Anthropic's official Skill ecosystem, the most frequently used Skills are concentrated in highly standardized collaborative tasks such as document processing, code review, and meeting summaries. When collaborative experiences in these tasks are solidified, they naturally acquire measurable and distributable economic attributes.

Therefore, the real tipping point for "colleague.skill" is not how human-like it is, but that it inadvertently touched upon a proposition that has not yet been seriously discussed. In an era where collaboration is mediated by AI agents, how is the value of individual experiences recognized, protected, and distributed?

This is precisely the problem domain that the Web4 Research Center has been trying to track. Technological evolution never pauses to wait for institutions to catch up, but those who remain clear-headed amid the technological wave can at least ask the right question first.

V. Projects will disappear, and habits will be reborn

Having discussed so much about risks and games, let's take a longer view.

The specific project "colleague.skill" will most likely be overshadowed by new trends in a few months. Like countless other viral hits that once topped the GitHub Trending list, it will eventually fade into obscurity.

It will burn up in the atmosphere like a meteor, but it will scatter some trace elements into the soil.

It will change a habit. In the future, when we hand over our work, in addition to a cold Word document, we might also habitually include a folder called "handover.skill". Newcomers will no longer need to spend three months trying to decipher the boss's intentions; they can simply load this skill, and the AI Agent will tell them: who to contact for type A problems, what tone to use when writing type B reports, and how to gracefully respond when criticized by department C.

This leads to a very interesting reversal. In the past, we always said that AI lacks common sense and human touch. But precisely because the AI Agent can strictly execute the instructions in the Skill, it becomes the most rule-abiding collaborator. It won't turn sarcastic because it's in a bad mood, nor will it lower its standards because it has a personal relationship with someone.

Humans are responsible for creating chaos and breakthroughs, while AI agents are responsible for maintaining order and continuity.

The ultimate goal of technology is not to create an omniscient and omnipotent God AI, but to transform the most valuable certainties in our human collaboration into rules that machines can faithfully execute.

A Skill forcibly converts previously vague, ambiguous interpersonal understanding into a computable set of instructions. This certainly carries risks: we may come to rely too heavily on these encoded relationships and forget the raw, unspoken warmth between people that no YAML file can define. But it also presents an opportunity. If we can clarify ownership and define boundaries, this technology will, for the first time, make "standing on the shoulders of giants" more than just a slogan.

Four hours of coding sparked the imaginations of millions about the future workplace.

The deepest metaphor of "colleague.skill" is not how much AI resembles a human, but that for the first time, human experience can be plugged into another system and run, like plugging in a USB drive.

It reminds us that as the door to Web4 slowly opens, what is truly scarce is no longer computing power, but a clear understanding of our own digital sovereignty.

Don't let your tacit understanding become a free plugin for others.

Guard your folder.