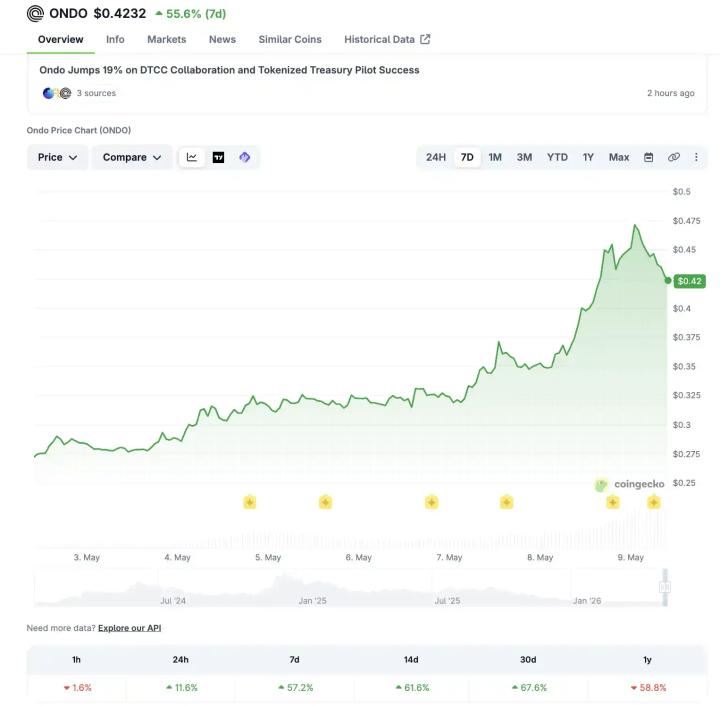

Author: @giantcutie666

In 2024, Leopold Aschenbrenner, a genius born in the 2000s, left OpenAI to start an investment fund.

He also wrote down his investment strategy:

Looking back now, this 165-page paper, "Situational Awareness: The Decade Ahead," has become legendary.

Those who understood this last year have definitely achieved financial freedom this year!

I've included the original 165-page English version here; below is my attempt at a simplified version:

The paper's main argument is that within the next decade, humans will create AGI (capable of performing all the jobs of ordinary people), followed by superintelligence.

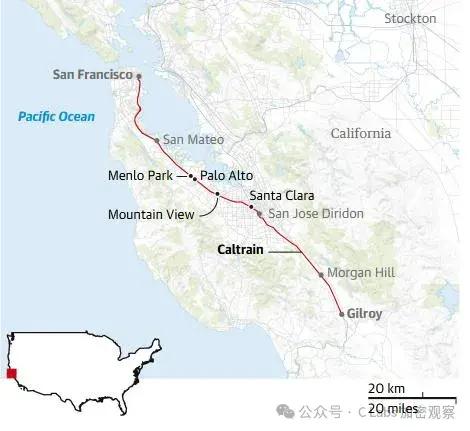

Only a few hundred people understand this right now, most of them crammed into a few streets in San Francisco. Others, including Wall Street, the media, and Washington, haven't caught on yet (although some on Wall Street have started to come around this year).

Part One: Why will AGI exist by 2027?

The method is crude but effective: rather than guessing at dates directly, it extrapolates the growth of computing power.

Over the past four years, from GPT-2 to GPT-4, the model's "effective computing power" has increased by about 100,000 times, or roughly tenfold every ten months!
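As a quick sanity check on that growth rate, here is a minimal sketch that converts "100,000 times in four years" into orders of magnitude (OOMs) per year and the time needed for one tenfold step. The function name `growth_summary` is made up for this illustration and does not come from the paper.

```python
import math

def growth_summary(total_factor: float, years: float) -> dict:
    """Convert a total growth factor over a period into per-year rates."""
    ooms = math.log10(total_factor)        # orders of magnitude gained in total
    ooms_per_year = ooms / years           # average OOMs gained per year
    months_per_10x = 12 / ooms_per_year    # months needed for one tenfold step
    return {
        "ooms": ooms,
        "ooms_per_year": ooms_per_year,
        "months_per_10x": months_per_10x,
    }

# Figures from the text: about 100,000x over the four years from GPT-2 to GPT-4.
summary = growth_summary(100_000, 4)
print(summary)
```

Under these figures the gain is 5 OOMs in total, about 1.25 OOMs per year, which works out to one tenfold step a little under every ten months.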

GPT-2 is like a kindergarten child struggling to write semi-coherent sentences, while GPT-4 is like a bright high school student passing the bar exam and solving doctoral-level scientific problems.

This 100,000-fold increase comes from three places:

First, there's the massive investment in hardware. The computing power used for training increases more than threefold every year. Moore's Law delivers only about 1.3 times per year, so AI compute is growing roughly five times faster in orders-of-magnitude terms, because people are pouring money into AI chips and designing them specifically for this purpose.
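The "five times faster" comparison only makes sense on a logarithmic scale, since 3x is obviously not five times 1.3x. A small sketch, using only the two rounded growth rates quoted above:

```python
import math

training_growth_per_year = 3.0   # "more than threefold every year" (from the text)
moore_growth_per_year = 1.3      # Moore's Law figure quoted in the text

# Compare the two growth rates in orders of magnitude (OOMs) per year.
training_ooms = math.log10(training_growth_per_year)
moore_ooms = math.log10(moore_growth_per_year)
ratio = training_ooms / moore_ooms

print(f"training: {training_ooms:.2f} OOM/year, Moore: {moore_ooms:.2f} OOM/year")
print(f"ratio: {ratio:.1f}x")
```

With these rounded inputs the ratio comes out a little above four, and it rises as the training growth rate goes above threefold, so it lands in the same ballpark as the "five times" claim.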

Second, algorithms are becoming more efficient. Reaching the same level of mathematical ability costs about 1,000 times less at inference time than it did two years ago, thanks to the efforts of algorithm engineers.

Third, and most interesting, is "unhobbling": unlocking capabilities the model already has.

The model itself has many capabilities, but they are suppressed by default. For example, the base model behind ChatGPT was actually very capable, but its raw output was close to gibberish in practice; RLHF unlocked it.

For example, allowing the model to "think for a while before answering" (chain of thought) unlocks its reasoning ability. Providing it with tools and building its memory unlocks another layer.

The author predicts that these three paths combined will deliver another 100,000-fold increase over the four years to 2027.

The most crucial insights are hidden in unhobbling:

The current model can only "think for a few minutes"—about a few hundred tokens—in a single inference. What if it could "think for months"—millions of tokens?

For example, the difference between you and Einstein is far less than the difference between "thinking for 5 minutes" and "thinking for 5 months".

If this unlocking succeeds, it would be equivalent to a further leap of 3 to 4 orders of magnitude. The first results are already visible.
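The "3 to 4 orders of magnitude" figure follows directly from the token counts mentioned above. A minimal sketch, where the specific token values are assumed stand-ins for "a few hundred" and "millions" rather than numbers from the paper:

```python
import math

def ooms_between(small: float, large: float) -> float:
    """Orders of magnitude separating two quantities."""
    return math.log10(large / small)

few_hundred_tokens = 300        # assumption: stand-in for "a few hundred tokens"
low_millions = 1_000_000        # assumption: lower end of "millions of tokens"
higher_millions = 3_000_000     # assumption: a somewhat larger budget

low_gap = ooms_between(few_hundred_tokens, low_millions)
high_gap = ooms_between(few_hundred_tokens, higher_millions)
print(f"gap: {low_gap:.1f} to {high_gap:.1f} orders of magnitude")
```

Any reasonable choice for "a few hundred" versus "millions" gives a gap in roughly this range.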

Based on this extrapolation, around 2027, an AI will emerge that can work independently for several weeks like a remote employee, planning tasks, writing code, conducting experiments, fixing bugs, and submitting results.

It can completely replace an OpenAI researcher; this is how the author defines AGI.

The biggest uncertainty comes from the data wall—high-quality, publicly available internet data is being consumed quickly.

Part Two: AGI is not the end point, but the tipping point.

What will happen once AI can do the work of AI researchers?

The computing power remains the same, but you are no longer limited to "the few hundred researchers at OpenAI, Anthropic, and DeepMind combined": you could run 100 million copies of an AI researcher, each at up to 100 times the speed of a human.

Each copy's output in a few days is equivalent to a human's work for a whole year.
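The "a year of work in a few days" claim is simple arithmetic on the 100x speed figure. A rough sketch that takes the numbers in the text at face value (both at once, which is the most aggressive reading):

```python
speedup = 100            # each copy runs at 100x human speed (from the text)
copies = 100_000_000     # 100 million copies (from the text)

# Calendar days one copy needs to do a human-year of work.
days_per_human_year = 365 / speedup

# Human-researcher-years produced per calendar day by the whole fleet,
# assuming every copy works in parallel with no coordination overhead.
researcher_years_per_day = copies * speedup / 365

print(f"{days_per_human_year:.2f} days per human-year of work")
print(f"{researcher_years_per_day:,.0f} researcher-years per calendar day")
```

The no-overhead assumption is deliberately optimistic; the compute bottlenecks the author acknowledges would reduce the second figure in practice.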

These automated researchers also have structural advantages: they can read every ML paper, remember every experiment, share their thoughts directly between copies, and learn collectively, since training one copy trains them all (no need to slowly onboard each new employee).

This means that algorithmic progress that would normally take humans 10 years could be accomplished in just 1 year.

Then, within that year, an even smarter next-generation model will emerge, and the process will continue indefinitely. From AGI to superintelligence far surpassing human capabilities, it could take only a few months to a year or two.

The author acknowledges that there are bottlenecks. For example, experiments require computing power, and no matter how many copies you run, they still need GPUs to test their ideas. However, he argues that these bottlenecks can at most slow down the explosion, not prevent it.

What does a super AI look like?

Superhuman in scale—hundreds of millions of copies running in parallel, instantly merging across all disciplines, accumulating the equivalent of a thousand years of human experience in just a few weeks.

It's qualitatively superior—like AlphaGo's Move 37 (a move that human experts couldn't have conceived of for decades)—but applicable to all fields. It can find code vulnerabilities that humans could never see in a lifetime, and write code that humans can never understand.

We will be like elementary school students trying to read a doctoral dissertation.

The consequences are: robotics is solved (mainly a problem of ML algorithms, not hardware), synthetic biology is weaponized, and stealth drone swarms can preemptively destroy nuclear weapons.

The entire international military balance will be overturned within a few years.

Part Three: Four Truly Imminent Problems

Problem 1: A trillion-dollar cluster

This competition is not just about programmers writing code; it's an industrial mobilization.

Each generation of models requires a larger cluster, and larger clusters in turn require new power plants and new chip factories.

At the current pace: 1GW cluster in 2026 (the electricity from one Hoover Dam), 10GW in 2028 (the electricity from one medium-sized US state), and 100GW in 2030 (more than 20% of all electricity generated in the US).
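To put the 100GW figure in context, here is a back-of-the-envelope sketch. The cluster sizes come from the text; the US annual generation figure is an approximate assumption added for this illustration, not a number from the paper.

```python
import math

cluster_gw = {2026: 1, 2028: 10, 2030: 100}   # cluster power by year (from the text)

# Assumption: US electricity generation is roughly 4,200 TWh per year.
US_ANNUAL_GENERATION_TWH = 4_200
HOURS_PER_YEAR = 8_760

# Average continuous US generation in GW (TWh -> GWh, then divide by hours).
us_average_gw = US_ANNUAL_GENERATION_TWH * 1_000 / HOURS_PER_YEAR

# Share of average US generation a 100GW cluster would draw if run continuously.
share_2030 = cluster_gw[2030] / us_average_gw

# Implied growth rate of cluster power in orders of magnitude per year.
ooms_per_year = math.log10(cluster_gw[2030] / cluster_gw[2026]) / (2030 - 2026)

print(f"US average generation: {us_average_gw:.0f} GW")
print(f"100GW cluster share: {share_2030:.1%}")
print(f"cluster power growth: {ooms_per_year:.2f} OOM/year")
```

Under that assumption a 100GW cluster running around the clock would draw a bit over a fifth of average US generation, consistent with the "more than 20%" claim, and the trajectory corresponds to power demand growing tenfold every two years.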

The investment scale may exceed $1 trillion per year by 2027.

The real bottleneck isn't money or GPUs, it's electricity. "Where am I going to find 10GW?" is the hottest topic in SF right now.

To build it on U.S. soil, environmental permitting must be relaxed and federal authority used to unlock land; the author elevates this to a matter of national security.

If the US can't build it, the cluster will go to the UAE or Saudi Arabia, which is equivalent to handing the AGI key to authoritarian countries.

Problem 2: The lab is leaking.

Today's AI labs have the same level of security as the average Silicon Valley startup.

Google DeepMind (the best in the industry) itself admits that its defense capabilities against nation-state adversaries are at level 0 (out of 4).

What does this mean? The "weights" of an AGI model are essentially a large file on a server.

The theft of this file is tantamount to handing "automated AI researchers" directly to China: they could immediately run their own intelligence explosion, wiping out America's entire lead.

Even more pressing are the algorithmic secrets. The key breakthroughs for the next paradigm (how to get past the data wall) are taking shape right now in the Slack channels of SF companies, in plain view through their office windows.

The author asserts that a key AGI breakthrough will leak to China within the next 12 to 24 months. Not just maybe, but almost certainly.

Reaching a level of security that can withstand China's Ministry of State Security would require hardware-level isolation, personnel vetting on par with that of atomic bomb engineers, and purpose-built cluster designs, all of which take at least several years of iteration to build. If action isn't taken immediately, security will still be lagging when AGI arrives in 2027, leaving only two dire options: press ahead and effectively hand it to a rival state, or stop and wait for security to catch up (potentially losing the lead).

Problem 3: Alignment issues

The current alignment technique is called RLHF—humans rate the model, and the model learns to please the human.

This method works when AI is less intelligent than humans. However, once AI becomes several orders of magnitude smarter than humans, this method completely fails—how do you judge a doctoral dissertation you can't understand?

The intelligence explosion makes this extremely tense:

In less than a year, the situation jumped from "RLHF still works" to "RLHF completely fails," leaving no time for iterative trial and error.

At the same time, it jumps from "minor error" (ChatGPT swearing) to "catastrophic error" (superintelligence breaking out of the cluster and hacking military systems).

Along the way the architecture will have gone through several generations, and how the final superintelligence thinks will be completely beyond our comprehension.

The current model uses English tokens for reasoning (which is relatively transparent), but it will most likely evolve into internal latent state reasoning in the future—which is completely unexplainable.

The author is optimistic about the technical solutions: there is plenty of low-hanging fruit, and interpretability research is making progress.

But he is extremely pessimistic about organizational execution: there are probably no more than a few dozen people worldwide working on this seriously, and the labs show no sign of being willing to pay a price for safety. "We're relying too heavily on luck."

Problem 4: The Free World Must Win

The author devotes considerable space to arguing that China is not out of the race and is in fact very competitive.

Computing power: Huawei's 7nm chip (made by SMIC) performs roughly on par with NVIDIA's A100. Its cost-performance ratio is 2-3 times worse, but it is sufficient for most uses.

Construction capacity: The new generating capacity China has added over the past decade equals the total installed capacity of the United States. When it comes to the industrial mobilization needed to build a 100GW cluster, China may be stronger than the United States.

Theft route: As long as US labs remain unsecured, the key algorithms will inevitably leak within two years.

There's a disturbing coincidence on the timeline: the AGI timeline (~2027) is converging with the window that Taiwan Strait watchers see for a CCP attack on Taiwan (~2027). "The AGI finale could unfold against the backdrop of a world war."

The author concludes that the United States must maintain a "healthy lead"; he suggests two years. This two-year buffer is meant to stabilize the situation and to negotiate with China over the post-superintelligence international order.

If the lead falls short of this, the entire world will be plunged into a "race for survival through the intelligence explosion": all safety margins will be cut to zero, and everyone will be racing flat out with no protection at all.

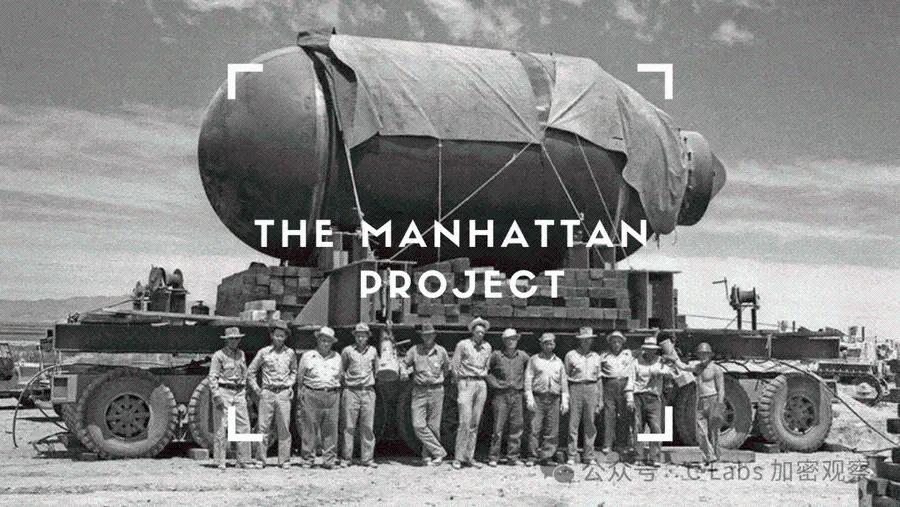

Part Four: The Project (AGI version of the Manhattan Project)

Here the author makes the boldest prediction of the entire paper.

The US government will not allow a small, independent startup to develop superintelligence. Imagine having Uber develop the atomic bomb.

Prediction: Sometime in 2027 or 2028, the U.S. national security apparatus will take over the AGI project in some form. This could take the form of a defense contractor model (OpenAI becoming Lockheed Martin), a joint venture model (cloud vendors + labs + government), or even the more extreme form of nationalization.

Core researchers will move into a SCIF (a secure facility), and the trillion-dollar cluster will be built at wartime speed.

How it comes about: The first truly frightening demonstration of capabilities will appear in 2026/27. It could be "helping novices build biological weapons," or an AI autonomously hacking into critical infrastructure.

As details of the CCP's infiltration of the labs come to light, the mood in Washington will change drastically. "Do we need an AGI Manhattan Project?" The question will become the top issue, slowly at first and then suddenly.

Why must it be the government? Because superintelligence belongs in the same category as nuclear weapons, not the internet. Private companies have never been permitted to possess complete nuclear weapons.

Only government infrastructure can deliver a level of security capable of withstanding China's full force. Managing an "intelligence explosion," a process that "rewrites everything within a year," requires a nuclear-grade chain of command, not a startup board of directors.

The author has no illusions about government efficiency (the Manhattan Project was also slowed down by bureaucracy), but he sees no better option.

Part Five: The Author's Confession

The author criticizes both opposing camps for getting it wrong:

Accelerationists (e/acc) naively believe that markets will self-regulate, ignoring the real difficulties of alignment and security.

Doomsday theorists advocate for a halt to everything—but China will not halt; to halt would be to admit defeat.

The author calls the "correct stance" AGI Realism:

① Superintelligence is a decisive technology for national security.

② The risks are real and we are not prepared.

③ The United States must undertake The Project and lead the post-superintelligence international order.

A few hundred people on a few streets in the Bay Area are determining the direction of the entire civilization.