Special thanks to Justin Drake for his feedback and review

An under-discussed but very important way Ethereum maintains its security and decentralization is its multi-client philosophy . Ethereum intentionally doesn't have a "reference client" that everyone runs by default: instead, there is a collaboratively governed specification (now written in very readable but very slow Python), and multiple teams are working on Implement the specification (also known as the "client"), which is what the user actually runs.

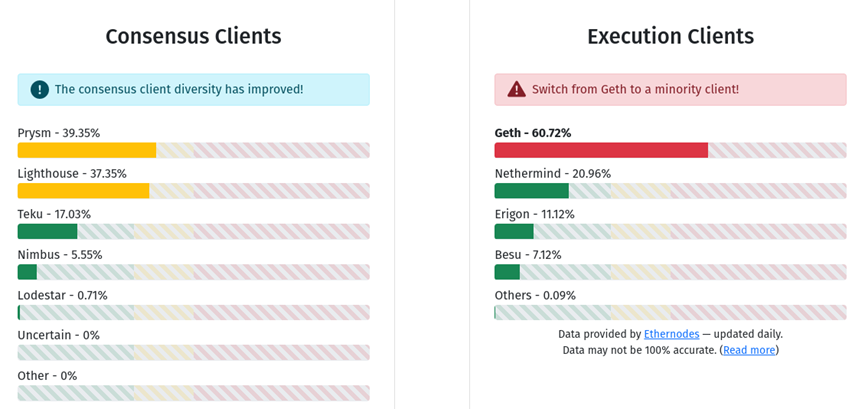

Each Ethereum node runs a consensus client and an execution client. As of now, no consensus or enforcement clients account for more than 2/3 of the network. If a client's share in its class is less than 1/3, then the network will continue to function normally. If there is a bug in a client with 1/3 to 2/3 shares in its class (e.g. Prysm, Lighthouse, or Geth), the chain continues to add blocks, but stops completing blocks, giving developers time to intervene .

An under-discussed, but still very important, upcoming major shift in the way the Ethereum chain is verified is the rise of the ZK-EVM. SNARKs proving EVM execution have been in development for years, and the technique is being actively used by L2 protocols known as ZK Rollup . Some of these ZK Rollup are currently active on mainnet, with more rolling out soon. But in the long run, ZK-EVM will not only be used for Rollup, we also want to use them to verify the execution of L1 (ref: the Verge ).

Once this happens, ZK-EVM will effectively become a third type of Ethereum client, as important to network security as execution clients and consensus clients are today. This naturally raises a question: how will the ZK-EVM interact with multiple clients? One of the hard parts has already been done: we already have multiple ZK-EVM implementations under active development. But other difficulties remain: How do we create a "multi-client" ecosystem for ZK proofs of the correctness of Ethereum blocks? This problem poses some interesting technical challenges—and, of course, the looming question of whether such a trade-off is worth it.

What was the original motivation for the Ethereum multi-client idea?

The multi-client concept of Ethereum is a kind of decentralization. Just like general decentralization, people can pay attention to the technical benefits of architectural decentralization and the social benefits of political decentralization. Ultimately, the idea of multi-client is driven by and serves both.

The Case for Technological Decentralization

The main benefit of technical decentralization is simple: it reduces the risk that a single bug in one piece of software will cause a catastrophic breakdown of the entire network. The 2010 Bitcoin overflow vulnerability is a prime example of this risk. At the time, the bitcoin client code didn't check that the sum of the transaction outputs didn't overflow (by summing over a maximum integer of 264-1, making it zero), so someone made a transaction and gave themselves billions of bitcoins. The bug was discovered within a few hours, the fix was quickly implemented and rapidly deployed across the network, but if there had been a mature ecosystem at the time, the coins would have been blocked by exchanges, bridges and other structures Accepted, the attackers may have taken a lot of money. If there are five different bitcoin clients, it is unlikely that they all have the same bug, so they will fork immediately, and the wrong side of the fork may fail.

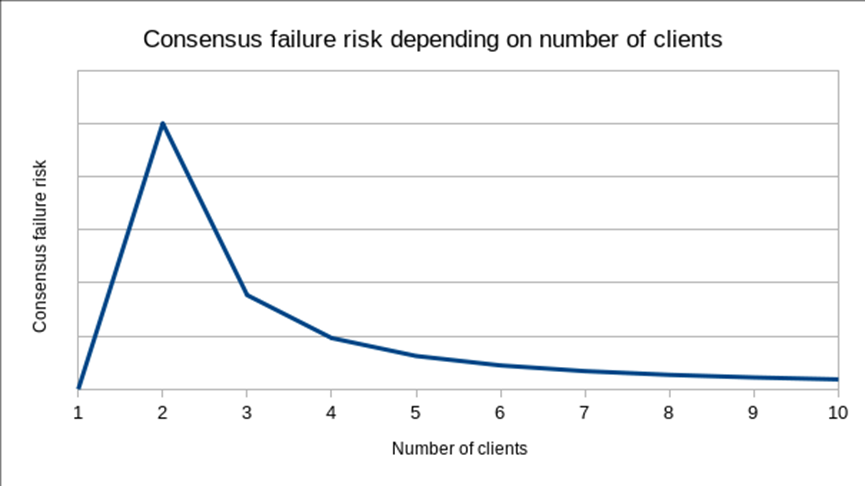

Using a multi-client approach to minimize the risk of catastrophic errors comes at a price: instead, you get consensus failure errors. That is, if you have two clients, there is a risk that the clients have subtly different interpretations of some of the protocol rules, and while both interpretations are reasonable and don't allow money to be stolen, the divergence Will cause the chain to fork in two. A severe fork of this type has occurred once in Ethereum's history (there have been other smaller splits where a very small portion of the network running an older version of the code was forked). Defenders of the single-client approach argue that consensus failures are the reason not to implement multi-client implementations: if there is only one client, then that client cannot disagree. Their model of how customer numbers translate to risk might look something like this:

Of course, I disagree with this analysis. The key points I disagree with are (i) 2010's kind of catastrophic bugs matter too, and (ii) you'll never actually have just one client . The latter point was most evident in the 2013 Bitcoin fork : a chain fork was caused by a disagreement between two different versions of the Bitcoin client, one of which accidentally limited access to the data in a single block. Modification of the number of objects. Therefore, one client in theory is often actually two clients, and five clients in theory may actually be six or seven clients—so we should take the risk, walk on the right side of the risk curve, and at least a few different clients.

The Case for Political Decentralization

Monopoly client developers are in a position of great political power. If a client developer proposes a change, and the user disagrees, they could theoretically refuse to download the updated version, or create a fork without it, but in practice, it's often difficult for users to do that. What if an unsatisfactory protocol change is bundled with a necessary security update? What if the main team threatens to quit if it doesn't get their way? Or, put more simply, if the monopoly client team ends up being the only team with the greatest protocol expertise, leaving the rest of the ecosystem at a disadvantage in judging the technical arguments made by the client team, giving the client team a huge advantage. What about creating spaces to promote their own specific goals and values that may not match those of the wider community?

Concerns about protocol politics, especially in the context of the Bitcoin OP_RETURN wars of 2013-14 , in which some participants publicly opposed specific uses of the blockchain, was an important factor in Ethereum's early adoption of the multi-client idea, whose purpose is to make such decisions more difficult for a small group of people. Concerns specific to the Ethereum ecosystem — namely, avoiding the concentration of power in the Ethereum Foundation itself — provide further support for this direction. In 2018, the Foundation made a decision to deliberately not implement the Ethereum PoS protocol (i.e., what is now known as the "consensus client"), leaving this task entirely to external teams.

How will ZK-EVM enter Layer 1 in the future?

Today, ZK-EVM is used for Rollup. This increases scalability by allowing expensive EVM execution to happen off-chain only a few times, while others only need to verify the SNARKs issued on-chain to prove the correctness of the EVM execution computation. It also allows some data (especially signatures) to be off-chain, saving gas costs. This gives us a lot of scalability benefits, and combining scalable computation with ZK-EVM and scalable data with data availability sampling allows us to scale even further.

However, today’s Ethereum network also has a different problem, one that no amount of Layer 2 scaling can solve on its own: Layer 1 is so hard to verify that not many users run their own nodes. Instead, most users simply trust third-party providers. Light clients like Helios and Succinct are taking steps to solve this problem, but the light client is far from being a fully validating node: the light client only verifies the signatures of a random subset of validators called the sync committee, not the Whether the chain actually follows the protocol rules. In order for users to be able to actually verify that the chain follows the rules, we have to do something different.

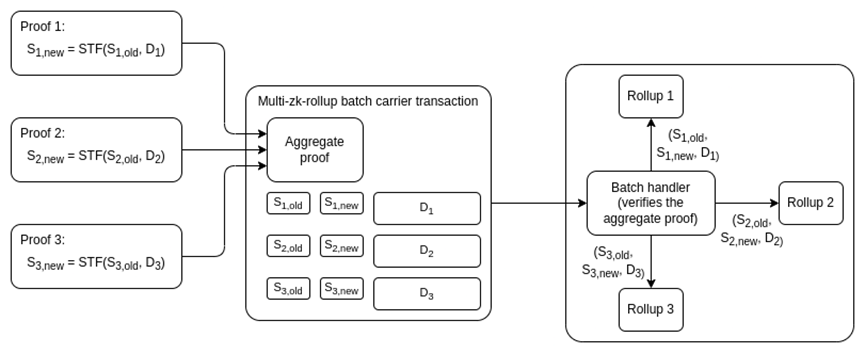

Option 1: Compress Layer 1, forcing almost all activity to move to Layer 2

Over time, we can reduce the Layer 1 gas target per block from 15 million to 1 million, enough for a block to contain a single SNARK and some deposits and withdrawals, but not much else, forcing Almost all user activity is transferred to the Layer 2 protocol. Such a design can still support the submission of many Rollup per block: we can use an off-chain aggregation protocol run by a custom builder to collect SNARKs from multiple Layer 2 protocols and combine them into a single SNARK. In such a world, the sole function of Layer 1 is to be a clearinghouse for Layer 2 protocols, verifying their proofs and occasionally facilitating large-scale transfers of funds between them.

This approach works, but it has several important weaknesses:

• It is effectively backwards incompatible because many existing L1-based applications become economically unviable. Hundreds or thousands of dollars in user funds could be stranded as fees become prohibitive, exceeding the cost of emptying these accounts. This problem could be solved by having users sign a message to opt in to a mass migration within the protocol to an L2 of their choice (see some early implementation ideas here), but this adds complexity to the transition, and to make it real Cheap enough to require some sort of SNARK at Layer 1 anyway. I usually like breaking backwards compatibility when it comes to things like the SELFDESTRUCT opcode, but in this case the tradeoff seems less favorable.

• It may still not make verification cheap enough. Ideally, the Ethereum protocol should be easy to verify, not just on laptops, but on phones, browser extensions, and even in other chains. It should also be easy to sync the chain for the first time, or after being offline for a long time. A laptop node can verify 1 million gas in about 20 ms, but even that means it takes 54 seconds to sync after being offline for a day (assuming a single slot final result increases the slot time to 32 seconds), whereas for On phones or browser extensions, each chunk takes a few hundred milliseconds, and may still be a non-negligible battery drain. These numbers are manageable, but not ideal.

• Even in L2-first ecosystems, L1 is at least somewhat affordable. Validiums could benefit from a stronger security model if users can withdraw their funds upon noticing that new state data is no longer available. If the minimum size of an economically viable direct transfer across L2 is smaller, arbitrage becomes more efficient, especially for smaller tokens.

So it seems more reasonable to try to find a way to use ZK-SNARKs to verify Layer 1 itself.

Option 2: SNARK – Verify Layer 1

Type 1 (fully equivalent to Ethereum) ZK-EVM can be used to verify the EVM execution of (Layer 1) Ethereum blocks. We can write more SNARK code to verify the consensus side of the block. This will be a challenging engineering problem: today, ZK-EVMs take minutes to hours to verify Ethereum blocks, and generating proofs in real time will require one or more (i) improvements to Ethereum itself to remove SNARK-unfriendly components, (ii) substantial efficiency gains through dedicated hardware, and (iii) architectural improvements with more parallelization. However, there's no fundamental technical reason why this couldn't be done -- so I expect it to happen, even if it takes many years.

Here we see an intersection with the multi-client paradigm: If we use a ZK-EVM to verify Layer 1, which ZK-EVM do we use?

I have three options:

1. Single ZK-EVM: Abandon the multi-client paradigm and choose a single ZK-EVM to verify blocks.

2. Closed multi-ZK-EVM: Reach a consensus on a specific set of multiple ZK-EVMs and seal them in the consensus layer protocol rules, that is, a block needs to be proved by more than half of the ZK-EVMs in the set to be accepted considered valid.

3. Open multi-ZK-EVM: Different clients have different ZK-EVM implementations, and each client waits for a proof of compatibility with its own implementation before accepting a valid block.

To me, (3) seems ideal, at least until our technology advances to the point where we can formally prove that all ZK-EVM implementations are equivalent to each other, so that we can choose the most efficient one. (1) will sacrifice the benefits of the multi-client model, (2) will close the possibility of developing new clients and lead to a more centralized ecosystem. (3) There are challenges, but those seem to be less challenging than those of the other two options, at least for now.

Implementing (3) won't be too hard: each type of proof has a p2p subnetwork, and clients using one type of proof listen and wait on the corresponding subnetwork until they receive a proof that the verifier considers valid .

The two main challenges of (3) can be as follows:

• Delay challenges : Malicious attackers may delay publishing blocks and proofs of validity to a client. Generating proofs that are valid for other clients actually takes a long time (even for example 15 seconds). This is long enough that a temporary fork may be created and break the chain for a few slots.

• Data inefficiency : One benefit of ZK-SNARKs is that only data relevant to verification (sometimes called "witness data") can be removed from a block. For example, once a signature is verified, there is no need to save the signature in a block, just store a bit indicating that the signature is valid, and store a proof in the block that all valid signatures are present. However, if we want to be able to generate multiple types of proofs for a block, the original signature needs to be actually published.

Latency issues can be addressed by careful handling when designing single-slot termination protocols. A single-slot finality protocol may require more than two rounds of consensus per slot, and thus may require the first round to include blocks, and only require nodes to verify proofs before signing in the third (or final) round. This ensures that there is always a significant window of time between the deadline for publishing blocks and the time when proofs are expected to be available.

Data efficiency issues must be addressed by aggregating verification-related data with a separate protocol. For signatures, we can use BLS aggregation which is already supported by ERC-4337 . Another major category of verification-related data is ZK-SNARKs for privacy . Fortunately, these protocols usually have their own aggregation protocols .

It is worth mentioning that there is another important benefit of SNARK verification Layer 1: EVM execution on the chain no longer requires each node to perform verification, which greatly increases the number of EVM executions. This can be achieved by significantly increasing the Layer 1 gas cap, or introducing enshrined rollups , or both.

in conclusion

It takes a lot of work to make an open multi-client ZK-EVM ecosystem work well. But the really good news is that most of the work is going on or about to happen:

• We already have multiple robust ZK-EVM implementations. These implementations are not yet Type 1 (fully equivalent to Ethereum) , but many of them are actively moving in this direction.

• Work on light clients such as Helios and Succinct may eventually turn into more complete SNARK verification on the PoS consensus side of the Ethereum chain.

• Clients may start experimenting with ZK-EVM to prove execution of Ethereum blocks, especially when we have stateless clients that technically don't need to directly re-execute each block to maintain state. We may move from clients verifying Ethereum blocks by re-execution to most clients verifying Ethereum blocks by checking SNARK proofs, which will be a slow and gradual transition.

• The ERC-4337 and PBS ecosystem may soon start using aggregation techniques such as BLS and proof aggregation to save gas costs. Work has already begun on the BLS aggregate.

With these technologies, the future looks very good. Ethereum blocks will be smaller than today, and anyone can run a fully validating node on their laptop, or even their phone, or in a browser extension, all while preserving the Ethereum multi-client philosophy occur simultaneously.

In the long run, of course anything can happen. Perhaps AI will strengthen formal verification so that it can easily prove that ZK-EVM implementations are equivalent and identify any errors that cause differences between them. Such a project might even be something to work on right now. If this formal verification-based approach is to succeed, different mechanisms will need to be put in place to ensure the continued politically decentralized enforcement of the protocol. Perhaps by then, the protocol will be considered "complete" and the immutability specification will be stronger. But even if this is the longer term future, the open world of multi-client ZK-EVM seems like a natural stepping stone that could happen anyway.

In the near term, it's still a long journey. ZK-EVM is already here, but for ZK-EVM to be truly viable at Layer 1, they need to be Type 1 and prove fast enough that it can happen in real-time. With enough parallelization, this is doable, but it's still a lot of work to get there. Consensus changes like increasing the gas cost of KECCAK, SHA256, and other hash function precompilations will also be a big part of the picture. That said, the first steps of the transition may happen sooner than we expect: once we switch to Verkle trees and stateless clients, clients can start using the ZK-EVM gradually, moving towards an "open multi-ZK- EVM" world transition may begin on its own.