Original author: Biteye core contributor Fishery Isla

Original editor: Biteye core contributor Crush

Original source: @BiteyeCN

The Dencun network upgrade went live on the Goerli testnet on January 17, 2024, and on the Sepolia testnet on January 30. Mainnet activation is drawing steadily closer.

After one more testnet upgrade, on Holesky on February 7, only the mainnet will remain. The Dencun mainnet upgrade has been officially scheduled for March 13.

Almost every Ethereum upgrade is accompanied by a wave of price speculation around its theme. Looking back at the previous upgrade, Shanghai, on April 12, 2023, PoS-related projects were eagerly sought after by the market.

If history repeats itself, Dencun may also offer an opportunity to position early.

Because the technology behind Dencun is relatively obscure, it cannot be summed up in a single sentence the way the Shanghai upgrade could ("staked ETH can finally be withdrawn", completing Ethereum's shift from PoW to PoS), which makes it hard to see where the opportunities lie.

This article therefore explains the technical details of Dencun in plain language and helps readers understand how the upgrade relates to data availability (DA) and Layer 2.

EIP-4844

EIP-4844 is the most important proposal in the Dencun upgrade and marks a practical, significant step toward scaling Ethereum in a decentralized manner.

Today, Ethereum Layer 2 networks must post the transactions that occur on Layer 2 to the Ethereum mainnet as calldata, so that nodes can verify the validity of the blocks the Layer 2 network produces.

The problem is cost. Even though the transaction data is compressed as much as possible, Layer 2's huge transaction volume multiplied by the high price of storing data on the Ethereum mainnet still adds up to a significant expense for Layer 2 nodes and users. Price alone can drive many users away from Layer 2 to sidechains.

EIP-4844 introduces a new, cheaper storage area called the BLOB (Binary Large Object), along with a new transaction type, the "BLOB-Carrying Transaction", which points to BLOB storage. Transaction data that previously had to be stored in calldata can now go into BLOBs instead, saving gas for the Layer 2 side of the Ethereum ecosystem.
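To make the savings concrete, here is a rough back-of-the-envelope sketch. It assumes calldata pricing from EIP-2028 (16 gas per non-zero byte, 4 per zero byte) and the EIP-4844 blob sizing of 4096 field elements of 32 bytes each, with each blob consuming 2^17 blob gas. It ignores the 21000 base transaction cost, and it treats the full 128 KiB as usable payload even though, in practice, each field element must stay below the BLS12-381 scalar modulus. Note that blob gas is priced in its own fee market, separate from ordinary gas, so the two numbers are not directly comparable in ETH terms; the point is that blob data no longer competes for regular execution gas.

```python
# Calldata pricing per EIP-2028 (in effect at Dencun).
CALLDATA_GAS_PER_ZERO_BYTE = 4
CALLDATA_GAS_PER_NONZERO_BYTE = 16

# Blob sizing per EIP-4844: 4096 field elements x 32 bytes = 131072 bytes (128 KiB).
FIELD_ELEMENTS_PER_BLOB = 4096
BYTES_PER_FIELD_ELEMENT = 32
BLOB_SIZE = FIELD_ELEMENTS_PER_BLOB * BYTES_PER_FIELD_ELEMENT
GAS_PER_BLOB = 2**17  # blob gas per blob, charged in the separate blob fee market


def calldata_gas(data: bytes) -> int:
    """Gas charged for carrying `data` as calldata (excluding the 21000 base cost)."""
    zeros = data.count(0)
    return zeros * CALLDATA_GAS_PER_ZERO_BYTE + (len(data) - zeros) * CALLDATA_GAS_PER_NONZERO_BYTE


def compare_costs(data: bytes) -> dict:
    """Simplification: assumes every byte of a blob is usable payload."""
    blobs = -(-len(data) // BLOB_SIZE)  # ceiling division
    return {
        "calldata_gas": calldata_gas(data),
        "blobs": blobs,
        "blob_gas": blobs * GAS_PER_BLOB,
    }


if __name__ == "__main__":
    # One blob's worth of non-zero bytes.
    print(compare_costs(b"\x01" * BLOB_SIZE))
    # → {'calldata_gas': 2097152, 'blobs': 1, 'blob_gas': 131072}
```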

Why BLOB storage is cheap

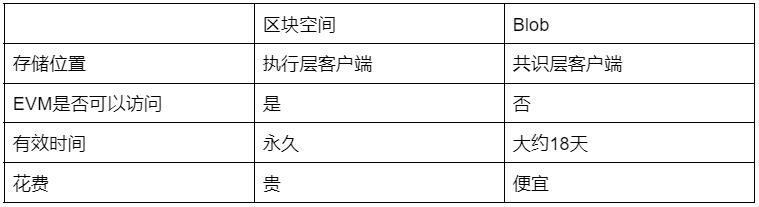

Cheapness comes at a price. BLOB data is cheaper than ordinary Ethereum calldata of similar size because the Ethereum execution layer (EL, the EVM) cannot actually access the BLOB data itself.

Instead, the EL can only access a reference to the BLOB data, while the BLOB contents themselves are downloaded and stored only by Ethereum's consensus layer (CL, the beacon nodes). Storing data this way consumes far less memory and computation than ordinary Ethereum calldata.
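As a sketch of what "a reference to the BLOB" looks like: under EIP-4844 the transaction carries a versioned hash of each BLOB's 48-byte KZG commitment, and contracts can read it through the BLOBHASH opcode. The helper below follows the EIP's definition (a 0x01 version byte followed by the last 31 bytes of the SHA-256 of the commitment). The all-zero commitment in the usage line is a placeholder, not a real KZG commitment.

```python
import hashlib

# Version byte defined by EIP-4844 for KZG commitments.
VERSIONED_HASH_VERSION_KZG = 0x01


def kzg_to_versioned_hash(commitment: bytes) -> bytes:
    """Turn a 48-byte KZG commitment into the 32-byte versioned hash the EVM sees."""
    if len(commitment) != 48:
        raise ValueError("a KZG commitment is a 48-byte compressed G1 point")
    digest = hashlib.sha256(commitment).digest()
    # Replace the first byte of the SHA-256 digest with the version byte.
    return bytes([VERSIONED_HASH_VERSION_KZG]) + digest[1:]


if __name__ == "__main__":
    placeholder_commitment = bytes(48)  # NOT a real commitment, just 48 zero bytes
    print(kzg_to_versioned_hash(placeholder_commitment).hex())
```

Because the first byte names the commitment scheme, a later upgrade could switch to a different scheme without changing how contracts refer to BLOBs.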

BLOBs have another property: they are stored only for a limited period (about 18 days), so they do not grow without bound the way the Ethereum ledger does.

BLOB storage validity period

In contrast to the blockchain's permanent ledger, BLOB is temporary storage that is available for 4096 epochs, or approximately 18 days.

After expiration, most consensus clients can no longer retrieve the data in a BLOB. However, proof that it once existed, in the form of its KZG commitment, remains permanently on the Ethereum mainnet.

Why 18 days? It is a trade-off between storage cost and usefulness.

First, consider the most direct beneficiaries of this upgrade: Optimistic Rollups such as Arbitrum and Optimism. By design, Optimistic Rollups have a 7-day fraud proof window.

The transaction data stored in BLOBs is exactly what an Optimistic Rollup needs when someone launches a challenge.

Therefore, the BLOB retention period must keep the data accessible for Optimistic Rollup fraud proofs. For simplicity, the Ethereum community chose a power of two: 2^12 = 4096 epochs, where one epoch is approximately 6.4 minutes.
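The arithmetic behind "about 18 days" is easy to check. This small sketch assumes the mainnet timing parameters (12-second slots, 32 slots per epoch) and the consensus-spec retention constant of 2^12 epochs, then compares the result with the 7-day fraud proof window mentioned above.

```python
SECONDS_PER_SLOT = 12
SLOTS_PER_EPOCH = 32
SECONDS_PER_DAY = 24 * 60 * 60

# Consensus-spec (Deneb) retention constant: 2**12 epochs.
MIN_EPOCHS_FOR_BLOB_SIDECARS_REQUESTS = 2**12

FRAUD_PROOF_WINDOW_DAYS = 7  # typical Optimistic Rollup challenge period


def epoch_minutes() -> float:
    """Length of one epoch in minutes."""
    return SLOTS_PER_EPOCH * SECONDS_PER_SLOT / 60


def retention_days(epochs: int = MIN_EPOCHS_FOR_BLOB_SIDECARS_REQUESTS) -> float:
    """How long `epochs` epochs last, in days."""
    return epochs * SLOTS_PER_EPOCH * SECONDS_PER_SLOT / SECONDS_PER_DAY


if __name__ == "__main__":
    days = retention_days()
    print(f"one epoch: {epoch_minutes():.1f} minutes")
    print(f"retention: {days:.1f} days")
    print(f"slack beyond a {FRAUD_PROOF_WINDOW_DAYS}-day challenge: {days - FRAUD_PROOF_WINDOW_DAYS:.1f} days")
```

The window comes out to roughly 18.2 days, leaving about 11 days of margin beyond the challenge period.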

BLOB-Carrying Transaction and BLOB

Understanding the relationship between the two is important to understanding the role of BLOBs in data availability (DA).

The former is the new transaction type introduced by EIP-4844, while the latter can be understood as temporary storage for Layer 2 transaction data.

Most of the data carried by a BLOB-Carrying Transaction (the Layer 2 transaction data) is stored in the BLOB. What remains on mainnet is a commitment to the BLOB's contents, carried in the transaction itself as a versioned hash of that commitment. In other words, the commitment can be read by the EVM.

You can picture the Commitment like this: build all the transactions in the BLOB into a Merkle tree; only the Merkle root, which plays the role of the Commitment, is accessible to contracts.

The design is clever: although the EVM cannot see the contents of the BLOB, a contract that knows the Commitment can still verify the authenticity of any transaction data presented to it.
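The Merkle-tree analogy can be sketched in a few lines of Python. This is illustrative only: as the article notes later, Dencun actually uses KZG commitments rather than a Merkle tree, and the helper names here (`merkle_root`, `merkle_proof`, `verify_inclusion`) are hypothetical. The contract side only needs to hold the root; anyone holding a transaction plus its short proof can show that the transaction was part of the committed data. The toy hashes leaves and inner nodes the same way and duplicates the last node on odd levels, which a production tree would handle more carefully.

```python
import hashlib
from typing import List, Tuple


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _levels(leaves: List[bytes]) -> List[List[bytes]]:
    """All tree levels, from hashed leaves up to the root."""
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [_h(leaf) for leaf in leaves]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level = level + [level[-1]]  # odd level: pair the last node with itself
        level = [_h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def merkle_root(leaves: List[bytes]) -> bytes:
    """The only value the contract needs to store (the 'Commitment')."""
    return _levels(leaves)[-1][0]


def merkle_proof(leaves: List[bytes], index: int) -> List[Tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True when the sibling sits on the right."""
    proof = []
    for level in _levels(leaves)[:-1]:
        sibling = index ^ 1
        if sibling >= len(level):
            sibling = index
        proof.append((level[sibling], index % 2 == 0))
        index //= 2
    return proof


def verify_inclusion(leaf: bytes, proof: List[Tuple[bytes, bool]], root: bytes) -> bool:
    """What a contract would check: does this leaf hash up to the stored root?"""
    node = _h(leaf)
    for sibling, sibling_on_right in proof:
        node = _h(node + sibling) if sibling_on_right else _h(sibling + node)
    return node == root


if __name__ == "__main__":
    txs = [b"tx0", b"tx1", b"tx2"]
    root = merkle_root(txs)
    print(verify_inclusion(b"tx1", merkle_proof(txs, 1), root))     # True
    print(verify_inclusion(b"forged", merkle_proof(txs, 1), root))  # False
```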

The relationship between BLOB and Layer 2

Rollups achieve data availability (DA) by uploading data to the Ethereum mainnet, but the goal is not for L1 smart contracts to read or verify this data directly.

The purpose of uploading transaction data to L1 is simply to allow all participants to view the data.

Before Dencun, as mentioned above, Optimistic Rollups published their transaction data to Ethereum as calldata, so anyone could use it to reconstruct the Layer 2 state and verify that the network was operating correctly.
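The idea of reconstructing state from published data can be illustrated with a toy replay. Everything here is a hypothetical simplification: transactions are plain balance transfers, and the "state commitment" is a SHA-256 over canonical JSON, whereas real rollups execute full EVM transactions and commit to a Merkle-style state root. The shape of the check is the same: replay the published data from a known starting state and compare the result with what the operator claimed.

```python
import hashlib
import json
from typing import Dict, List, Tuple

# Hypothetical toy transaction format: (sender, recipient, amount).
Transfer = Tuple[str, str, int]


def replay(genesis: Dict[str, int], txs: List[Transfer]) -> Dict[str, int]:
    """Re-execute published transactions in order, starting from the genesis balances."""
    state = dict(genesis)
    for sender, recipient, amount in txs:
        if amount <= 0 or state.get(sender, 0) < amount:
            raise ValueError(f"invalid transfer {sender} -> {recipient}: {amount}")
        state[sender] -= amount
        state[recipient] = state.get(recipient, 0) + amount
    return state


def state_digest(state: Dict[str, int]) -> str:
    """Toy state commitment: SHA-256 of the canonical (key-sorted) JSON encoding."""
    return hashlib.sha256(json.dumps(state, sort_keys=True).encode()).hexdigest()


def check_claimed_state(genesis: Dict[str, int], txs: List[Transfer], claimed_digest: str) -> bool:
    """True if the operator's claimed state matches what the published data implies."""
    return state_digest(replay(genesis, txs)) == claimed_digest


if __name__ == "__main__":
    genesis = {"alice": 10}
    txs = [("alice", "bob", 3), ("bob", "carol", 1)]
    honest = state_digest({"alice": 7, "bob": 2, "carol": 1})
    forged = state_digest({"alice": 7, "bob": 3, "carol": 1})
    print(check_claimed_state(genesis, txs, honest))  # True
    print(check_claimed_state(genesis, txs, forged))  # False
```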

Clearly, rollup transaction data needs to be cheap as well as open and transparent. Calldata is not a good place to store data meant purely for Layer 2, whereas the BLOB-Carrying Transaction is tailor-made for rollups.

At this point you may be wondering: if contracts never read this transaction data, what is it actually for?

In fact, transaction data is only used in a few cases:

For Optimistic Rollups, state updates are assumed to be honest unless challenged, so a dishonest operator may still post an invalid state. This is when the transaction data the rollup uploaded comes in handy: users can use it to initiate a challenge (a fraud proof);

For ZK Rollups, the zero-knowledge proof already proves that each state update is correct. The uploaded data only lets users compute the complete state themselves, which they need if the Layer 2 nodes stop operating correctly and the escape hatch mechanism (Escape Hatch, which requires the complete L2 state tree) is triggered. This is discussed in the last section.

So the scenarios in which contracts actually use transaction data are very limited. Even in an Optimistic Rollup challenge, it is enough to submit, at the time of the challenge, evidence that the relevant transaction data existed; the transaction details do not need to be stored on mainnet in advance.

So if we put the transaction data in a BLOB, the contract cannot access it, but a mainnet contract can still store the BLOB's Commitment.

Later, if the challenge mechanism needs a particular transaction, one only needs to supply that transaction's data and show that it matches the stored Commitment. That is enough to convince the contract and hand the transaction data to the challenge mechanism.

This not only takes advantage of the openness and transparency of transaction data, but also avoids the huge gas cost of entering all data into the contract in advance.

By recording only Commitments, rollups keep their transaction data verifiable while greatly reducing costs. It is an ingenious and efficient way for rollups to publish their transaction data.

Note that Dencun does not actually generate the Commitment with a Merkle tree, as Celestia does; it uses the KZG (Kate-Zaverucha-Goldberg) polynomial commitment scheme instead.

Compared with a Merkle proof, generating a KZG proof is relatively complex, but the proof is smaller and verification is simpler. The downsides are that KZG requires a trusted setup (the ceremony at ceremony.ethereum.org has now concluded) and is not resistant to quantum computing attacks. Dencun mitigates the latter by referencing commitments through versioned hashes, so the verification scheme can be swapped out if necessary.
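To give a feel for why a KZG proof is small and simple to check, here is a deliberately insecure toy that keeps only the algebra. A polynomial p is committed to by its value at a secret point s. To prove p(z) = y, the prover sends q(s), where q(X) = (p(X) - y)/(X - z), and the verifier checks p(s) - y = q(s)(s - z). In real KZG, s comes from the trusted setup ceremony and is then discarded; only elliptic-curve points encoding powers of s are published, and the final check is done with a pairing, so nobody learns s. Here s is a hard-coded, hypothetical constant and there is no elliptic curve at all. The toy also works with polynomial coefficients, whereas EIP-4844 treats a blob's 4096 field elements as evaluations of the polynomial.

```python
# BLS12-381 scalar field modulus, the field that blob elements live in.
R = 0x73EDA753299D7D483339D80809A1D80553BDA402FFFE5BFEFFFFFFFF00000001

# INSECURE, hypothetical stand-in for the trusted-setup secret. In real KZG
# nobody knows s; only curve points [s^i]G are published.
TOY_SECRET_S = 123456789


def evaluate(coeffs, x):
    """Horner evaluation mod R; coeffs run from highest degree to constant."""
    acc = 0
    for c in coeffs:
        acc = (acc * x + c) % R
    return acc


def divide_by_linear(coeffs, z):
    """Synthetic division of p(X) by (X - z): returns (quotient coeffs, remainder p(z))."""
    partial = []
    acc = 0
    for c in coeffs:
        acc = (acc * z + c) % R
        partial.append(acc)
    remainder = partial.pop()
    return partial, remainder


def commit(coeffs):
    """Toy commitment: p(s). Real KZG publishes p(s)*G without revealing s."""
    return evaluate(coeffs, TOY_SECRET_S)


def open_at(coeffs, z):
    """Claimed value y = p(z) and proof q(s), with q(X) = (p(X) - y) / (X - z)."""
    quotient, y = divide_by_linear(coeffs, z)
    return y, evaluate(quotient, TOY_SECRET_S)


def verify(commitment, z, y, proof):
    """Toy check of p(s) - y == q(s) * (s - z); real KZG does this with a pairing."""
    return (commitment - y) % R == proof * (TOY_SECRET_S - z) % R


if __name__ == "__main__":
    p = [3, 0, 2, 7]  # 3X^3 + 2X + 7
    c = commit(p)
    y, proof = open_at(p, 5)
    print(y)                            # 392
    print(verify(c, 5, y, proof))       # True
    print(verify(c, 5, y + 1, proof))   # False
```

Whatever the polynomial's degree, the proof is a single value, which is why verification stays cheap.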

Celestia, the currently popular DA project, uses a variant of the Merkle tree. Compared with KZG, it relies to some extent on the honesty of nodes, but it lowers the computing resources required to run a node, helping keep the network stable and decentralized.

Dencun Opportunities

While EIP-4844 reduces costs and improves efficiency for Layer 2, it also introduces a security risk, and that risk creates new opportunities.

To understand why, we need to go back to the escape hatch mechanism or forced withdrawal mechanism mentioned above.

If Layer 2 nodes stop functioning, this mechanism ensures that user funds can be safely returned to mainnet. To trigger it, however, the user needs the complete Layer 2 state tree.

Normally, a user only needs to request the data from a Layer 2 full node, generate a Merkle proof, and submit it to the mainnet contract to prove that the withdrawal is legitimate.

But remember: the user wants to use the escape hatch to exit L2 precisely because the L2 nodes have acted maliciously. In that case, the user is very unlikely to get the needed data from those same nodes.

This is what Vitalik often refers to as a data withholding attack.

Before EIP-4844, Layer 2 data was recorded permanently on mainnet. If no Layer 2 node would provide the complete off-chain state, users could run a full node themselves.

That full node could retrieve all the historical data the Layer 2 sequencer had published to mainnet. From it, users could construct the required Merkle proof and submit it to the mainnet contract to safely withdraw their L2 assets.

After EIP-4844, Layer 2 data exists only in BLOBs held by Ethereum consensus nodes, and data older than about 18 days is automatically deleted.
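The pruning behavior can be modeled with a small, hypothetical store keyed by slot. It assumes a node keeps blobs only for the most recent 4096 epochs and drops anything older; actual clients may differ in detail and can often be configured to retain data longer, which is exactly the kind of voluntary storage the next paragraph discusses.

```python
from typing import Dict, List

SLOTS_PER_EPOCH = 32
MIN_EPOCHS_FOR_BLOB_SIDECARS_REQUESTS = 4096  # about 18 days


class ToyBlobStore:
    """Hypothetical, simplified model of a consensus client's blob storage."""

    def __init__(self) -> None:
        self._by_slot: Dict[int, List[bytes]] = {}

    def add(self, slot: int, blob: bytes) -> None:
        self._by_slot.setdefault(slot, []).append(blob)

    def prune(self, current_slot: int) -> None:
        """Drop blobs from epochs older than the retention window."""
        current_epoch = current_slot // SLOTS_PER_EPOCH
        oldest_kept_epoch = max(0, current_epoch - MIN_EPOCHS_FOR_BLOB_SIDECARS_REQUESTS)
        for slot in list(self._by_slot):
            if slot // SLOTS_PER_EPOCH < oldest_kept_epoch:
                del self._by_slot[slot]

    def get(self, slot: int) -> List[bytes]:
        return self._by_slot.get(slot, [])


if __name__ == "__main__":
    store = ToyBlobStore()
    store.add(0, b"L2 batch from epoch 0")
    store.add(32, b"L2 batch from epoch 1")
    # Advance to epoch 4097: epoch 0 is now outside the window.
    store.prune(current_slot=(MIN_EPOCHS_FOR_BLOB_SIDECARS_REQUESTS + 1) * SLOTS_PER_EPOCH)
    print(store.get(0))   # []
    print(store.get(32))  # [b'L2 batch from epoch 1']
```

Once `prune` has run, nothing in the mainnet protocol itself can bring the epoch-0 batch back, which is the gap described below.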

So the approach in the previous paragraph, rebuilding the full state tree by syncing mainnet, no longer works. To obtain the complete Layer 2 state tree, users must rely either on a third party that stores all Ethereum BLOB data out of goodwill (ordinary mainnet nodes delete it automatically after about 18 days) or on Layer 2's own nodes (of which there are very few).

Once EIP-4844 is live, it will be very difficult for users to obtain the complete Layer 2 state tree in a fully trustless way.

Without a reliable way to obtain the Layer 2 state tree, users cannot force a withdrawal in extreme conditions. In this sense, EIP-4844 weakens Layer 2 security to some extent.

To close this gap, we need a trustless storage solution with self-sustaining economic incentives. Storage here mainly means retaining Ethereum data in a trustless manner, which sets it apart from earlier storage projects: the keyword is "trustless".

Ethstorage aims to solve this trustless storage problem and has received two rounds of funding from the Ethereum Foundation.

This concept directly addresses the gap left by the Dencun upgrade, making it a track well worth watching.

First, and most directly, Ethstorage can extend the availability of DA BLOB data in a fully decentralized manner, shoring up Layer 2's weakest security link after EIP-4844.

Furthermore, most existing L2 solutions mainly focus on expanding Ethereum’s computing power, i.e. increasing TPS. However, the need to securely store large amounts of data on the Ethereum mainnet has surged, especially due to the popularity of dApps such as NFTs and DeFi.

On-chain NFTs are an obvious example: users want to own not just the token in the NFT contract but also the image itself, on-chain. Ethstorage removes the extra trust assumptions that come with storing those images with a third party.

Finally, Ethstorage can serve the front ends of decentralized dApps. Today these are mostly hosted on centralized servers behind DNS, which leaves websites exposed to censorship as well as DNS hijacking, website hacks, and server crashes, as incidents such as Tornado Cash have shown.

Ethstorage is still in the initial network testing stage, and users who are optimistic about the prospects of this track can try it out.