Prompt them with what you are going to prompt them, prompt them, then prompt them with what you just prompted them.

BURKOV

@burkov

02-18

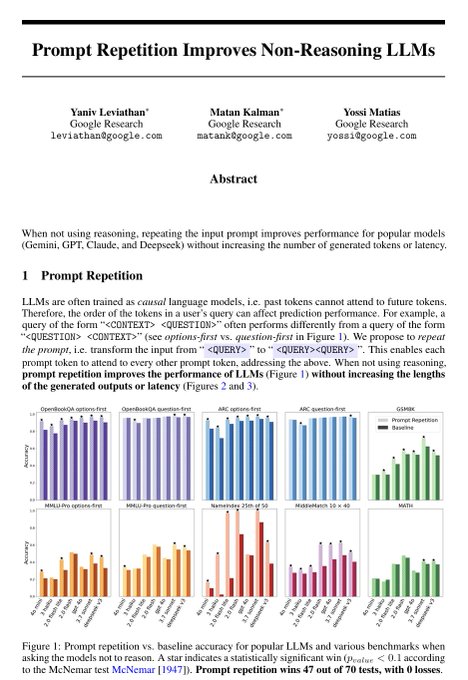

LLMs process text from left to right — each token can only look back at what came before it, never forward. This means that when you write a long prompt with context at the beginning and a question at the end, the model answers the question having "seen" the context, but the
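The left-to-right constraint described in the post can be sketched as a causal mask. This is a minimal illustration, not the author's code: the token names (`ctx_1`, `q_1`, and so on) and the helpers `causal_mask` and `visible` are hypothetical, and real models apply the mask to attention scores rather than to token strings.

```python
def causal_mask(n):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(n)] for i in range(n)]


# Hypothetical toy prompt: context tokens first, question tokens last.
tokens = ["ctx_1", "ctx_2", "q_1", "q_2"]
mask = causal_mask(len(tokens))


def visible(i):
    """Return the tokens that position i is allowed to look at."""
    return [tokens[j] for j in range(len(tokens)) if mask[i][j]]


# A context token can only look back, so it never sees the question.
print(visible(0))
# The last question token can look back at the whole prompt.
print(visible(3))
```

In this toy setup the context positions are computed without any view of the question, while the question positions can see all of the context.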

Source: Twitter
