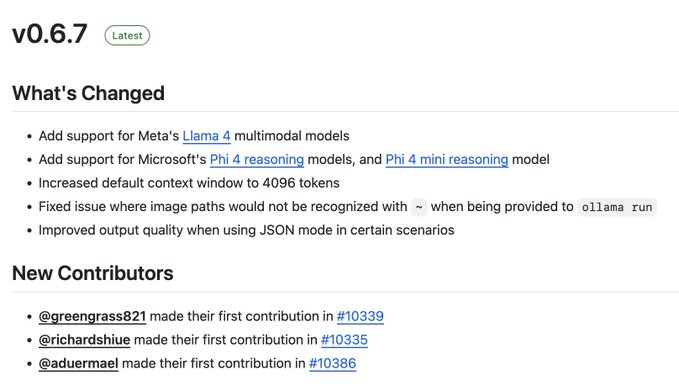

Ollama v0.6.7 is out, with support for Meta's Llama 4 (Scout and Maverick) and Microsoft's new Phi 4 reasoning models. The default context length is now 4096 tokens.
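
If the 4096-token default is too small for a given prompt, the context length can be set per request. Here is a minimal sketch, assuming a local Ollama server on its default address (`http://localhost:11434`) and the REST API's `options.num_ctx` field. The helper names `build_generate_payload` and `generate` are made up for this example and are not part of Ollama.

```python
import json
import urllib.request

# Assumption: a local Ollama server listening on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_payload(model: str, prompt: str, num_ctx: int = 4096) -> dict:
    """Build a request body for /api/generate with an explicit context length."""
    if num_ctx <= 0:
        raise ValueError("num_ctx must be a positive number of tokens")
    return {
        "model": model,
        "prompt": prompt,
        "stream": False,  # ask for a single JSON object instead of a stream
        "options": {"num_ctx": num_ctx},  # per-request context length
    }


def generate(payload: dict, url: str = OLLAMA_URL) -> str:
    """POST the payload to the server and return the generated text."""
    data = json.dumps(payload).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]
```

With a server running and a model already pulled, a call might look like `generate(build_generate_payload("llama4:scout", "Hello", num_ctx=8192))`. The model tag here is an assumption, so check the name in your local model list. Larger context lengths use more memory.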

From Twitter

