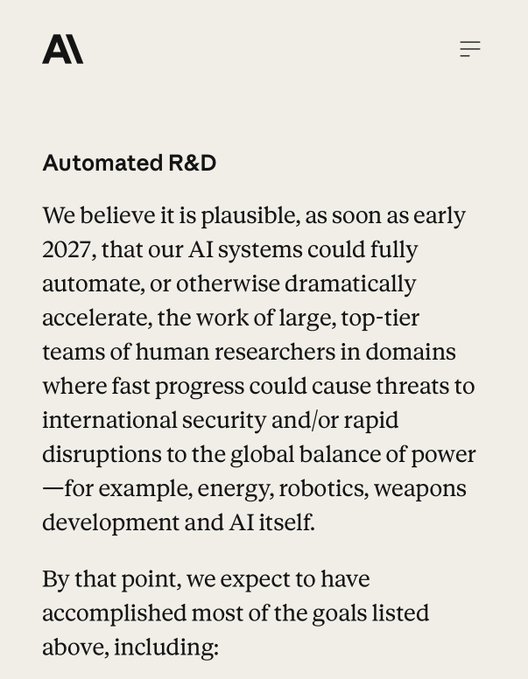

One year left: “We believe it is plausible, as soon as early 2027, that our AI systems could fully automate, or otherwise dramatically accelerate, the work of large, top-tier teams of human researchers in domains where fast progress could cause threats to international security and/or rapid disruptions to the global balance of power, for example, energy, robotics, weapons development and AI itself.”

Anthropic

@AnthropicAI

02-25

We’re now separating the safety commitments we’ll make unilaterally and our recommendations for the industry.

We’re also committing to publish new Frontier Safety Roadmaps with detailed safety goals, and Risk Reports that quantify risk across all our deployed models.

From Twitter

