This article is machine translated

Will 2029 be a life-or-death test for Bitcoin?

Yesterday, an article from Google caused a major stir in the Bitcoin community. It shattered the illusion that the "quantum threat is still far off," pulling the expected 10-20 year timeline forward to 2029 (after the 2028 Bitcoin halving).

Google pointed out that a sufficiently powerful quantum computer could derive a private key from a public key in 9 minutes. Spending a coin broadcasts its public key to the network, and the average Bitcoin block time is 10 minutes, so an attacker could derive the key and broadcast a forged, competing transaction before the original one is confirmed.

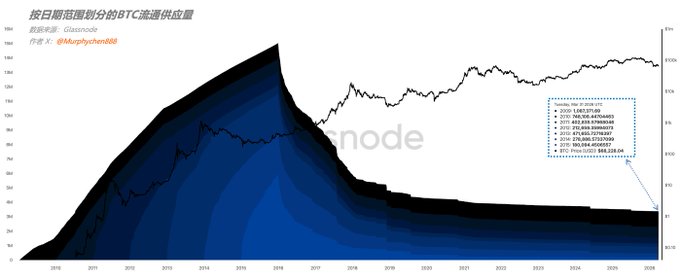
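To put the 9-minute figure in context, here is a rough back-of-the-envelope sketch in Python. It assumes block discovery is a Poisson process with a 10-minute mean (so the wait for the next block is exponential and memoryless) and that the victim's transaction would be included in the very next block; fee competition, mempool congestion and the attacker's own need to get a replacement transaction mined are ignored. The constant and function names are illustrative only.

```python
import math
import random

MEAN_BLOCK_TIME_MIN = 10.0  # Bitcoin's target average block interval
ATTACK_TIME_MIN = 9.0       # key-derivation time cited in the article


def p_unconfirmed(attack_min: float, mean_block_min: float = MEAN_BLOCK_TIME_MIN) -> float:
    """Probability that no block is found within attack_min minutes.

    Assumes block discovery is a Poisson process, so the wait until the next
    block is exponential with the given mean. Because the exponential
    distribution is memoryless, the result does not depend on when the
    victim's transaction was broadcast.
    """
    return math.exp(-attack_min / mean_block_min)


def simulate(attack_min: float, trials: int = 200_000, seed: int = 1) -> float:
    """Monte Carlo check: fraction of sampled block waits longer than attack_min."""
    rng = random.Random(seed)
    rate = 1.0 / MEAN_BLOCK_TIME_MIN  # expovariate takes the rate, i.e. 1 / mean
    longer = sum(1 for _ in range(trials) if rng.expovariate(rate) > attack_min)
    return longer / trials


print(f"analytic:  {p_unconfirmed(ATTACK_TIME_MIN):.3f}")  # 0.407
print(f"simulated: {simulate(ATTACK_TIME_MIN):.3f}")
```

Under these simplified assumptions, e^(-0.9) ≈ 0.41: roughly four in ten transactions would still be unconfirmed when the key derivation finishes. Real-world odds depend heavily on fees and network conditions.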

A further, potentially fatal weakness lies in addresses whose public keys were exposed on-chain in the early days. On-chain data shows that 3.379 million BTC have now sat untouched for 10 years, of which about 1.08 million are in addresses attributed to Satoshi Nakamoto. In theory, all of these coins could be "lambs to the slaughter."

Because these are mostly early holdings whose keys have been lost or whose owners are gone, they cannot be actively migrated to quantum-resistant addresses. If the first attacker to break this cryptography seized them, a single "madman" in the Bitcoin world would control an enormous illicit fortune: 2.6 times the BTC held by the ETFs and 4.4 times that held by MSTR.
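Whether a coin's public key is already "exposed" depends on its output type. The simplified Python sketch below encodes the general rule: pay-to-public-key (P2PK) outputs, common in Bitcoin's earliest blocks, and Taproot (P2TR) outputs put a key directly in the output, while hash-based types such as P2PKH and P2WPKH reveal the key only when spent, so unspent coins there are exposed mainly through address reuse. The enum, the function and the sample outputs are hypothetical, and edge cases such as multisig scripts are ignored.

```python
from enum import Enum


class ScriptType(Enum):
    P2PK = "p2pk"      # raw public key written into the output script
    P2PKH = "p2pkh"    # HASH160 of a public key
    P2SH = "p2sh"      # hash of a redeem script
    P2WPKH = "p2wpkh"  # SegWit v0 public-key hash
    P2WSH = "p2wsh"    # SegWit v0 script hash
    P2TR = "p2tr"      # Taproot: 32-byte x-only output key


# Output types whose locking script contains a public key directly.
KEY_IN_OUTPUT = {ScriptType.P2PK, ScriptType.P2TR}


def pubkey_exposed(script_type: ScriptType, address_spent_before: bool) -> bool:
    """Simplified check of whether an unspent output's key is already on-chain.

    P2PK and P2TR outputs reveal a key as soon as coins are received.
    Hash-based outputs reveal the key (or script) only when spent, so their
    unspent coins are exposed only if the same address was spent from before.
    """
    if script_type in KEY_IN_OUTPUT:
        return True
    return address_spent_before


# Hypothetical examples, not real addresses.
for label, kind, reused in [
    ("early coinbase output", ScriptType.P2PK, False),
    ("fresh SegWit address", ScriptType.P2WPKH, False),
    ("reused legacy address", ScriptType.P2PKH, True),
    ("Taproot output", ScriptType.P2TR, False),
]:
    print(f"{label}: exposed={pubkey_exposed(kind, reused)}")
```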

As I see it, the community's reaction currently falls into two camps. Optimists believe security can be ensured simply by introducing quantum-resistant signatures (BIP-360 and P2MR) through a soft fork and migrating Bitcoin wallets before the quantum threat arrives.

Pessimists believe that even if this is technically feasible, a decentralized governance model is unlikely to reach consensus within just 3-4 years, especially on what to do with the coins behind so many exposed public keys. Mishandling this could trigger another hard fork.

At that point, the "conservatives," who want to protect holders' property, and the "radicals," who put network security first, will once again be locked in fierce debate, much like the serious disagreement between the Core developers and large miners over big versus small blocks in 2017-2018, which ultimately led to a hard fork and nearly caused Bitcoin to collapse.

Moreover, even if the community eventually reaches consensus, one consequence may still be unavoidable.

A large volume of BTC would be moved passively, purely for the security upgrade. This non-economic on-chain activity would severely pollute Bitcoin's on-chain data: facing an existential crisis, the "global public ledger" would have to sacrifice its transparency premium.

This could cause structural failures in the trading models we've built over the past decade on "on-chain data analysis." For example, every metric based on "holding time" would be completely distorted, because migrated coins would look freshly moved, and the underlying logic of profit/loss valuation models would collapse, because each migrated coin's recorded cost basis would reset to the price on the day it moved.
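The distortion is mechanical: UTXO-based metrics record when each coin last moved and the price at that moment, so a purely defensive migration looks the same as any other spend. The toy Python sketch below, with entirely hypothetical coins, day numbers and prices (and 3,650 days as an approximation of 10 years), shows a "dormant for 10+ years" share and a realized price (the cost basis behind MVRV-style profit/loss ratios) before and after a migration in which nothing changes hands. It is an illustration, not any analytics provider's methodology.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Utxo:
    btc: float
    last_moved_day: int    # day index when these coins last moved
    price_at_move: float   # USD price that day, i.e. the recorded cost basis


def share_older_than(utxos, today: int, min_age_days: int) -> float:
    """Fraction of supply that has not moved for at least min_age_days."""
    total = sum(u.btc for u in utxos)
    old = sum(u.btc for u in utxos if today - u.last_moved_day >= min_age_days)
    return old / total


def realized_price(utxos) -> float:
    """Supply-weighted average of the price at which each coin last moved."""
    total = sum(u.btc for u in utxos)
    return sum(u.btc * u.price_at_move for u in utxos) / total


def migrate(utxos, today: int, price_today: float, should_move):
    """Move selected coins to new (quantum-resistant) outputs.

    Ownership and intent are unchanged, but each moved coin's age resets to
    zero and its recorded cost basis jumps to today's price.
    """
    return [
        replace(u, last_moved_day=today, price_at_move=price_today) if should_move(u) else u
        for u in utxos
    ]


# Hypothetical toy data: day indices, prices and amounts are made up.
TODAY, PRICE_TODAY, TEN_YEARS = 6000, 100_000.0, 3650
coins = [
    Utxo(50.0, 0, 0.0),           # mined before BTC had a market price
    Utxo(30.0, 5000, 30_000.0),
    Utxo(20.0, 5900, 90_000.0),
]
# Suppose the holders of the two older lots migrate; the newest lot stays put.
migrated = migrate(coins, TODAY, PRICE_TODAY, lambda u: u.last_moved_day < 5800)

print(f"dormant 10y+ share: {share_older_than(coins, TODAY, TEN_YEARS):.0%}"
      f" -> {share_older_than(migrated, TODAY, TEN_YEARS):.0%}")  # 50% -> 0%
print(f"realized price: ${realized_price(coins):,.0f}"
      f" -> ${realized_price(migrated):,.0f}")  # $27,000 -> $98,000
```

The same 100 BTC exist before and after, held by the same people, yet the "long-term holder" share vanishes and the realized price more than triples.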

Future on-chain analytics tools may have to divide Bitcoin's history into a "pre-quantum era" and a "post-quantum era." Only institutions with top-tier data-cleaning capabilities (I'm not sure even Glassnode can do it) will be able to extract true trading intent from the massive noise.

When trading decisions revert to traditional methods and people once again rely on news, sentiment, or technical analysis, retail investors will lose a transparent, effective tool that gave them their "only chance to stand on the same starting line as institutions."

That would be a great pity...

research.google/blog/safeguard...…

There are currently 3.379 million BTC that have sat untouched for 10 years (of which addresses attributed to Satoshi Nakamoto may hold 1.087 million BTC).

From Twitter

Disclaimer: The content above is only the author's opinion which does not represent any position of Followin, and is not intended as, and shall not be understood or construed as, investment advice from Followin.
