Google released Gemma 4 yesterday, and it's incredibly powerful!

It's specifically designed to run on local devices (like phones and computers) and supports agents and tools.

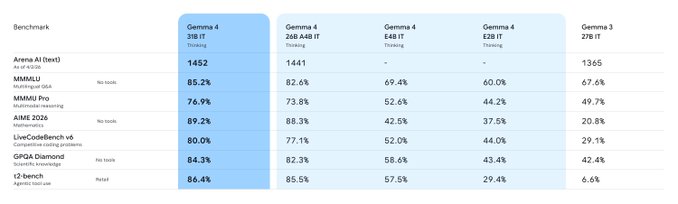

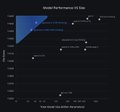

Four parameter sizes:

E2B: Primarily for mobile phones, IoT, and edge devices.

E4B: Designed for mobile devices as well as Jetson and Raspberry Pi boards.

26B MoE: About 3.8B parameters are active per token, so the effective parameter count is very small; it targets high tokens per second (TPS) and low latency.

31B Dense: A fully dense 31B model, primarily for desktop workstations or a single H100 card.
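To make the MoE figure concrete, here is a rough back-of-the-envelope sketch. The parameter counts come from the list above; the 16-bit (2 bytes per parameter) weight format is an assumption for illustration, and the estimate covers weights only, not the KV cache or runtime overhead.

```python
# Sizes in billions of parameters, taken from the list above.
TOTAL_PARAMS_B = 26.0   # 26B MoE, total parameters
ACTIVE_PARAMS_B = 3.8   # parameters active per token

def active_fraction(active_b: float, total_b: float) -> float:
    """Share of the model's weights used to process a single token."""
    return active_b / total_b

def weight_memory_gb(params_b: float, bytes_per_param: float) -> float:
    """Rough weight-only memory in GB (ignores KV cache and overhead)."""
    # params_b is in billions, so billions * bytes per parameter = GB.
    return params_b * bytes_per_param

fraction = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
print(f"Active per token: {fraction:.1%}")  # about 14.6%

# Assumption: 16-bit weights, i.e. 2 bytes per parameter.
print(f"26B MoE weights:   {weight_memory_gb(26.0, 2.0):.0f} GB")
print(f"31B Dense weights: {weight_memory_gb(31.0, 2.0):.0f} GB")
```

Only about one seventh of the MoE weights do work for any given token, which is where the throughput and latency gains come from. All of the weights still have to be stored, so the memory footprint stays close to that of a dense model of the same total size.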

This release prioritizes agentic workflows, with native support for function calling, JSON and structured output, and system instructions. On top of that, it is a natively multimodal model that supports image and video understanding and speech-to-text, so it can serve as a local voice assistant.
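As a rough illustration of what function calling with JSON output means on the application side, here is a minimal sketch. The post does not describe the exact format Gemma 4 emits, so the `{"name": ..., "arguments": ...}` shape, the `get_weather` tool, and the `dispatch` helper are all hypothetical, and the model output is a hard-coded stand-in.

```python
import json

# Hypothetical tool; a real app would call an actual service here.
def get_weather(city: str) -> dict:
    return {"city": city, "forecast": "sunny"}

# Registry mapping tool names to Python callables.
TOOLS = {"get_weather": get_weather}

def dispatch(model_output: str) -> dict:
    """Parse a JSON function call emitted by a model and run the matching tool.

    Assumed shape (not Gemma-specific): {"name": str, "arguments": {...}}.
    """
    call = json.loads(model_output)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call["arguments"])

# Stand-in for what a model might return when asked about the weather.
sample_output = '{"name": "get_weather", "arguments": {"city": "Tokyo"}}'
result = dispatch(sample_output)
print(json.dumps(result))
```

Because the model answers in structured JSON rather than free text, the application can validate the tool name against a registry before running anything, which matters when the model runs locally with access to device features.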

It is also released under a genuine Apache 2.0 license this time, which allows commercial use, redistribution, embedding in products, and private deployment without additional terms.

Google Gemma

@googlegemma

Meet Gemma 4!

Purpose-built for advanced reasoning and agentic workflows on the hardware you own, and released under an Apache 2.0 license.

We listened to invaluable community feedback in developing these models. Here is what makes Gemma 4 our most capable open models yet: 👇

Experience it directly in the Google app.

歸藏(guizang.ai)

@op7418

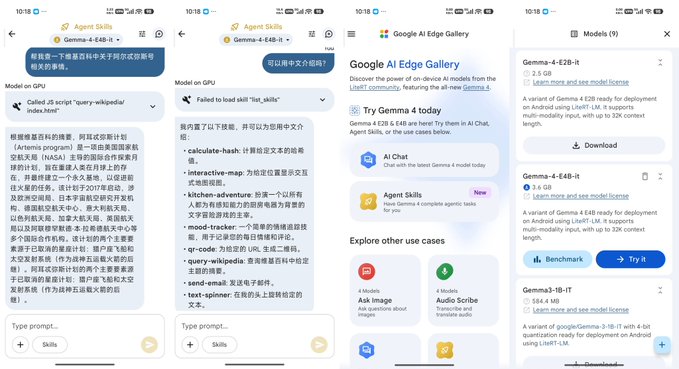

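Google also released an Android app for trying out the newly released Gemma 4 models.

I tried it on my Xiaomi 17 Ultra, and inference with the E4B model was very fast.

The app also now includes a built-in Skills area, where you can have the model call tools and write and try out Skills yourself.

You can find it by searching for Google AI Edge on Google Play. x.com/op7418/status/…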

From Twitter
