The jury took their seats in Courtroom 9 of the U.S. District Court in Oakland, California, yesterday. The nine jurors, serving as an "advisory jury," will observe a trial expected to last four weeks and ultimately provide Judge Gonzalez Rogers with a written recommendation. Opening statements will begin today, Tuesday.

On the same day as jury selection, OpenAI announced a newly revised agreement with Microsoft. The revision removed one crucial element: Microsoft's exclusive license to OpenAI's intellectual property. That was the last of the locks OpenAI had placed on itself when it transitioned to a "capped-profit" structure in 2019.

What exactly is Musk suing about?

Reuters and CNBC's courtroom diary compiled a list of the claims two weeks before the trial. Musk initially filed his lawsuit in 2024 with 26 claims, ranging from securities fraud and RICO to antitrust allegations. Only two remain to be heard today: unjust enrichment and breach of charitable trust.

The other 24 claims were either dismissed by the judge at the motion stage or withdrawn by Musk himself. A few days before the trial, he voluntarily withdrew some of the fraud-related claims, allowing the case to focus on its simplest and most crucial statement: "OpenAI promised me back then that it would always be a non-profit," and it no longer is.

For this one sentence, Musk is seeking up to $134 billion in damages. According to his lawsuit, all damages would go back to OpenAI's non-profit arm, and he also demands the removal of Altman and Brockman and the reversal of the entire for-profit conversion. This is the "true core" of the lawsuit. Its subject matter isn't how the stock is divided; it's who ultimately owns the OpenAI shell.

Judge Gonzalez Rogers divided the trial into two phases. The first phase, determining liability, will conclude by mid-May. If liability is established, the second phase will focus on damages. The jury will participate only in the first phase, and only in an advisory role. The final verdict rests with the judge. This means that for Musk, winning the "narrative battle" matters more than winning "damages." He needs to convince the jury that "this company made promises to donors and then systematically dismantled those promises." Once those nine people agree, the judge will piece together the rest of the puzzle for him.

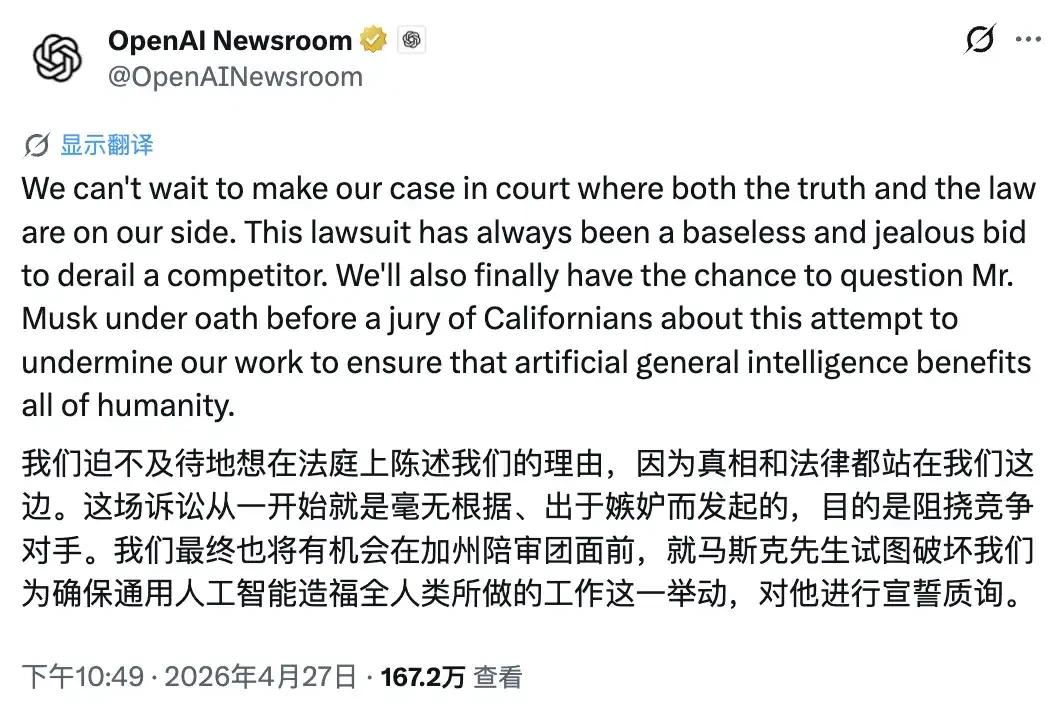

OpenAI's strategy was almost a mirror image. They aimed to convince the jury that Musk's true motive for the lawsuit was competitive jealousy, unrelated to breach of trust. OpenAI's official account fired the first shot on jury selection day: "We can't wait to present our evidence in court. Truth and the law are on our side. This lawsuit has been a baseless, jealous, and competitive attack... We finally have the opportunity to have Musk testify under oath before a California jury."

Note the phrase "have Musk testify under oath." This is a strategy. What OpenAI really wants is to portray Musk, in the court of public opinion on X, as "the founder of xAI who lost to OpenAI." Convincing the judge is secondary. That way, the ordinary California residents on the jury will walk into the courtroom already carrying this bias.

How was OpenAI's "lock" removed?

To understand why Musk is so angry, we must first understand the three locks OpenAI set for itself in 2019, each with a clear design intent: the profit cap, the AGI trigger clause, and Microsoft's exclusive license.

You'll notice something. In 2019, OpenAI was proving to donors that "even if we want to make money, there are limits, and we have to stop at a certain point." On April 27, 2026, OpenAI was proving to investors that "we have no brakes."

The explanation for the profit cap is the most straightforward. Altman's 2025 employee letter stated, "The 'profit cap' structure works in a world with only one AGI company, but it no longer applies when there is competition." In plain terms: there are competitors, so I need to be able to earn more.

The most subtle change is the dismantling of the AGI trigger clause. Originally, "achieving AGI terminates Microsoft's commercial license," meaning AGI is a public good that belongs to humanity and OpenAI would not privatize it. The revised version places the determination of AGI under an "independent expert group," extends Microsoft's license to 2032, explicitly covers "models after AGI," and grants Microsoft permission to pursue AGI independently. In this version, even the key to "defining what counts as AGI" has changed hands.

The final lock was the exclusive license. It was dismantled the moment Musk's jury took their seats. Revenue sharing is now completely decoupled from "OpenAI's technical progress," meaning that even if OpenAI were to announce tomorrow that it has achieved AGI, no commercial terms would be affected.

Musk's side will argue in court that this was a deliberate dismantling of protective mechanisms. OpenAI's side will argue that it was a necessary adjustment in a competitive environment. But there is one thing neither side will refute: that "self-restraint list" from 2019 is now completely empty.

Why do so many people hate Altman?

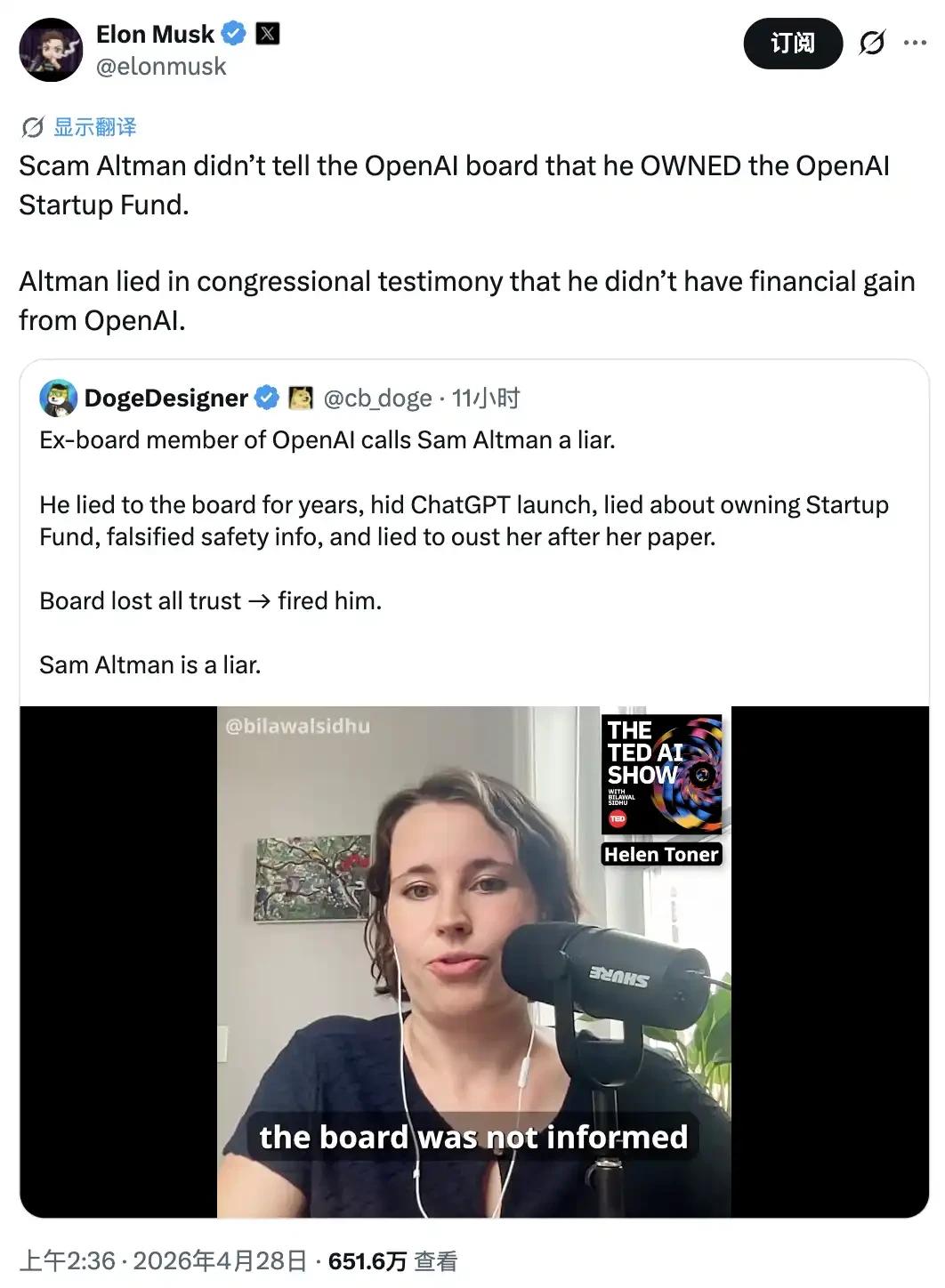

The scene on X on the day of jury selection was far livelier than the one inside the courtroom. Two hours after the official OpenAI account launched its attack, Musk retaliated with seven tweets in quick succession. The tweets were fast-paced and heavily worded, a typical Musk-style barrage. He even gave Altman a nickname: Scam Altman.

He also shared a video clip of Helen Toner, a former OpenAI director, in which Toner says, in so many words, "Sam is a liar."

Musk was not the first to say "Sam is a liar." Mira Murati, former CTO of OpenAI, said it when she left the company; Ilya Sutskever said it during the "failed coup" in which Altman was fired; and Jan Leike also said it publicly when he resigned along with the entire Superalignment team.

There are actually three groups of people who dislike Sam Altman, each with different reasons.

The first group consists of the old OpenAI board of directors. The landmark event for this group was the five-day firing saga in November 2023. The board's statement said Altman was "not consistently candid in his communications with the board."

What exactly had the board caught him at? In May 2024, Helen Toner publicly stated that the board learned from Twitter that the company had released a product that would reshape the global AI industry. She also claimed that Altman concealed the fact that he held shares in the OpenAI Startup Fund, repeatedly stating "I have no financial interest in the company," until he was forced to admit it in April 2024.

According to Toner, Altman also repeatedly gave the board inaccurate information about the company's safety processes. Two executives reported Altman's "psychological abuse" to the board and provided screenshots as evidence of "lying and manipulation." Altman also attempted to oust Toner from the board after she published a research paper that OpenAI disliked.

The second group consists of the safety faction from the old OpenAI.

In May 2024, OpenAI's Superalignment team collapsed almost overnight. Leading the resignations was Jan Leike, one of OpenAI's most senior AI safety researchers. His resignation post on X was one of the most pointed departure statements in the English-language AI community that year, stating that "safety culture and processes have taken a backseat to shiny products."

Following closely behind was Ilya Sutskever, co-founder and chief scientist of OpenAI, and one of the key figures in the failed coup attempt. Then CTO Mira Murati (who had temporarily taken over the company during Altman's dismissal), Chief Research Officer Bob McGrew, and VP of Research Barret Zoph all resigned within the same week. The "non-disparagement agreement" scandal also surfaced: former employees were required to sign non-disparagement agreements or forfeit their stock options.

The third group consists of the contractual faction from old Silicon Valley. This group is the most difficult to define and also the largest.

They include early donors like Musk from 2015, those early OpenAI employees who genuinely believed in the "non-profit mission," many angel investors who had bet on early-stage startups in Silicon Valley, and a significant number of neutral observers who viewed OpenAI as "the common property of humanity."

What these people have in common is that they paid non-monetary prices for OpenAI's promises: reputation, time, trust, and social capital. And what they find most unforgivable about Altman is that every time OpenAI dismantles its own "locks," Altman claims it's "for the mission."

When the profit cap was removed, he said it was "to allow OpenAI to continue investing in AGI research"; when the AGI trigger clause was rewritten, he said it was "to allow OpenAI to continue fulfilling its mission after AGI"; when Microsoft exclusivity was removed, he said it was "to allow OpenAI to move towards a broader collaborative ecosystem".

This is why some people in Silicon Valley are reluctantly siding with Musk in this lawsuit.

The weight of promises made in Silicon Valley will be revealed in four weeks.

Having summarized everything, you probably understand now. They're not fighting over money.

Money is OpenAI's problem, not a personal one for either man. In 2026, Altman is already the CEO of a private AI company valued at an estimated $500 billion or more, so he doesn't lack it. In 2026, Musk's xAI has already reached the Grok 5 era. Anthropic is what he's chasing, and OpenAI is what he's trying to surpass, so he doesn't lack it either.

They're arguing about something that only a handful of long-term Silicon Valley players care about: Can a nonprofit organization that raises funds, accumulates moral capital, recruits talent, and obtains regulatory exemptions in the name of "the common good of humanity" transform itself into an ordinary for-profit company jointly owned by the CEO and venture capitalists within ten years?

If this is possible, then every AI startup in the future can do the same. "Non-profit" will become a cheap early-stage narrative tool, used to get news headlines, regulatory approvals, and employee recruitment, and then quietly dismantled when the valuation is large enough.

If Musk wins, Silicon Valley might rediscover a long-lost sense of shame. What you said in 2015 could be quoted back to you verbatim in 2026, and you could be forced to testify about it under oath in a California federal court. If OpenAI wins, the world will continue to operate as Silicon Valley has for the past decade: early on, storytelling; later, scale; and in between, dismantling the contract between story and scale, piece by piece.

We'll have an answer in four weeks. But the words "Scam Altman" are already etched into social media, and they'll remain regardless of the verdict. The reason so many people hate Altman is that he makes those who believed him feel cheated. How much money he makes is secondary.

The fact that one has been deceived cannot be overturned by a judgment.