Original article by Vitalik Buterin

Original translation: Karen, Foresight News

Special thanks to Justin Drake, Francesco, Hsiao-wei Wang, @antonttc and Georgios Konstantopoulos.

Initially, there were two scaling strategies on Ethereum’s roadmap. One (see an early paper from 2015) was “sharding”: instead of verifying and storing every transaction in the chain, each node would only need to verify and store a small fraction of them. This is how any other peer-to-peer network (e.g. BitTorrent) works, so surely we could make blockchains work the same way. The other was Layer 2 protocols: networks that would sit on top of Ethereum, fully benefiting from its security while keeping most data and computation off the main chain. “Layer 2 protocols” meant state channels in 2015, Plasma in 2017, and then Rollups in 2019. Rollups are more powerful than state channels or Plasma, but they require a large amount of on-chain data bandwidth. Fortunately, by 2019, sharding research had solved the problem of verifying “data availability” at scale. As a result, the two paths merged, and we got the Rollup-centric roadmap that remains Ethereum’s scaling strategy today.

The Surge, 2023 Roadmap Edition

The Rollup-centric roadmap proposes a simple division of labor: Ethereum L1 focuses on becoming a strong and decentralized base layer, while L2 is tasked with helping the ecosystem scale. This model is ubiquitous in society: the court system (L1) exists not to pursue super-speed and efficiency, but to protect contracts and property rights, while entrepreneurs (L2) build on this solid base layer and lead humanity to Mars (both literally and figuratively).

This year, the Rollup-centric roadmap has achieved important results. With the launch of EIP-4844 blobs, the data bandwidth of Ethereum L1 has increased significantly, and multiple Ethereum Virtual Machine (EVM) Rollups have reached Stage 1. A highly heterogeneous and pluralistic implementation of sharding is now a reality, in which each L2 acts as a "shard" with its own internal rules and logic. But as we have seen, this path also brings some unique challenges. Our task now is therefore to complete the Rollup-centric roadmap and solve these problems, while preserving the robustness and decentralization that make Ethereum L1 special.

The Surge: Key Objectives

1. More than 100,000 TPS on L1+L2;

2. Maintain the decentralization and robustness of L1;

3. At least some L2s fully inherit the core properties of Ethereum (trustlessness, openness, and censorship resistance);

4. Ethereum should feel like a unified ecosystem, not 34 different blockchains.

In this chapter

The Scalability Trilemma

Further progress in data availability sampling

Data Compression

Generalized Plasma

Mature L2 proof system

Cross-L2 interoperability improvements

Scaling execution on L1

The Scalability Trilemma

The scalability trilemma is an idea introduced in 2017. It posits a tension between three properties of a blockchain:

- decentralization, or more specifically, a low cost to run a node;
- scalability, meaning a high number of transactions processed;
- security, meaning an attacker would need to compromise a large portion of the nodes in the network to make even a single transaction fail.

It’s worth noting that the trilemma is not a theorem, and the post introducing it did not come with a mathematical proof. It did give a heuristic mathematical argument. Suppose a decentralization-friendly node (e.g. a consumer laptop) can verify N transactions per second, and you have a chain that processes k*N transactions per second. Then either (i) each transaction is only seen by 1/k of the nodes, meaning an attacker only needs to compromise a few nodes to push a malicious transaction through, or (ii) your nodes will have to become powerful and your chain will no longer be decentralized. The purpose of the post was never to show that breaking the trilemma is impossible. Rather, it was to show that breaking it is hard and requires somehow thinking outside the box that the argument implies.

Over the years, some high-performance chains have claimed to solve the trilemma without fundamentally changing their architecture, usually by applying software engineering tricks to optimize the node. This is always misleading, and running a node on such chains is always far more difficult than on Ethereum. This article will explore some of the many subtleties of why this is the case, and why L1 client software engineering alone cannot scale Ethereum itself.

However, data availability sampling combined with SNARKs does solve the trilemma. It allows a client to verify that a certain amount of data is available and that a certain number of computation steps were performed correctly, while downloading only a small amount of data and performing very little computation. SNARKs are trustless. Data availability sampling has a subtle few-of-N trust model, but it retains the fundamental property of non-scalable chains: even a 51% attack cannot force the network to accept a bad block.

Another approach to solving the trilemma is the Plasma architecture. It uses clever techniques to push the responsibility for watching data availability onto users in an incentive-compatible way. Back in 2017-2019, fraud proofs were the only tool we had for scaling computation, and Plasma was very limited in what it could execute securely. With the mainstreaming of SNARKs (succinct non-interactive arguments of knowledge), the Plasma architecture has become viable for a much wider range of use cases than before.

Further progress in data availability sampling

What problem are we solving?

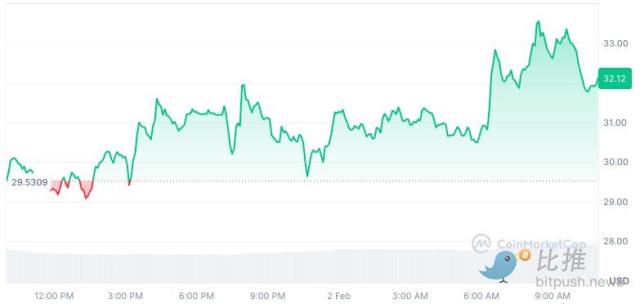

When the Dencun upgrade went live on March 13, 2024, the Ethereum blockchain gained 3 blobs of roughly 125 kB per 12-second slot, or roughly 375 kB of data bandwidth per slot. Assume transaction data is published directly on-chain, and an ERC-20 transfer takes about 180 bytes. Then the maximum TPS of Rollups on Ethereum is: 375000 / 12 / 180 = 173.6 TPS

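Now add Ethereum’s calldata. Its theoretical maximum is 30 million gas per slot / 16 gas per byte = 1,875,000 bytes per slot. With it, throughput rises to about 1,042 TPS, or about 607 TPS if only the 15 million gas target (937,500 bytes per slot) is counted. With PeerDAS, the number of blobs could increase to 8-16. That would give 463-926 TPS from blobs alone, at 180 bytes per transaction.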

This is a significant improvement over Ethereum L1, but not enough. We want more scalability. Our mid-term goal is 16MB per slot, which, when combined with improvements to Rollup data compression, will bring ~58,000 TPS.
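The throughput figures above are simple division: bytes available per slot, divided by 12 seconds, divided by bytes per transaction. The short Python sketch below reproduces them from the parameters stated in the text: 3 blobs of ~125 kB, 16 gas per calldata byte, and 180-byte ERC-20 transfers. It is an upper-bound estimate that assumes every byte of data space is filled with transfers.

The 15 million gas case is an addition made here. It is included because 15 million was the gas target (half the 30 million limit) in effect at the time.

```python
# Back-of-the-envelope Rollup throughput, using the parameters quoted in the text.
SLOT_SECONDS = 12
ERC20_TRANSFER_BYTES = 180   # uncompressed ERC-20 transfer, per the text
BLOB_BYTES = 125_000         # ~125 kB per blob (the text's approximation)
CALLDATA_GAS_PER_BYTE = 16   # gas per non-zero calldata byte


def rollup_tps(bytes_per_slot: float, bytes_per_tx: float = ERC20_TRANSFER_BYTES) -> float:
    """Upper bound on TPS if all data space in a slot is filled with transactions."""
    return bytes_per_slot / SLOT_SECONDS / bytes_per_tx


def calldata_bytes(gas_per_slot: int) -> int:
    """Bytes of calldata that fit in a slot if all gas is spent on calldata."""
    return gas_per_slot // CALLDATA_GAS_PER_BYTE


blobs_only = rollup_tps(3 * BLOB_BYTES)
with_calldata_limit = rollup_tps(3 * BLOB_BYTES + calldata_bytes(30_000_000))
with_calldata_target = rollup_tps(3 * BLOB_BYTES + calldata_bytes(15_000_000))
peerdas_low, peerdas_high = rollup_tps(8 * BLOB_BYTES), rollup_tps(16 * BLOB_BYTES)
target_16mb = rollup_tps(16_000_000)

# Bytes per transaction implied by the ~58,000 TPS goal at 16 MB per slot.
implied_compressed_tx_bytes = 16_000_000 / SLOT_SECONDS / 58_000

for label, value in [
    ("3 blobs", blobs_only),
    ("3 blobs + calldata (30M gas)", with_calldata_limit),
    ("3 blobs + calldata (15M gas)", with_calldata_target),
    ("8 blobs", peerdas_low),
    ("16 blobs", peerdas_high),
    ("16 MB, uncompressed", target_16mb),
]:
    print(f"{label:30s} {value:8.1f} TPS")
print(f"implied compressed size: {implied_compressed_tx_bytes:.1f} bytes/tx")
```

The script produces these figures:

- about 173.6 TPS for blobs alone;
- about 1,041.7 TPS with calldata at the 30 million gas limit;
- about 607.6 TPS at the 15 million gas target, which is where the 607 figure lines up;
- about 7,407 TPS for 16 MB per slot without compression.

The last line backs out the per-transaction size implied by the ~58,000 TPS goal. It comes to roughly 23 bytes, i.e. about an 8x reduction from 180 bytes.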

What is it and how does it work?

PeerDAS is a relatively simple implementation of "1D sampling". In Ethereum, each blob is a polynomial of degree below 4096 over a 253-bit prime field. We broadcast shares of the polynomial. Each share contains 16 evaluations at 16 adjacent coordinates, drawn from a total of 8192 coordinates. Any 4096 of these 8192 evaluations can recover the blob; under the currently proposed parameters, that means any 64 of the 128 possible samples.
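The recovery property ("any half of the extended evaluations recovers the blob") is the standard Reed-Solomon argument: a polynomial of degree below k is fully determined by any k of its evaluations. The toy sketch below illustrates it with k = 4, the small prime field 65537, and evaluation points 0..7. These parameters are assumptions chosen for readability; the real scheme uses far larger parameters, a cryptographic-size field and a different evaluation domain. It also uses plain Lagrange interpolation rather than the FFT-based methods a production implementation would use.

```python
import random

P = 65537  # toy prime modulus; the real scheme uses a cryptographic-size field


def interpolate_at(points: list[tuple[int, int]], x: int, p: int = P) -> int:
    """Evaluate at x the unique polynomial of degree < len(points) through `points`."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % p
                den = den * (xi - xj) % p
        total = (total + yi * num * pow(den, -1, p)) % p
    return total


def extend(data: list[int], p: int = P) -> list[int]:
    """Treat the k values as evaluations at x = 0..k-1 and extend to x = 0..2k-1."""
    base = list(enumerate(data))
    return [interpolate_at(base, x, p) for x in range(2 * len(data))]


def recover(samples: list[tuple[int, int]], k: int, p: int = P) -> list[int]:
    """Rebuild the original k values from any k distinct (x, y) samples."""
    if len(samples) < k:
        raise ValueError("need at least k samples")
    return [interpolate_at(samples[:k], x, p) for x in range(k)]


data = [11, 22, 33, 44]        # k = 4 field elements of a toy "blob"
extended = extend(data)        # 8 evaluations; the first 4 equal `data`
subset = random.sample(list(enumerate(extended)), k=len(data))  # any half of them
print(recover(subset, len(data)) == data)
```

Because the first k evaluations are the data itself, the encoding is systematic: nodes that hold the original blob need no decoding. Only nodes reconstructing from a random half pay the interpolation cost.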

In PeerDAS, each client listens on a small number of subnets, where the i-th subnet broadcasts the i-th sample of every blob. The client additionally requests the samples it needs from other subnets by asking its peers in the global p2p network, who listen to different subnets. A more conservative version, SubnetDAS, uses only the subnet mechanism, without the extra layer of asking peers. The current proposal is for nodes participating in proof of stake to use SubnetDAS, and for other nodes (i.e. non-staking clients) to use PeerDAS.

In theory, we can scale 1D sampling quite a bit: if we increase the maximum number of blobs to 256 (with a target of 128), then we can hit our 16MB target with 16 samples per node * 128 blobs * 512 bytes per sample per blob = 1MB of data bandwidth per slot for data availability sampling. This is just barely within our tolerance: it's doable, but it means bandwidth-constrained clients can't sample. We can optimize this somewhat by reducing the number of blobs and increasing the blob size, but that makes reconstruction more expensive.
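The 1 MB figure is 16 × 128 × 512 bytes = 1,048,576 bytes. The sketch also shows why 16 samples can be enough. If fewer than half of the extended evaluations are published, the blob cannot be reconstructed. Each uniformly random sample then succeeds with probability below 1/2, so all 16 succeed with probability below 2^-16. This assumes independent samples drawn with replacement; it is an illustration, not the security analysis from the PeerDAS specification.

```python
SAMPLES_PER_NODE = 16
BLOBS_PER_SLOT = 128            # target blob count in the scenario above
BYTES_PER_SAMPLE_PER_BLOB = 512

sampling_bytes_per_slot = SAMPLES_PER_NODE * BLOBS_PER_SLOT * BYTES_PER_SAMPLE_PER_BLOB


def false_accept_probability(samples: int, published_fraction: float = 0.5) -> float:
    """Upper bound on the chance that every sample succeeds even though the data
    is unrecoverable, i.e. at most `published_fraction` of it was published.
    Assumes independent, uniformly random samples (with replacement)."""
    return published_fraction ** samples


print(sampling_bytes_per_slot, sampling_bytes_per_slot / 2**20)  # bytes, MiB
print(false_accept_probability(SAMPLES_PER_NODE))
```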

Therefore, we eventually want to go a step further and perform 2D sampling, which samples randomly not only within blobs but also across blobs. Using the linearity of KZG commitments, the set of blobs in a block is extended with a set of new virtual blobs that redundantly encode the same information.


2D sampling. Source: a16z crypto

Crucially, computing the extended commitments does not require having the blobs, so the scheme is fundamentally friendly to distributed block construction. The node actually building the block only needs the blob KZG commitments. It can itself rely on data availability sampling (DAS) to verify that the blobs are available. One-dimensional DAS (1D DAS) is also inherently friendly to distributed block construction.
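Commitments to the virtual blobs can be computed without the blob data for two reasons:

- Each virtual blob is a fixed linear combination of the original blobs (a column-wise polynomial extension).
- A linear commitment maps linear combinations of data to the same linear combinations of commitments.

The sketch below demonstrates this with a deliberately insecure toy commitment (a random linear functional mod 65537) standing in for KZG. Only the linearity is shared with KZG; all parameters are illustrative.

```python
import random

P = 65537  # toy prime field


def lagrange_weights(k: int, x: int, p: int = P) -> list[int]:
    """Weights w_i such that f(x) = sum_i w_i * f(i) for every f of degree < k."""
    weights = []
    for i in range(k):
        num, den = 1, 1
        for j in range(k):
            if i != j:
                num = num * (x - j) % p
                den = den * (i - j) % p
        weights.append(num * pow(den, -1, p) % p)
    return weights


def toy_commit(row: list[int], bases: list[int], p: int = P) -> int:
    """INSECURE stand-in for KZG: a random linear functional mod p.
    It shares only KZG's linearity: commit(a*x + b*y) = a*commit(x) + b*commit(y)."""
    return sum(v * b for v, b in zip(row, bases)) % p


def extend_blobs(blobs: list[list[int]], p: int = P) -> list[list[int]]:
    """Append len(blobs) virtual blobs. Element j of virtual blob x is the
    polynomial through column j of the original blobs, evaluated at x."""
    m, width = len(blobs), len(blobs[0])
    out = [list(b) for b in blobs]
    for x in range(m, 2 * m):
        w = lagrange_weights(m, x, p)
        out.append([sum(wi * b[j] for wi, b in zip(w, blobs)) % p for j in range(width)])
    return out


def extend_commitments(commitments: list[int], p: int = P) -> list[int]:
    """The same extension, computed from the commitments only (no blob data)."""
    m = len(commitments)
    out = list(commitments)
    for x in range(m, 2 * m):
        w = lagrange_weights(m, x, p)
        out.append(sum(wi * c for wi, c in zip(w, commitments)) % p)
    return out


rng = random.Random(0)
bases = [rng.randrange(1, P) for _ in range(4)]
blobs = [[rng.randrange(P) for _ in range(4)] for _ in range(3)]  # 3 blobs x 4 elements

from_data = [toy_commit(b, bases) for b in extend_blobs(blobs)]
from_commitments = extend_commitments([toy_commit(b, bases) for b in blobs])
print(from_data == from_commitments)
```

A block builder holding only the three commitments can run `extend_commitments` and obtain the same six values that someone holding the full data would compute.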

What are the links to existing research?

Original post introducing data availability (2018): https://github.com/ethereum/research/wiki/A-note-on-data-availability-and-erasure-coding

Follow-up paper: https://arxiv.org/abs/1809.09044

Paradigm's explainer on DAS: https://www.paradigm.xyz/2022/08/das

2D data availability with KZG commitments: https://ethresear.ch/t/2d-data-availability-with-kate-commitments/8081

PeerDAS on ethresear.ch: https://ethresear.ch/t/peerdas-a-simpler-das-approach-using-battle-tested-p2p-components/16541 and paper: https://eprint.iacr.org/2024/1362

EIP-7594: https://eips.ethereum.org/EIPS/eip-7594

SubnetDAS on ethresear.ch: https://ethresear.ch/t/subnetdas-an-intermediate-das-approach/17169

Nuances of data recoverability in 2D sampling: https://ethresear.ch/t/nuances-of-data-recoverability-in-data-availability-sampling/16256

What else needs to be done? What are the trade-offs?

Next up is completing the implementation and rollout of PeerDAS. After that, it will be a gradual process of increasing the blob count on PeerDAS while carefully watching the network and improving the software to ensure safety. In the meantime, we expect more academic work on formalizing PeerDAS and other versions of DAS, and on their interactions with issues such as fork choice rule safety.

Further work is needed to identify the ideal version of 2D DAS and prove its security properties. We also hope to eventually move away from KZG to an alternative that is quantum-safe and does not require a trusted setup. At this point, we do not know of candidates that are friendly to distributed block construction. Even expensive "brute force" techniques are not sufficient, such as using recursive STARKs to generate validity proofs for reconstructing rows and columns. A STARK is technically O(log(n) * log(log(n))) hashes in size (using STIR), but in practice it is almost as large as an entire blob.

The realistic long-term paths I see are:

Implement an ideal 2D DAS;

Stick with 1D DAS, sacrificing sampling bandwidth efficiency and accepting a lower data cap for the sake of simplicity and robustness;

(Hard pivot) Abandon DA and fully embrace Plasma as the main Layer 2 architecture we focus on.

Note that this choice exists even if we decide to scale execution directly on L1. If L1 is to handle a large number of TPS, L1 blocks will become very large, and clients will want an efficient way to verify that they are correct. We would therefore have to use the same techniques that power Rollups (such as ZK-EVMs and DAS) on L1 as well.

How does it interact with the rest of the roadmap?

If data compression is implemented, the need for 2D DAS will be reduced, or at least delayed, and if Plasma is widely used, the need will be further reduced. DAS also poses challenges to distributed block construction protocols and mechanisms: while DAS is theoretically friendly to distributed reconstruction, this in practice needs to be combined with the inclusion list proposal and the fork choice mechanism around it.

Data Compression

What problem are we solving?

Each transaction in a Rollup takes up a significant amount of on-chain data space: an ERC-20 transfer takes about 180 bytes. Even with ideal data availability sampling, this limits the scalability of Layer 2 protocols. At 16 MB per slot, we get:

16000000 / 12 / 180 = 7407 TPS

What if, in addition to increasing the numerator (bytes available per slot), we could also shrink the denominator, so that each Rollup transaction takes up fewer bytes on-chain?

What is it and how does it work?

In my opinion, the best explanation is this picture from two years ago:

In zero-byte compression, each long sequence of zero bytes is replaced with two bytes indicating how many zero bytes there are. Going further, we can take advantage of specific properties of transactions:

Signature aggregation: We switch from ECDSA signatures to BLS signatures. Many BLS signatures can be combined into a single signature that attests to the validity of all the originals. On L1, BLS signatures are not considered because verification is computationally expensive even with aggregation. But in a data-scarce environment like L2, using BLS signatures makes sense. The aggregation feature of ERC-4337 provides one way to implement this.

Replacing addresses with pointers: If an address has been used before, we can replace the 20-byte address with a 4-byte pointer to a location in the history.

Custom serialization of transaction values: Most transaction values have very few significant digits. For example, 0.25 ETH is represented as 250,000,000,000,000,000 wei. The same is true for max base fees and priority fees. We can therefore use a custom decimal floating-point format to represent most monetary values compactly.
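Below is a minimal Python sketch of the three byte-level techniques described above: zero-run compression, address pointers, and decimal floating-point amounts. The concrete encodings are hypothetical choices made for illustration and are not taken from any specific Rollup:

- zero runs: a 0x00 marker followed by a one-byte run length;
- addresses: a one-byte flag followed by either a 20-byte address or a 4-byte index;
- amounts: a one-byte decimal exponent plus a 3-byte mantissa.

```python
def compress_zeros(data: bytes) -> bytes:
    """Replace each run of zero bytes with 0x00 followed by the run length (1-255)."""
    out, i = bytearray(), 0
    while i < len(data):
        if data[i] == 0:
            run = 1
            while i + run < len(data) and data[i + run] == 0 and run < 255:
                run += 1
            out += bytes([0, run])
            i += run
        else:
            out.append(data[i])
            i += 1
    return bytes(out)


def decompress_zeros(data: bytes) -> bytes:
    out, i = bytearray(), 0
    while i < len(data):
        if data[i] == 0:
            out += bytes(data[i + 1])  # bytes(n) is n zero bytes
            i += 2
        else:
            out.append(data[i])
            i += 1
    return bytes(out)


class AddressCodec:
    """Hypothetical format: 0x00 + 20-byte address on first use,
    0x01 + 4-byte index into the shared history afterwards."""

    def __init__(self) -> None:
        self.history: list[bytes] = []
        self.index: dict[bytes, int] = {}

    def _remember(self, addr: bytes) -> None:
        self.index[addr] = len(self.history)
        self.history.append(addr)

    def encode(self, addr: bytes) -> bytes:
        if addr in self.index:
            return b"\x01" + self.index[addr].to_bytes(4, "big")
        self._remember(addr)
        return b"\x00" + addr

    def decode(self, data: bytes) -> bytes:
        if data[0] == 1:
            return self.history[int.from_bytes(data[1:5], "big")]
        addr = data[1:21]
        self._remember(addr)
        return addr


def encode_amount(wei: int) -> bytes:
    """Hypothetical decimal float: 1-byte base-10 exponent + 3-byte mantissa."""
    mantissa, exponent = wei, 0
    while mantissa and mantissa % 10 == 0 and exponent < 255:
        mantissa //= 10
        exponent += 1
    if mantissa >= 2**24:
        raise ValueError("too many significant digits for this toy format")
    return bytes([exponent]) + mantissa.to_bytes(3, "big")


def decode_amount(data: bytes) -> int:
    return int.from_bytes(data[1:4], "big") * 10 ** data[0]


word = bytes(12) + bytes.fromhex("ab" * 20)  # ABI-style 32-byte word holding an address
print(len(word), len(compress_zeros(word)))  # 32 -> 22

sender, receiver = AddressCodec(), AddressCodec()
alice = bytes.fromhex("11" * 20)
first, second = sender.encode(alice), sender.encode(alice)
decoded = [receiver.decode(first), receiver.decode(second)]
print(len(first), len(second), decoded == [alice, alice])

amount = encode_amount(250_000_000_000_000_000)  # 0.25 ETH in wei
print(amount.hex(), decode_amount(amount))
```

The example gives these results:

- The 12 zero-padding bytes of an ABI-encoded address shrink to 2 bytes.
- A repeated address costs 5 bytes instead of 21.
- The 0.25 ETH amount fits in 4 bytes instead of a 32-byte word.

The sender and receiver codecs must see the same address history in the same order. Keeping that state in sync is the kind of client-code complexity that dynamic compression introduces.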

What are the links to existing research?

Compressing calldata (sequence.xyz): https://sequence.xyz/blog/compressing-calldata

L2 Calldata optimization contract: https://github.com/ScopeLift/l2-optimizoooors

Proof-of-validity based Rollups (aka ZK rollups) publish state diffs instead of transactions: https://ethresear.ch/t/rollup-diff-compression-application-level-compression-strategies-to-reduce-the-l2-data-footprint-on-l1/9975

BLS Wallet - BLS aggregation via ERC-4337: https://github.com/getwax/bls-wallet

What else needs to be done, and what are the trade-offs?

The next major thing to do is to actually implement the above solution. The main trade-offs include:

1. Switching to BLS signatures requires a lot of effort and reduces compatibility with trusted hardware chips that can enhance security. ZK-SNARK wrappers of other signature schemes can be used instead.

2. Dynamic compression (for example, replacing addresses with pointers) complicates client code.

3. Publishing state diffs to the chain instead of transactions reduces auditability and breaks a lot of software (such as block explorers).

How does it interact with the rest of the roadmap?

Adopting ERC-4337, and eventually enshrining parts of it in L2 EVMs, could greatly speed up the deployment of aggregation techniques. Enshrining parts of ERC-4337 on L1 could speed up its deployment on L2s.

Generalized Plasma

What problem are we solving?

Even with 16MB blobs and data compression, 58,000 TPS may not be enough to fully meet the needs of consumer payments, decentralized social, or other high-bandwidth areas, especially when we start to consider privacy factors, which may reduce scalability by 3-8 times. For high-transaction volume, low-value use cases, one current option is to use Validium, which stores data off-chain and adopts an interesting security model: operators cannot steal users' funds, but they may temporarily or permanently freeze all users' funds. But we can do better.

What is it and how does it work?

Plasma is a scaling solution in which an operator publishes blocks off-chain and puts only the Merkle roots of those blocks on-chain (unlike a Rollup, which puts the full block on-chain). For each block, the operator sends each user a Merkle branch proving what has, or has not, changed about that user's assets. Users can withdraw their assets by providing a Merkle branch. Importantly, this branch does not have to be rooted in the latest state. Therefore, even if data availability fails, users can still recover their assets by withdrawing the latest state they have available. A user might submit an invalid branch, for example to withdraw an asset they have already sent to someone else, or the operator might create an asset out of thin air. In either case, an on-chain challenge mechanism can determine who rightfully owns the asset.

Plasma Cash chain diagram. A transaction spending coin i is placed at the i-th position in the tree. In this example, assuming all previous trees are valid, we know that Eve currently owns coin 1, David owns coin 4, and George owns coin 6.
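The Plasma Cash idea of placing the transaction that spends coin i at leaf i can be sketched with an ordinary binary Merkle tree. In the sketch below, each user keeps the branch for their own coins. A branch that ends in an empty leaf shows the coin did not move in that block. This is an illustration, not a Plasma specification. SHA-256, the 8-leaf tree, and the string encoding of transactions are assumptions; real designs use sparse Merkle trees over a much larger coin ID space.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


EMPTY = h(b"")  # leaf value meaning "this coin did not move in this block"


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """All levels of a binary Merkle tree, leaves first, root last.
    Assumes len(leaves) is a power of two."""
    levels = [leaves]
    while len(levels[-1]) > 1:
        prev = levels[-1]
        levels.append([h(prev[i] + prev[i + 1]) for i in range(0, len(prev), 2)])
    return levels


def branch(levels: list[list[bytes]], index: int) -> list[bytes]:
    """Sibling hashes on the path from leaf `index` up to the root."""
    path = []
    for level in levels[:-1]:
        path.append(level[index ^ 1])
        index //= 2
    return path


def verify(root: bytes, leaf: bytes, index: int, path: list[bytes]) -> bool:
    """Recompute the root from a leaf and its branch."""
    node = leaf
    for sibling in path:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root


# One block of a toy 8-coin Plasma Cash chain: the spend of coin i sits at leaf i.
leaves = [EMPTY] * 8
leaves[1] = h(b"coin 1 -> Eve")
leaves[4] = h(b"coin 4 -> David")
leaves[6] = h(b"coin 6 -> George")
levels = build_levels(leaves)
root = levels[-1][0]  # only this root goes on-chain

eve_proof = branch(levels, 1)  # the operator hands Eve the branch for coin 1
print(verify(root, leaves[1], 1, eve_proof))      # inclusion of the coin-1 spend
print(verify(root, EMPTY, 3, branch(levels, 3)))  # coin 3 did not move in this block
```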

Early versions of Plasma were only able to handle payment use cases and could not be effectively generalized further. However, if we require each root to be verified with a SNARK, Plasma becomes much more powerful. Each challenge game can be greatly simplified because we exclude most possible paths for the operator to cheat. At the same time, new paths are opened up to enable Plasma technology to be extended to a wider range of asset classes. Finally, in the case where the operator does not cheat, users can withdraw their funds immediately without having to wait for a week-long challenge period.

One way (not the only way) to make an EVM Plasma chain: Use ZK-SNARK to build a parallel UTXO tree that reflects the balance changes made by the EVM and defines a unique mapping of the "same token" at different points in history. Then you can build a Plasma structure on it.

A key insight is that Plasma systems don’t need to be perfect. Even if you can only secure a subset of assets (e.g. just tokens that haven’t moved in the past week), you’ve already made a significant improvement over the current state of the hyper-scalable EVM (i.e. Validium).

Another class of constructions is hybrid Plasma/Rollup, such as Intmax. These constructions put very small amounts of data per user on-chain (e.g., 5 bytes), and in doing so achieve some properties between Plasma and Rollup: in the case of Intmax, you get very high scalability and privacy, although even at 16 MB you are theoretically limited to about 16,000,000 / 12 / 5 = 266,667 TPS.

What are some relevant links to existing research?

Original Plasma paper: https://plasma.io/plasma-deprecated.pdf

Plasma Cash: https://ethresear.ch/t/plasma-cash-plasma-with-much-less-per-user-data-checking/1298

Plasma Cashflow: https://hackmd.io/DgzmJIRjSzCYvl4lUjZXNQ?view#🚪-Exit

Intmax (2023): https://eprint.iacr.org/2023/1082

What else needs to be done? What are the trade-offs?

The main remaining task is to bring Plasma systems into real production use. As mentioned above, "Plasma vs. Validium" is not a binary choice. Any Validium can improve its security properties at least somewhat by adding Plasma features to its exit mechanism. Research focuses on obtaining the best possible properties for the EVM, and for alternative application-specific constructions. The properties in question are trust requirements, worst-case L1 gas costs, and resistance to DoS attacks. In addition, Plasma is conceptually more complex than Rollups. This needs to be addressed directly, through research and by building better general frameworks.

The main tradeoff of Plasma designs is that they depend more on operators and are harder to make "based" (sequenced by L1 proposers). Hybrid Plasma/Rollup designs can often avoid this weakness.

How does it interact with the rest of the roadmap?

The more effective Plasma solutions are, the less pressure there is on L1 to provide high-performance data availability. Moving activity to L2 also reduces MEV pressure on L1.

Mature L2 proof system

What problem are we solving?

Currently, most Rollups are not actually trustless. A security council has the ability to override the behavior of the (optimistic or validity) proof system. In some cases, the proof system does not run at all, or if it does, it only has an "advisory" role. The most advanced Rollups are:

(i) some application-specific Rollups that are trustless, such as Fuel;
(ii) as of this writing, Optimism and Arbitrum, two full-EVM Rollups that have reached a partial-trustlessness milestone known as "Stage 1".

Rollups have not gone further because of concern about bugs in the code. We need trustless Rollups, so we must face and solve this problem.

What is it and how does it work?

First, let’s review the “stage” system initially introduced in this article.

Stage 0: Users must be able to run a node and sync the chain. It is fine if validation is fully trusted/centralized.

Stage 1: There must be a (trustless) proof system that ensures only valid transactions are accepted. A security council that can override the proof system is allowed, but only with a 75% threshold vote. In addition, a quorum-blocking portion of the council (i.e. 26%+) must be outside the main company building the Rollup. A less powerful upgrade mechanism (such as a DAO) is allowed, but it must have a long enough delay that, if it approves a malicious upgrade, users can withdraw their funds before the upgrade goes live.

Stage 2: There must be a (trustless) proof system that ensures only valid transactions are accepted. The security council may only intervene in the case of provable bugs in the code. Examples: two redundant proof systems disagree with each other, or one proof system accepts two different post-state roots for the same block, or it accepts nothing for a long enough period (e.g. a week). Upgrade mechanisms are allowed, but must have very long delays.
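The two numeric Stage 1 requirements on the security council can be checked mechanically. The sketch below is one reading of the rules as stated: an override needs at least 75% of signers, and a quorum-blocking set (enough members to stop the threshold being reached) sits outside the main team. The `SecurityCouncil` type and the example council sizes are hypothetical, and this is not an official stage checker.

```python
from dataclasses import dataclass


@dataclass
class SecurityCouncil:
    members: int          # total number of signers
    threshold: int        # signatures required to override the proof system
    outside_members: int  # signers independent of the main company building the Rollup


def blocking_size(c: SecurityCouncil) -> int:
    """Smallest number of signers who, by refusing to sign, stop an override."""
    return c.members - c.threshold + 1


def meets_stage1_council_rules(c: SecurityCouncil) -> bool:
    # Override requires at least 75% of signers (integer-safe form of t/n >= 3/4).
    high_threshold = 4 * c.threshold >= 3 * c.members
    # Outsiders alone must be able to block an override (the "26%+" requirement).
    outsiders_can_block = c.outside_members >= blocking_size(c)
    return high_threshold and outsiders_can_block


print(meets_stage1_council_rules(SecurityCouncil(members=8, threshold=6, outside_members=3)))
print(meets_stage1_council_rules(SecurityCouncil(members=8, threshold=6, outside_members=2)))
print(meets_stage1_council_rules(SecurityCouncil(members=8, threshold=5, outside_members=8)))
```

The first council passes: 6 of 8 is exactly 75%, and 3 outside members can block. The second fails because only 2 members are outside. The third fails because a 5-of-8 threshold is below 75%, regardless of who holds the keys.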

Our goal is to reach Stage 2. The main challenge in getting there is gaining enough confidence that the proof system is actually trustworthy enough. There are two main ways to do this:

Formal Verification: We can use modern mathematical and computational techniques to prove that an (optimistic or validity) proof system only accepts blocks that are valid under the EVM specification. These techniques have existed for decades. Recent advances (such as Lean 4) have made them much more practical, and progress in AI-assisted proving may accelerate this trend further.

Multi-provers: Build multiple proof systems, and put funds under a multisig (e.g. 2-of-3) shared between these proof systems and a security council (and/or other gadgets with trust assumptions, such as TEEs). If the proof systems agree, the security council has no power. If they disagree, the security council can only choose one of their answers; it cannot unilaterally impose its own.

A stylized diagram of multi-provers, combining an optimistic proof system, a validity proof system, and a security council.
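The multi-prover rule is small enough to write out: the security council can only pick between the proof systems' answers, never impose its own. The function below is a hypothetical sketch of that decision logic, with state roots as plain strings. It ignores timeouts, signatures, and everything else a real contract would need.

```python
from typing import Optional


def resolve_state_root(
    optimistic_root: Optional[str],
    validity_root: Optional[str],
    council_pick: Optional[str] = None,
) -> Optional[str]:
    """Hypothetical multi-prover resolution rule.

    - Both proof systems agree: their root is final and the council has no say.
    - They disagree (or one produced nothing): the council may only select one
      of the roots the proof systems actually produced.
    - Anything else finalizes nothing.
    """
    if optimistic_root is not None and optimistic_root == validity_root:
        return optimistic_root
    produced = {r for r in (optimistic_root, validity_root) if r is not None}
    if council_pick is not None and council_pick in produced:
        return council_pick
    return None


print(resolve_state_root("0xaa", "0xaa", council_pick="0xbb"))  # agreement wins
print(resolve_state_root("0xaa", "0xcc", council_pick="0xcc"))  # council picks one
print(resolve_state_root("0xaa", "0xcc", council_pick="0xdd"))  # cannot invent a root
```

Keeping this combining logic tiny is the point. It is the one piece of code that must be trusted even if one proof system is broken.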

What are the links to existing research?

EVM K Semantics (formal verification work from 2017): https://github.com/runtimeverification/evm-semantics

Talk on the idea of multiple proofs (2022): https://www.youtube.com/watch?v=6hfVzCWT6YI

Taiko plans to use multi-proofs: https://docs.taiko.xyz/core-concepts/multi-proofs/

What else needs to be done? What are the trade-offs?

There is a lot of work to do on formal verification. We need to create a formally verified version of an entire SNARK prover for the EVM. This is an extremely complex project, though one we have already started. One trick can greatly simplify the task. We can build a formally verified SNARK prover for a minimal virtual machine (such as RISC-V or Cairo). We then implement the EVM in that minimal VM, and formally prove its equivalence to some other EVM specification.

For multi-provers, two major pieces of work remain. First, we need enough confidence in at least two different proof systems. Each must be reasonably safe on its own, and if they break, they must break for different and unrelated reasons, so that they don't break at the same time. Second, we need very high assurance in the underlying logic that combines the proof systems. This is a much smaller piece of code. There are ways to make it extremely small, such as simply storing funds in a Safe multisig contract whose signers are contracts representing the individual proof systems. The cost is higher on-chain gas. We need to find a balance between efficiency and security.

How does it interact with the rest of the roadmap?

Moving activity to L2 reduces the MEV pressure on L1.

Cross-L2 interoperability improvements

What problem are we solving?

A major challenge facing today’s L2 ecosystem is that it is difficult for users to navigate. Furthermore, the easiest ways of navigating it often reintroduce trust assumptions: centralized bridges, RPC clients, and so on. We need to make using the L2 ecosystem feel like using a single, unified Ethereum ecosystem.

What is it? How does it work?

There are many categories of cross-L2 interoperability improvements. In theory, Rollup-centric Ethereum is the same thing as an L1 with sharding. In practice, today's Ethereum L2 ecosystem is still far from that ideal. Improvements include:

1. Chain-specific addresses: An address should include information about the chain (L1, Optimism, Arbitrum...). Once this is in place, a cross-L2 send can be done by simply putting the address into the "Send" field. The wallet can then work out how to do the send in the background, including using bridging protocols.

2. Chain-specific payment requests: It should be easy to create standardized messages of the form "send me X tokens of type Y on chain Z". This has two main use cases: (i) payments, whether person-to-person or person-to-merchant; (ii) DApps requesting funds.

3. Cross-chain exchange and gas payment: There should be a standardized open protocol to express cross-chain operations, such as "I will send 1 ether (on Optimism) to the person who sent me 0.9999 ether on Arbitrum", and "I will send 0.0001 ether (on Optimism) to the person who included this transaction on Arbitrum." ERC-7683 is an attempt at the former, and RIP-7755 is an attempt at the latter, although both have wider applications than these specific use cases.

4. Light Clients: Users should be able to actually verify the chain they are interacting with, rather than just trusting the RPC provider. a16z crypto’s Helios can do this (for Ethereum itself), but we need to extend this trustlessness to L2. ERC-3668 (CCIP-read) is one strategy to achieve this.

How a light client updates its view of the Ethereum header chain. Once you have the header chain, you can use Merkle proofs to verify any state object. Once you have the correct L1 state object, you can use Merkle proofs (and signatures if you want to check pre-confirmations) to verify any state object on L2. Helios already does the former. Expanding to the latter is a standardization challenge.

5. Keystore wallets: Today, if you want to update the keys that control your smart contract wallet, you have to do so on all N chains where the wallet exists. Keystore wallets let the keys live in just one place, either on L1 or possibly later on an L2. Any L2 that has a copy of the wallet can then read the keys from there, so updates only need to be done once. To be efficient, keystore wallets require L2s to have a standardized way to read L1 at negligible cost. Two proposals for this are L1SLOAD and REMOTESTATICCALL.

How Keystore Wallet Works

6. A more radical "shared token bridge" idea: Imagine a world where all L2s are validity-proof Rollups that commit to Ethereum every slot. Even in this world, moving an asset natively from one L2 to another still requires a withdrawal and a deposit, which costs a substantial amount of L1 gas. One way to solve this is a shared minimal Rollup whose only function is to track how much of each token each L2 owns. Any L2 could initiate a series of cross-L2 send operations, and these balances would be updated in batches. Cross-L2 transfers would then need neither L1 gas for every transfer nor liquidity-provider-based techniques such as ERC-7683.

7. Synchronous composability: Allow synchronous calls between a specific L2 and L1, or between multiple L2s. This could improve the financial efficiency of DeFi protocols. The former can be done without any cross-L2 coordination; the latter requires shared sequencing. Based Rollups are automatically friendly to all of these techniques.
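The batching idea behind a shared token bridge can be sketched as netting: many cross-L2 sends collapse into one balance update per (L2, token) pair. The function below is a hypothetical illustration, not AggLayer's or any other project's design. It only checks that final balances stay non-negative, and the L2 names and amounts are made up.

```python
from collections import defaultdict

Key = tuple[str, str]  # (L2 name, token symbol)


def apply_batch(balances: dict[Key, int],
                transfers: list[tuple[str, str, str, int]]) -> dict[Key, int]:
    """Apply a batch of (from_l2, to_l2, token, amount) sends as one net update.

    Only the net result is checked: an L2 may temporarily 'owe' within the batch
    as long as its final balance for each token is non-negative.
    """
    delta: dict[Key, int] = defaultdict(int)
    for src, dst, token, amount in transfers:
        if amount <= 0:
            raise ValueError("transfer amounts must be positive")
        delta[(src, token)] -= amount
        delta[(dst, token)] += amount
    updated = dict(balances)
    for key, change in delta.items():
        updated[key] = updated.get(key, 0) + change
        if updated[key] < 0:
            raise ValueError(f"{key} would end the batch with a negative balance")
    return updated


before = {("optimism", "ETH"): 100, ("arbitrum", "ETH"): 50}
batch = [
    ("optimism", "arbitrum", "ETH", 30),
    ("arbitrum", "optimism", "ETH", 10),
    ("arbitrum", "base", "ETH", 5),
]
after = apply_batch(before, batch)
print(after)
```

Three transfers touch three (L2, token) pairs here. With many more transfers among the same L2s, the number of balance updates stays fixed while the per-transfer L1 cost shrinks.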

What are the links to existing research?

Chain-specific addresses (ERC-3770): https://eips.ethereum.org/EIPS/eip-3770

ERC-7683: https://eips.ethereum.org/EIPS/eip-7683

RIP-7755: https://github.com/wilsoncusack/RIPs/blob/cross-l2-call-standard/RIPS/rip-7755.md

Scroll keystore wallet design: https://hackmd.io/@haichen/keystore

Helios: https://github.com/a16z/helios

ERC-3668 (aka CCIP-read): https://eips.ethereum.org/EIPS/eip-3668

Justin Drake’s “based (shared) preconfirmations” proposal: https://ethresear.ch/t/based-preconfirmations/17353

L1SLOAD (RIP-7728): https://ethereum-magicians.org/t/rip-7728-l1sload-precompile/20388

REMOTESTATICCALL in Optimism: https://github.com/ethereum-optimism/ecosystem-contributions/issues/76

AggLayer, which includes the idea of a shared token bridge: https://github.com/AggLayer

What else needs to be done? What are the trade-offs?

Many of the examples above face the dilemma of when to standardize and which layers to standardize. If you standardize too early, you risk entrenching a poor solution. If you standardize too late, you risk creating unnecessary fragmentation. In some cases, there is both a short-term solution with weaker properties but easier to implement, and a long-term solution that is "eventually correct" but will take years to implement.

These tasks are not just technical problems; they are also (perhaps even primarily) social problems that require L2s, wallets, and L1 to cooperate.

How does it interact with the rest of the roadmap?

Most of these proposals are “higher layer” constructs and therefore have little impact on L1 considerations. One exception is shared ordering, which has a significant impact on Maximum Extractable Value (MEV).

Scaling execution on L1

What problem are we solving?

If L2 becomes very scalable and successful, but L1 is still only able to handle very small transaction volumes, there are a number of risks that could arise for Ethereum:

1. ETH's economic position as an asset becomes riskier, which in turn affects the long-term security of the network.

2. Many L2s benefit from close ties to the highly developed financial ecosystem on L1. If this ecosystem is greatly weakened, the incentive to become an L2 (rather than an independent L1) will be weakened.

3. It will take a long time for L2 to achieve exactly the same security as L1.

4. If an L2 fails (for example, because its operator is malicious or disappears), users still need to go through L1 to recover their assets. L1 therefore needs to be powerful enough to actually handle the highly complex and messy wind-down of an L2, at least occasionally.

For these reasons, it is extremely valuable to continue to scale L1 itself and ensure that it can continue to accommodate an increasing number of use cases.

What is it and how does it work?

The simplest way to scale is to increase the gas limit. However, this risks centralizing L1, undermining another important property that makes Ethereum L1 so powerful: its credibility as a robust base layer. There is ongoing debate about how far the gas limit can sustainably be raised, and the answer depends on which other technologies are implemented to make larger blocks easier to verify (e.g., history expiration, statelessness, L1 EVM validity proofs). Another thing that must keep improving is the efficiency of Ethereum client software, which is far more optimized today than it was five years ago. An effective strategy for raising the L1 gas limit will involve accelerating the development of these verification technologies.

A second strategy is to identify specific features and types of computation that can be made cheaper without harming the network's decentralization or security properties. Examples include:

EOF: A new EVM bytecode format that is friendlier to static analysis and allows faster implementations. EOF bytecode could be assigned lower gas costs to reflect these efficiency gains.

Multi-dimensional Gas Pricing: Setting different base fees and limits for computation, data, and storage can increase the average capacity of Ethereum L1 without increasing the maximum capacity (thus avoiding the creation of new security risks).

Lowering Gas Costs for Specific Opcodes and Precompiles: Historically, we have raised the gas cost of underpriced operations several times to prevent denial-of-service attacks. Something we could do more of is lowering the gas cost of overpriced opcodes. For example, addition is much cheaper to compute than multiplication, yet ADD costs 3 gas while MUL costs only slightly more at 5 gas. We could make ADD cheaper, and simpler opcodes such as PUSH cheaper still. EOF as a whole is more optimized in this regard.

EVM-MAX and SIMD: EVM-MAX is a proposal to allow more efficient native big-number modular arithmetic as a separate module of the EVM. Unless deliberately exported, values computed by EVM-MAX operations can only be accessed by other EVM-MAX opcodes, which leaves more room to store them in optimized formats. SIMD (single instruction, multiple data) is a proposal to allow the same instruction to be executed efficiently over an array of values. Together, the two could form a powerful coprocessor alongside the EVM for implementing cryptographic operations more efficiently. This is particularly useful for privacy protocols and L2 proof systems, so it would help scale both L1 and L2.
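To make the multi-dimensional gas pricing item above more concrete, here is a minimal Python sketch. It assumes three hypothetical resources (compute, data, storage) with made-up limits and targets, and applies an EIP-1559-style base fee update to each resource independently; it illustrates the idea only and is not the mechanism specified in EIP-7706 or any other proposal. Each resource keeps its own cap, so no single resource can exceed what a full single-dimensional block could already contain, yet a block that mixes resources can carry more total load.

```python
from dataclasses import dataclass

# Hypothetical resources for illustration; real proposals (e.g. EIP-7706)
# define their own resource dimensions and parameters.
RESOURCES = ("compute", "data", "storage")
BASE_FEE_MAX_CHANGE_DENOMINATOR = 8  # same adjustment speed as EIP-1559


@dataclass
class Block:
    usage: dict[str, int]  # resource name -> units consumed by the block


def fits_one_dimensional(block: Block, limit: int) -> bool:
    """Single gas limit: every resource draws from the same shared budget."""
    return sum(block.usage.values()) <= limit


def fits_multidimensional(block: Block, limits: dict[str, int]) -> bool:
    """Separate limits: each resource is capped on its own."""
    return all(block.usage.get(r, 0) <= limits[r] for r in RESOURCES)


def update_base_fees(base_fees: dict[str, int], block: Block,
                     targets: dict[str, int]) -> dict[str, int]:
    """Apply the EIP-1559 update rule independently to each resource."""
    new_fees = {}
    for r in RESOURCES:
        base, used, target = base_fees[r], block.usage.get(r, 0), targets[r]
        if used > target:
            # Demand above target: raise this resource's fee by up to 1/8.
            delta = base * (used - target) // target // BASE_FEE_MAX_CHANGE_DENOMINATOR
            new_fees[r] = base + max(delta, 1)
        else:
            # Demand at or below target: lower this resource's fee by up to 1/8.
            delta = base * (target - used) // target // BASE_FEE_MAX_CHANGE_DENOMINATOR
            new_fees[r] = base - delta
    return new_fees


if __name__ == "__main__":
    LIMIT = 30_000_000  # hypothetical single-dimensional gas limit
    limits = {r: LIMIT for r in RESOURCES}  # per-resource worst case unchanged
    targets = {r: LIMIT // 2 for r in RESOURCES}

    mixed = Block({"compute": 20_000_000, "data": 20_000_000, "storage": 0})
    print(fits_one_dimensional(mixed, LIMIT))    # False: 40M > 30M combined
    print(fits_multidimensional(mixed, limits))  # True: each resource <= 30M
    print(update_base_fees({r: 1000 for r in RESOURCES}, mixed, targets))
    # {'compute': 1041, 'data': 1041, 'storage': 875}
```

The mixed block is rejected under a single shared limit but accepted under per-resource limits, while a block using 31M units of any one resource is still rejected. That is the sense in which average capacity rises without raising the per-resource worst case; each fee then adjusts only to demand for its own resource.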

These improvements will be discussed in more detail in a future Splurge article.
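The EVM-MAX item above notes that values which never leave the EVM-MAX module can be kept in an optimized internal format. One well-known format for fast modular multiplication is Montgomery form, and the sketch below assumes it purely for illustration: the class and method names are hypothetical, not EVM-MAX opcodes, and this is not a description of the EIP-6601 design. Values are converted in once, every multiplication stays in the internal representation, and conversion back happens only on an explicit export, mirroring the "unless deliberately exported" rule.

```python
class MontgomeryContext:
    """Modular arithmetic with values kept in Montgomery form (x * R mod N).

    Hypothetical illustration of an "optimized internal format"; the class
    and method names are not EVM-MAX opcodes.
    """

    def __init__(self, modulus: int):
        if modulus < 3 or modulus % 2 == 0:
            raise ValueError("Montgomery form needs an odd modulus > 2")
        self.n = modulus
        self.r_bits = modulus.bit_length()
        self.r = 1 << self.r_bits  # R = 2^k > N, coprime to the odd modulus
        self.mask = self.r - 1
        # n_prime satisfies N * n_prime == -1 (mod R); REDC relies on it.
        self.n_prime = (-pow(modulus, -1, self.r)) % self.r
        self.r2 = (self.r * self.r) % modulus  # R^2 mod N, used to import values

    def _redc(self, t: int) -> int:
        """Montgomery reduction: returns t * R^-1 mod N for 0 <= t < N*R."""
        m = ((t & self.mask) * self.n_prime) & self.mask
        u = (t + m * self.n) >> self.r_bits  # exact division by R
        return u - self.n if u >= self.n else u

    def to_internal(self, x: int) -> int:
        """Import a normal integer into the internal format."""
        return self._redc((x % self.n) * self.r2)

    def export(self, a: int) -> int:
        """Convert an internal value back to a normal integer."""
        return self._redc(a)

    def mul(self, a: int, b: int) -> int:
        """Multiply two internal values; the result stays internal."""
        return self._redc(a * b)

    def add(self, a: int, b: int) -> int:
        """Add two internal values (addition works unchanged in this form)."""
        s = a + b
        return s - self.n if s >= self.n else s


def mod_exp(ctx: MontgomeryContext, base: int, exponent: int) -> int:
    """Square-and-multiply entirely in the internal format, exporting once."""
    result = ctx.to_internal(1)
    b = ctx.to_internal(base)
    while exponent:
        if exponent & 1:
            result = ctx.mul(result, b)
        b = ctx.mul(b, b)
        exponent >>= 1
    return ctx.export(result)
```

Python's built-in `pow` already performs modular exponentiation efficiently; the point of the sketch is the data flow. Because intermediate values are never exposed, they can stay in whatever representation makes the next operation cheapest.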

Finally, the third strategy is native Rollups (or enshrined rollups): essentially, creating many copies of the EVM that run in parallel, resulting in a model equivalent to what a Rollup can provide, but more natively integrated into the protocol.
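A toy Python model can make the "many parallel copies of the EVM" idea and its main limitation concrete. Everything here is hypothetical: each copy is reduced to a balance table keyed by "account/asset", and a transaction is addressed to exactly one copy and applied atomically within it. Because copies share no state, their transactions can be executed independently; the thread pool stands in for parallel execution and, in CPython, illustrates independence rather than a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field

Transfer = tuple[str, str, int]  # (from_account, to_account, amount)


@dataclass
class EvmCopy:
    """Toy stand-in for one EVM instance: just a balance table."""
    balances: dict[str, int] = field(default_factory=dict)

    def apply(self, ops: list[Transfer]) -> bool:
        """Apply all transfers atomically: either every op succeeds or none do."""
        snapshot = dict(self.balances)
        for sender, recipient, amount in ops:
            if self.balances.get(sender, 0) < amount:
                self.balances = snapshot  # revert the whole transaction
                return False
            self.balances[sender] -= amount
            self.balances[recipient] = self.balances.get(recipient, 0) + amount
        return True


@dataclass
class Tx:
    copy_id: int  # a transaction targets exactly one EVM copy
    ops: list[Transfer]


def execute_block(copies: list[EvmCopy], txs: list[Tx]) -> list[bool]:
    """Run each copy's transactions in order; different copies run concurrently."""
    by_copy: dict[int, list[tuple[int, Tx]]] = {i: [] for i in range(len(copies))}
    for idx, tx in enumerate(txs):
        by_copy[tx.copy_id].append((idx, tx))
    results = [False] * len(txs)

    def run(copy_id: int) -> None:
        # Each worker touches only its own copy and its own result slots.
        for idx, tx in by_copy[copy_id]:
            results[idx] = copies[copy_id].apply(tx.ops)

    with ThreadPoolExecutor() as pool:
        list(pool.map(run, range(len(copies))))  # list() surfaces any exception
    return results


if __name__ == "__main__":
    copies = [EvmCopy({"alice/A": 10, "bob/B": 5}), EvmCopy({"carol/A": 3})]
    txs = [
        Tx(0, [("alice/A", "bob/A", 10), ("bob/B", "alice/B", 5)]),  # swap on copy 0
        Tx(1, [("carol/A", "dave/A", 4)]),  # insufficient balance: reverted
    ]
    print(execute_block(copies, txs))  # [True, False]
```

The same structure shows the composability cost: the swap is atomic because both legs live on copy 0, but there is no way to write one `Tx` that atomically moves funds on copy 0 and copy 1. A cross-copy operation would have to be split into separate transactions that can succeed or fail independently.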

What are the links to existing research?

Polynya’s Ethereum L1 Scaling Roadmap: https://polynya.mirror.xyz/epju72rsymfB-JK52_uYI7HuhJ-W_zM735NdP7alkAQ

Multi-dimensional Gas Pricing: https://vitalik.eth.limo/general/2024/05/09/multidim.html

EIP-7706: https://eips.ethereum.org/EIPS/eip-7706

EOF: https://evmobjectformat.org/

EVM-MAX: https://ethereum-magicians.org/t/eip-6601-evm-modular-arithmetic-extensions-evmmax/13168

SIMD: https://eips.ethereum.org/EIPS/eip-616

Native rollups: https://mirror.xyz/ohotties.eth/P1qSCcwj2FZ9cqo3_6kYI4S2chW5K5tmEgogk6io1GE

Max Resnick interview on the value of scaling L1: https://x.com/BanklessHQ/status/1831319419739361321

Justin Drake on scaling with SNARKs and native Rollups: https://www.reddit.com/r/ethereum/comments/1f81ntr/comment/llmfi28/

What else needs to be done, and what are the trade-offs?

There are three strategies for scaling L1, which can be pursued individually or in parallel:

Improve technology (e.g. client code, stateless clients, history expiration) to make L1 easier to verify, then increase the gas limit.

Reduce costs for specific operations, increasing average capacity without increasing worst-case risk.

Native Rollups (i.e., creating N parallel copies of the EVM).

These techniques come with different trade-offs. For example, native Rollups share many of the composability weaknesses of regular Rollups: you cannot send a single transaction that performs operations synchronously across multiple Rollups, as you can with contracts on the same L1 (or L2). Raising the gas limit takes away from other benefits that could be achieved by making L1 easier to verify, such as increasing the share of users who run verifying nodes and increasing the number of solo stakers. Depending on the implementation, making specific operations in the EVM cheaper may increase the EVM's overall complexity.

One of the big questions that any L1 scaling roadmap needs to answer is: what is the ultimate vision for L1 and L2? Obviously, it would be absurd to put everything on L1: potential use cases could involve hundreds of thousands of transactions per second, which would make L1 completely unverifiable (unless we go the native Rollup route). But we do need some guiding principles to ensure that we don’t get into a situation where a 10x increase in the gas limit severely damages the decentralization of Ethereum L1.

Figure: One proposed view of the division of labor between L1 and L2.

How does it interact with the rest of the roadmap?

Bringing more users onto L1 means improving not only its scale but also other aspects of L1. More MEV will stay on L1 (rather than becoming a problem only for L2s), which makes the need to handle MEV explicitly even more pressing. It also greatly increases the value of fast slot times on L1. Finally, this path depends heavily on progress in making L1 easier to verify (the Verge).