A professional investor who worked as an analyst and software engineer wrote an article that was bearish on Nvidia, which was widely forwarded by Twitter celebrities and became a major "culprit" in the plunge of Nvidia's stock. Nvidia's market value evaporated by nearly $600 billion, which is the largest single-day drop for a specific listed company to date.

The investor, Jeffrey Emanuel, argues mainly that DeepSeek has punctured the bullshit created by Wall Street, large technology companies and Nvidia, and that Nvidia is overvalued. "Every investment bank recommends buying Nvidia, like a blind man pointing the way, with no idea what it is talking about."

Jeffrey Emanuel said that Nvidia faces a much bumpier road to maintain its current growth trajectory and margins than its valuation implies. There are five different directions of attack on Nvidia - architectural innovation, customer vertical integration, software abstraction, efficiency breakthroughs, and manufacturing democratization - and the probability that at least one of them will succeed and have a significant impact on Nvidia's margins or growth rates seems high. At the current valuation, the market is not considering these risks.

According to some industry investors, this report turned Emanuel into an overnight celebrity on Wall Street, and many hedge funds paid him $1,000 per hour to hear his views on Nvidia and AI. He talked until his throat was parched, and his eyes grew tired from counting money.

The following is the full report. Please refer to it for further study.

As someone who spent about 10 years as an investment analyst at various long/short hedge funds (including stints at Millennium and Balyasny), and as a math and computer geek who has been working on deep learning since 2010 (back when Geoff Hinton was still talking about restricted Boltzmann machines, everything was still programmed in MATLAB, and researchers were still trying to prove they could get better results at classifying handwritten digits than with support vector machines), I think I have a pretty unique perspective on the development of AI technology and how it relates to stock market equity valuations.

Over the past few years, I've been working more as a developer and have several popular open source projects dealing with various forms of AI models/services (see LLM Aided OCR, Swiss Army Llama, Fast Vector Similarity, Source to Prompt, and Pastel Inference Layer as a few recent examples). Basically, I'm using these cutting-edge models intensively every day. I have 3 Claude accounts so that I don't run out of requests, and I signed up for ChatGPT Pro a few minutes after it went live.

I also try to stay up to date with the latest research and read through all the important technical reports and papers published by the major AI labs. As a result, I think I have a pretty good understanding of the field and how things are developing. At the same time, I have shorted a lot of stocks in my life and have twice won the best idea prize on Value Investors Club (for my TMS long and PDH short ideas, if you have been keeping track).

I say this not to brag, but to establish that I can speak on this subject without coming across as hopelessly naive to either the technical or the professional investor crowd. Of course, there are certainly many people who are better at math/science than I am, and many who are better at long/short investing in the stock market than I am, but I don't think there are many who sit in the middle of the Venn diagram the way I do.

Nonetheless, whenever I meet up with friends and former colleagues in the hedge fund world, the topic quickly turns to Nvidia. It’s not every day that a company rises from obscurity to a market cap that’s larger than the UK, French, or German stock markets combined! Naturally, these friends want to know my thoughts on the subject. Because I’m such a big believer in the long-term transformative impact of this technology—I truly believe it will revolutionize virtually every aspect of our economy and society over the next 5-10 years, and it’s something that’s unprecedented—it’s hard for me to argue that Nvidia’s momentum is going to slow or stop anytime soon.

But even though I have thought valuations were too high for my taste for the past year or more, a recent series of developments has pushed me back towards my usual instinct, which is to be more cautious about the outlook and to question the consensus when it appears to be more than priced in. The saying "what the wise man believes in the beginning, the fool believes in the end" is famous for a reason.

The Bull Case

Before we discuss the developments that give me pause, let's briefly recap the bull case for NVDA stock, which is now known to basically everyone. Deep learning and AI are the most transformative technologies since the internet and are poised to fundamentally change everything in our society. Nvidia has come close to a monopoly in terms of its share of total industry capex spent on training and inference infrastructure.

Some of the largest and most profitable companies in the world, such as Microsoft, Apple, Amazon, Meta, Google, Oracle, etc., have decided to stay competitive in this field at all costs because they simply cannot afford to fall behind. The amount of capital expenditures, electricity consumption, square footage of new data centers, and of course the number of GPUs have all exploded and there seems to be no sign of slowing down. Nvidia is able to earn amazing gross margins of more than 90% on its high-end products for data centers.

We've only scratched the surface of the bull case. There's more going on right now that will make even the already very optimistic more optimistic still. Besides the rise of humanoid robots, there are other factors that most people haven't even considered yet. (I suspect the robots will surprise most people once they can quickly do many tasks that currently require unskilled or even skilled workers: laundry, cleaning, tidying, and cooking; working in teams to complete construction jobs like remodeling bathrooms or building houses; managing warehouses and driving forklifts; and so on.)

One of the main topics smart people are talking about is the rise of the "new scaling laws", which provide a new paradigm for thinking about how computational requirements will grow over time. The original scaling law that has driven progress in AI since the advent of AlexNet in 2012 and the invention of the Transformer architecture in 2017 is the pre-training scaling law: the more tokens we use as training data (now in the trillions), the more parameters the models we train have, and the more compute (FLOPS) we spend training those models on those tokens, the better the final models perform on a wide variety of very useful downstream tasks.
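For readers who want the relationship above in symbols, one commonly cited parametric form from the scaling-law literature (the "Chinchilla"-style fit) is sketched below. It is not taken from this article, and the constants are empirical fits that differ between studies. Here N is the parameter count, D is the number of training tokens, C is the training compute in floating point operations, and E, A, B, alpha, and beta are fitted constants.

```latex
L(N, D) \approx E + \frac{A}{N^{\alpha}} + \frac{B}{D^{\beta}},
\qquad
C \approx 6\,N\,D
```

Under this form the loss L falls as either N or D grows, and the compute budget C ties the two together, which is why more tokens, more parameters, and more compute appear side by side in the description above.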

Not only that, but the improvement is so predictable that leading AI labs like OpenAI and Anthropic know pretty well how good their latest models will be before they even start actual training; in some cases, they can even predict the final model's benchmark scores to within a few percentage points. This "original scaling law" is incredibly important, but it has always raised doubts in the minds of those who use it to project the future.

First, it seems like we've exhausted the world's stock of high-quality training datasets. Of course, that's not entirely true: there are still many old books and journals that have not been properly digitized, or that have been digitized but not properly licensed for use as training data. The problem is that even if you give credit for all of that stuff (say, the sum of all "professionally" produced written content in English from 1500 to 2000), it is not a huge amount in percentage terms when you're talking about a training corpus of nearly 15 trillion tokens, which is the scale used by current cutting-edge models.

To quickly put these numbers in context: Google Books has digitized about 40 million books so far; if an average book has 50,000 to 100,000 words, or 65,000 to 130,000 tokens, then books alone account for 2.6T to 5.2T tokens, though of course a lot of that is already included in the training corpora used by large labs, whether strictly legal or not. There are also a lot of academic papers, with over 2 million on the arXiv site alone. The Library of Congress has over 3 billion pages of digitized newspapers. Add it all up, and the total could be as high as 7T tokens, but since most of that is already included in the training corpora, the remaining "incremental" training data may not be that important in the overall scheme of things.
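The book estimate above is simple enough to reproduce. The sketch below just redoes that arithmetic; the 1.3 tokens-per-word ratio is an assumption inferred from the quoted figures (65,000 tokens for 50,000 words), and real tokenizers vary.

```python
# Back-of-the-envelope token count for digitized books, using the
# figures quoted in the text.
BOOKS = 40_000_000                   # books digitized by Google Books
WORDS_PER_BOOK = (50_000, 100_000)   # low and high estimates
TOKENS_PER_WORD = 1.3                # assumption: 65,000 tokens / 50,000 words


def book_tokens(books: int, words_per_book: int, tokens_per_word: float) -> float:
    """Total tokens for a collection of books of a given average length."""
    return books * words_per_book * tokens_per_word


low = book_tokens(BOOKS, WORDS_PER_BOOK[0], TOKENS_PER_WORD)
high = book_tokens(BOOKS, WORDS_PER_BOOK[1], TOKENS_PER_WORD)

# Express the totals in trillions of tokens.
print(f"{low / 1e12:.1f}T to {high / 1e12:.1f}T tokens")
```

Against a corpus of nearly 15 trillion tokens, even the high end of this range is only about a third of what frontier models already train on.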

Of course, there are other ways to collect more training data. For example, you could automatically transcribe every YouTube video and use that text. While this might help, it would certainly be of much lower quality than a well-respected organic chemistry textbook, which is a useful source of knowledge about the world. So in terms of the original scaling law, we are constantly facing the threat of a "data wall"; while we know we can keep throwing more capital expenditures at GPUs and building more data centers, it is much more difficult to mass-produce useful new human knowledge that is the right complement to existing knowledge. Now, an interesting response is the rise of "synthetic data", that is, text that is itself the output of an LLM. While it seems a bit ridiculous, "getting high on your own supply" as a way to improve model quality does work very well in practice, at least in the fields of mathematics, logic, and computer programming.

The reason, of course, is that these are domains where we can mechanically check and prove the correctness of things. So we can sample from the vast space of possible mathematical theorems or Python scripts, actually check whether each one is correct, and include only the correct ones in our corpus. In this way, we can greatly expand the set of high-quality training data, at least in these domains.
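A minimal sketch of the "generate, verify, keep only what passes" idea described above. Everything here is illustrative: the candidate strings stand in for LLM outputs, and `passes_all_tests` and `filter_verified` are hypothetical helper names, not part of any lab's actual pipeline. A real system would run candidates in a sandbox rather than calling `exec` directly.

```python
from typing import List, Tuple

TestCase = Tuple[int, int]  # (input, expected output)


def passes_all_tests(source: str, func_name: str, tests: List[TestCase]) -> bool:
    """Run a candidate program and check it against known-good test cases."""
    namespace: dict = {}
    try:
        # NOTE: exec on untrusted code is unsafe; this is only a toy.
        exec(source, namespace)
        func = namespace[func_name]
        return all(func(x) == expected for x, expected in tests)
    except Exception:
        # Syntax errors, runtime errors, or missing functions all count as failures.
        return False


def filter_verified(candidates: List[str], func_name: str,
                    tests: List[TestCase]) -> List[str]:
    """Keep only the candidates that are mechanically verified as correct."""
    return [src for src in candidates if passes_all_tests(src, func_name, tests)]


# Stand-ins for model-generated samples (hypothetical).
candidates = [
    "def square(x):\n    return x * x\n",   # correct
    "def square(x):\n    return x + x\n",   # wrong for most inputs
    "def square(x):\n    return x ** 2\n",  # correct
    "def square(x)\n    return x * x\n",    # does not even parse
]
tests = [(0, 0), (2, 4), (3, 9), (-4, 16)]

corpus = filter_verified(candidates, "square", tests)
print(f"kept {len(corpus)} of {len(candidates)} candidates")
```

The filter is only as good as the checker, which is why this approach works best in domains such as math and code where correctness can be tested mechanically.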

In addition to text, there are all kinds of other data we can use to train AI. For example, what if we took the entire genome sequence data of 100 million people (about 200GB to 300GB of uncompressed data for one person) and used it to train AI? This is obviously a lot of data, even though most of it is almost exactly the same between two people. Of course, comparisons with text data from books and the Internet can be misleading for a variety of reasons:

Raw genome size is not directly comparable to token counts

The information content of genomic data is very different from text

The training value of highly redundant data is unclear

The computational requirements for processing genomic data are also different

But it's still another huge source of information that we can train on in the future, which is why I included it.

So while we can expect to have access to more and more additional training data, if you look at the rate at which training corpora have grown in recent years, we will soon hit a bottleneck in the availability of "generally useful" knowledge data, the kind that can help us get closer to our ultimate goal of an artificial superintelligence that is 10 times smarter than John von Neumann and a world-class expert in every specialized field known to man.

Besides the limited data available, there are other concerns lurking in the minds of proponents of the pre-training scaling law. One of them is: what do you do with all that computing infrastructure after you've finished training your model? Train the next model? Sure, you can do that, but given the rapid improvement in GPU speed and capacity, and the importance of electricity and other operating costs in the economics of computing, does it really make sense to use a 2-year-old cluster to train a new model? Of course, you'd rather use that brand new data center you just built, which costs 10 times as much as the old one and has 20 times the performance because of more advanced technology. The thing is, at some point you do need to amortize the upfront cost of these investments and recoup them through a (hopefully positive) operating profit stream, right?

The market has been so excited about AI that it has lost sight of this, allowing companies like OpenAI to accumulate operating losses from the outset while receiving increasingly higher valuations in subsequent investments (and, to their credit, also demonstrating very rapidly growing revenues). But ultimately, to sustain this over a full market cycle, these data centers need to eventually recover their costs, and ideally also turn a profit, so that over time they can compete on a risk-adjusted basis with other investment opportunities.

New Paradigm

OK, so that's the pre-training scaling law. So what is this "new" scaling law? Well, it's something people have only started to pay attention to in the past year: inference-time compute scaling. Before this, the vast majority of compute you spent in the process was the upfront training compute to create the model. Once you have a trained model, doing inference on that model (i.e. asking a question or having the LLM do some kind of task for you) only uses a certain amount of compute.

Importantly, the total amount of inference compute (measured in various ways, such as FLOPS, GPU memory usage, etc.) is much lower than the amount of compute required during the pre-training phase. Of course, the amount of inference compute does increase as you increase the context window size of the model and the amount of output it generates at once (although researchers have made amazing algorithmic improvements here relative to the quadratic scaling people initially expected). But basically, until recently, inference compute was generally much less intensive than training compute, and scaled roughly linearly with the number of requests processed: the more requests for ChatGPT text completion, for example, the more inference compute consumed.

Everything changed with the introduction of the revolutionary Chain-of-Thought (COT) models last year, most notably OpenAI's flagship model O1 (more recently, DeepSeek's new model R1 also uses this technology, which we will discuss in detail later). Instead of the amount of inference compute being directly proportional to the length of the output text generated by the model (scaling up for larger context windows, model sizes, etc.), these new COT models also generate intermediate "logic tokens"; think of them as a kind of "temporary memory" or "internal monologue" that the model uses while it is trying to solve your problem or complete your assigned task.

This represents a real revolution in the way inference compute works: now, the more tokens you use in this internal thought process, the better the quality of the final output you provide to the user. In practice, it's like giving a worker more time and resources to complete a task so that they can double-check their work, complete the same basic task in multiple different ways and verify that the results agree, "plug" the result into a formula to check that it actually solves the equation, and so on.

This approach has proven to be almost amazingly effective; it leverages the long-awaited power of reinforcement learning, as well as the power of the Transformer architecture. It directly addresses one of the biggest weaknesses of the Transformer model, its tendency to hallucinate.

Basically, because of the way Transformers predict the next token at each step, if they start going down a wrong "path" in their initial response, they become almost like a prevaricating child, trying to make up a story to explain why they were actually correct, even though they should have used common sense along the way to realize that what they said couldn't possibly be correct.

Because the models are always trying to be internally consistent and make each successive generated token flow naturally from the previous tokens and context, it’s very hard for them to course-correct and backtrack. By breaking the reasoning process down into many intermediate stages, they can try many different approaches, see what works, and keep course-correcting and trying other approaches until they can reach a reasonably high level of confidence that they’re not talking nonsense.

What's so special about this approach, besides the fact that it actually works, is that the more logic/COT tokens you use, the better it gets. Suddenly, you have an extra dial to turn: as the number of COT reasoning tokens increases (which requires more inference compute, both in floating point operations and in memory), so does the probability that you'll get the right answer, whether that means code that runs without errors the first time or a solution to a logic problem with no obviously wrong inference steps.
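One simplified way to see why spending more inference compute can raise accuracy is majority voting over several independent attempts, which mirrors the idea of solving the same task in multiple ways and checking that the answers agree. This is a stand-in illustration, not how O1 or R1 actually work internally; the per-attempt accuracy `p` and the independence of attempts are both assumptions.

```python
from math import comb


def majority_vote_accuracy(p: float, n: int) -> float:
    """Probability that a strict majority of n independent attempts is correct.

    p is the (assumed) probability that a single attempt is correct;
    n must be odd so that a strict majority always exists.
    """
    if n % 2 == 0:
        raise ValueError("n must be odd")
    k_min = n // 2 + 1  # smallest number of correct attempts that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


# More attempts means more inference compute and, if p > 0.5, higher accuracy.
for n in (1, 3, 9, 27):
    print(f"{n:>2} attempts -> {majority_vote_accuracy(0.6, n):.3f}")
```

With a single-attempt accuracy of 0.6, three attempts already lift the majority answer to 0.648, and the gain keeps growing with more attempts, at the price of proportionally more compute per request.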

I can tell you from a lot of first-hand experience that, while Anthropic's Claude 3.5 Sonnet model is great for Python programming (it really is), it always makes one or more silly mistakes whenever you need to generate any lengthy and complex code. Now, these mistakes are usually easy to fix, and in fact, you can usually fix them just by feeding the error generated by the Python interpreter back in as a follow-up prompt, without any further explanation (or, more practically, by pasting in the full set of "problems" that the linter in your code editor found in the code). When the code gets very long or very complex, it sometimes takes a lot longer to fix, and may even require some manual debugging.

The first time I tried OpenAI’s O1 model, it was like a revelation: I was amazed at how well the code worked the first time. This is because the COT process automatically finds and fixes problems before the final response token is included in the answer given by the model.

In fact, the O1 model used in OpenAI's ChatGPT Plus subscription service ($20 per month) is essentially the same model as the O1-Pro model in the new ChatGPT Pro subscription service (which costs 10 times as much, or $200 per month, and has caused an uproar in the developer community); the main difference is that O1-Pro thinks longer before responding, generates more COT logic tokens, and consumes far more inference compute for each response.

This is quite remarkable, because even for Claude 3.5 Sonnet or GPT-4o, a very long and complex prompt with ~400kb+ of context will typically take less than 10 seconds to start responding, and often less than 5 seconds, whereas the same prompt for O1-Pro can take over 5 minutes to get a response (although OpenAI does show you some of the "reasoning steps" it generates along the way while you're waiting; importantly, OpenAI has decided, for trade secret reasons, to hide the exact reasoning tokens it generates and instead show you a highly simplified summary).

As you might imagine, there are many situations where accuracy is critical: you'd rather give up and tell the user you simply can't do it than give an answer that could be easily proven wrong, or one that involves hallucinated facts or other specious reasoning. Anything involving money/transactions, medicine, or law falls into this category, to name a few.

Basically, as long as the cost of inference is negligible relative to the fully loaded hourly salary of the human knowledge worker interacting with the AI system, then invoking COT compute becomes a complete no-brainer (the main disadvantage is that it will greatly increase the latency of the response, so in some cases you may prefer to iterate faster by getting a response with lower latency and less accuracy or correctness).

A few weeks ago, there was some exciting news in the AI world involving OpenAI's yet-to-be-released O3 model, which was able to solve a range of problems that were previously thought to be out of reach for existing AI methods in the near term. OpenAI was able to solve these toughest problems (including extremely difficult "foundational" math problems that are hard even for very skilled professional mathematicians) because it threw a lot of computing resources at them, in some cases spending more than $3,000 in compute to solve a single task (by comparison, traditional inference for a single task with a regular Transformer model, without chain-of-thought, is unlikely to cost more than a few dollars).

It doesn't take an AI genius to realize that this progress has created a whole new scaling law that is completely different from the original pre-training scaling law. Now, you still want to train the best models possible by cleverly leveraging as many compute resources as possible and as many trillions of tokens of high-quality training data as possible, but this is just the beginning of the story in this new world; now you can easily use an incredible amount of compute just to run inference on these models at a very high level of confidence, or to try to solve extremely difficult problems that require "genius-level" reasoning, avoiding all the potential pitfalls that might lead an average LLM astray.

But why should Nvidia get all the benefits?

Even if you believe, as I do, that the future promise of AI is almost unimaginable, the question remains: “Why should one company capture the majority of the profits from this technology?” There have indeed been many important new technologies in history that have changed the world, but the main winners have not been the companies that seemed most promising in their initial stages. Although the Wright brothers’ aircraft company invented and perfected the technology, today that company is worth less than $10 billion, despite having morphed into multiple companies. While Ford has a respectable market cap of $40 billion today, that’s only 1.1% of Nvidia’s current market cap.

To understand this, you have to really understand why Nvidia has such a large market share. After all, they are not the only company making GPUs. AMD makes decent GPUs that are comparable in transistor count, process node, etc. to Nvidia. Sure, AMD GPUs are not as fast or advanced as Nvidia GPUs, but Nvidia GPUs are not 10 times faster or anything like that. In fact, in terms of raw cost per FLOP, AMD GPUs are only half as expensive as Nvidia GPUs.

Looking at other semiconductor markets, such as the DRAM market: although that market is highly concentrated, with only three global companies (Samsung, Micron, SK Hynix) of practical significance, gross margins in DRAM are negative at the bottom of the cycle, about 60% at the top of the cycle, and around 20% on average. In contrast, Nvidia's overall gross margin in recent quarters has been around 75%, and even that figure is dragged down by the lower-margin and more commoditized consumer-grade 3D graphics products.

So, how is this possible? Well, the main reasons have to do with software — well-tested and highly reliable drivers that “just work” on Linux (unlike AMD, whose Linux drivers are notorious for being low quality and unstable), and highly optimized open source code, such as PyTorch, that is tweaked to run well on Nvidia GPUs.

Not only that, but CUDA, the programming framework that programmers use to write low-level code optimized for GPUs, is entirely owned by Nvidia and has become the de facto standard. If you want to hire a bunch of extremely talented programmers who know how to use GPUs to accelerate their work, and are willing to pay them $650,000/year, or whatever the going rate is for people with that particular skill set, then they will most likely "think" and work with CUDA.

In addition to software advantages, Nvidia's other major advantage is the so-called interconnect: essentially, the bandwidth that efficiently connects thousands of GPUs together so that they can be used jointly to train today's most advanced foundation models. In short, the key to efficient training is to keep all GPUs fully utilized at all times, rather than sitting idle while they wait for the next batch of data needed for the next step of training.

The bandwidth requirements are very high, far higher than the typical bandwidth needed for traditional data center applications. This interconnect cannot use traditional network equipment or optical fiber because they introduce too much latency and cannot provide the multi-terabyte per second bandwidth required to keep all the GPUs constantly busy.

Nvidia’s acquisition of Israeli company Mellanox for $6.9 billion in 2019 was a very smart decision, and it is this acquisition that provides them with industry-leading interconnect technology. Note that interconnect speed is more closely related to the training process (where the output of thousands of GPUs must be utilized simultaneously) than to the inference process (including COT inference), which only requires a small number of GPUs - all you need is enough VRAM to store the quantized (compressed) model weights of the trained model.
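To make the VRAM point concrete, here is a rough sketch of how much memory the weights alone occupy at different quantization levels. The 70-billion-parameter size is only an illustrative assumption, and the estimate deliberately ignores the KV cache, activations, and runtime overhead, all of which add to the real footprint.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory needed to hold model weights, in gigabytes (1e9 bytes).

    Ignores KV cache, activations, and framework overhead.
    """
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / 1e9


NUM_PARAMS = 70e9  # assumption: a 70B-parameter model, for illustration only

for bits in (16, 8, 4):
    gb = weight_memory_gb(NUM_PARAMS, bits)
    print(f"{bits:>2}-bit weights: ~{gb:.0f} GB")
```

Going from 16-bit to 4-bit weights cuts the footprint by a factor of four, which is why inference on a trained model can fit on a handful of GPUs while training still needs thousands of them working in lockstep.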

Arguably, these are the main components of Nvidia’s “moat” and the reason it has been able to maintain such high profit margins for so long (there’s also a “flywheel effect” whereby they aggressively reinvest their excess profits into massive amounts of R&D, which in turn helps them improve their technology at a faster rate than their competitors, so they always stay ahead in terms of raw performance).

But as pointed out earlier, all other things being equal, what customers really care about is often performance per dollar (including the upfront capital expenditure cost of the equipment and energy usage, i.e. performance per watt), and while Nvidia's GPUs are indeed the fastest, they are not the most cost-effective if measured purely in FLOPS.

But the problem is that other factors are not equal: AMD's drivers suck, popular AI software libraries don't run well on AMD GPUs, you can't find GPU experts who are really good at AMD GPUs outside of gaming (why should they bother, when there's more demand for CUDA experts in the market?), and you can't effectively connect thousands of GPUs together due to AMD's crappy interconnect technology. All of this means AMD is basically uncompetitive in the high-end data center space and doesn't seem to have very good prospects in the short term.

OK, sounds like Nvidia has a great future, right? Now you know why its stock is so highly valued! But are there any clouds on the horizon? Well, there are a few that I think warrant significant concern. Some of these issues have been lurking in the background for the past few years, but their impact has been minimal given the pace of growth, and they are now poised to become more important. Other issues have only emerged recently (like in the past two weeks) and could significantly change the trajectory of GPU demand growth in the near term.

Major threats

At a macro level, you can think about it like this: Nvidia has been operating in a very niche space for quite some time; they have very limited competitors, and those competitors are not very profitable or growing fast enough to be a real threat because they don't have enough capital to really put pressure on a market leader like Nvidia. The gaming market is large and growing, but it's not generating amazing profits or particularly amazing year-over-year growth rates.

Around 2016-2017, some large tech companies began to increase their hiring and spending on machine learning and artificial intelligence, but overall, this was never a really important project for them; it was more like "moonshot" R&D spending. But after the release of ChatGPT in 2022, the competition in the field of artificial intelligence really began. Although that was only a little over two years ago, it seems like a long time ago in terms of the speed of development.

Suddenly, big companies were ready to invest billions of dollars at an alarming rate. The number of researchers attending large research conferences like NeurIPS and ICML skyrocketed. Bright students who might previously have worked on financial derivatives switched to working on Transformers, and million-dollar-plus compensation packages for non-executive engineering positions (i.e., individual contributors who don't manage a team) became the norm at leading AI labs.

Changing the direction of a large cruise ship takes a while; even if you move very quickly and spend billions of dollars, it can take a year or more to build a brand new data center, order all the equipment (with longer lead times), and get everything set up and debugged. Even the smartest programmers take a long time to really get into the swing of things and get familiar with an existing code base and infrastructure.

But as you can imagine, the amount of money, manpower, and energy invested in this field is absolutely astronomical. And Nvidia has the biggest target of any player on its back, because it is the one capturing the lion's share of the profits today, not in some hypothetical future when AI dominates our lives.

Therefore, the most important conclusion is that "the market will always find a way". Competitors will find alternative and radically innovative new ways to build hardware, using completely new ideas to sidestep the obstacles that prop up Nvidia's moat.

Hardware-level threats

For example, Cerebras’ so-called “wafer-scale” AI training chips use an entire 300mm silicon wafer for an absolutely massive chip that contains orders of magnitude more transistors and cores on a single die (see their recent blog post to learn how they’ve solved the yield issues that have prevented this approach from being economically practical in the past).

To put this into context, if you compare Cerebras' latest WSE-3 chip to Nvidia's flagship datacenter GPU, the H100, the Cerebras chip has a total die area of 46,225 square millimeters, while the H100 is only 814 square millimeters (and the H100 is itself a huge chip by industry standards); that's a factor of roughly 57! Instead of having 132 "streaming multiprocessor" cores enabled on the chip like the H100, the Cerebras chip has about 900,000 cores (of course, each core is smaller and does less, but the number is still very large in comparison). For AI workloads specifically, the Cerebras chip has about 32 times the FLOPS of a single H100 chip. Since the H100 chip sells for nearly $40,000, it's no wonder that the WSE-3 chip is not cheap either.
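The ratios quoted above can be checked directly. The sketch below uses only the figures given in the text and takes them at face value; the last ratio (compute per unit of die area) is a derived illustration rather than a vendor-published number.

```python
# Figures as quoted in the text.
WSE3_DIE_MM2 = 46_225   # Cerebras WSE-3 total die area
H100_DIE_MM2 = 814      # Nvidia H100 die area
WSE3_CORES = 900_000    # approximate WSE-3 core count
H100_SMS = 132          # streaming multiprocessors enabled on the H100
FLOPS_RATIO = 32        # quoted WSE-3 vs. single H100 AI compute ratio

area_ratio = WSE3_DIE_MM2 / H100_DIE_MM2
core_ratio = WSE3_CORES / H100_SMS
# How much compute the WSE-3 delivers per unit of silicon, relative to the H100.
flops_per_area_ratio = FLOPS_RATIO / area_ratio

print(f"die area ratio:        {area_ratio:.1f}x")
print(f"core count ratio:      {core_ratio:.0f}x")
print(f"FLOPS per mm^2 ratio:  {flops_per_area_ratio:.2f}x")
```

On these numbers the wafer-scale chip is not denser in raw compute per square millimeter; its advantage comes from keeping everything on one piece of silicon and avoiding chip-to-chip interconnect.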

So what's the point? Rather than trying to take a similar approach to go head-to-head with Nvidia, or to match Mellanox's interconnect technology, Cerebras is taking a whole new approach to getting around the interconnect problem: when everything runs on the same super-large chip, the bandwidth issue between processors becomes less of a concern. You don't even need the same level of interconnect, because one giant chip can replace tons of H100s.

And the Cerebras chip performs very well in AI inference tasks. In fact, you can try it for free today using Meta's very well-known Llama-3.3-70B model. Its responses are basically instant, at about 1500 tokens per second. For comparison, judging by ChatGPT and Claude, anything above 30 tokens per second feels relatively fast to users, and even 10 tokens per second is fast enough that you can basically read the response as it is being generated.

Cerebras isn't the only such company; there are others, like Groq (not to be confused with the Grok line of models from Elon Musk's xAI). Groq takes another innovative approach to solving the same basic problem. Rather than trying to compete directly with Nvidia's CUDA software stack, they developed what they call "tensor processing units" (TPUs) that are specialized for the precise math required by deep learning models. Their chips are designed around the concept of "deterministic computing," meaning that unlike traditional GPUs, their chips perform operations in a completely predictable way every time.

This may sound like a minor technical detail, but it actually has a huge impact on both chip design and software development. Because the timing is completely fixed, Groq can optimize its chip in a way that traditional GPU architectures can't. As a result, for the past 6+ months, they have been demonstrating inference speeds of over 500 tokens per second for the Llama family of models and other open source models, far exceeding what can be achieved with traditional GPU setups. Like Cerebras, this product is available now and you can try it for free.

Using a Llama3 model with “speculative decoding”, Groq was able to generate 1,320 tokens per second, which is comparable to Cerebras and far exceeds what regular GPUs achieve. Now, you might ask what the point of reaching 1,000+ tokens per second is when users seem quite happy with the speed of ChatGPT, which runs far below that. In fact, it does matter. When you get instant feedback, you iterate faster and, as a human knowledge worker, you don’t lose focus. If you use the model programmatically through the API, it can enable entirely new categories of applications that require multi-stage reasoning (where the output of one stage is used as input to the prompt/reasoning of the next stage), or that require low-latency responses, such as content moderation, fraud detection, dynamic pricing, etc.
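
To make the multi-stage point concrete, here is a rough back-of-the-envelope sketch. The number of stages and the tokens generated per stage are hypothetical values chosen purely for illustration; the two speeds are the 30 and 1,320 tokens per second figures mentioned above. It ignores prompt processing and network time.

```python
def pipeline_latency_seconds(stages, tokens_per_stage, tokens_per_second):
    """Total generation time when each stage must finish before the next one starts."""
    return stages * tokens_per_stage / tokens_per_second


# Hypothetical workload: 5 chained reasoning stages of 500 generated tokens each.
STAGES, TOKENS_PER_STAGE = 5, 500

typical_chat_speed = pipeline_latency_seconds(STAGES, TOKENS_PER_STAGE, 30)
groq_speed = pipeline_latency_seconds(STAGES, TOKENS_PER_STAGE, 1320)

print(f"at 30 tokens/s:    {typical_chat_speed:.1f} s")
print(f"at 1,320 tokens/s: {groq_speed:.1f} s")
```

Under these assumptions, the same pipeline drops from well over a minute to under two seconds, which is the difference between a batch job and something you can put in an interactive loop.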

But more fundamentally, the faster you can respond to requests, the faster you can cycle, and the busier your hardware can be. While Groq's hardware is expensive, costing $2 million to $3 million a server, if demand is high enough to keep the hardware busy, the cost per completed request can be significantly lower.
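
The utilization argument can be sketched with simple amortization arithmetic. Everything below is an assumption for illustration (the lifetime, throughput, request size, and utilization levels are made up), apart from the server price, which is the midpoint of the $2 million to $3 million range above. Power, cooling, and staffing are ignored.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def cost_per_request(server_cost_usd, lifetime_years, utilization,
                     tokens_per_second, tokens_per_request):
    """Amortized hardware cost of one completed request."""
    busy_seconds = lifetime_years * SECONDS_PER_YEAR * utilization
    requests_served = busy_seconds * tokens_per_second / tokens_per_request
    return server_cost_usd / requests_served


# Hypothetical inputs; tokens_per_second is the aggregate throughput of the whole server.
common = dict(server_cost_usd=2_500_000, lifetime_years=4,
              tokens_per_second=1000, tokens_per_request=500)

mostly_idle = cost_per_request(utilization=0.2, **common)
mostly_busy = cost_per_request(utilization=0.8, **common)

print(f"20% utilized: ${mostly_idle:.4f} per request")
print(f"80% utilized: ${mostly_busy:.4f} per request")
```

The absolute numbers mean little, but the shape of the result is the point: the cost per request falls in direct proportion to how busy the hardware is kept.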

Much like Nvidia's CUDA, a big part of Groq's advantage comes from its proprietary software stack. They are able to take open source models that other companies like Meta, DeepSeek, and Mistral have developed and released for free, and break them down in special ways to make them run faster on specific hardware.

Like Cerebras, they made different technical decisions to optimize certain specific aspects of the process, which led to an entirely different way of doing things. In Groq’s case, they focus entirely on inference-time compute, not training: all of their special hardware and software delivers its huge speed and efficiency benefits only when doing inference on an already trained model.

But if the next big scaling law people are looking forward to is inference-time compute, and the biggest drawback of chain-of-thought (COT) models is the excessive latency caused by having to generate all the intermediate logic tokens before responding, then even a company that only does inference will pose a serious competitive threat in the next few years, as long as its speed and efficiency far exceed Nvidia's. At the very least, Cerebras and Groq can erode the lofty expectations for Nvidia's revenue growth over the next 2-3 years that are baked into the current stock valuation.

In addition to these particularly innovative but relatively unknown startup competitors, some of Nvidia’s largest customers themselves present serious competition, having been building custom chips specifically for AI training and inference workloads. The most notable of these is Google, which has been developing its own proprietary TPU since 2016. Interestingly, while Google briefly sold TPUs to external customers, it has been using all of its TPUs internally for the past few years, and it has already launched the sixth generation of TPU hardware.

Amazon is also developing its own custom chips, called Trainium 2 and Inferentia 2. Amazon is building data centers with billions of dollars of Nvidia GPUs, and at the same time, they are investing billions of dollars in other data centers that use these internal chips. They have a cluster that is coming online for Anthropic that has over 400,000 chips.

Amazon has been criticized for completely screwing up its internal AI model development, wasting a lot of internal computing resources on models that ultimately weren't competitive, but custom chips are another matter. Again, they don't necessarily need their chips to be better and faster than Nvidia's. They just need them to be good enough, but made at a break-even gross margin, not the ~90%+ gross margins Nvidia earns on its H100 business.

OpenAI also announced their plans to build custom chips, and they (along with Microsoft) are apparently the largest user of Nvidia datacenter hardware. As if that wasn’t enough, Microsoft themselves announced their own custom chips!

Apple, the world’s most valuable technology company, has been subverting expectations for years with its highly innovative and disruptive custom chip business, which now thoroughly beats Intel and AMD’s CPUs in terms of performance per watt, which is the most important factor in mobile (phone/tablet/laptop) applications. They have been producing their own internally designed GPUs and “neural processors” for years, although they have yet to truly prove the usefulness of these chips outside of their custom applications, such as the advanced software-based image processing used in the iPhone camera.

While Apple’s focus seems to be somewhat different from these other players, with its focus being mobile-first, consumer-oriented, and “edge computing,” if Apple ends up investing enough in its new contract with OpenAI to provide AI services to iPhone users, then you have to imagine they have teams working on how to make their own custom chips for inference/training (although given their secrecy, you’ll probably never know this firsthand!).

It’s no secret that Nvidia’s hyperscaler customer base exhibits a strong power-law distribution, with a handful of top customers accounting for the vast majority of high-margin revenues. How should we think about the future of this business when each of these VIP customers is building its own custom chips specifically for AI training and inference?

As you think about these questions, you should remember a very important fact: Nvidia is very much an IP-based company. They don’t make their own chips. The truly special secrets to making these incredible devices probably come more from TSMC, the actual fab, and ASML, which makes the special EUV lithography machines that TSMC uses to make these leading-edge process node chips. This is critical because TSMC will sell its most advanced chips to any customer willing to provide enough upfront investment and guarantee a certain volume. They don’t care if these chips are for Bitcoin mining ASICs, GPUs, TPUs, cell phone SoCs, etc.

Given how much Nvidia's veteran chip designers make, these tech giants can surely offer enough cash and stock to lure some of the best of them to jump ship. Once they have the team and resources, they could design innovative chips in 2-3 years (maybe not even 50% as advanced as the H100, but given Nvidia's gross margins, there is plenty of room to work with), and thanks to TSMC, they could turn those designs into actual silicon using the exact same process node technology as Nvidia.

Software threats

As if these looming hardware threats weren't bad enough, there have also been some developments in the software space over the past few years that, while slow to start, are now gaining momentum and could pose a serious threat to Nvidia's CUDA software dominance. The first is the terrible Linux drivers for AMD GPUs. Remember when we discussed how AMD unwisely allowed these drivers to be so terrible for years while sitting back and losing a lot of money?

Interestingly, notorious hacker George Hotz (famous for jailbreaking the original iPhone as a teenager and currently CEO of self-driving startup Comma.ai and AI computer company Tiny Corp, which also developed the open source TinyGrad AI software framework) recently announced that he was tired of dealing with AMD's poor drivers and was eager to be able to use lower-cost AMD GPUs in his TinyBox AI computer (there are multiple models, some of which use Nvidia GPUs and others use AMD GPUs).

In fact, he made his own custom driver and software stack for AMD GPUs without AMD’s help; on January 15, 2025, he tweeted from the company’s X account: “We are just one step away from a fully independent stack on AMD: the RDNA3 assembler. We have our own drivers, runtime, libraries and simulator. (All in about 12,000 lines!)” Given his track record and skills, they will likely have everything done in the next few months, which will open up many exciting possibilities for using AMD GPUs in a variety of applications where companies currently feel compelled to pay for Nvidia GPUs.

OK, that's just one driver from AMD, and it's not done yet. What else is there? Well, there are other areas on the software side that have a bigger impact. First, there is now a joint effort by many large tech companies and the open source software community to develop a more general AI software framework, of which CUDA is just one of many "compile targets."

That is, you write your software using higher-level abstractions, and the system itself automatically converts these high-level constructs into super-optimized low-level code that runs extremely well on CUDA, but because it's done at this higher-level abstraction layer, it can easily be compiled down to low-level code that runs well on many other GPUs and TPUs from a variety of vendors, such as the large number of custom chips being developed by major tech companies.

The most notable examples of these frameworks are MLX (primarily sponsored by Apple), Triton (primarily sponsored by OpenAI), and JAX (developed by Google). MLX is particularly interesting because it provides a PyTorch-like API that runs efficiently on Apple Silicon, demonstrating how these abstraction layers can enable AI workloads to run on completely different architectures. Meanwhile, Triton is growing in popularity because it allows developers to write high-performance code that can be compiled to run on a variety of hardware targets without having to understand the low-level details of each platform.

These frameworks allow developers to write code using powerful abstractions and then automatically compile it for a wide range of platforms - doesn't that sound more efficient? This approach provides greater flexibility when it comes to actually running the code.
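
The "many compile targets" idea can be illustrated with a deliberately tiny toy. This is not how Triton, JAX, or MLX work internally; the `ElementwiseKernel` class and both lowering functions are hypothetical names invented for this sketch. It only shows the shape of the approach: one hardware-agnostic description, several backend-specific code generators.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ElementwiseKernel:
    """Hardware-agnostic description of out[i] = expr(x[i], y[i])."""
    name: str
    expr: str  # C-style expression over the scalars `x` and `y`


def lower_to_cuda(k):
    """Emit CUDA source: one GPU thread per element."""
    return (
        f"__global__ void {k.name}(const float* xs, const float* ys, float* out, int n) {{\n"
        f"    int i = blockIdx.x * blockDim.x + threadIdx.x;\n"
        f"    if (i < n) {{ float x = xs[i], y = ys[i]; out[i] = {k.expr}; }}\n"
        f"}}\n"
    )


def lower_to_c_loop(k):
    """Emit plain C source: a sequential loop over all elements."""
    return (
        f"void {k.name}(const float* xs, const float* ys, float* out, int n) {{\n"
        f"    for (int i = 0; i < n; ++i) {{ float x = xs[i], y = ys[i]; out[i] = {k.expr}; }}\n"
        f"}}\n"
    )


# CUDA is just one entry in the table of targets.
BACKENDS = {"cuda": lower_to_cuda, "cpu": lower_to_c_loop}

kernel = ElementwiseKernel(name="fused_mul_add", expr="x * y + 1.0f")
sources = {target: lower(kernel) for target, lower in BACKENDS.items()}

for target, source in sources.items():
    print(f"// --- {target} ---\n{source}")
```

Supporting a new chip in this toy means adding one more lowering function to `BACKENDS`; the kernel description itself never changes, which is exactly why such abstraction layers weaken lock-in to any single vendor.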

In the 1980s, all of the most popular, best-selling software was written in hand-tuned assembly language. For example, the PKZIP compression utility was hand-crafted to maximize speed, to the point that a version of the code written in the standard C programming language and compiled with the best optimizing compilers of the time would run perhaps half as fast as the hand-tuned assembly code. The same was true for other popular software packages, such as WordStar, VisiCalc, and others.

Compilers became more powerful over time. Meanwhile, handwritten assembly generally had to be thrown out and rewritten whenever the CPU architecture changed (e.g., when Intel moved from the 486 to the Pentium), and only the smartest programmers were able to do that job (just as CUDA experts have an edge in the job market over "normal" software developers). Eventually, things converged, and the speed advantage of handwritten assembly was greatly outweighed by the flexibility of writing code in a higher-level language like C or C++ and relying on the compiler to make it run optimally on a given CPU.

Today, few people write new code in assembly. I believe a similar shift will eventually happen to AI training and inference code, for much the same reasons: computers are good at optimization, and flexibility and speed of development are increasingly important factors, especially if it also saves a lot of money on hardware because you don’t have to keep paying the “CUDA tax” that gives Nvidia its 90%+ margins.

Another area where big changes are likely is that CUDA itself may end up being more of a high-level abstraction: a “specification language” similar to Verilog (used as an industry standard for describing chip layouts) that skilled developers can use to describe high-level algorithms involving massive parallelism (because they are already familiar with it, it is well-structured, it is a general-purpose language, etc.). Unlike the usual practice, however, this code would not be compiled for Nvidia GPUs; it would instead be fed as source code into an LLM, which can convert it into whatever low-level code is understood by the new Cerebras chip, the new Amazon Trainium2, or the new Google TPUv6, etc. This is not as far away as you might think; with OpenAI's latest O3 model, it may already be within reach, and it will surely be generally possible within a year or two.

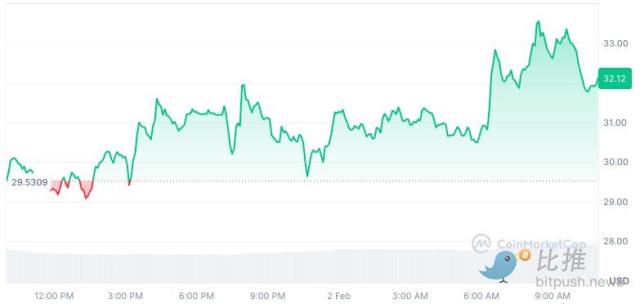

Theoretical Threat

Perhaps the most shocking development happened in the last few weeks. This news shook the AI community to its core, and although it was not mentioned in the mainstream media, it became a hot topic among intellectuals on Twitter: a Chinese startup called DeepSeek released two new models that have performance levels comparable to the best models from OpenAI and Anthropic (surpassing Meta's Llama3 models and other smaller open source models like Mistral). These models are named DeepSeek-V3 (basically their answer to GPT-4o and Claude 3.5 Sonnet) and DeepSeek-R1 (basically their answer to OpenAI's O1 model).

Why is all this so shocking? First of all, DeepSeek is a small company with reportedly fewer than 200 employees. They are said to have started out as a quantitative trading hedge fund similar to Two Sigma or RenTec, but after China stepped up regulation of that field, they used their math and engineering expertise to move into AI research. What is certain is that they released two very detailed technical reports, one for DeepSeek-V3 and one for DeepSeek-R1.

These are highly technical reports, and if you don't know much linear algebra, they might be hard to follow. But what you should really try is to download the DeepSeek app for free from the App Store, install it, and log in with your Google account, then give it a try (you can also install it on Android), or just try it in a browser on your desktop. Make sure to select the "DeepThink" option to enable chain of thought (the R1 model), and ask it to explain parts of the technical reports in simple language.

This also tells you something important:

First of all, this model is absolutely legit. There are a lot of fakes in AI benchmarks, which are often manipulated to make models perform well on the benchmark but perform poorly on real-world tests. Google is undoubtedly the biggest culprit in this regard, always bragging about how amazing their LLMs are, but in fact, these models perform terribly in real-world tests and cannot reliably complete even the simplest tasks, let alone challenging coding tasks. DeepSeek's model is different. Its responses are coherent and powerful, and it is completely on the same level as OpenAI and Anthropic's models.

Secondly, DeepSeek has made significant progress not only in model quality, but more importantly in model training and inference efficiency. By being very close to the hardware, and by combining some unique and very clever optimizations together, DeepSeek is able to use GPUs to train these incredible models in a way that is significantly more efficient. According to some measurements, DeepSeek is about 45 times more efficient than other cutting-edge models.

DeepSeek claims that the total cost of training DeepSeek-V3 was just over $5 million, which is nothing by the standards of companies like OpenAI and Anthropic, as these companies have reached a level of over $100 million in single model training costs as early as 2024.

How is this possible? How is it possible that this small Chinese company is completely outsmarting all the smartest people in our leading AI labs that have 100x more resources, headcount, salaries, capital, GPUs, etc? Shouldn’t China be crippled by Biden’s restrictions on GPU exports? OK, the details are pretty technical, but we can at least describe it in general. Maybe it turns out that DeepSeek’s relatively weak GPU processing power is precisely the key factor that boosts its creativity and ingenuity, because “necessity is the mother of invention.”

One major innovation is their advanced mixed-precision training framework, which allows them to use 8-bit floating point numbers (FP8) throughout the training process. Most Western AI labs use "full precision" 32-bit numbers for training (this basically specifies the number of possible gradations when describing the output of an artificial neuron; the 8 bits in FP8 can store a wider range of numbers than you might think: it is not limited to 256 equally spaced values as with regular integers, but uses clever mathematical tricks to store both very small and very large numbers, although naturally with less precision than 32 bits). The main trade-off is that while FP32 can store numbers with amazing precision over a wide range, FP8 sacrifices some precision to save memory and improve performance, while still maintaining enough precision for many AI workloads.
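
As a rough illustration of how 8 bits can cover such a wide range, here is how one common FP8 layout, E4M3 (1 sign bit, 4 exponent bits, 3 mantissa bits), encodes a normal number. The choice of E4M3 is an assumption made for illustration; the text above does not say which FP8 variant is meant.

$$
x = (-1)^{s} \cdot 2^{\,e-7} \cdot \left(1 + \frac{m}{8}\right), \qquad s \in \{0,1\},\quad e \in \{1,\dots,15\},\quad m \in \{0,\dots,7\}
$$

Because the exponent $e$ scales the value, the spacing between neighboring numbers grows with their magnitude. The smallest normal value is $2^{-6} \approx 0.016$ and the largest finite value is $448$ (in the common variant that reserves the very top code for NaN). The price is precision: with only 3 mantissa bits, the worst-case relative rounding error is about $2^{-4} = 6.25\%$, versus roughly $2^{-24}$ for FP32.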

DeepSeek solved this problem by developing a clever system that breaks the numbers into small tiles for activations and blocks for weights, and strategically uses high-precision computation at key points in the network. Unlike other labs that train at high precision first and then compress (losing some quality in the process), DeepSeek's FP8-native approach means they can save a lot of memory without affecting performance. When you're training with thousands of GPUs, the memory requirements per GPU are drastically reduced, which means you need a lot fewer GPUs overall.
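
Why does splitting the numbers into small groups help? One plausible reason, sketched below, is that each group can get its own scaling factor, so a single huge outlier no longer forces every small value to round to zero. This is a simplified illustration under stated assumptions, not DeepSeek's actual implementation: it assumes an E4M3-style FP8 format, simulates the rounding in pure Python, and uses made-up data and a made-up block size of 4.

```python
import math

FP8_MAX = 448.0  # largest finite value in the assumed E4M3-style format


def to_fp8(x):
    """Round x to the nearest E4M3-style value (3 mantissa bits, minimum exponent -6)."""
    if x == 0.0:
        return 0.0
    mag = min(abs(x), FP8_MAX)                 # saturate instead of overflowing
    exp = max(math.frexp(mag)[1] - 1, -6)      # floor(log2(mag)), clamped for subnormals
    step = 2.0 ** (exp - 3)                    # spacing of representable values near mag
    return math.copysign(min(round(mag / step) * step, FP8_MAX), x)


def quantize_blockwise(values, block_size):
    """Scale each block so its largest magnitude maps to FP8_MAX, round, then undo the scale."""
    restored = []
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        amax = max(abs(v) for v in block)
        scale = FP8_MAX / amax if amax > 0 else 1.0
        restored.extend(to_fp8(v * scale) / scale for v in block)
    return restored


def max_relative_error(original, restored):
    return max(abs(o - r) / abs(o) for o, r in zip(original, restored) if o != 0)


# Made-up data: four tiny values next to four huge ones.
values = [0.001, 0.002, 0.003, 0.004, 5000.0, 4000.0, 3000.0, 2000.0]

one_scale = quantize_blockwise(values, block_size=len(values))  # single global scale
per_block = quantize_blockwise(values, block_size=4)            # one scale per block of 4

print("global scale, worst relative error:", max_relative_error(values, one_scale))
print("per-block scale, worst relative error:", max_relative_error(values, per_block))
```

With a single global scale, the tiny values are crushed to zero (a 100% error), while per-block scaling keeps every value within the few-percent rounding error that 3 mantissa bits allow.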

Another major breakthrough is their multi-token prediction system. Most Transformer-based LLMs perform inference by predicting the next token, one token at a time.

DeepSeek figured out how to predict multiple tokens while maintaining the quality of single-token prediction. Their method achieves about 85-90% accuracy on these extra token predictions, effectively doubling inference speed without sacrificing much quality. The clever thing is that they keep the full causal chain of predictions, so the model isn’t just guessing, but making structured, contextual predictions.
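
The "effectively doubling" claim follows from simple expected-value arithmetic, sketched below. The model is an assumption for illustration: each extra prediction is accepted with a fixed, independent probability, it only counts if every prediction before it was also accepted (the causal chain), and the cost of checking the predictions is ignored.

```python
def expected_tokens_per_step(acceptance_rate, extra_tokens=1):
    """Expected tokens produced per forward pass: 1 guaranteed token plus accepted extras."""
    return 1.0 + sum(acceptance_rate ** k for k in range(1, extra_tokens + 1))


low = expected_tokens_per_step(0.85)
high = expected_tokens_per_step(0.90)

print(f"85% acceptance: {low:.2f} tokens per step")
print(f"90% acceptance: {high:.2f} tokens per step")
```

With one extra prediction accepted 85-90% of the time, each step yields 1.85 to 1.9 tokens on average, which is where "roughly double" comes from.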

One of their most innovative developments is what they call Multi-Head Latent Attention (MLA). This is a breakthrough in how they handle what are called the key-value (KV) indices, which are basically how individual tokens are represented in the attention mechanism in the Transformer architecture. While this gets a bit too technical, it’s fair to say that these KV indices are one of the main uses of VRAM during training and inference, and are part of the reason why you need to use thousands of GPUs simultaneously to train these models: each GPU has a maximum of 96GB of VRAM, and these indices eat up that memory.

Their MLA system found a way to store compressed versions of these indices that use less memory while still capturing the essential information. The best part is that this compression is built directly into the way the model learns: it's not some separate step they need to do, but part of the end-to-end training pipeline. This means that the entire mechanism is "differentiable" and can be trained directly with standard optimizers. It works because the underlying data representations that these models end up finding lie far below the so-called "ambient dimension." Therefore, it is wasteful to store the full KV indices, even though everyone else basically does this.

Not only do you avoid wasting a lot of space by storing far more numbers than you actually need, which hugely improves the training memory footprint and efficiency (again, greatly reducing the number of GPUs required to train world-class models), but it can actually improve model quality, because it acts as a "regularizer" that forces the model to focus on what is really important instead of wasting capacity on fitting noise in the training data. So not only do you save a lot of memory, but the performance of your model may even be better. At the very least, you don't take a severe performance hit in exchange for the big memory savings, which is usually the trade-off you face in AI training.
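
To get a feel for the memory at stake, here is a rough sizing sketch. The model shape below (layer count, head count, head size, latent size, context length) is hypothetical and chosen only for illustration, as is the assumption of 2 bytes per stored value; none of these numbers come from the text above.

```python
def kv_cache_bytes(context_tokens, layers, values_per_token_per_layer, bytes_per_value=2):
    """Memory needed to keep the attention key-value state for one sequence."""
    return context_tokens * layers * values_per_token_per_layer * bytes_per_value


# Hypothetical model shape, for illustration only.
LAYERS, HEADS, HEAD_DIM, LATENT_DIM, CONTEXT = 60, 128, 128, 576, 32_000

# Standard attention: one key vector and one value vector per head, per token, per layer.
full = kv_cache_bytes(CONTEXT, LAYERS, 2 * HEADS * HEAD_DIM)
# Compressed variant: a single small latent vector per token, per layer.
compressed = kv_cache_bytes(CONTEXT, LAYERS, LATENT_DIM)

print(f"full key-value state: {full / 2**30:.1f} GiB")
print(f"compressed latent:    {compressed / 2**30:.1f} GiB")
print(f"reduction:            {full / compressed:.0f}x")
```

Under these made-up dimensions, the uncompressed state for a single long sequence would not even fit on one 96GB GPU, while the compressed version takes a couple of gigabytes.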

They also made significant progress in GPU communication efficiency through the DualPipe algorithm and a custom communication kernel. The system intelligently overlaps computation and communication, carefully balancing GPU resources between tasks. They only need about 20 of the GPU's streaming multiprocessors (SMs) for communication, and the rest are used for computation. The result is much higher GPU utilization than a typical training setup.

Another very smart thing they did was to use what is called a Mixture-of-Experts (MoE) Transformer architecture, but with a key innovation around load balancing. As you may know, the size or capacity of an AI model is often measured in terms of the number of parameters the model contains. A parameter is just a number that stores some property of the model; for example, the “weight” or importance of a particular artificial neuron relative to another neuron, or the importance of a particular token based on its context (in an “attention mechanism”), etc.

Meta’s latest Llama3 models come in several sizes, such as a 1B parameter version (smallest), a 70B parameter model (most commonly used), and even a large model with 405B parameters. This largest model has limited practicality for most users, as your computer would need to be equipped with a GPU worth tens of thousands of dollars to run inference at an acceptable speed, at least if you deploy the original full-precision version. As a result, most of the real-world use and excitement about these open source models is at the 8B parameter or highly quantized 70B parameter level, because this is what the consumer-grade Nvidia 4090 GPU can accommodate, which you can buy now for less than $1000.

So, what does all this mean? In a sense, the number and precision of parameters tells you how much raw information or data is stored inside the model. Note that I am not talking about reasoning ability, or the "IQ" of the model: it turns out that even models with a small number of parameters can show excellent cognitive abilities in solving complex logic problems, proving theorems in plane geometry, SAT math problems, and so on.

But those small models won’t necessarily be able to tell you every aspect of every plot twist in every Stendhal novel, whereas a really large model potentially could. The “cost” of this extreme level of knowledge is that the models become very unwieldy to train and to run inference on, because in order to perform inference, you always need to store every one of the 405B parameters (or whatever the parameter count is) in the GPU’s VRAM at the same time.

The advantage of the MoE approach is that you can decompose a large model into a collection of smaller models, each of which has different, non-overlapping (or at least not fully overlapping) knowledge. Then, depending on the nature of the inference request, you can intelligently route the request to the "expert" models in that collection that are best able to answer the question or solve the task. DeepSeek's innovation is a load balancing strategy they call "auxiliary-loss-free," which keeps the experts efficiently utilized without the performance degradation that load balancing usually brings.

You can think of it as a committee of experts who have their own areas of expertise: one might be a legal expert, another might be a computer science expert, and another might be a business strategy expert. So if someone asks you a linear algebra question, you don't give it to the legal expert. Of course, this is just a very rough analogy, it doesn't really work like that.
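
The routing and load balancing described above can be sketched in a few lines. This is a toy illustration of one way to balance load without an auxiliary loss, not DeepSeek's code: each expert gets a bias that is added to its score only when choosing experts, and the bias is nudged down for overloaded experts and up for underloaded ones. The scores, the step size, and the function names are all hypothetical.

```python
def route(scores, bias, top_k):
    """Select top_k experts by score + bias; weight the chosen experts by raw scores only."""
    ranked = sorted(range(len(scores)), key=lambda e: scores[e] + bias[e], reverse=True)
    chosen = ranked[:top_k]
    total = sum(scores[e] for e in chosen)
    return {e: scores[e] / total for e in chosen}


def update_bias(bias, load, step):
    """Lower the bias of overloaded experts and raise it for underloaded ones."""
    mean_load = sum(load) / len(load)
    new_bias = []
    for b, expert_load in zip(bias, load):
        if expert_load > mean_load:
            b -= step
        elif expert_load < mean_load:
            b += step
        new_bias.append(b)
    return new_bias


# Hypothetical router scores for 8 tokens over 4 experts; expert 0 is always the favorite.
tokens = [
    [0.40, 0.30, 0.20, 0.10], [0.40, 0.20, 0.30, 0.10], [0.40, 0.10, 0.20, 0.30],
    [0.34, 0.33, 0.23, 0.10], [0.34, 0.23, 0.33, 0.10], [0.34, 0.10, 0.23, 0.33],
    [0.70, 0.10, 0.10, 0.10], [0.70, 0.10, 0.10, 0.10],
]

bias = [0.0] * 4
history = []  # tokens handled by each expert, one entry per round
for _ in range(3):
    load = [0] * 4
    for scores in tokens:
        for expert in route(scores, bias, top_k=1):
            load[expert] += 1
    history.append(load)
    bias = update_bias(bias, load, step=0.03)

print(history)
```

In this toy run, expert 0 starts out handling all 8 tokens, and after two bias updates the load is spread evenly at 2 tokens per expert, while the tokens that strongly prefer expert 0 still go there. Because the bias only affects selection and not the output weights, balancing does not have to be traded off against the training objective.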

The real advantage of this approach is that it allows the model to contain a lot of knowledge without being very unwieldy, because even if the total number of parameters across all experts is high, only a small fraction of them are "active" at any given time, which means you only need to store a small subset of the weights in VRAM for inference. Take DeepSeek-V3, for example: it is an absolutely massive MoE model with 671B parameters, much larger than even the largest Llama3 model, but only 37B of them are active at any given time, enough to fit in the VRAM of two consumer-grade Nvidia 4090 GPUs (which cost less than $2000 in total), rather than one or more H100 GPUs, which cost about $40,000 each.
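
The arithmetic behind that comparison is easy to check. The sketch below takes the paragraph's premise at face value and counts only weight storage, assuming 1 byte per parameter (FP8) and 24 GB of VRAM per 4090; it ignores activations, the attention cache, and wherever the currently inactive experts are kept.

```python
def weight_memory_gb(num_params, bytes_per_param):
    """Storage for the weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


RTX_4090_VRAM_GB = 24  # per card

all_experts = weight_memory_gb(671e9, bytes_per_param=1)    # every parameter of the model
active_only = weight_memory_gb(37e9, bytes_per_param=1)     # parameters active at one time
two_4090s = 2 * RTX_4090_VRAM_GB

print(f"all parameters:    {all_experts:.0f} GB")
print(f"active parameters: {active_only:.0f} GB (two 4090s offer {two_4090s} GB)")
```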

It is rumored that both ChatGPT and Claude use an MoE architecture, and some leaks suggest that GPT-4 had a total of 1.8 trillion parameters, split across 8 models of 220 billion parameters each. Although this is much easier than fitting all 1.8 trillion parameters into VRAM, the memory required is still so large that multiple H100-class GPUs are needed just to run the model.

In addition to the above, the technical paper mentions several other key optimizations. These include its extremely memory-efficient training framework, which avoids tensor parallelism, recomputes certain operations during backpropagation instead of storing them, and shares parameters between the main model and auxiliary prediction modules. The sum of all these innovations, when layered together, leads to the ~45x efficiency improvement numbers that are circulating online, and I am fully willing to believe that these numbers are correct.

DeepSeek’s API pricing is a good example: despite nearly best-in-class model performance, inference requests through its API cost 95% less than comparable models from OpenAI and Anthropic. In a sense, it’s a bit like comparing Nvidia’s GPUs to the new custom chips from competitors: even if they’re not quite as good, they’re so much more cost-effective that the trade can work out, as long as you can qualify the performance level and prove that it’s good enough for your requirements, and as long as the API availability and latency are good enough (so far, people have been surprised by how well DeepSeek’s infrastructure has held up, despite the incredible surge in demand driven by the performance of these new models).

But unlike the case of Nvidia, where the cost difference is due to the 90%+ monopoly gross margins they get on their datacenter products, the cost difference of the DeepSeek API relative to the OpenAI and Anthropic APIs is probably just due to the fact that they are nearly 50x more computationally efficient (and probably even much more than that on the inference side - on the training side, they are ~45x more efficient). In fact, it’s not clear whether OpenAI and Anthropic are making a lot of money from their API services - they are probably more focused on revenue growth and collecting more data by analyzing all the API requests they receive.

Before I go on, I must point out that a lot of people have speculated that DeepSeek is lying about the number of GPUs and the GPU hours spent training these models: the theory is that they actually have far more H100s than they admit, but because of export restrictions on these cards, they don't want to get themselves in trouble or hurt their chances of getting more of them. While this is certainly possible, I think it's more likely that they are telling the truth and that they have achieved these incredible results simply by showing extreme ingenuity and creativity in their training and inference methods. They explained how they did it, and I suspect it's only a matter of time before their results are widely replicated and confirmed by other researchers in other labs.

A model that actually thinks

The newer R1 model and technical report are probably even more shocking, as they beat Anthropic to chain-of-thought reasoning, and now they are basically the only ones besides OpenAI making this technology work at scale. But note that OpenAI only released the O1 preview model in mid-September 2024. That was only about 4 months ago! One thing you have to keep in mind is that OpenAI is very secretive about how these models actually work at a low level, and will not disclose the actual model weights to anyone except partners such as Microsoft who have signed strict non-disclosure agreements. DeepSeek's models are completely different: they are fully open source and permissively licensed. They released very detailed technical reports explaining how these models work, and provided code that anyone can look at and try to replicate.

With R1, DeepSeek has essentially cracked a difficult problem in AI: getting models to reason incrementally without relying on large supervised datasets. Their DeepSeek-R1-Zero experiments show this: using pure reinforcement learning with a carefully designed reward function, they managed to get the model to develop complex reasoning capabilities completely autonomously. This wasn’t just about problem solving — the model organically learned to generate long chains of thought, self-validate its work, and allocate more computing time to more difficult problems.

The technical breakthrough here is their novel approach to reward modeling. Rather than using a complex neural reward model, which can lead to "reward hacking" (i.e., the model boosts rewards in spurious ways that don't actually improve the model's true performance), they developed a clever rule-based system that combines an accuracy reward (which validates the final answer) with a format reward (which encourages structured thinking). This simpler approach has proven to be more powerful and scalable than the process-based reward models that others have tried.
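
A rule-based reward of this kind is simple enough to sketch in a few lines. The version below is a minimal illustration under stated assumptions, not DeepSeek's actual reward code: it assumes the model is asked to put its reasoning inside `<think>` tags and its final answer inside `<answer>` tags, checks answers by exact string match, and weights the two rewards equally.

```python
import re

# Assumed output template: reasoning inside <think>, final answer inside <answer>.
TEMPLATE = re.compile(r"<think>.+?</think>\s*<answer>.+?</answer>", re.DOTALL)
ANSWER = re.compile(r"<answer>(.+?)</answer>", re.DOTALL)


def format_reward(completion):
    """1.0 if the whole completion follows the think-then-answer template, else 0.0."""
    return 1.0 if TEMPLATE.fullmatch(completion.strip()) else 0.0


def accuracy_reward(completion, reference):
    """1.0 if the extracted final answer exactly matches the reference, else 0.0."""
    found = ANSWER.search(completion)
    return 1.0 if found and found.group(1).strip() == reference.strip() else 0.0


def total_reward(completion, reference):
    # Equal weighting of the two rewards is an assumption made for this sketch.
    return accuracy_reward(completion, reference) + format_reward(completion)


good = "<think>2 + 2 = 4</think> <answer>4</answer>"
wrong = "<think>2 + 2 = 5</think> <answer>5</answer>"
unformatted = "The answer is 4"

for completion in (good, wrong, unformatted):
    print(total_reward(completion, reference="4"), completion)
```

Because both checks are deterministic rules rather than a learned model, there is nothing for the policy to "fool": the only way to score higher is to produce a well-formed response with a correct final answer.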

Particularly fascinating was that during training, they observed so-called "aha moments," where the model spontaneously learned to revise its thought process mid-stream when faced with uncertainty. This emergent behavior was not pre-programmed, but rather emerged naturally from the model's interaction with the reinforcement learning environment. The model would actually stop, flag potential problems in its reasoning, and start over with a different approach, all without being explicitly trained to do so.

The full R1 model builds on these insights by introducing what they call "cold start" data (a small set of high-quality examples) before applying their reinforcement learning techniques. They also address a big problem in reasoning models: language consistency. Previous attempts at chain-of-thought reasoning often resulted in models mixing multiple languages or producing incoherent output. DeepSeek solves this problem by cleverly rewarding language consistency during RL training, trading a small performance penalty for more readable and consistent output.

The results are incredible: on AIME 2024 (one of the most challenging high school math competitions), R1 achieved 79.8% accuracy, on par with OpenAI’s O1 model. On MATH-500, it achieved 97.3%, and it reached the 96.3rd percentile on Codeforces programming competitions. But perhaps most impressive is that they’ve managed to distill these capabilities into smaller models: their 14B parameter version outperforms many models that are several times larger, showing that reasoning power is not just about the raw number of parameters, but about how you train the model to process information.

Aftermath

The recent gossip circulating on Twitter and Blind (a corporate rumor site) is that these models are totally unexpected by Meta, and they outperform even the new Llama4 model that is still being trained. Apparently the Llama project inside Meta has caught the attention of the top tech executives, so they have about 13 people working on Llama, a