Author: Salad Dressing

Food and sex are basic human needs. Most great business models have risen on the back of them, and AIGC is no exception.

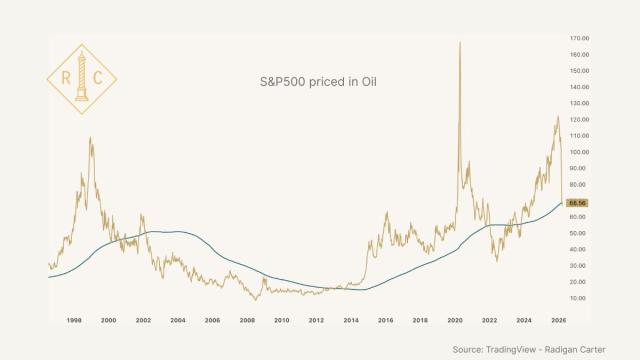

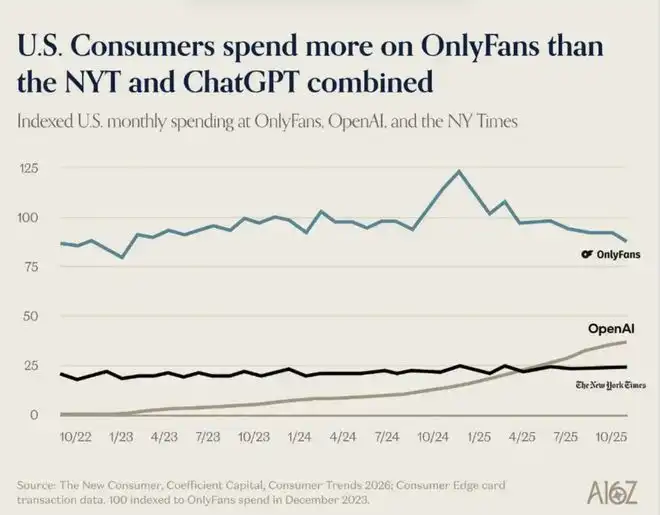

A16Z, a top-tier Silicon Valley VC firm, released a report on AI consumer trends. The report, which should have been a serious discussion of AI productivity, contained a line chart that was both laughable and disheartening: last year, US users spent less on OpenAI and The New York Times combined than they did on OnlyFans.

Chart from the A16Z report

Ironic, but true: productivity loses to sexual tension.

So how much money can you make using AI to produce borderline content?

Image source: Giphy

Productivity loses to sexual tension

The people who were first to build AI virtual models know this best.

Around the end of 2022, as tools like Midjourney and Stable Diffusion began to generate images reliably, some people realized these tools could produce strikingly realistic human faces in bulk at virtually no cost. They used AI to generate virtual female avatars, each with a name, a persona, and a few carefully staged "daily life" posts, and ran them on Instagram and TikTok as if they were real people. Intimate replies in private messages were handled by ChatGPT, providing a so-called "girlfriend experience." The whole process was almost fully automated, and the operators behind it never had to show their own faces.

Image source: Giphy

This approach worked best on Fanvue, an OnlyFans competitor that takes a more lenient attitude toward AI content. According to the company's own disclosure, by November 2023 AI virtual models already accounted for 15% of the platform's total revenue. By 2024, top AI virtual models were generally earning over $20,000 a month, and some well-established accounts were making over $200,000 a year. In 2025 the numbers kept climbing: according to a 2025 interview with Fanvue CEO Will Monange, total revenue from AI creators on the platform grew by more than 60% year over year, and virtual models had become its fastest-growing content category.

OnlyFans officially prohibits AI content, but some people keep exploiting loopholes. Reddit users regularly trade tips on using AI to make money on OnlyFans with borderline content. A common method is to have a real woman pass the platform's facial verification, then use her photos to train an AI model that mass-produces content.

Image source: Giphy

However strict a platform is, it cannot keep pace with the technology. AI-generated images are now so realistic that even experienced users struggle to tell them from real ones. A few days ago, I saw a video on Xiaohongshu of a handsome guy sitting in a car. If I hadn't opened the comments and seen the pinned one, "This AI has such good aesthetic sense," I would never have realized the handsome guy was AI-generated.

Beyond adult content, another group of people has made money with AI in a completely different direction: children's picture books.

Zhao Lei (pseudonym) was one of the earliest adopters. At the end of 2022, he had just been laid off from a product management job at a large company and was at home looking for a new path. Midjourney was just beginning to produce illustrations consistently. Looking at the watercolor-style animals it generated, a thought popped into his head: isn't this just picture book illustration? He spent two weeks studying Amazon KDP, and the logic was extremely simple: write the story with ChatGPT, generate the illustrations with Midjourney, lay it out, upload it, and wait for the money to come in. "It was really lucrative back then," he said. "With a few books out, I could earn over ten thousand yuan a month in passive income."

But the window didn't stay open long. In the second half of 2023, AI picture books on KDP exploded, and nearly 90,000 similar tutorials appeared on TikTok, all with titles in the same vein: "EASY AI Money," "Earn 100,000 a month drawing children's pictures."

Everyone rushed into the same market, and sales were quickly diluted. Quality problems surfaced too, with AI-generated picture books featuring dinosaurs with enormous forelegs and children with the wrong number of fingers. Major platforms began requiring authors to declare whether AI was used when uploading books, which essentially ended the boom. "Making money from AI-generated picture books is very difficult now," Zhao Lei said.

In the end, he and many others who got into AI early arrived at the same destination: selling courses (something the recently viral "Lobster" craze has taken to the extreme).

Image source: Giphy

Zhao Lei sells "AI Picture Books: From Scratch to Publication," while the borderline-content crowd sells "AI virtual model building tutorials." The buyers are the next wave of people who have only just heard about these schemes and still think the window is open.

Two tracks, two kinds of content, different packaging, but the same product: the illusion that "I, too, can be the pig that flies when it stands in the right wind."

Aesthetics and "old skills" are holding a lot of people back

So what is the barrier to entry for these seemingly lucrative businesses?

A friend who works as a UX designer at an internet company once gave me her answer: regional network restrictions and subscription fees. When Midjourney first came out, she wrote a user guide priced at 99 yuan; it is still listed on Xiaohongshu (Little Red Book), earning her passive income. From the standpoint of using the tools, she's right: that barrier is indeed falling fast.

But as someone whose drawing skills stop at stick figures, and who keeps producing ugly images on every AIGC tool I try, I have to add a hurdle she didn't mention: aesthetics.

Image source: Giphy

People used to joke that AI couldn't replace designers because clients never know what they want. I took it as just a joke until I used these tools myself and found that it described me exactly.

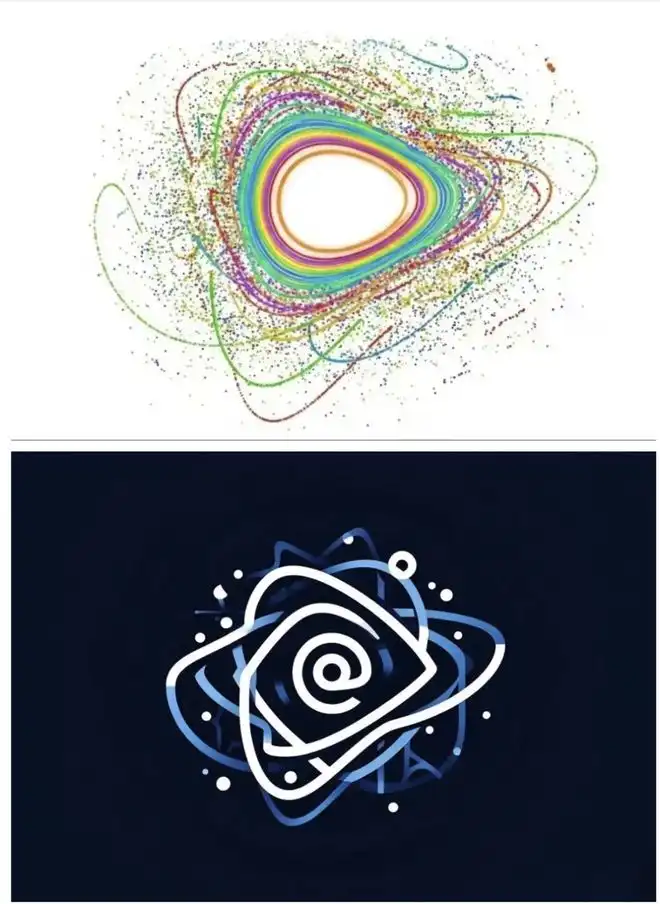

Last year, I started a media account and wanted to base its logo on the physics concept of "integrable islands." To me, an integrable island stands for the things worth settling on amid a chaotic flow of information. I found a reference image for the concept, opened the tool, threw in the image, wrote a pile of descriptive prompts, and started generating. The result was a complete mess. I revised it seven or eight times, and each version was just a different kind of mess. I knew the feeling I wanted, but I had no idea how to translate that feeling into instructions. In the end, I asked a friend who works in design for help. She spent twenty minutes on it, and her result was on a completely different level from what I had after two hours of work.

Top: before revision. Bottom: after revision.

The problem isn't the tools; it's me. More precisely, I can't translate the vague aesthetic feelings in my head into precise language.

And I'm not alone in this.

A friend who works in content operations started making short videos with Seedance last year. She picked up the tool itself quickly, but what really stumped her was writing the storyboards. "I knew I wanted a high-quality look, but putting the word 'high-quality' into the prompt did nothing," she said. "I didn't know what that quality specifically meant in terms of lighting, shot size, and camera movement." She described the final product as "sort of close, but nothing was right."

Another friend used Marble, a tool that generates 3D images from text and pictures, to create content. He generated and revised over and over, and after struggling for a long time he realized the real problem: he had no frame of reference and didn't know what "good" looked like, so he couldn't judge whether the output was what he wanted.

Marble generates 3D panoramic images

In stark contrast, a friend with photography experience got noticeably better images out of the same tools. He said he hadn't spent much time studying prompts: "I just knew what composition and lighting I wanted, and once I spelled those out clearly, the tool naturally gave me the right result."

Tools keep getting more powerful, but the gap between users hasn't narrowed as a result; if anything, it has widened. In the past, nobody could make good work with these tools. Now, people with a trained eye can make excellent work, while those without one are still stuck somewhere between "usable" and "good."

Tools are adapting to this reality. The popularity of one-click template tools like NotebookLM follows a simple logic: they skip the premise that "you first need to know what you want." The template makes the aesthetic decisions for you, and you only fill in the content. But templates have their limits: they can solve the problem of "usable," but not the problem of "good."

The same holds for writing. A friend who works in marketing was recently moved to PR, where she has to produce a large volume of written content. Her manager suggested using AI, but that only left her more confused, and she came to me asking for an AI writing manual I had written earlier. The crux of the problem is that she has no feel for what makes a "good PR draft": she doesn't know the standards, so she has no way to judge which direction to take the AI's output when revising it.

Image source: Giphy

For me, writing with AI is much easier. That's not because I'm better with the tool, but because years as a journalist have given me a good ear for language. I know what makes a sentence good and what makes it awkward, and I can see where the AI's output falls short and where to push it further. Aesthetic sense becomes a very practical skill here: it tells you where the destination is, instead of letting the AI run laps aimlessly.

Once tool skills are no longer the issue, aesthetics and "old skills" become the biggest hurdle. People who use AI badly can end up worse off than people who don't use it at all.

I want sex: does it really matter whether it's AI or a real person?

The first to try something new don't just reap the rewards; they also attract controversy. A paradox has emerged in today's AIGC (AI-Generated Content) community: whether AI was used now matters more than the quality of the work itself.

Fang Yuan (pseudonym) is a brand designer. On a recent brand visual design project, he used AI tools to compress a process that used to take two weeks into three days, and he felt the results were noticeably better than his past work. He sent off the materials and waited for a reply.

The client's first response wasn't a critique of the work but a question: "So fast, did you use AI?" Before Fang Yuan could answer, another message arrived: "We do not accept design work involving AI." He still isn't sure the client ever opened the attachment. He was frustrated: being efficient now felt like a crime.

Image source: Giphy

He's not alone. In many people's eyes, AI use has quietly become a yardstick for moral judgment. That sets it apart from Photoshop or Excel. Nobody receives a retouched photo and asks, "Did you use photo editing software?", and nobody asks, "Did you use Excel to calculate this financial statement?"

AI triggers a different kind of skepticism, a question that's closer to "Did you actually do this?"

There has always been an implicit contract in creative work: good work means someone has invested time, effort, and care in it. AI breaks the causal link between "effort" and "output" that everyone takes for granted.

Put something made with AI in three days next to something made by hand in two weeks, and even if the quality is the same, the former will strike people as somehow wrong. That sense of wrongness boils down to one word: unfair.

A study from the University of Arizona found that when designers proactively told clients they had used AI assistance, clients' trust in them still fell by an average of 20%, even when the designers explained that AI was only an auxiliary tool.

As AIGC technology matures, the issue has grown from a question of trust between client and service provider into a problem for entire platforms.

Since 2023, the Chinese government has issued a series of regulations requiring AI-generated content to be labeled. First came the "Regulations on the Management of Deep Synthesis in Internet Information Services" in January, which mainly govern deep synthesis technologies such as AI face-swapping and voice synthesis. Then the "Interim Measures for the Management of Generative Artificial Intelligence Services" took effect in August of the same year, bringing generative services like ChatGPT under regulation. In March 2025, regulation went a step further: the Cyberspace Administration of China, together with several other departments, issued the "Measures for the Labeling of Artificial Intelligence-Generated and Synthesized Content," this time covering every content format, including text, images, audio, and video.

But what the regulations cannot pin down is where the line falls.

A platform can identify a video that is 100% AI-generated, but it struggles to draw the boundaries. If a selfie is edited and recomposed by AI, does it count as AI-generated content? If the footage is your own but the editing and music were done by AI, should it be labeled? If an AI produces a draft and a human rewrites 70% of it, which label does it get?

Image source: Giphy

Behind the difficulty of drawing boundaries lies a more fundamental question of responsibility. Without clear definitions, accountability stays murky. If a song's melody is written by AI and its lyrics are revised by a human, who is responsible when a copyright dispute arises? Or suppose a product review is generated by AI, the blogger only adjusts the tone, and the recommended product turns out to be a disappointment. When we ask "Was this done by AI?", we are really asking something more fundamental: behind this work, is anyone truly responsible, is anyone thinking about your concerns, and does anyone care about the outcome?

The hardest thing to draw is not boundaries, but responsibilities.