[Introduction] Microsoft's biggest rival isn't Google but its own former dependence on a single model supplier. The latest Copilot upgrade defaults to GPT for writing and Claude for peer review, and Anthropic's agent framework is now integrated directly into Office. Having moved from an exclusive partnership with OpenAI to bringing in every top-tier model, Microsoft is betting that no matter who wins, all the traffic will pass through its hands.

The era of single-model approaches has come to an end.

Microsoft just changed the engine of Copilot and introduced multi-model intelligence in Researcher.

From now on, Copilot's Researcher agent invokes both GPT and Claude by default.

This isn't the kind of "multi-model" setup where you switch models by hand. Instead, after GPT writes the first draft, Claude automatically steps in as an expert reviewer and examines each point before the report reaches you.

One is responsible for charging ahead; the other is responsible for finding fault.

Microsoft says this is a significant step forward for Researcher, Microsoft 365 Copilot's deep research agent.

Designed for handling complex research in workflows, Researcher further enhances accuracy, depth, and credibility with two new multi-model capabilities: Critique and Council.

The measured results are striking.

On the DRACO benchmark, this "dual-model competition" architecture scored 13.8% higher than Perplexity Deep Research (running on Claude Opus 4.6), which had previously been regarded as the ceiling for deep research.

But that's not all.

On the same day, Copilot Cowork was launched. Microsoft said it has brought the technology platform behind Claude Cowork into Microsoft 365 Copilot and integrated it deeply with Work IQ and with enterprise permissions and governance systems, so that AI can autonomously plan and advance multi-step tasks across tools.

This goes well beyond "connecting to an API"; it means integrating cutting-edge external agent capabilities into Microsoft's own operating system.

Microsoft has laid its cards on the table: instead of betting on a single model, it is bringing frontier models from Anthropic and OpenAI into the Copilot multi-model orchestration framework.

In other words, Copilot is evolving from a traditional AI assistant into a multi-model execution and orchestration system for enterprise work.

Critique: letting one AI grade another AI's work

Past AI research workflows had a structural blind spot: planning, retrieval, synthesis, and writing were all crammed onto a single model.

Making the same model both player and referee almost inevitably produces hallucinations.

Microsoft's solution this time is to separate "generation" and "evaluation" into two independent roles.

Specifically, GPT handles the first half: task planning, iterative retrieval, and writing the initial draft. Claude handles the second half: acting as an expert reviewer, it checks the draft item by item against a structured evaluation rubric.

The rubric focuses on three dimensions:

Source reliability: whether the citations are authoritative and verifiable;

Report completeness: whether every intent in the user's request is covered;

Evidence tracing: whether every key conclusion is anchored to a reliable source with a precise citation.

More importantly, the reviewer is positioned not as a "second author" but as a "peer reviewer." It doesn't rewrite the report; it pushes the draft to become better.
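The division of labor described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Microsoft's implementation: the function names (`critique_loop`, `toy_drafter`, `toy_reviewer`), the `Comment` type, and the `max_rounds` parameter are all hypothetical, and the toy functions stand in for real model calls. The one design point it tries to capture is that the reviewer only returns comments keyed to the rubric, while the drafter does all the writing.

```python
from dataclasses import dataclass
from typing import Callable, List

# The three rubric dimensions named in the article (identifiers are made up).
RUBRIC = ("source_reliability", "completeness", "evidence_tracing")


@dataclass
class Comment:
    dimension: str  # one of RUBRIC
    note: str       # what the reviewer wants fixed


# Hypothetical signatures: a drafter takes (request, comments) and returns text;
# a reviewer takes (request, draft) and returns a list of comments.
Drafter = Callable[[str, List[Comment]], str]
Reviewer = Callable[[str, str], List[Comment]]


def critique_loop(request: str, draft_fn: Drafter, review_fn: Reviewer,
                  max_rounds: int = 2) -> str:
    """Draft, then let a separate reviewer push for revisions.

    The reviewer never edits the text itself; only the drafter revises.
    """
    draft = draft_fn(request, [])
    for _ in range(max_rounds):
        comments = review_fn(request, draft)
        if not comments:  # reviewer has nothing left to flag
            break
        draft = draft_fn(request, comments)
    return draft


# Toy stand-ins so the sketch runs without any model API.
def toy_drafter(request: str, comments: List[Comment]) -> str:
    text = f"Report on {request}."
    if any(c.dimension == "evidence_tracing" for c in comments):
        text += " [source: example.org]"
    return text


def toy_reviewer(request: str, draft: str) -> List[Comment]:
    if "[source:" not in draft:
        return [Comment("evidence_tracing", "Key claim has no citation.")]
    return []


final_report = critique_loop("battery recycling", toy_drafter, toy_reviewer)
print(final_report)
```

In this toy run the first draft has no citation, the reviewer flags it under evidence tracing, and the drafter's revision passes the second review.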

"We're not simply stuffing multiple models into Copilot," said Nicole Herskowitz, Corporate Vice President of Microsoft 365 and Copilot. "We're enabling customers to truly enjoy the benefits of models working together."

In the future, this mechanism will be upgraded to two-way peer review: GPT will also be able to review Claude's drafts.

Critique is already the default mode in Researcher and does not need to be enabled manually.

This isn't some fancy technical trick. It is the peer review system, which has operated in academia for hundreds of years, being engineered and embedded into an AI system for the first time.

The idea is to suppress hallucinations through architectural design, rather than simply hoping that individual models will become smarter.

DRACO benchmark breakdown: how much is the 13.8% really worth?

Data doesn't lie.

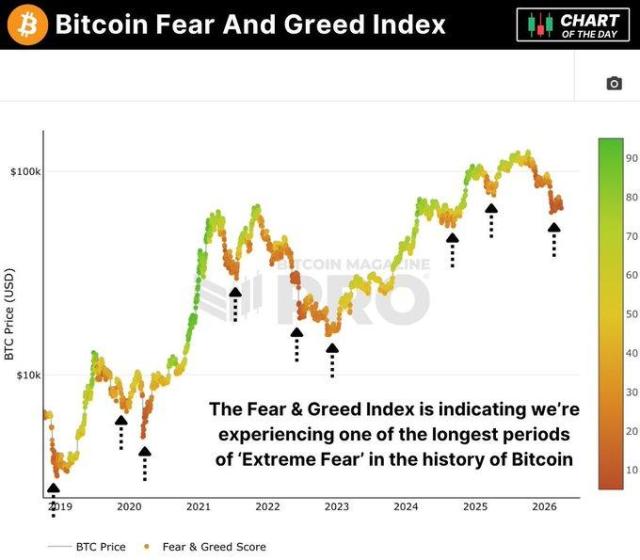

DRACO (Deep Research Accuracy, Completeness and Objectivity) is a benchmark launched by Perplexity and academic researchers in February 2026. It covers 100 complex research tasks across 10 domains, all derived from real-world use cases.

Each question was run five times independently and the average score was taken. The evaluation dimensions included factual accuracy, breadth and depth of analysis, quality of expression, and quality of citation.
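As a rough illustration of this kind of scoring, the sketch below averages five runs per task and then reports an overall mean with its standard error (SEM). The scores are invented for the example, and computing the SEM across per-task means is an assumption; the article does not spell out how the benchmark aggregates.

```python
from math import sqrt
from statistics import mean, stdev


def mean_and_sem(values):
    """Return (mean, standard error of the mean) for a list of numbers."""
    return mean(values), stdev(values) / sqrt(len(values))


# Hypothetical scores: each task was run five times independently.
runs = {
    "task_a": [52.0, 55.0, 53.0, 54.0, 56.0],
    "task_b": [61.0, 59.0, 60.0, 62.0, 58.0],
}

# Step 1: average the five runs of each task.
per_task = {task: mean(scores) for task, scores in runs.items()}

# Step 2: aggregate across tasks (assumed here: SEM over per-task means).
overall, sem = mean_and_sem(list(per_task.values()))
print(per_task, overall, round(sem, 2))
```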

The judge model is GPT-5.2.

Microsoft specifically emphasized that it used the same evaluation protocol and configuration as the benchmark paper to ensure a fair comparison based on the same criteria.

Researcher with Critique achieved a significant improvement of +7.0 points (SEM±1.90) in overall score, which is 13.88% higher than the previous best performer, Perplexity Deep Research.

Comparison chart of DRACO benchmark scores: A horizontal comparison of scores among various deep research systems (including Researcher with Critique, Perplexity Deep Research, etc.). Except for Researcher with Critique, the other comparison results are cited from Zhong et al., arXiv:2602.11685.

Let's break it down into four dimensions:

The most significant improvement was in the breadth and depth of analysis, +3.33. This was followed by expression quality +3.04 and factual accuracy +2.58. Citation quality also improved.

All dimensions were statistically significant (paired t-test, p < 0.0001).
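For readers unfamiliar with the test, a paired t-test compares two systems on the same tasks by looking at the per-task differences. The sketch below computes only the t statistic and degrees of freedom using the standard library; the scores are made up, and turning the statistic into a p-value would need a t-distribution (in practice something like `scipy.stats.ttest_rel` does both steps).

```python
from math import sqrt
from statistics import mean, stdev


def paired_t_statistic(before, after):
    """Return (t, degrees of freedom) for paired samples.

    t = mean(d) / (stdev(d) / sqrt(n)), where d holds the per-task differences.
    """
    if len(before) != len(after) or len(before) < 2:
        raise ValueError("need two equally sized samples with at least 2 pairs")
    diffs = [a - b for a, b in zip(after, before)]
    spread = stdev(diffs)
    if spread == 0:
        raise ValueError("all differences are identical; t is undefined")
    return mean(diffs) / (spread / sqrt(len(diffs))), len(diffs) - 1


# Hypothetical per-task scores for the same four tasks.
single_model = [50.0, 48.0, 55.0, 60.0]
with_critique = [53.0, 52.0, 57.0, 65.0]

t_value, dof = paired_t_statistic(single_model, with_critique)
print(round(t_value, 4), dof)
```

Because every task is scored under both systems, task difficulty cancels out in the differences, which is why a paired test can detect a small but consistent gain.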

What's truly noteworthy is the +3.33. The jump in analytical depth suggests that Critique's greatest value lies not in correcting errors but in forcing out a more comprehensive analytical perspective.

At the domain level, significant improvements were observed in 8 of the 10 domains, covering core scenarios such as medicine, technology, and law.

The only two exceptions are "academic" and "needle in a haystack," where test results fluctuate considerably.

The DRACO benchmark four-dimensional evaluation improvement table: Researcher with Critique (multi-model) shows improvements in the breadth and depth of analysis, presentation quality, factual accuracy, and citation quality compared to the single-model Researcher, as well as the contribution of each to the final total score.

13.8% may sound like just a number.

But deep research is a fiercely contested field. Perplexity had only just reached the ceiling with Claude Opus 4.6, and that ceiling has now been broken by Critique's architectural innovation.

When what you need is not an answer but a debate

Critique addresses the question of "how to make a report more accurate".

But in some situations, what you need is not a polished draft, but an argument between two experts.

That is where Council comes in.

Select "Model Council" in the model selector, and GPT and Claude will each generate a complete report independently and display them side by side.

Then a dedicated judge model evaluates the two reports and generates a Cover Letter that analyzes where the two reports agree, where they disagree, and what unique insights each one brings.
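The shape of that Cover Letter can be illustrated with a deliberately simplified sketch. In the real product the judge is a model reading two full reports; here each report is reduced to a hypothetical `topic -> position` dictionary so that agreement, disagreement, and unique insights become plain set operations. The `CoverLetter` type and `judge` function are invented for this example.

```python
from dataclasses import dataclass
from typing import Dict, Set


@dataclass
class CoverLetter:
    agreements: Set[str]                      # topics where both take the same position
    disagreements: Dict[str, Dict[str, str]]  # topic -> {model name: position}
    unique_insights: Dict[str, Set[str]]      # model name -> topics only it raised


def judge(report_a: Dict[str, str], report_b: Dict[str, str],
          name_a: str = "GPT", name_b: str = "Claude") -> CoverLetter:
    """Compare two independently written reports without merging them."""
    shared = report_a.keys() & report_b.keys()
    agreements = {t for t in shared if report_a[t] == report_b[t]}
    disagreements = {
        t: {name_a: report_a[t], name_b: report_b[t]}
        for t in shared - agreements
    }
    unique_insights = {
        name_a: set(report_a.keys() - report_b.keys()),
        name_b: set(report_b.keys() - report_a.keys()),
    }
    return CoverLetter(agreements, disagreements, unique_insights)


# Hypothetical reports, reduced to topic -> position.
report_gpt = {"market size": "growing", "main risk": "regulation", "pricing": "raise"}
report_claude = {"market size": "growing", "main risk": "supply chain",
                 "competitors": "two new entrants"}

letter = judge(report_gpt, report_claude)
print(letter)
```

Note that nothing is discarded: the disagreement on "main risk" and the topics only one side raised are surfaced rather than averaged away, which is the point of showing both reports.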

Screenshot of the Council mode product interface: The complete reports generated by GPT and Claude are displayed side by side, with a Cover Letter summary generated by the judge model.

On the surface, "pick one of many" has simply become "see them all," but in practice this exposes the information blind spots in the decision-making process.

Facts that one model might overlook, analytical frameworks that weigh things differently, alternative reasoning paths: Council puts all of these on the table.

When preparing a quarterly strategy report, would you prefer to see a polished version or two experts offering their own opinions, leaving you to make your own judgment?

Critique is an "edit and review" mode that prioritizes efficiency.

Council is an "expert consultation" mode that prioritizes decision-making.

Together, the two modes cover the two core scenarios in which enterprises use AI for research: everyday output needs to be fast and accurate, while major decisions need to be comprehensive and well considered.

Copilot Cowork: Microsoft brings Anthropic's trump card into Office

If Critique and Council changed the quality of research, Copilot Cowork changed the way we work.

Copilot Cowork is built directly on Anthropic's Claude Cowork technology platform.

This is not about "access" or "compatibility," but rather "building upon its technology platform."

The way it works is simple: you describe the desired result, and Copilot Cowork automatically generates a plan, reasons across tools and documents, and shows its progress in real time. You can step in and steer at any point.
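The "describe, plan, execute, steer" loop can be sketched as follows. This is a generic agent loop written for illustration, not Copilot Cowork's actual design: `run_goal`, the `(tool name, instruction)` step format, and the `steer` callback are all assumptions, and the tools are toy functions.

```python
from typing import Callable, Dict, List, Optional, Tuple

Step = Tuple[str, str]  # (tool name, instruction)


def run_goal(goal: str,
             plan_fn: Callable[[str], List[Step]],
             tools: Dict[str, Callable[[str], str]],
             on_progress: Callable[[str], None],
             steer: Optional[Callable[[List[str], List[Step]], Optional[List[Step]]]] = None,
             ) -> List[str]:
    """Plan once, then execute step by step, reporting progress after each step.

    After every step the optional `steer` callback sees the outputs so far and
    the remaining steps; it may return a revised list of remaining steps.
    """
    remaining = list(plan_fn(goal))
    outputs: List[str] = []
    while remaining:
        tool_name, instruction = remaining.pop(0)
        output = tools[tool_name](instruction)
        outputs.append(output)
        on_progress(f"[{len(outputs)}] {output}")
        if steer is not None:
            revised = steer(outputs, remaining)
            if revised is not None:
                remaining = list(revised)
    return outputs


# Toy planner, tools, and user intervention.
def toy_plan(goal: str) -> List[Step]:
    return [("calendar", "find a free slot"),
            ("docs", "draft the agenda"),
            ("mail", "send the invite")]


toy_tools = {name: (lambda text, n=name: f"{n} ok: {text}")
             for name in ("calendar", "docs", "mail")}


def toy_steer(outputs: List[str], remaining: List[Step]) -> Optional[List[Step]]:
    # After the first step, the user decides not to send any mail.
    if len(outputs) == 1:
        return [step for step in remaining if step[0] != "mail"]
    return None


progress_log: List[str] = []
results = run_goal("prepare the budget review", toy_plan, toy_tools,
                   progress_log.append, toy_steer)
print(results)
```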

Copilot Cowork interface: Describe the goal → Automatic planning → Cross-tool execution → Real-time progress display.

With built-in Claude and native Microsoft skills such as calendar management and daily briefings, it covers a wide range of tasks, from one-off chores to monthly budget reviews.

Institutions such as Capital Group are already using it, and feedback focuses on high-value scenarios such as planning, scheduling, output, and preparing for management reviews.

It is currently open to early adopters through the Frontier program.

This means the relationship between Microsoft and Anthropic has evolved from "model supplier" to "technology platform co-construction," with Cowork embedding Claude's agent framework directly into the fabric of Microsoft 365.

Microsoft released Copilot Cowork in beta mode earlier this month with the goal of "capturing the market's growing demand for autonomous AI agents."

Therefore, this is not a product update, but an architectural-level shift in allegiances.

Microsoft's true ambitions: from AI assistants to model command centers

Putting all these moves together, Microsoft's strategic intent is clear: it is no longer betting on itself or on any particular model to win, but betting that the traffic will pass through it regardless of who wins.

From its deep reliance on OpenAI to its deep integration of Anthropic's technology into its product line, Microsoft is transforming from a "model player" to an "orchestration layer."

Critique enables GPT and Claude to collaborate, Council enables them to compete, and Cowork allows Anthropic's agent capabilities to directly serve Office users.

This is platform logic, not model logic.

On the front lines, Microsoft is simultaneously battling Google's Gemini multimodal approach and Anthropic Claude Cowork's autonomous agent approach.

However, with the model landscape of Anthropic, OpenAI, and Google already established, Microsoft's strategy is not to enter the game as a player, but to use its open ecosystem to incorporate the capabilities of all players into its own platform.

For developers, the signal is very clear: future competitiveness will not lie in being tied to a single model, but in the ability to orchestrate multiple models.

However, the market doesn't seem to be buying into Microsoft's Copilot upgrade.

Microsoft's stock price rose only about 1% that day, and it is still down nearly 25% this quarter, its worst quarterly performance since the 2008 financial crisis.

What Wall Street is more concerned about is the actual data: who pays for the cost of calling multiple models back and forth? Can employees really integrate it into their daily workflows?

What is certain is that this upgrade has rewritten the partnership between Microsoft and OpenAI. OpenAI's position in the Microsoft ecosystem has changed from "the only trump card" to "a card on the table".

For Anthropic, OpenAI, and Google, it is worth noting that when platforms start to orchestrate your capabilities as replaceable modules, the model capabilities themselves may no longer be a moat.

Enterprise AI is transitioning from the era of "chatbots" to the era of "work systems".

At this turning point, the deciding factor is no longer who has the highest benchmark score, but who can orchestrate multiple models into a reliable, auditable, and implementable workflow.

References:

https://www.reuters.com/business/microsoft-unveils-ai-upgrades-rolls-out-copilot-cowork-early-access-customers-2026-03-30/

https://techcommunity.microsoft.com/blog/microsoft365copilotblog/introducing-multi-model-intelligence-in-researcher/4506011

https://www.microsoft.com/en-us/microsoft-365/blog/2026/03/30/copilot-cowork-now-available-in-frontier/

This article is from the WeChat official account "New Intelligence", edited by Yuan Yu, and published with authorization from 36Kr.