This article will analyze the core drivers of the price increases of GPUs and RAM from a historical perspective, and based on this, put forward independent thoughts and judgments on the future development pattern of the crypto industry and the AI industry.

Article author and source: 0x9999in1, ME News

Introduction: Computing power and storage: the "oil" and "granary" of the digital world.

In the history of technological evolution over the past decade, whether it is the Web3 and cryptocurrency industry advocating "decentralization" and "reshaping of asset ownership," or the AI industry dedicated to breakthroughs in "artificial general intelligence (AGI)," the underlying support for their grand narratives has always been inseparable from two physical cornerstones: computing power represented by GPUs (graphics cards) and storage represented by RAM (memory/DRAM).

Throughout history, the prices of graphics cards and memory have not followed a linear, stable trajectory. Instead, they have experienced numerous dramatic price fluctuations, driven by technological revolutions, black swan events, and capital frenzy. ME News Think Tank believes that reviewing the history of these core hardware price surges is not merely about navigating the cyclical fluctuations of the supply chain, but rather about penetrating the surface to understand the most fundamental resource allocation rules in today's digital economy. This article will analyze the core drivers of GPU and RAM price increases from a historical perspective, and based on this analysis, offer independent thoughts and judgments regarding the future development of the crypto and AI industries.

A Chronological Breakdown of Graphics Card (GPU) Price Surges: From the "Mining Frenzy" to the "Thousand-Model War"

GPUs are the workhorses of parallel computing, and their price curve closely tracks the explosive growth in global demand for "brute-force computing" over the past decade. The irrational price surges have occurred mainly in the following three historical phases.

2017-2018: The First Awakening of Cryptocurrency Computing Power

2017 can be considered the year that consumer graphics card prices broke away from Moore's Law and the conventional depreciation curve. During this period, cryptocurrencies such as Ethereum, which at the time used the Proof-of-Work (PoW) consensus mechanism, entered a historic bull market, and the price of Bitcoin approached the $20,000 mark for the first time.

Key driving factor: Because Ethereum's Ethash algorithm was highly sensitive to video memory bandwidth and was designed to resist ASIC (Application-Specific Integrated Circuit) mining rigs, ordinary consumer-grade graphics cards (such as NVIDIA's GTX 1060 and 1080 series and AMD's RX 580 series) became the best "mining productivity tools." GPUs originally intended for gamers were snapped up by miners on a massive scale, directly leading to global supply shortages.

Data support: At the peak in late 2017 and early 2018, a GTX 1060 6GB graphics card with an official suggested retail price (MSRP) of $249 was bid up to over $500 on the secondary market, a premium of over 100%. This irrational demand, driven purely by financial speculation, demonstrated for the first time the destructive power of the "financialization of computing power" on the hardware supply chain.

2020-2021: A Perfect Storm (Pandemic, Supply Chain, and the DeFi/NFT Bull Market)

If 2017 was a localized miner's frenzy, then the GPU price surge from 2020 to 2021 was a "perfect storm" triggered by the resonance of the macro environment and the cryptocurrency industry cycle.

Key driving factors:

First, the COVID-19 pandemic led to a surge in demand for working from home (WFH) and home entertainment, resulting in a global spike in demand for PCs and game consoles. Second, the global semiconductor supply chain was disrupted by the pandemic, causing severe capacity constraints at wafer foundries (such as TSMC and Samsung), leading to significant delays in chip delivery times. Most critically, the crypto market experienced a super bull market driven by DeFi (decentralized finance) and NFTs, with Ethereum prices breaking through $4,000 and mining yields reaching record highs.

Supporting data: NVIDIA's RTX 30 series graphics cards launched in late 2020, with the RTX 3080 carrying an MSRP of $699. For most of 2021, however, automated programs (bots) bought up stock on e-commerce platforms for mining and for resale at inflated prices, and the actual transaction price hovered between $1,500 and $2,000. That is roughly 2.1 to 2.9 times MSRP, or a premium of about 115% to 185%. This phenomenon directly prompted NVIDIA to launch LHR (Lite Hash Rate) versions of its graphics cards, which limit mining hash rates, in an attempt to forcibly separate the gaming and mining markets.
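The premium figures in this section are easy to misstate, because "X times MSRP" and "a premium of X%" are different measures: a price of twice MSRP is a premium of 100%, not 200%. The short Python sketch below makes the arithmetic explicit using only the MSRP and street prices quoted in this article. The function name `premium_pct` is an illustrative choice, not something taken from any source.

```python
def premium_pct(msrp: float, street_price: float) -> float:
    """Return the percentage by which the street price exceeds MSRP."""
    return (street_price - msrp) / msrp * 100


# (MSRP, street price) in US dollars, as quoted in the article.
examples = {
    "GTX 1060 6GB, 2017-2018 peak": (249, 500),
    "RTX 3080, 2021 low end": (699, 1500),
    "RTX 3080, 2021 high end": (699, 2000),
}

for name, (msrp, street) in examples.items():
    multiple = street / msrp
    print(f"{name}: {multiple:.2f}x MSRP, premium {premium_pct(msrp, street):.0f}%")
```

Run as written, this shows the GTX 1060 at about twice MSRP (a premium of roughly 100%) and the RTX 3080 at roughly 2.1 to 2.9 times MSRP.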

2023-Present: Computing Power Hegemony and Structural Shortages in the AI Era

With the emergence of ChatGPT at the end of 2022, the demand logic for GPUs underwent a qualitative leap. The main battleground for soaring prices shifted entirely from "consumer-grade graphics cards" to "data center-grade accelerator cards".

The core driving factor: Training and inference of Large Language Models (LLMs) require parallel computing on clusters of tens of thousands of GPUs. Tech giants led by OpenAI, Microsoft, Google, and Meta, along with AI startups worldwide, have engaged in a no-expense-spared arms race for computing power. Demand at this level is no longer speculative activity by individual miners, but strategic capital expenditure (CapEx) by the world's top tech companies.

Data support: NVIDIA's flagship AI chip, the H100, is commonly quoted at a list price of $25,000 to $30,000. However, during the period of extreme computing power shortage in 2023 and 2024, the spot-market price of a single H100 card at times exceeded $40,000, with lead times lasting several months. More importantly, this price increase goes beyond hardware manufacturing costs; it represents the "monopoly rent" that NVIDIA has extracted through its CUDA ecosystem moat.

The Underlying Logic of RAM Price Cycles: From Natural Disasters and Supply-Demand Mismatches to the Squeeze from AI

Unlike GPUs, whose high premiums were driven by the explosive growth in computing power demand, memory chips (DRAM and NAND flash) are highly standardized semiconductor commodities, and their price fluctuations have historically followed the "cobweb model": capacity planning lags behind changes in demand, so supply repeatedly overshoots and undershoots. However, in the AI era, this logic is being reshaped.
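The cobweb model mentioned above can be illustrated with a few lines of Python. This is a minimal textbook sketch, not a calibrated model of the DRAM market: it assumes a linear demand curve `Qd = a - b * P`, a linear supply curve in which producers set this period's output from last period's price (`Qs = c + d * P_prev`), and parameter values that are purely hypothetical.

```python
def cobweb_prices(a, b, c, d, p0, periods):
    """Simulate prices when supply reacts to the previous period's price.

    Demand: Qd = a - b * P_t          (buyers react to the current price)
    Supply: Qs = c + d * P_{t-1}      (capacity was planned on the old price)
    """
    prices = [p0]
    for _ in range(periods):
        supply = c + d * prices[-1]      # output fixed by last period's price
        prices.append((a - supply) / b)  # price at which demand absorbs that output
    return prices


# Hypothetical parameters, chosen only so the oscillation is easy to see.
a, b, c, d = 100, 2, 10, 1.5
equilibrium = (a - c) / (b + d)

for t, p in enumerate(cobweb_prices(a, b, c, d, p0=20, periods=6)):
    side = "above" if p > equilibrium else "below"
    print(f"period {t}: price {p:.2f} ({side} equilibrium {equilibrium:.2f})")
```

With these numbers the price alternates above and below equilibrium and the swings shrink over time, because supply responds less steeply than demand (`d < b`). If supply responded more steeply than demand, the same loop would produce widening swings instead.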

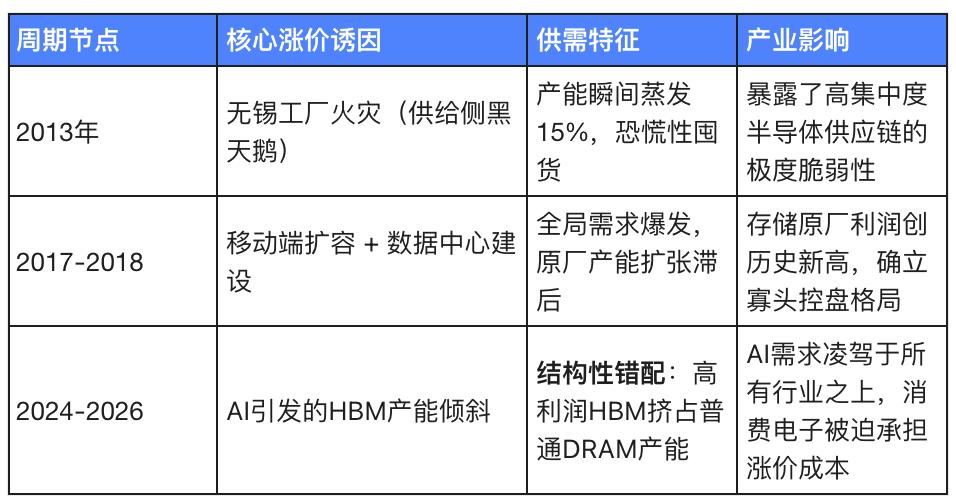

2013: The black swan event (SK Hynix fire) and the resulting supply shock

The memory industry is characterized by extremely high capital intensity and strong oligopolistic features (Samsung, SK Hynix, and Micron form a three-way balance of power). Physical damage to any single node can trigger a major upheaval in the global market.

Key driving factor: In September 2013, a serious fire occurred at SK Hynix's semiconductor factory in Wuxi, China. This factory accounted for approximately half of SK Hynix's total DRAM production capacity at the time, and nearly 15% of the global DRAM supply.

Data support: Following the fire, panic spread throughout the supply chain, causing global spot DRAM prices to surge by more than 30% in just a few weeks. This incident fully exposed the vulnerability of the highly centralized global semiconductor supply chain and became the most typical case in memory history of a price spike caused by a "physical supply shock".
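To show how sensitive such a market is to lost supply, the two figures above can be combined into a rough implied price elasticity of demand. The Python sketch below is a back-of-the-envelope illustration only. It assumes that the full 15% of global supply went offline, that demand was otherwise unchanged, and that the 30% price move fully reflects the shock; none of these assumptions comes from the source data.

```python
def implied_price_elasticity(quantity_change_pct: float, price_change_pct: float) -> float:
    """Elasticity = percentage change in quantity / percentage change in price."""
    return quantity_change_pct / price_change_pct


# Article figures, under the assumptions stated above:
# about 15% of global DRAM supply lost, spot prices up by about 30%.
elasticity = implied_price_elasticity(-15, 30)
print(f"Implied short-run price elasticity of demand: {elasticity:.2f}")
```

An elasticity of about -0.5 means demand is inelastic in the short run: buyers cannot easily cut back, so a given percentage loss of supply produces a larger percentage rise in price. Since the article reports a price rise of more than 30%, the true magnitude under these assumptions would be 0.5 or smaller.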

2017-2018: The Mobile Internet Dividend and Early Cloud-Native Expansion

At the same time that graphics cards saw a surge in price due to cryptocurrency mining, the memory market also experienced an epic "supercycle," but the underlying driving forces were completely different.

Key driving factors: First, smartphones were undergoing a hardware upgrade from 2GB/4GB to 6GB/8GB of RAM, leading to a surge in demand for mobile DRAM. Second, the four major North American internet giants (Amazon's AWS, Google, Microsoft, and Meta) began large-scale expansion of their data centers, igniting demand for server DRAM. Finally, the three major memory manufacturers tacitly held back the pace of capacity expansion during this period, resulting in a supply shortage.

Data Support: According to TrendForce, global DRAM industry revenue surged 76% year-on-year in 2017. Server DRAM and mobile DRAM prices maintained double-digit quarter-on-quarter growth for several consecutive quarters. Giants like Samsung reaped record profits from this round of memory price surges. The core logic behind this price increase is a "systemic surge in demand from both the consumer and enterprise sectors."

2024-2026: HBM Capacity Expansion and AI-Driven "Structural Shortages"

In the AI era, the rise in memory prices is no longer a simple supply and demand cycle, but a "structural deprivation" triggered by the evolution of technological architecture.

Key driving factors: Training large AI models requires not only computing power but also extremely high memory bandwidth to break through the "memory wall." High-bandwidth memory (HBM) has become a standard feature of AI chips. Because HBM has an extremely complex manufacturing process (multiple stacked DRAM dies) and a much higher profit margin than ordinary DRAM, manufacturers such as Samsung and SK Hynix have diverted a large share of traditional DRAM production capacity (wafer and packaging lines) to HBM production.

Data support: Industry data indicates that around the end of 2025 and the beginning of 2026, AI data centers were consuming about 30% of global DRAM bit demand and had pushed giants such as Samsung to raise traditional DRAM contract prices by 30% to 60% within a few months. Because HBM and server-grade DDR5 are draining production capacity, ordinary DRAM for consumer PCs, smartphones, and even industrial control is facing a severe supply shortage.

In-depth analysis: Implications of underlying hardware changes for the crypto and AI industries

After reviewing the historical cycles of hardware prices, ME News Think Tank believes that the surges in GPU and RAM prices should not be viewed merely as fluctuations in the hardware supply chain. They act as a mirror, reflecting the deep-seated contradictions and hidden risks within the rapid development of the crypto and AI industries.

Viewpoint 1: Computing power equals power – the “centralized hardware shackles” under the decentralized narrative

The birth of cryptocurrencies and the Web3 industry was built on grand narratives of "decentralization" and "censorship resistance." However, from the historical pattern of GPU prices skyrocketing with each mining frenzy, we can draw a clear conclusion: software-level decentralization is being undermined by extreme centralization at the underlying hardware level.

Whether in early Ethereum GPU mining or Bitcoin's current ASIC mining, computing power, the core element that keeps a blockchain network running, relies entirely on production capacity provided by a few semiconductor giants (such as NVIDIA, TSMC, and Samsung). When GPU prices skyrocketed in 2021, those who could continuously acquire computing power and maintain their share of network hash rate were no longer individual nodes distributed around the globe, but institutional mining farms with massive capital that could buy up supply directly from distributors. Soaring hardware costs created a capital barrier that shut ordinary participants out of the consensus maintenance process. This strongly suggests that as long as the manufacturing and pricing power of the underlying silicon-based hardware remains in the hands of a very few monopolistic giants, absolute "decentralization" will exist only in the utopia of white papers.

Viewpoint 2: The Matthew Effect of Capital – The AI Arms Race is Ending the Myth of “Garage Startups”

If the hardware barriers in the crypto industry are still in the millions or tens of millions of dollars, then current AI computing power costs, dominated by H100/B200 accelerators and HBM memory, have already pushed the innovation threshold to the tens of billions of dollars. ME News Think Tank believes that the high cost of underlying hardware is ruthlessly ending Silicon Valley's proud "garage startup" model, and that AI is rapidly becoming a capital game reserved for oligopolies.

In the past, the explosion of the internet and mobile internet largely benefited from the widespread adoption of cloud computing, which drastically reduced the cost of underlying infrastructure. Code written by a few college students in a garage could disrupt traditional industries. However, in the era of large-scale AI, the persistently high prices and supply shortages of GPUs and HBM memory mean that computing power is no longer a widely available resource, but a strategically allocated commodity. Only Microsoft, Google, Meta, and a few star unicorns backed by giants (such as OpenAI and Anthropic) can afford to queue up to buy tens of thousands of AI accelerator cards and reserve expensive DRAM production capacity. Ordinary startups cannot even acquire the computing power to "play the game," and are forced to become wrapper applications within the API ecosystems of these giants. The skyrocketing prices of underlying hardware are essentially an invisible Great Wall built by tech giants using their capital advantages, freezing upward mobility in the large-scale AI race.

Viewpoint 3: Supply Chain Restructuring – From “Cyclical Fluctuations” to “Structural Deprivation”

Previous price increases for memory or graphics cards were mostly cyclical (such as prices returning to rationality after the mining boom subsided). However, the current surge in hardware prices driven by AI is essentially a form of "structural deprivation."

This represents an extremely dangerous trend: the semiconductor manufacturing industry is losing its "inclusivity." When memory manufacturers find that the profit margin on HBM is several times higher than that on ordinary DDR5, and when wafer foundries find that NVIDIA's orders are not only highly profitable but also come with ample upfront payments, the world's limited advanced packaging and wafer capacity shifts toward the AI field at an unprecedented rate. This means that the underlying hardware needs of traditional consumer electronics (PCs, mobile phones), autonomous vehicles, and even basic IoT devices are being "crowded out" and "legally expropriated" by the AI industry. If the crypto industry wants to develop high-throughput decentralized physical infrastructure networks (DePIN) or zero-knowledge proof (ZK) hardware acceleration in the future, it will inevitably face a huge cost disadvantage when competing with AI giants for this capacity. This severe misallocation of resources will leave innovation in non-AI technology fields stuck in a long-term cost quagmire.

Conclusion: Searching for the next paradigm in the "silicon cycle"

Whether it was the crypto bull market that once made graphics cards extremely scarce, or the current AI boom that has turned HBM memory into digital gold, the price curve of underlying hardware has always been the most honest thermometer of human technological desire. As witnesses, we must be keenly aware that computing power and storage are not merely physical carriers for running code; they have become core means of production in the 21st century.

The crypto industry needs to embrace hardware while exploring lighter, less monopoly-prone consensus mechanisms (such as the transition to PoS, which was a painful compromise). Meanwhile, the AI industry, under the tyranny of computing power, must quickly find next-generation technological paradigms such as algorithm optimization, edge computing, or in-memory computing to break free from the dual hardware shackles of a failing Moore's Law and oligopolistic control. Otherwise, the intelligent and decentralized future we envision will ultimately become prey for a few silicon-based monopolists.

Sources cited:

- TrendForce. (2018). DRAM Revenue Grew by 76% YoY in 2017, and is Expected to Increase Further by More than 30% in 2018. TrendForce Press Center.

- Sourceability. (2025). DRAM prices surge amid AI-driven shortage. Sourceability Insights.

- Edge AI and Vision Alliance. (2026). Why DRAM Prices Keep Rising in the Age of AI. Edge AI and Vision Alliance Industry Reports.

- SoftwareSeni. (2026). When Will DRAM Prices Normalise? Analyzing the Timeline for Memory Market Recovery. SoftwareSeni Tech Analysis.

- Bacloud. (2025). When Will RAM Prices Drop? Global Memory Market Outlook 2024–2026. Bacloud Blog.