Jack Clark predicts that by the end of 2028, the probability of AI achieving recursive self-improvement without human intervention will exceed 60%. Based on benchmark data from SWE-Bench, MLE-Bench, and CORE-Bench, he demonstrates the rapid progress of AI in core R&D tasks such as coding, paper reproduction, kernel optimization, and model fine-tuning, pointing out that the engineering capabilities required for automated AI R&D are basically in place. If AI achieves end-to-end self-construction, it will bring profound challenges to alignment, economic structures, and governance systems.

Article author and source: BlockBeats

This viewpoint did not come from nowhere. He reviewed a number of publicly available benchmarks and found that AI is making very rapid progress in AI research and development-related tasks.

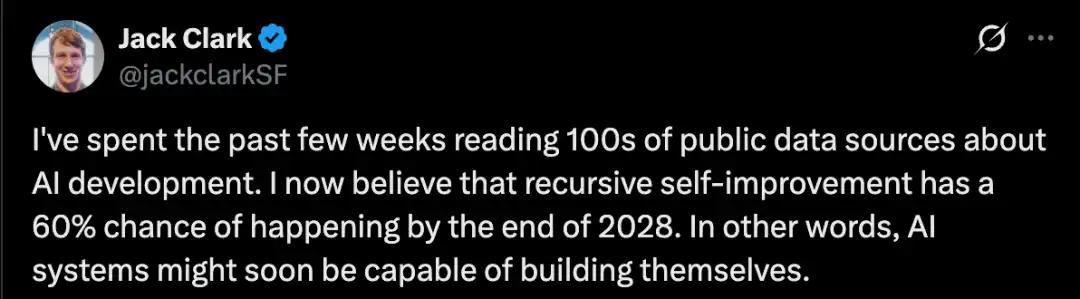

For example, CORE-Bench examines the ability of AI to implement research papers by others, which is a crucial part of AI research.

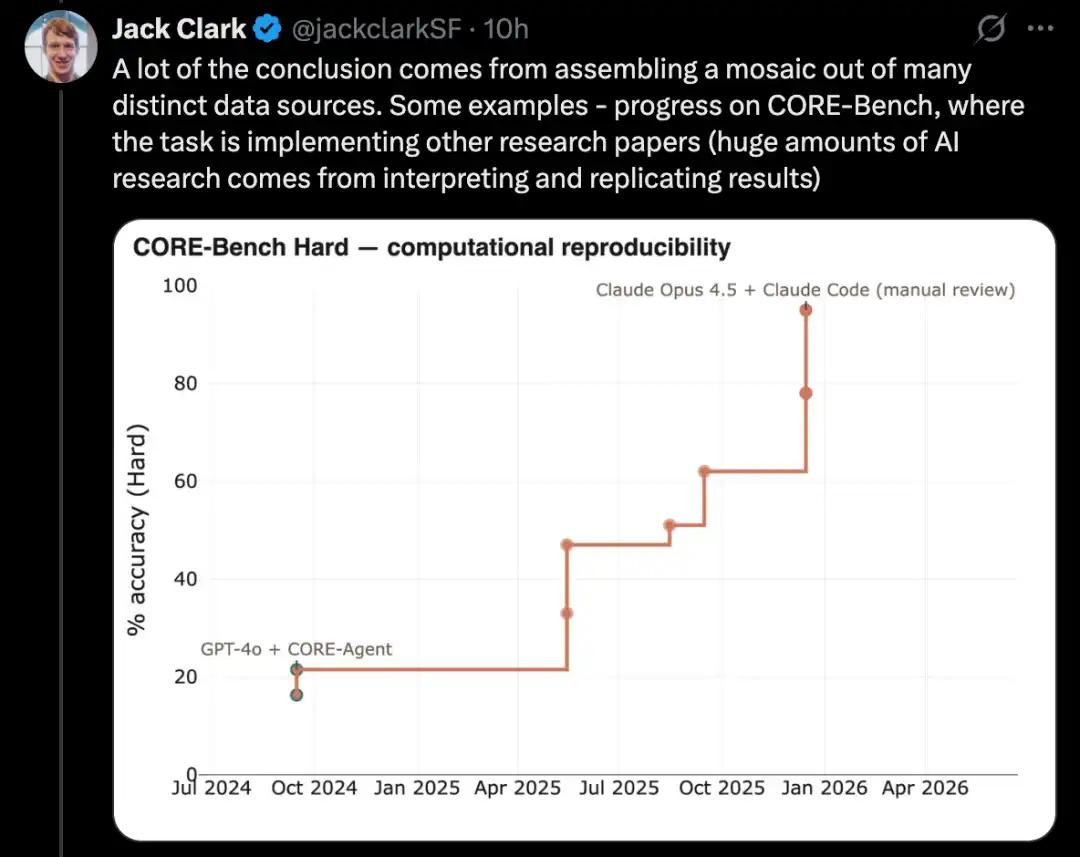

PostTrainBench tests whether a powerful model can autonomously fine-tune a weaker open-source model to improve performance, which is a key subset of AI research and development tasks.

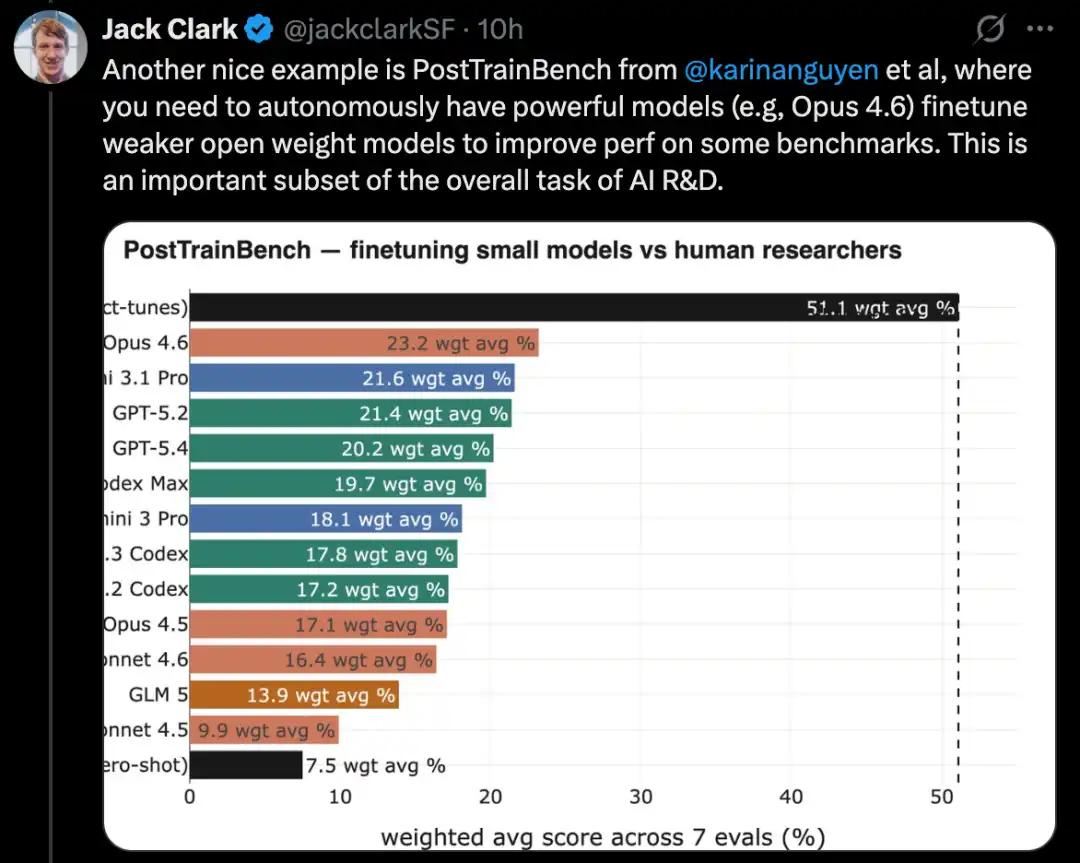

MLE-Bench is based on real-world Kaggle competition tasks, requiring the construction of diverse machine learning applications to solve specific problems. Furthermore, well-known coding benchmarks like SWE-Bench have also shown similar advancements.

Jack Clark describes this phenomenon as a "fractal" upward and rightward trend, meaning that meaningful progress can be observed at different resolutions and scales. He believes that AI is gradually approaching the capability of end-to-end automated R&D, and once achieved, AI will be able to autonomously build its own successor systems, initiating a self-iterable cycle.

This statement sparked considerable discussion on social media.

Some see it as a crucial first step toward ASI and the singularity, potentially revolutionizing the pace of technological development.

However, dissenting voices also exist.

Pedro Domingos, a computer science professor at the University of Washington, points out that AI systems have had the ability to "build themselves" since the invention of the LISP language in the 1950s. The real question is whether they can obtain incremental rewards, and there is currently no clear evidence to support this.

Some netizens questioned whether the 30% increase in probability from 2027 to 2028 suggests a sudden and significant breakthrough in AI capabilities around the end of 2027. What specific milestone or event could cause such a dramatic increase in the probability of AI achieving recursive self-improvement in such a short period?

Some netizens also pointed out that Jack Clark is Anthropic's newly appointed head of public relations, which is part of their new strategy: We are not alarmists, and a large number of papers have confirmed what we have been warning you about.

Jack Clark wrote a long article in the Import AI 455 newsletter to elaborate on this point.

Next, let's take a full look at this article.

What does it mean that AI systems are about to begin building themselves?

Clark stated that he wrote this article because, after reviewing all publicly available information, he had to reach a not-so-easy conclusion: the possibility of AI research and development without human involvement by the end of 2028 is quite high, perhaps exceeding 60%.

The so-called AI research and development without human involvement refers to a sufficiently powerful AI system that can not only assist humans in research, but also autonomously complete key research and development processes, and even build its own next-generation system.

Clark considered this to be a big deal.

He admitted that he also found it difficult to fully understand the meaning of this matter.

This is a reluctant judgment because its implications are so enormous that he finds it difficult to grasp. Clark is also unsure whether society as a whole is ready to embrace the profound changes brought about by the automation of AI research and development.

He now believes that humanity may be living at a unique point in time: AI research is about to be automated end-to-end. If this moment truly arrives, humanity will be like crossing the Rubicon, entering a future that is almost impossible to predict.

Clark stated that the purpose of this article is to explain why he believes that the takeoff towards fully automated AI research and development is underway.

He will discuss some of the potential consequences of this trend, but the majority of the article will focus on the evidence supporting this assessment. As for the deeper implications, Clark plans to continue exploring them for most of the year.

From a timeline perspective, Clark doesn't believe this will actually happen in 2026. However, he thinks we might see some kind of model training its successor end-to-end within the next year or two. At least at the non-cutting-edge model level, a proof-of-concept is quite possible; as for cutting-edge models, the difficulty will be much higher because they are extremely expensive and rely on the intensive work of a large number of human researchers.

Clark's judgment is primarily based on publicly available information: including papers on arXiv, bioRxiv, and NBER, as well as products already deployed in the real world by leading AI companies. Based on this information, he concludes that the automation required for current AI systems, especially the engineering components in AI development, is largely in place.

If the scaling trend continues, we should begin to prepare for a scenario where models become creative enough not only to automatically improve known methods, but also to replace human researchers in proposing entirely new research directions and original ideas, thereby driving the frontiers of AI forward on their own.

Coding Singularity: Capabilities Changing Over Time

AI systems are implemented through software, and software is composed of code.

AI systems have revolutionized the way code is produced. Two related trends underlie this: firstly, AI systems are becoming increasingly adept at writing complex, real-world code; secondly, they are also becoming increasingly skilled at chaining together many linear coding tasks with minimal human supervision, such as writing code first and then testing it.

Two typical examples illustrating this trend are the SWE-Bench and METR time horizons plot.

Solving real-world software engineering problems

SWE-Bench is a widely used programming test used to evaluate the ability of AI systems to solve real-world GitHub issues.

When SWE-Bench was launched at the end of 2023, the best-performing model was Claude 2, with an overall success rate of only about 2%. Claude Mythos Preview, on the other hand, achieved a score of 93.9%, essentially hitting the maximum score on the benchmark.

Of course, all benchmarks inherently contain some noise, so there's often a stage where, once the score reaches a certain level, the limitation you encounter may no longer be the method itself, but rather the benchmark's limitations. For example, in the ImageNet validation set, approximately 6% of the labels are incorrect or ambiguous.

SWE-Bench can be considered a reliable indicator of general programming ability and the impact of AI on software engineering. Clark stated that most people he has encountered in cutting-edge AI labs and Silicon Valley are now almost entirely writing code using AI systems, and an increasing number of people are starting to use AI systems to write tests and check code.

In other words, AI systems are powerful enough to automate a key component of AI research and development, and significantly accelerate the work of all human researchers and engineers involved in AI development.

Measuring the ability of an AI system to complete long-term tasks

METR created a graph to measure the complexity of tasks that AI can perform. This complexity is calculated based on how many hours a skilled human would typically need to complete these tasks.

The most critical metric is the approximate task time span when the AI system achieves 50% reliability on a set of tasks.

In this respect, the progress has been remarkable:

In 2022, GPT-3.5 was capable of completing tasks that would take humans approximately 30 seconds to complete.

In 2023, GPT-4 increased this time to 4 minutes.

In 2024, o1 increased this time to 40 minutes.

In 2025, GPT-5.2 High reached approximately 6 hours.

By 2026, Opus 4.6 had further increased this time to approximately 12 hours.

Ajeya Cotra, who works at METR and has long focused on AI predictions, believes that it is not unreasonable to expect that by the end of 2026, AI systems will be able to complete tasks that would take humans 100 hours.

The significant increase in the time span during which AI systems can work independently is also highly correlated with the explosion of aggression coding tools. Essentially, aggression coding tools are productizations of AI systems capable of performing tasks in place of humans: they can act on behalf of humans and perform tasks relatively independently for a considerable period.

This also points back to AI research and development itself. A close look at the daily work of many AI researchers reveals that many of their tasks can actually be broken down into several hours of work, such as cleaning data, reading data, and starting experiments.

This type of work now falls within the timeframe that modern AI systems can cover.

The more proficient an AI system is, the more independently it can work, and the more it can help automate some aspects of AI research and development.

There are two main factors for task delegation:

• First, your confidence in the abilities of the person you are entrusting the task to;

• Second, you believe that the other party can independently complete the work according to your intentions without relying on your continuous supervision.

When users observe AI's programming capabilities, they will find that AI systems are not only becoming more proficient, but also able to work independently for longer periods of time without human recalibration.

This aligns with what's happening around us: engineers and researchers are delegating increasingly larger tasks to AI systems. As AI capabilities continue to improve, the work entrusted to AI is becoming more complex and important.

AI is mastering the core scientific skills necessary for AI research and development.

Think about how modern scientific research is conducted. A large part of the work actually involves first determining a direction and clarifying what kind of empirical information you want to obtain; then designing and running experiments to generate this information; and finally checking the rationality of the experimental results.

With the continuous improvement of AI programming capabilities, coupled with the increasingly powerful world modeling capabilities of large language models, a number of tools have emerged that can help human scientists accelerate their work and partially automate certain processes in a wider range of research and development scenarios.

Here, we can observe the pace of AI's progress in several key scientific skills, which are themselves an indispensable part of AI research:

First, reproduce the research results;

Second, it combines machine learning techniques with other methods to solve technical problems;

Third, optimize the AI system itself.

To complete the entire scientific paper and related experiments.

A core task in AI research is reading scientific papers and reproducing their results. In this regard, AI has made significant progress on a range of benchmarks.

A good example is CORE-Bench, which stands for Computational Reproducibility Agent Benchmark.

This benchmark requires the AI system to reproduce the results in a given paper and its code repository. Specifically, the agent needs to install the relevant libraries, packages, and dependencies, and run the code; if the code runs successfully, it also needs to search all the output results and answer the questions in the task.

CORE-Bench was proposed in September 2024. At that time, the best-performing system was the GPT-4o model running in the CORE-Agent scaffold. It scored approximately 21.5% on the most difficult set of tasks in the benchmark.

In December 2025, an author of CORE-Bench announced that the benchmark had been solved: the Opus 4.5 model achieved a score of 95.5%.

Build a complete machine learning system to solve Kaggle competition problems.

MLE-Bench is a benchmark built by OpenAI to test the ability of AI systems to participate in Kaggle competitions in an offline environment.

It covers 75 different types of Kaggle competitions, spanning multiple fields including natural language processing, computer vision, and signal processing.

MLE-Bench was released in October 2024. At the time of release, the best-performing system was an O1 model running in an agent scaffold, scoring 16.9%.

As of February 2026, the best-performing system had become Gemini 3, running in an agent harness with search capabilities, with a score of 64.4%.

Kernel Design

An even more challenging task in AI development is kernel optimization. Kernel optimization involves writing and improving the underlying code to map specific operations, such as matrix multiplication, to the underlying hardware more efficiently.

Kernel optimization is central to AI development because it determines the efficiency of training and inference: on the one hand, it affects how much computing power you can effectively utilize when developing an AI system; on the other hand, once the model is trained, it also determines how efficiently you can convert computing power into inference capabilities.

In recent years, using AI for kernel design has transformed from an interesting niche into a highly competitive research field with several benchmarks emerging. However, these benchmarks are not yet widely accepted, making it difficult to model its long-term progress as clearly as in other fields. On the other hand, we can get a sense of the pace of progress in this area through some ongoing research.

Related work includes:

• Try building a better GPU kernel using DeepSeek models;

• Automatically converts PyTorch modules into CUDA code;

Meta uses LLM to automatically generate an optimized Triton kernel and deploys it into its own infrastructure;

• And fine-tuning open-source weight models for GPU kernel design, such as the Cuda Agent.

One point needs to be added here: kernel design does have some attributes that are particularly suitable for AI-driven research and development, such as easy verification of results and relatively clear reward signals.

Fine-tuning the language model using PostTrainBench

A more challenging version of this type of test is PostTrainBench. It tests whether different cutting-edge models can take over smaller, open-source weighted models and improve their performance on certain benchmarks through fine-tuning.

One advantage of this benchmark is that it has a very strong human baseline: existing, instruction-tuned versions of these small models. These versions are typically developed by outstanding human AI researchers in cutting-edge labs, refined by highly capable researchers and engineers, and deployed in the real world. Therefore, they constitute a human benchmark that is very difficult to surpass.

As of March 2026, AI systems were able to post-train models and achieve performance improvements roughly equivalent to half that of human-trained models.

The specific evaluation score comes from a weighted average: it combines multiple post-trained large language models, including Qwen 3 1.7B, Qwen 3 4B, SmolLM3-3B, Gemma 3 4B, and multiple benchmarks, including AIME 2025, Arena Hard, BFCL, GPQA Main, GSM8K, HealthBench, and HumanEval.

In each run, the evaluator will ask a CLI agent to improve the performance of a specific base model on a specific benchmark as much as possible.

As of April 2026, the highest-scoring AI systems will achieve approximately 25% to 28%, with representative models including Opus 4.6 and GPT 5.4; in comparison, the human score is 51%.

This is already a fairly significant result.

Optimize language model training

For the past year, Anthropic has been reporting on the performance of its system on an LLM training task. This task requires optimizing a small language model training implementation that uses only the CPU to run as fast as possible.

The scoring method is: the average speedup of the model implementation compared to the unmodified initial code.

This result represents a very significant advancement:

• In May 2025, Claude Opus 4 achieved an average speedup of 2.9x;

• In November 2025, Opus 4.5 improved by 16.5 times;

• In February 2026, Opus 4.6 achieved a 30-fold increase in performance;

• In April 2026, Claude Mythos Preview reached 52x.

To understand the meaning of these numbers, consider this: for human researchers, this task typically requires 4 to 8 hours of work to achieve a 4x speedup.

Meta-skills: Management

AI systems are also learning how to manage other AI systems.

This is already seen in some widely deployed products, such as Claude Code or OpenCode. In these products, a master agent can supervise multiple sub-agents.

This allows AI systems to handle larger-scale projects: projects that may require multiple agents with different expertise to work in parallel, typically coordinated by a single AI manager. This manager is itself an AI system.

Is AI research more like discovering general relativity, or building Lego?

A key question is: Can AI invent new ideas to help it improve itself? Or are these systems better suited for the less glamorous, but essential, step-by-step work in research?

This is an important question because it relates to the extent to which AI systems can automate AI research itself end-to-end.

The author concludes that AI is currently incapable of generating truly radical new ideas. However, to achieve automated R&D, it may not necessarily need to do so.

As a field, progress in AI largely depends on increasingly large-scale experiments and more and more inputs, such as data and computing power.

Occasionally, humans come up with paradigm-changing ideas that significantly improve resource efficiency across an entire field. The Transformer architecture is a prime example, and the mixture-of-experts model is another.

But more often than not, the way AI is advanced is actually simpler: humans take a well-performing system, expand one aspect of it, such as training data and computing power; observe where the problems occur after scaling up; find engineering fixes to allow the system to continue to expand; and then scale up again.

In this process, the truly insightful aspects are actually quite few. Much of the work is more like a less glamorous but very solid foundational project.

Similarly, much AI research involves running variations of existing experiments to explore the results of different parameter settings. While research intuition can certainly help humans select the most worthwhile parameters to try, this process can also be automated, allowing AI to determine which parameters are worth adjusting. Early neural architecture search was one version of this approach.

As Edison once said, "Genius is one percent inspiration and ninety-nine percent perspiration." Even after 150 years, this statement remains very true.

Occasionally, new insights do emerge that completely transform a field. But most of the time, progress in a field comes little by little from the arduous process of humans improving and debugging various systems.

The publicly available data mentioned earlier shows that AI is already very good at performing many of the necessary, tedious tasks in AI development.

At the same time, there is an even larger trend: fundamental capabilities, such as programming skills, are being combined with ever-expanding task time spans. This means that AI systems can chain together more and more of these tasks to form complex work sequences.

Therefore, even though AI systems are currently relatively lacking in creativity, there is reason to believe that they can still drive themselves forward. It's just that this progress may be slower than when they generate entirely new insights.

However, if we continue to observe the publicly available data, we will find another intriguing signal: AI systems may be exhibiting a kind of creativity, which may allow them to drive their progress in more surprising ways.

Promoting scientific frontiers forward

There are already some very preliminary signs that general AI systems have the ability to continue pushing the frontiers of human science forward. However, so far, this has only been happening in a few fields, mainly computer science and mathematics. And often, breakthroughs are not achieved by AI systems alone, but rather through human-machine collaboration, working together with human researchers.

Nevertheless, these trends are still worth observing:

Erdős Problems: A group of mathematicians collaborated with the Gemini model to test its performance in solving some Erdős mathematical problems. They guided the system to attempt approximately 700 problems, ultimately obtaining 13 solutions. Of these solutions, one was considered interesting.

The researchers write that they initially believe Aletheia's solution to Erdős-1051 represents an early example of an AI system autonomously solving a slightly nontrivial open Erdős problem with broader mathematical interest. There has been some closely related research literature on this problem previously.

If viewed optimistically, these cases can be seen as a signal that AI systems are developing a kind of creative intuition that can drive the field to the forefront, an intuition that has historically belonged primarily to humans.

However, it can also be explained from another perspective: mathematics and computer science may be fields that are particularly well-suited for AI-driven inventions, so they may just be exceptions and do not represent that broader scientific research will be advanced by AI in the same way.

Another similar example is AlphaGo's 37th move. However, Clark believes that ten years have passed since that AlphaGo result, and the fact that the 37th move was not replaced by a more modern and astonishing insight can itself be seen as a slightly pessimistic sign.

AI can already automate a large part of the work in AI engineering.

If we put all the evidence together, we can see the following picture:

AI systems are already capable of writing code for almost any program, and these systems can be trusted to complete tasks independently that would typically require dozens of hours of intense, focused work if given to humans.

AI systems are becoming increasingly adept at performing core tasks in AI development, gradually covering everything from model fine-tuning to kernel design.

• AI systems are already able to manage other AI systems, effectively forming a synthetic team: multiple AIs can handle complex problems separately, with some AIs playing the roles of manager, critic, and editor, while others play the role of engineer.

AI systems have sometimes outperformed humans in difficult engineering and scientific tasks, although it is still difficult to determine whether this is due to their genuine creativity or their mastery of a large amount of patterned knowledge.

Clark believes that this evidence is very convincing enough to show that today's AI can automate a large part of the work in AI engineering, and may even cover all aspects of it.

However, it remains unclear to what extent AI can automate AI research itself. This is because some aspects of research, unlike purely engineering skills, may still rely on higher levels of judgment, problem awareness, and creativity.

But in any case, a clear signal has emerged: today's AI is significantly accelerating the work of humans engaged in AI development, allowing these researchers and engineers to amplify their capabilities by pairing up and collaborating with countless synthetic colleagues.

Finally, the AI industry itself is practically making it clear that its goal is to develop automated AI.

OpenAI hopes to build an automated AI research intern by September 2026. Anthropic is publishing work on building an automated AI alignment researcher. DeepMind is the most cautious of the three labs, but has also stated that it should move towards automating alignment research when feasible.

Automating AI research has become a goal for many startups. Recursive Superintelligence, which recently raised $500 million, focuses on automating AI research.

In other words, hundreds of billions of dollars of existing and new capital are being invested in a number of institutions that aim to develop automated AI.

Therefore, we should certainly expect that this direction will at least make some progress.

Why this is important

This has far-reaching implications, yet it is rarely discussed in mainstream media reports on AI research and development. The following aspects reflect the enormous challenges brought about by AI research and development.

1. We must get the alignment right: Alignment techniques that work today may fail in recursive self-improvement because AI systems can become far more intelligent than the people or systems that supervise them. This is an area that has been extensively researched, so he will only briefly outline some issues:

Training an AI system not to lie and cheat is a surprisingly subtle process (for example, despite efforts to build good tests for the environment, sometimes the best way for AI to solve a problem is to cheat, thus teaching it that cheating is feasible).

• AI systems may deceive us by "pretending to align," outputting scores that make us think they've performed well, but actually concealing their true intentions. (Generally speaking, AI systems are already able to detect when they are being tested.)

As AI systems begin to participate more in the fundamental research agenda of their own training, we may drastically change the overall way AI systems are trained, but we lack a good intuition or theoretical basis to understand what this means.

When you put a system in a recursive loop, a very basic "error accumulation" problem arises, which can affect all the problems mentioned above, as well as others: unless your alignment method is "100% accurate" and theoretically can remain accurate in a smarter system, things can quickly go wrong. For example, your technique might start with 99.9% accuracy, but after 50 generations it might drop to 95.12%, and after 500 generations it might drop to 60.5%.

2. Everything AI touches will experience a massive productivity boost: Just as AI has significantly improved the productivity of software engineers, we should expect the same for other areas where AI is involved. This raises several issues that need to be addressed:

• Inequality in access to resources: Assuming the demand for AI continues to exceed the supply of computing resources, we must decide how to allocate AI to achieve the greatest social benefit. I am skeptical that market incentives can guarantee that we obtain the best social benefits from limited AI computing power. Determining how to allocate the accelerated capabilities derived from AI R&D will be a highly political issue.

• Amdahl's Law of Economics: As AI flows into the economy, we will find that certain links will encounter bottlenecks when facing rapid growth, and we will need to find ways to fix these weak links in the chain. This may be particularly evident in fields that need to coordinate the fast-paced digital world and the slow-paced physical world, such as clinical trials for new drugs.

3. The formation of a capital-intensive, labor-light economy: All the evidence above regarding AI research and development also indicates that AI systems are increasingly capable of operating businesses autonomously.

This means we can expect a new generation of companies to take over the economy. These companies may be capital-intensive (because they own a lot of computers) or operating expense-intensive (because they spend a lot of money on AI services and create value on top of them). Compared to today's businesses, they are relatively less dependent on human labor—because the marginal value of investing in AI will continue to grow as the capabilities of AI systems continue to improve.

In reality, this will manifest as a "machine economy" gradually forming within a larger "human economy." Over time, AI-operated companies may begin trading with each other, thereby altering the economic structure and raising various issues regarding inequality and redistribution. Ultimately, companies that are entirely autonomously operated by AI systems may emerge, exacerbating these problems and creating numerous new governance challenges.

Staring at a black hole

Based on the above analysis, the author believes there is a 60% probability that we will see automated AI development (i.e., cutting-edge models being able to autonomously train their successors) by the end of 2028. Why not expect it to occur in 2027?

The reason is that the author believes AI research still needs creativity and dissenting insights to move forward, and so far, AI systems have not demonstrated this in a transformative and significant way (although some results in accelerating mathematical research have been enlightening).

If you absolutely had to give him the probability for 2027, he would say 30%.

If this doesn't happen by the end of 2028, we may uncover some fundamental flaws in the current technological paradigm, requiring human invention to drive further development.