Lark CLI, an open-source project, surpassed 10,000 stars on GitHub in just 47 days, becoming a top choice among developers looking for an agent-ready work platform. Open-sourced on March 28th, the project covers 17 business domains, offers over 200 commands, and connects the entire office workflow, including messaging, documents, spreadsheets, multidimensional tables, and emails. Through the CLI, agents gain the "hands and feet" to operate office software, cutting post-meeting organization from half an hour to tens of seconds. Lark CLI uses a three-tier command architecture (Shortcuts, API Commands, and the Raw API) that reaches over 2,500 endpoints. In just over 40 days since its open-source release, it has added more than 100 capabilities and shipped 32 versions, and hundreds of teams are building toolchains on it. Developers worldwide are embedding agents into real workflows and exploring AI-native ways of working.

Article author and source: Synced

Lark CLI has become incredibly popular.

OpenClaw has become a hit, Hermes has become a hit, and the AI industry today is no longer what it was last year.

Previously, everyone was focused on large model parameters and token prices, but now the hottest questions have shifted. How exactly does AI integrate into the workflow? Can agents actually help me get the work done?

On GitHub, developers have already voted with their feet on this issue.

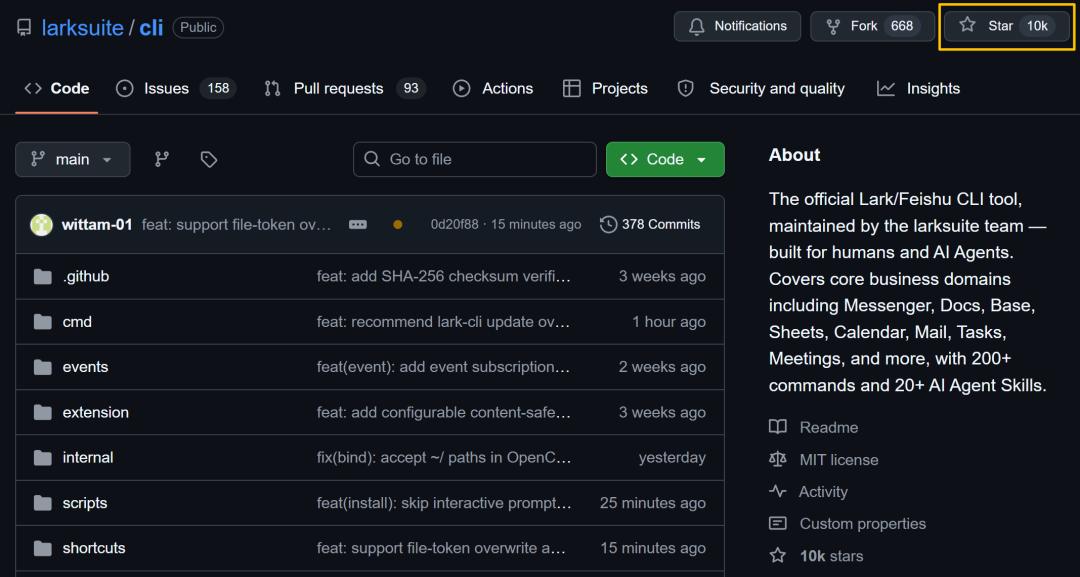

Recently, an open-source project called "Lark CLI" (Feishu CLI, after Lark's Chinese name) has been growing rapidly on GitHub.

It was open-sourced on March 28th and received over 1,000 stars that same day.

Just 47 days later, as of yesterday, its star count officially surpassed 10,000!

Globally, CLI has become incredibly popular because of Agents!

However, officially supported open-source CLI (command-line interface) projects for office use remain scarce. Among the similar CLI projects released around the same time, Lark CLI has already established a significant lead.

The command-line interface itself is nothing new; developers have been using it for decades.

The reason for its sudden resurgence is quite simple:

The training data of large models contains billions of CLI commands, which AI naturally "recognizes" without needing additional learning.

When an office platform transforms its capabilities into CLI, the Agent truly gains control over the office software.

Lark is becoming the best platform for agents to change the way businesses work.

What can an agent do in Lark?

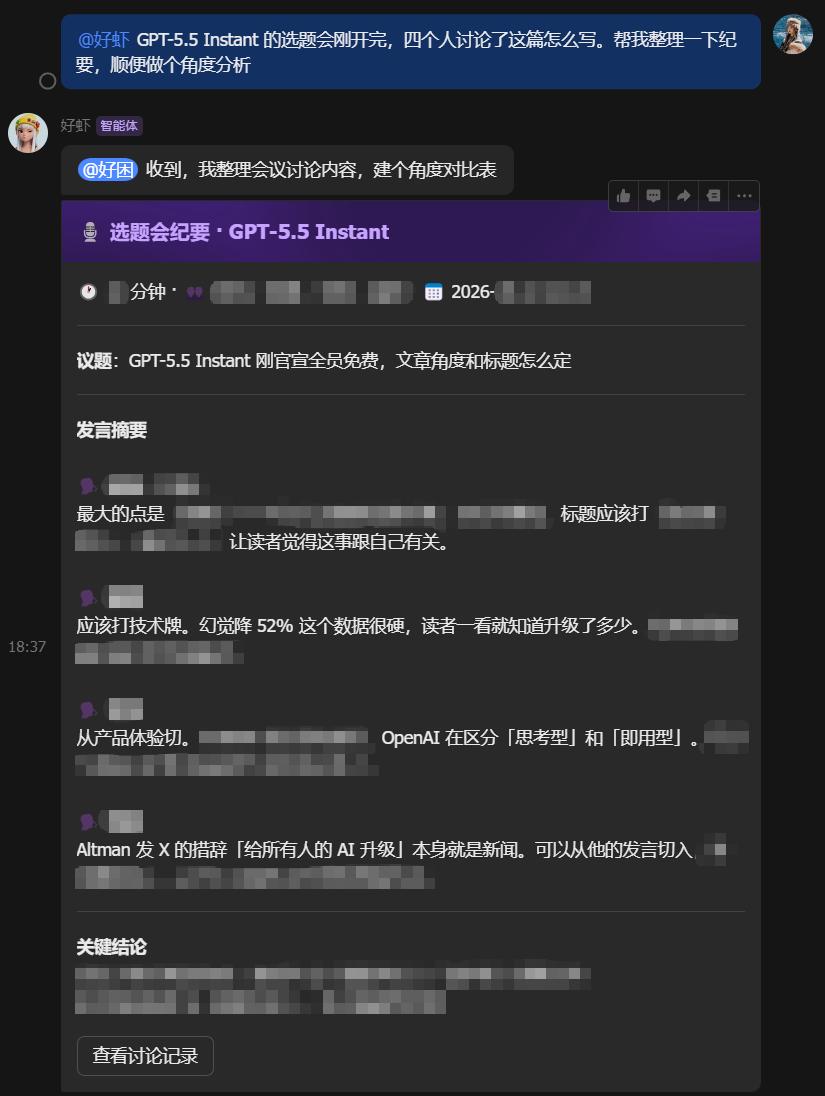

Let's take a typical everyday scenario as an example.

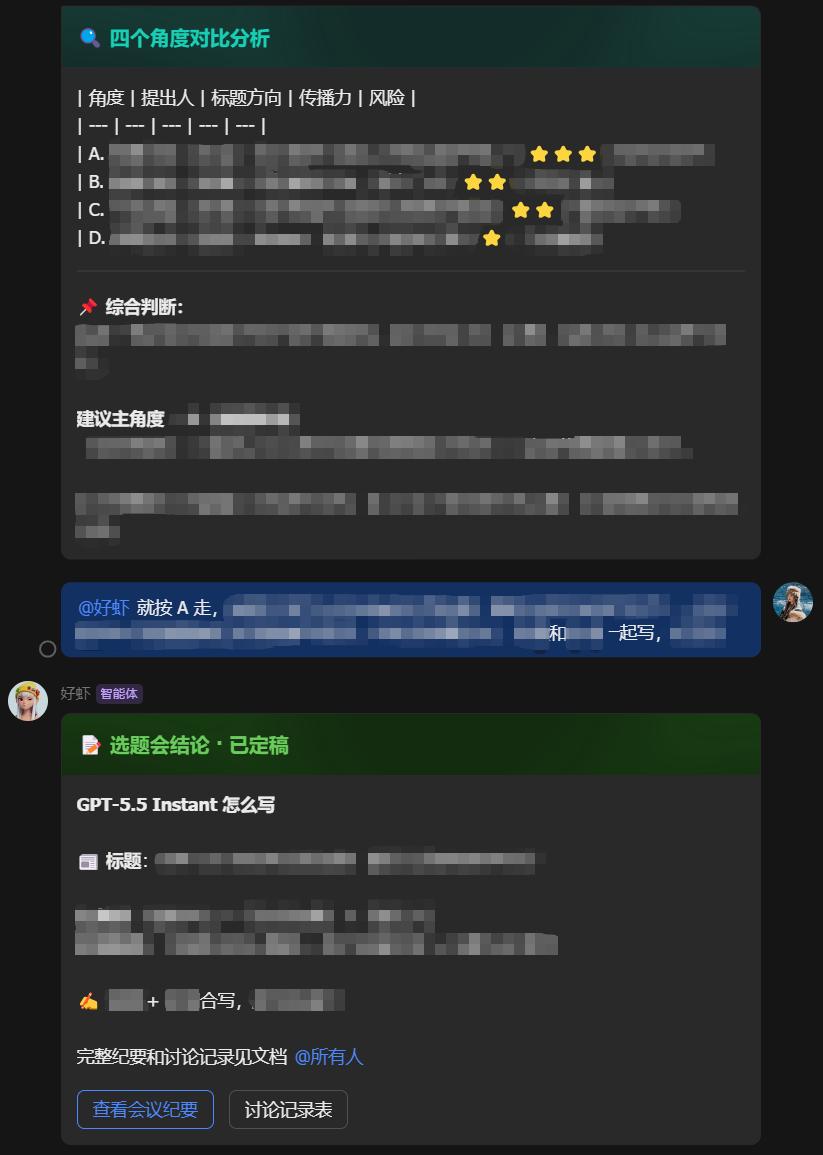

Every morning at the topic selection meeting, the editors each pitch their own writing angles.

At this point, simply mention "@assistant" in the group chat, and the agent connected to Lark CLI will automatically take over.

It first writes the editors' discussions into a multidimensional table, turning verbal discussions into structured data in seconds.

Immediately afterwards, two cards will pop up in the group chat.

One card summarizes the meeting minutes, while the other provides a comparative analysis of the different perspectives, helping editors make a direct judgment and finalize the decision.

After an editor replies with the final direction, the agent immediately updates the conclusion, sends out a conclusion summary card, and @-mentions everyone.

In addition, an automatically generated minutes document will be attached, and group editing permissions will be granted.

During this process, the human editor only sent 2 messages, while the Agent called Lark CLI 21 times, spanning 4 Lark product modules: messaging, multidimensional tables, cloud documents, and permission management.

What used to take half an hour to organize after a meeting can now be completed in just a few dozen seconds.

This process uses the same CLI commands as project management and payment verification; it can be reused by changing a few parameters in different scenarios.

These commands are what let the agent truly integrate into the workflow.

Our hands-on test is just the tip of the iceberg.

More and more developers are using Lark CLI's capabilities to truly embed agents into workflows and explore AI-native ways of working.

For example, Chen Chunyu, CEO of Intent, had the agent access all historical data on the company's Lark platform to review 34 decisions he made since the end of February. The results showed that 70% of the decisions overlapped with those made by the AI.

What's even more interesting is that this tool can also be used in reverse.

After extensive use of AI for data recording, the agent can reverse-engineer its growth path and upgrade its decision-making framework. One CEO even turned the agent into his "decision coach."

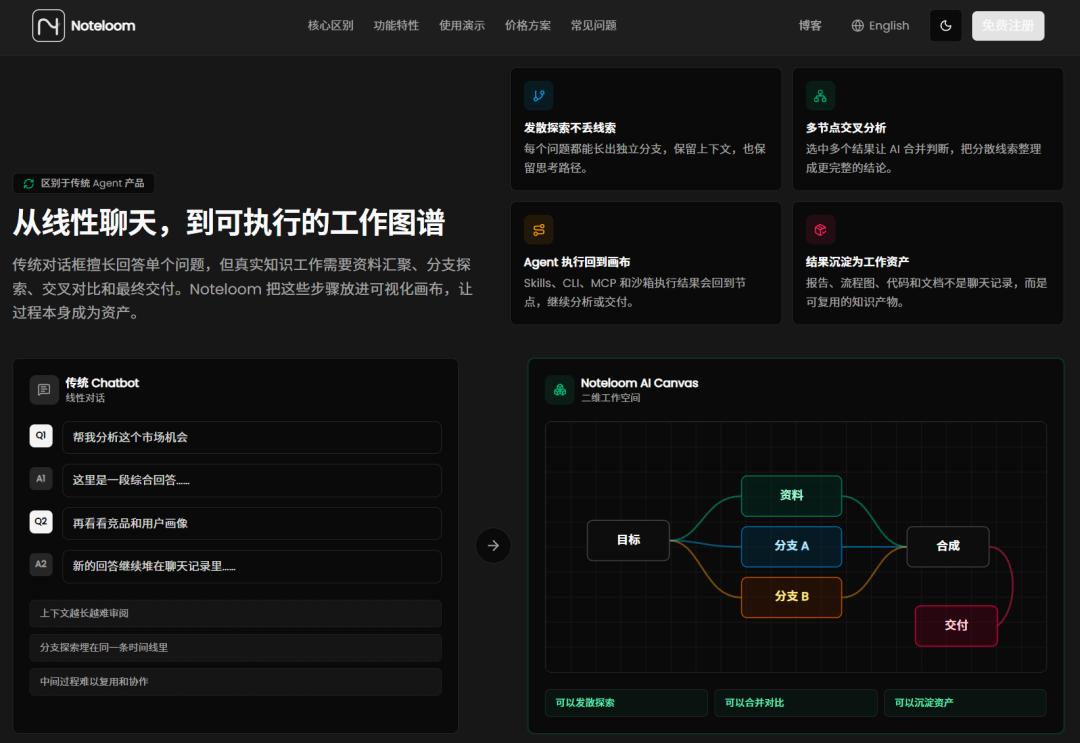

Developer Zhang Hao created NoteLoom, a knowledge workflow tool based on Lark CLI. It skips the dialog box approach and uses a canvas view to organize multi-threaded, multi-starting-point workflows.

Lark serves as the foundation for team collaboration and data asset accumulation. Content is imported from Lark documents and multidimensional tables into NoteLoom for processing, and then back into Lark after processing.

In this system, users' knowledge assets remain in Lark, with NoteLoom serving as the intermediate processing layer.

Pokoclaw, developed by Daniel, uses Lark to transform Agents from chat toys into manageable and supervised work systems.

The underlying layer connects to Lark's open platform SDK and API, and the Agent uses Lark CLI extensively when running daily tasks. Checking the SubAgent status, pulling project progress, and making autonomous decisions about the next step are all done within the Lark dialog box.

From a CEO's decision review, to the data hub of a knowledge workflow, to a continuously running Agent work system: in Lark, the Agent is no longer a brain floating in the clouds.

Developers worldwide are flocking in.

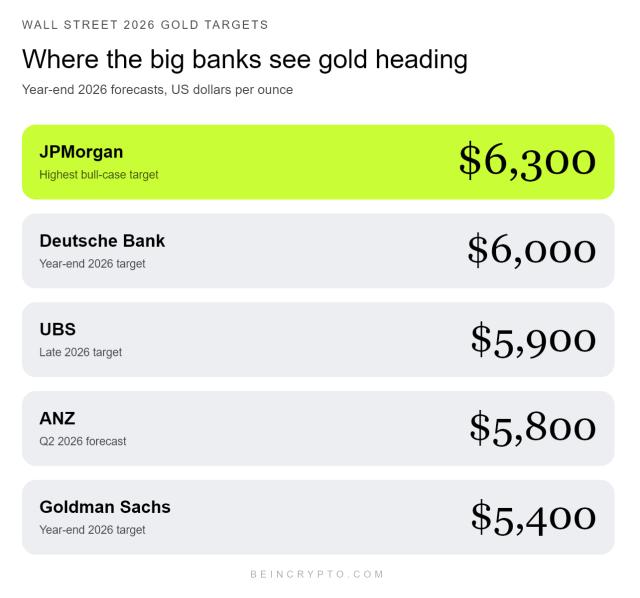

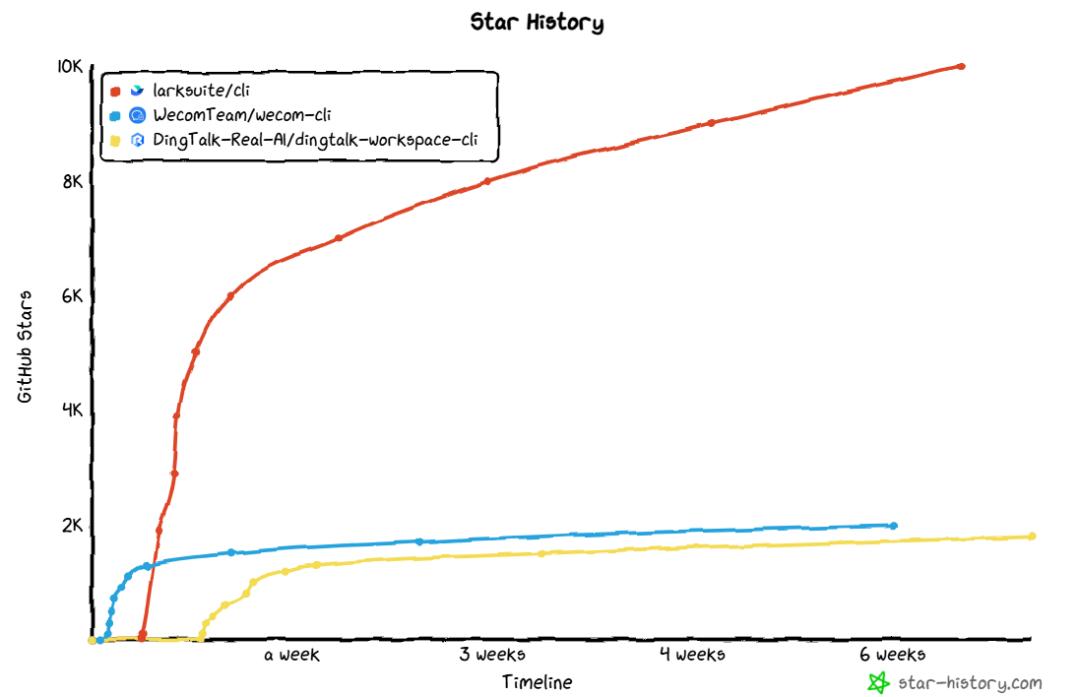

In late March 2026, several leading work platforms in China open-sourced their respective CLIs at around the same time.

But in less than two months, the data showed a significant gap: Lark CLI's number of stars was already several times that of other projects in the same period.

The core reason is its breadth and depth of openness, which allows agents to do more.

Lark CLI now covers 17 business domains and offers over 200 commands. It seamlessly integrates the entire office workflow, including messaging, documents, spreadsheets, multidimensional tables, emails, calendars, tasks, approvals, knowledge bases, whiteboards, slideshows, meetings, note-taking apps, OKRs, attendance, cloud storage, and contacts.

In Lark, an Agent can perform almost all the tasks a white-collar worker can do, including checking schedules, sending messages, writing documents, creating spreadsheets, submitting approvals, searching emails, managing tasks, and reading notes. It doesn't just generate text for you to copy and paste; it gets things done for you.

Moreover, these capabilities are interconnected.

For example, you can have the agent pull two weeks of calendar events, automatically tag each meeting, write the results into a multidimensional table, and generate a dashboard.

Alternatively, an agent can scan the meeting notes and assign a "productivity density" score to each meeting, helping the team identify which topics are being discussed repeatedly.

More than 40 days after being open sourced, Lark CLI continues to accelerate, adding more than 100 new capabilities and releasing 32 versions.

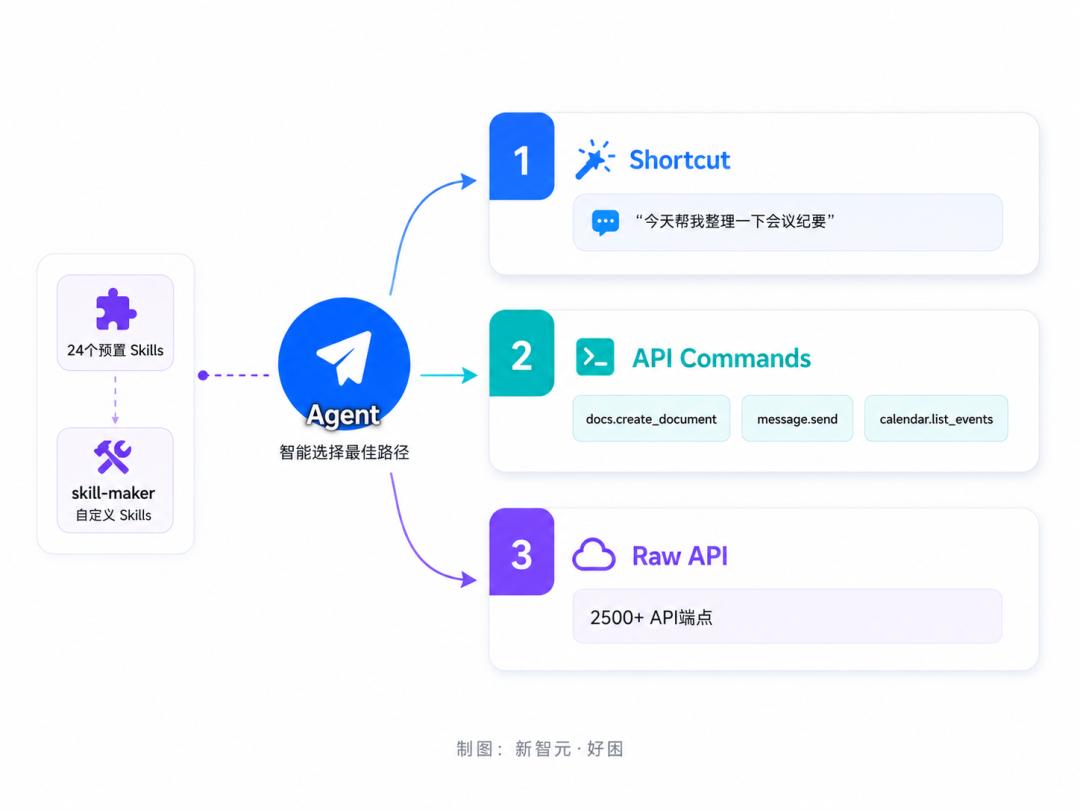

The key here is that Lark CLI's architecture is designed for agents from the ground up. Its three-tier command architecture is as follows:

The first layer, Shortcuts, is shared by both humans and AI and is highly encapsulated; a simple "Check my schedule for today" is enough to get it running.

The second layer, API Commands, maps one-to-one with Lark OpenAPI. Agents use this layer when precise control is required.

The third layer, the Raw API, can call over 2,500 endpoints across the Lark platform, theoretically allowing for unlimited expansion. Even if the CLI version hasn't yet encapsulated a new API, the Agent can find a solution itself through the Raw API.

When encountering an unfamiliar interface, the Agent can use the schema command to instantly query the parameter definitions and example values without needing to exit the workflow to consult documentation.
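
The fallback order across the three tiers can be pictured with a small sketch. This is only an illustrative model, not Lark CLI's actual implementation: the registry contents, the `resolve` function, and every command name and path in it are hypothetical placeholders chosen for the example.

```python
from dataclasses import dataclass

# All entries below are hypothetical placeholders, not real Lark CLI commands.
SHORTCUTS = {"agenda_today": "high-level wrapper: list today's events"}
API_COMMANDS = {("calendar", "events.list"): "GET /calendar/events"}


@dataclass
class Resolution:
    tier: str    # "shortcut", "api_command", or "raw_api"
    target: str  # what would be invoked at that tier


def resolve(shortcut=None, api=None, raw=None):
    """Pick the most encapsulated tier that can serve the request."""
    if shortcut in SHORTCUTS:
        return Resolution("shortcut", shortcut)
    if api in API_COMMANDS:
        return Resolution("api_command", API_COMMANDS[api])
    if raw is not None:
        # Raw tier: pass the HTTP method and path through untouched,
        # so endpoints without a wrapper are still reachable.
        method, path = raw
        return Resolution("raw_api", f"{method} {path}")
    raise LookupError("no tier can serve this request")
```

In this model, a request that no Shortcut or API Command covers still resolves, because the raw tier accepts any method and path. That is the property the article attributes to the Raw API layer.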

On top of the three-tier architecture, Lark CLI provides an additional Skill mechanism. 24 pre-built Skills cover core scenarios such as messaging, documents, spreadsheets, calendars, and approvals.

Skills use natural language to describe "how to use the CLI to complete a certain type of task". Once the Agent has loaded a Skill, it knows how to combine commands to complete the task, without having to figure it out from scratch every time.

In addition to the officially packaged skills, Lark CLI also provides the skill-maker framework, which allows developers to create their own skills and consolidate their team's unique workflows.

Meanwhile, Lark CLI also has a built-in real-time event subscription based on WebSocket. The agent can continuously monitor changes in Lark, such as new messages, schedule changes, and approval updates, and respond autonomously in real time. The agent has changed from "only acting when called" to "taking initiative".
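
The shift from "acting when called" to "taking initiative" amounts to routing incoming events to handlers. The sketch below models only that routing step in plain Python; it does not open a WebSocket or use any Lark API, and the event type strings and payload fields are invented for illustration.

```python
from collections import defaultdict


class EventDispatcher:
    """Route incoming events to the handlers registered for their type."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def on(self, event_type):
        # Decorator that registers a handler for one event type.
        def register(fn):
            self._handlers[event_type].append(fn)
            return fn
        return register

    def dispatch(self, event):
        # Call every handler for this event type and collect the results.
        return [h(event) for h in self._handlers.get(event["type"], [])]


dispatcher = EventDispatcher()


# "message.received" and the "text" field are hypothetical names.
@dispatcher.on("message.received")
def reply_when_mentioned(event):
    if "@assistant" in event.get("text", ""):
        return "start meeting follow-up"
    return None
```

In a real integration, whatever receives events from the subscription would call `dispatcher.dispatch(event)` for each one, so the agent reacts without being prompted.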

Error handling is also designed for AI. When an error occurs, the CLI not only reports an error code but also tells the Agent what to do next, such as switching identities or re-authorizing.
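
One way to picture an error that carries its own next step is a structured record with a recovery hint attached. The sketch below is an assumption about the general shape, not Lark CLI's real error format: the error codes, field names, and hint wording are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class AgentError:
    code: str       # machine-readable error code
    message: str    # human-readable description
    next_step: str  # what the agent should try next


# Hypothetical codes and hints, chosen to mirror the two examples in the text.
RECOVERY_HINTS = {
    "permission_denied": "switch to an identity that has access, then retry",
    "token_expired": "re-authorize, then retry the same command",
}


def to_agent_error(code, message):
    """Attach a recovery hint so the agent is not left with a bare code."""
    hint = RECOVERY_HINTS.get(code, "report the error to the user and stop")
    return AgentError(code, message, hint)


def render(err):
    """Serialize the error as JSON, which an agent can parse reliably."""
    return json.dumps(asdict(err), sort_keys=True)
```

The default hint matters as much as the specific ones: an unknown error tells the agent to stop and escalate rather than retry blindly.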

From a security perspective, input injection protection, terminal output cleanup, and keychain credential storage are enabled by default. The agent's ability to perform its functions is the first step; ensuring its secure operation is the prerequisite for enterprises to dare to use it.
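
The article does not say how terminal output cleanup is implemented. One plausible reading is that escape sequences and control characters are stripped from API responses before they reach the terminal or the agent's context. The sketch below illustrates that reading with Python's standard `re` module; the function name and the exact set of characters removed are assumptions.

```python
import re

# CSI sequences (colors, cursor movement) and OSC sequences (e.g. window title).
_CSI = re.compile(r"\x1b\[[0-?]*[ -/]*[@-~]")
_OSC = re.compile(r"\x1b\][^\x07\x1b]*(?:\x07|\x1b\\)")
# Remaining C0 control characters and DEL. Tab and newline are kept;
# carriage return is removed so output cannot overwrite an earlier line.
_CONTROL = re.compile(r"[\x00-\x08\x0b-\x1f\x7f]")


def sanitize(text: str) -> str:
    """Remove terminal escape sequences and stray control characters."""
    text = _OSC.sub("", text)
    text = _CSI.sub("", text)
    return _CONTROL.sub("", text)
```

For example, `sanitize("\x1b[31mred\x1b[0m")` returns `"red"`, so a message body containing color codes or cursor-movement sequences cannot alter what the terminal displays.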

True open source, true collaborative development

It's worth mentioning that Lark CLI isn't just about uploading the code to GitHub.

668 forks mean that hundreds of teams are building their own toolchains based on it. More than 50 external contributors have submitted code, and 10 contributions have been merged into the main branch.

Now, Lark CLI receives external code merges almost every week.

Among the contributors are engineers from the Turkish e-commerce company Hepsiburada, independent developers from Vietnam, engineers from the Hangzhou-based SaaS company Zaihui, and others.

In other words, developers around the world are contributing to this project.

Feedback in the Issues section has clearly moved into the Agent workflow, with developers discussing the impact of onboarding on the success rates of both human and AI agents, as well as real-world implementation issues such as intent recognition, tool selection, and context management.

Clearly, the developers weren't just observing; they were putting it into practice. They were seriously evaluating whether Lark CLI could be integrated into their production environment.

Lark is becoming the core workspace for Agents.

From developers' practices to community building, everything points to the same conclusion: Lark is becoming the core workspace for Agents.

Why Lark? Because for an agent to truly accomplish something, it needs three things simultaneously.

1. Context. Lark has accumulated years of enterprise messages, documents, schedules, notes, multidimensional tables, and OKRs. The agent connects not to an empty shell, but to a pond full of data.

2. Execution capability. The CLI connects 17 business domains. After the Agent is authorized, it knows which department you belong to, what role you have, and what data you can view. This set of permissions applies to all modules.

3. User Interface. Lark's bot mechanism instantly provides the Agent with a front-end that every colleague can use. No separate UI development is needed.

With the three layers stacked together, Lark becomes the most natural workspace for the Agent.

One detail from the OpenClaw craze confirms this: a large number of developers spontaneously flocked to the Lark ecosystem without any official call to action.

Yang Mingfeng, founder of the OpenClaw Chinese community, recalled, "I judged that in the domestic environment, Lark was probably the fastest and most suitable platform to integrate with OpenClaw."

From community-driven initiatives to official follow-up, and then to the full open-source release of CLI, this timeline was written line by line of code by the developers.

Ten thousand stars is just the beginning.

In the spring of 2026, amidst the OpenClaw craze, developers had already voted with their feet on which work platform their Agents should live in.

Previously, agent-based office work might have been limited to "generating a piece of text for me." But now, some people are keeping an AI colleague in Lark, some CEOs are having agents review decisions, and hundreds of teams are building their own workflows based on CLI.

Today, if you want to seriously explore the productivity applications of agents in enterprise office scenarios, Lark is, bar none, the leading agent workspace in China, a position that developers themselves have collectively voted for.

Here, AI is no longer a tool, but a digital workforce that truly coexists with humans.